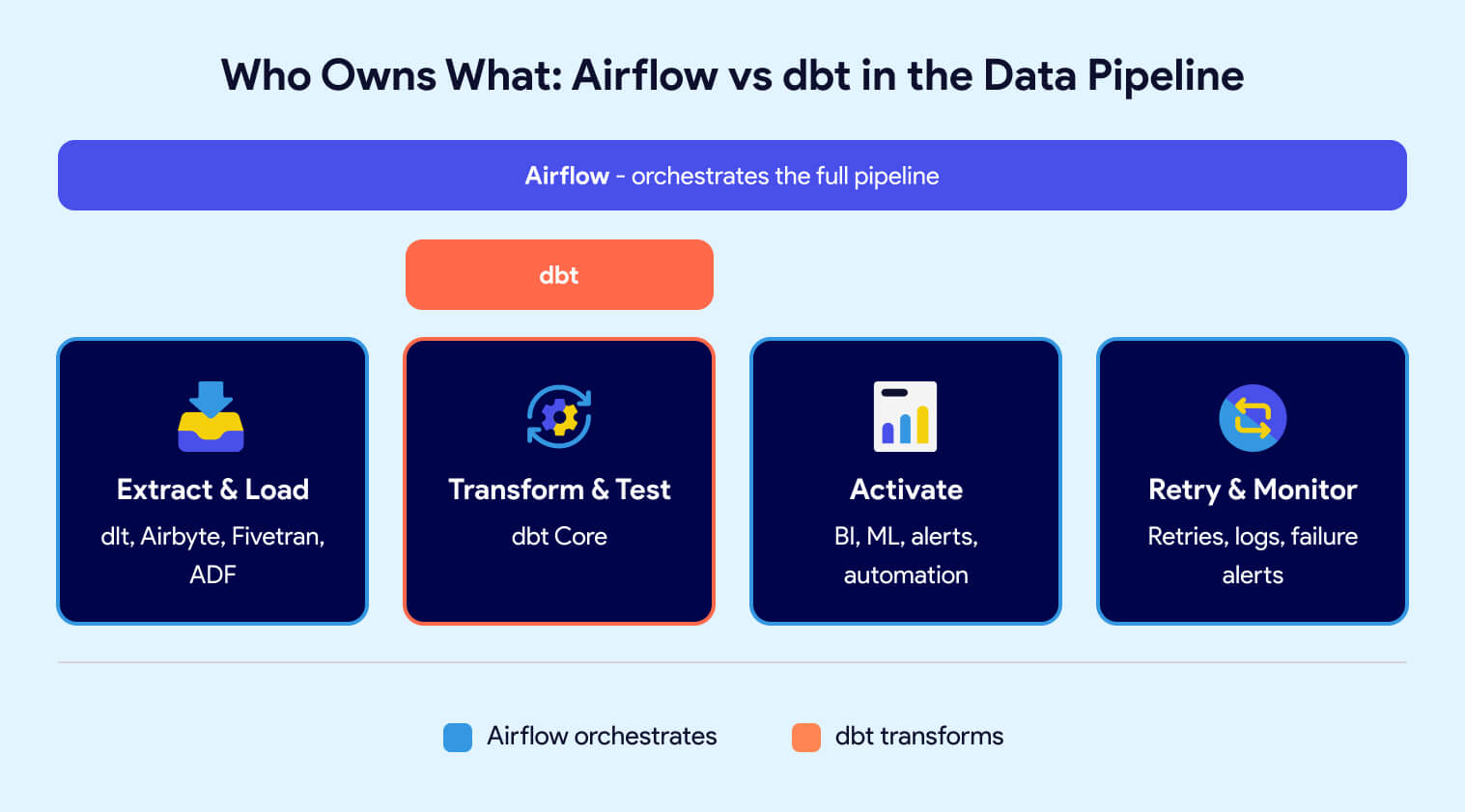

dbt transforms data inside your warehouse using SQL. Airflow orchestrates workflows across your entire data pipeline. They are not competing tools -- they solve different problems. dbt handles the "T" in ELT: modeling, data testing, unit testing, documentation, and lineage. Airflow handles the scheduling, sequencing, retries, failure notifications, observability, and coordination of every step in the pipeline, including ingesting data, triggering dbt, and activation steps. Most production data teams use both. The real decision is not which tool to pick, but how to run them together without drowning in infrastructure overhead.

Airflow is a workflow scheduler, but it is only one part of a broader data orchestration problem that includes dependencies, retries, visibility, and ownership across the entire data lifecycle.

Imagine a scenario where you have a series of tasks: Task A, Task B, and Task C. These tasks need to be executed in sequence every day at a specific time. Airflow enables you to programmatically define the sequence of steps as well as what each step does. With Airflow you can also monitor the execution of each step and get alerts when something fails.

Airflow's core strength is flexibility. You define the code behind each task, not just the sequence and schedule. Whether it's triggering an extraction job, calling a Python script, or integrating with an external platform, Airflow lets you control exactly how each step executes.

But that flexibility is a double-edged sword. Just because you can code everything inside Airflow doesn't mean you should. Overly complex task logic inside Airflow makes workflows harder to debug and maintain. The best practice: use Airflow as the orchestrator, not the execution engine. Let specialized tools handle the heavy lifting. Use tools like dbt for transformation and tools like Azure Data Factory, dlt, Fivetran or Airbyte for extraction. Let Airflow handle the scheduling, coordination, observability, and failure notification.

dbt (data build tool) is an open-source framework that uses SQL and Jinja templating to transform data inside the warehouse. Developed by dbt Labs, dbt handles the "T" in ELT: transforming, testing, documenting, and tracking lineage of data models. It brings software engineering practices like version control, CI/CD, and modular code to analytics workflows.

dbt lets you write transformation logic as SQL select statements, enhanced with Jinja templating for dynamic execution. Each transformation is called a "model." When you run dbt, it compiles those models into raw SQL and executes them against your warehouse, creating or replacing tables and views. dbt resolves dependencies between models automatically, running them sequentially or in parallel as needed.

Unlike traditional ETL tools that abstract SQL behind drag-and-drop interfaces, dbt treats SQL as the primary language for transformation. This makes it immediately accessible to anyone who already knows SQL. But dbt goes further by adding Jinja templating for dynamic scripting, conditional logic, and reusable macros.

A few things set dbt apart from alternatives:

dbt excels at the "T" in ELT but requires complementary tools for extraction, loading, and orchestration.

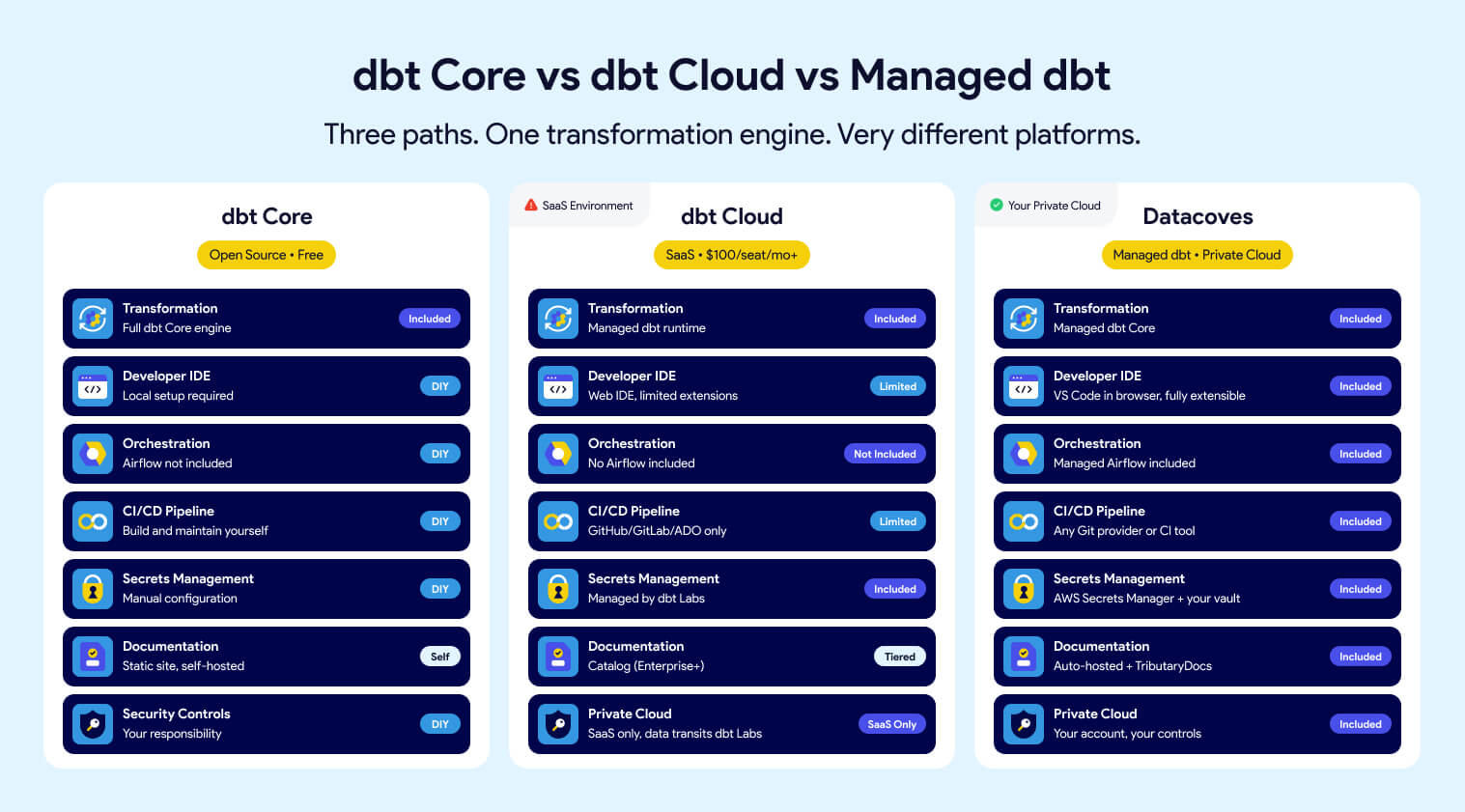

A common misunderstanding in the data community is equating "dbt" with "dbt Cloud." When people say dbt, they typically mean dbt Core -- the open-source framework. dbt Cloud is a commercial product from dbt Labs built on top of dbt Core that adds a scheduler, hosted IDE, monitoring, and managed infrastructure.

The key distinction: you can use dbt Core without paying for dbt Cloud, but you won't get a scheduler, hosted environment, or managed CI/CD. That means you'll need to solve orchestration, deployment, and infrastructure yourself. This is typically done with a tool like Airflow and a platform to tie it all together.

For a deeper comparison, see our article on dbt Cloud vs. dbt Core.

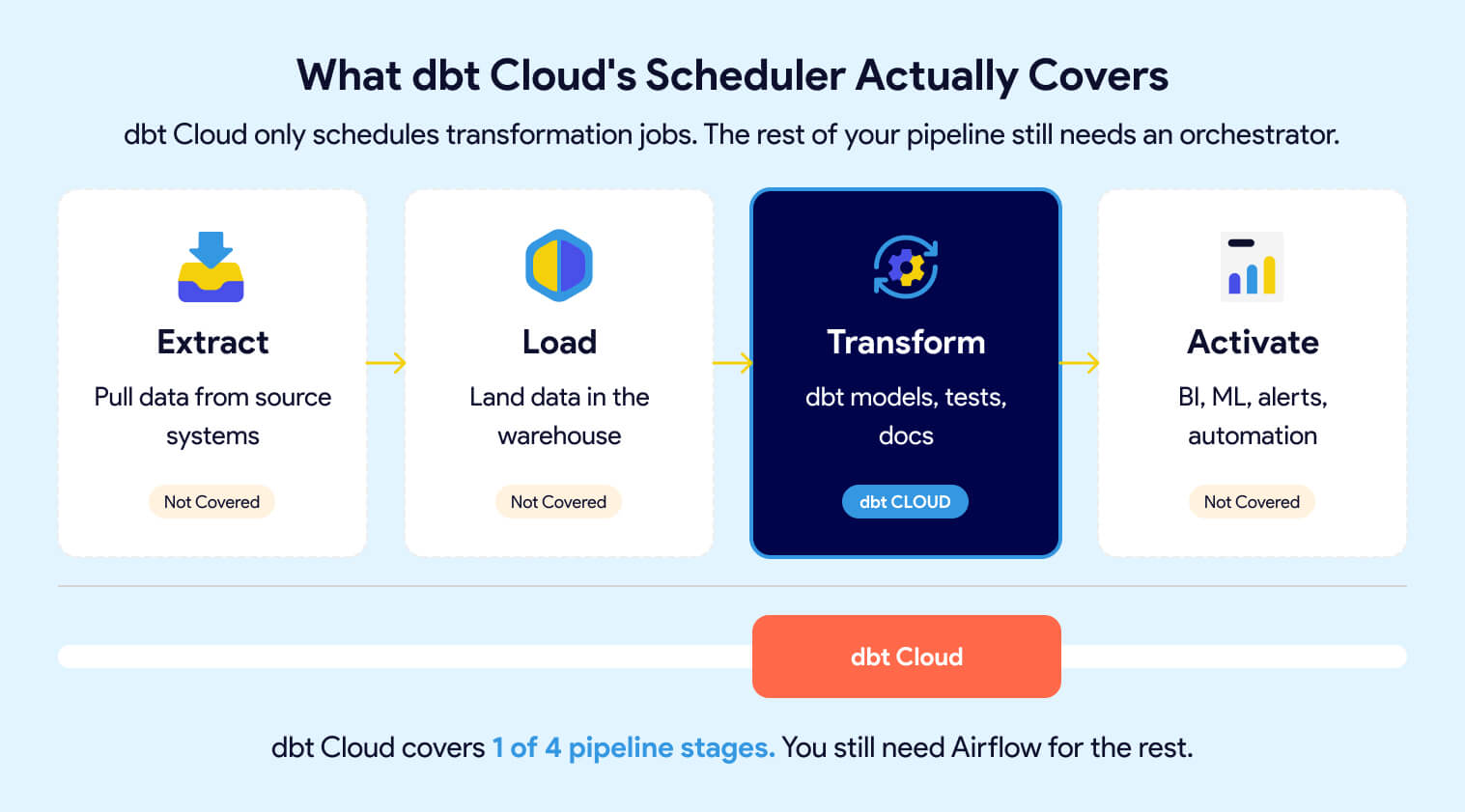

dbt Cloud includes a scheduler that automates dbt runs on a defined cadence. This keeps your transformation models fresh and your data reliable. But it only schedules dbt jobs, nothing else.

Your Extract and Load steps, downstream processes like BI refreshes, ML model retraining, marketing automation, and any cross-system coordination still need an orchestrator. dbt Cloud's scheduler does not replace Airflow. It replaces the need to cron-schedule dbt runs manually, but leaves the rest of the pipeline unmanaged.

Yes, unless your entire pipeline is just dbt transformations with nothing before or after them.

dbt Cloud schedules and runs your dbt jobs. That covers transformation, testing, and documentation. But most production pipelines involve more than that: extracting data from source systems, loading it into the warehouse, refreshing BI dashboards, triggering ML model retraining, sending alerts, and coordinating dependencies across all of these steps.

dbt Cloud does not orchestrate any of those. If your pipeline looks like this:

Extract → Load → Transform → Activate

Then dbt Cloud only covers one step. You still need an orchestrator like Airflow to tie the full pipeline together, manage dependencies between stages, handle retries, and provide end-to-end visibility when something breaks.

For small teams with a simple pipeline (one source, one warehouse, one dashboard), dbt Cloud's scheduler may be enough to start. But as soon as you add sources, downstream consumers, or cross-system dependencies, you'll need Airflow or a platform that includes it.

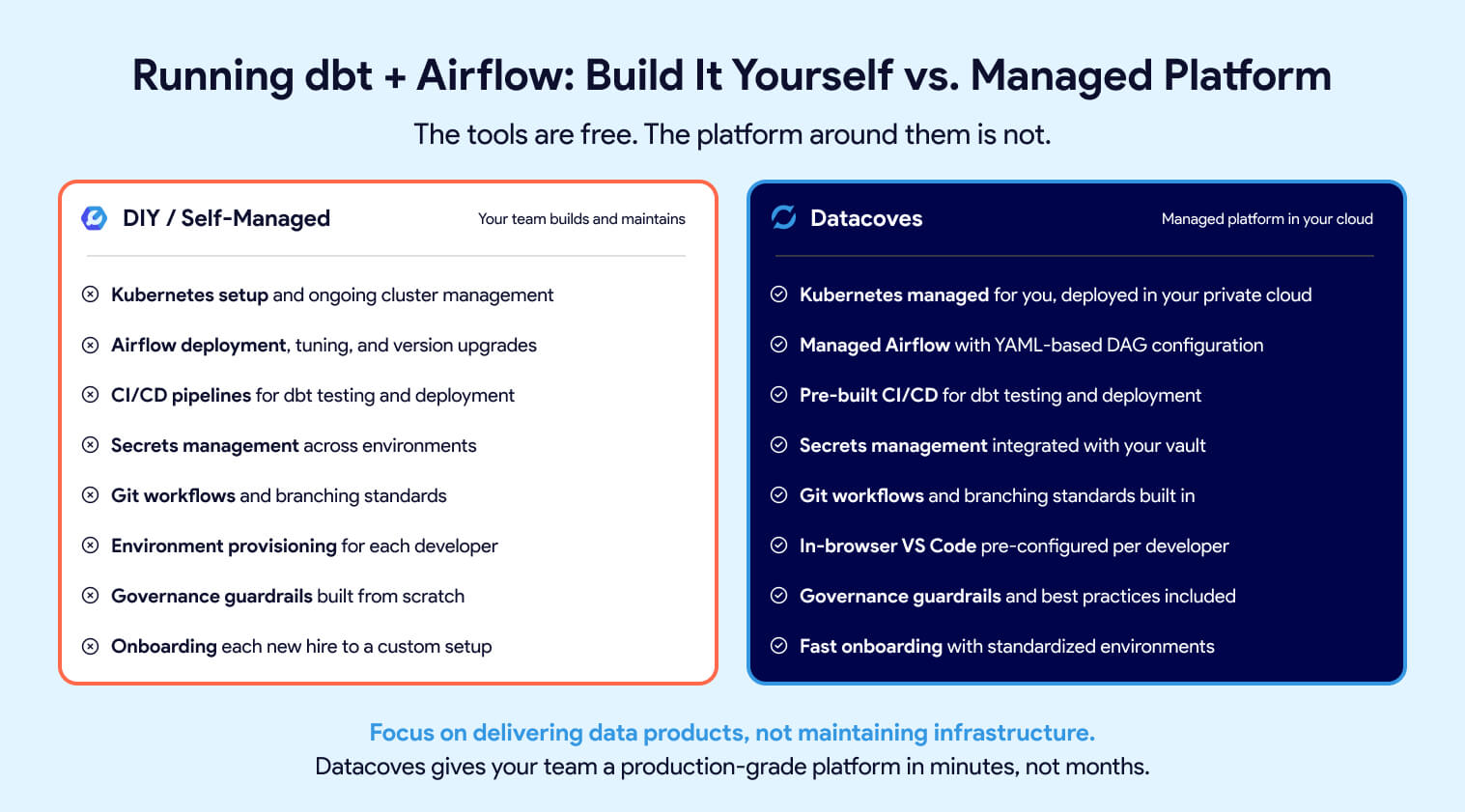

The hidden cost of choosing open-source dbt Core and Airflow is the platform you have to build around them. CI/CD pipelines, secrets management, environment provisioning, Git workflows, Kubernetes tuning, Airflow upgrades -- this is real engineering work that pulls your team away from delivering data products.

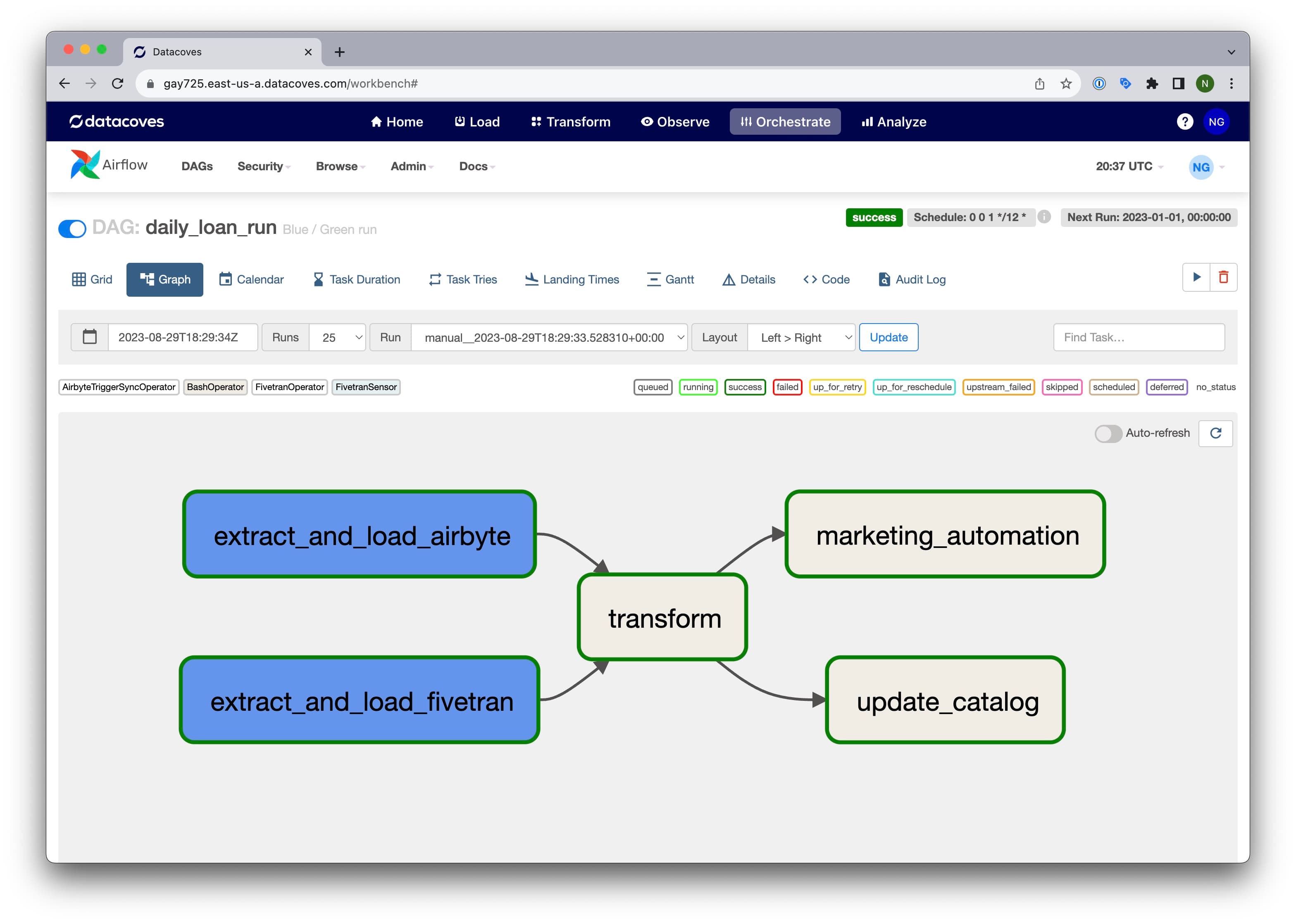

Datacoves eliminates that overhead. It provides managed dbt Core and managed Airflow in a unified environment deployed in your private cloud. Developers get an in-browser VS Code editor with dbt, Python, extensions, and Git pre-configured. Orchestration is handled through Datacoves' managed Airflow, which includes a simplified YAML-based DAG configuration that connects Extract, Load, and Transform steps without writing boilerplate Python.

Best practices, governance guardrails, and accelerators are built in, so teams get a production-grade data platform in minutes instead of months. For a deeper look at the tradeoffs, see our guide on building vs. buying your analytics platform.

To learn more about Datacoves, check out our product page.

This is not an either-or decision. dbt and Airflow solve different problems, and trying to force one tool to do the other's job creates fragile, hard-to-maintain pipelines.

Use dbt for transformation, testing, documentation, and lineage. Use Airflow for scheduling, dependency management, retries, and end-to-end pipeline coordination. The comparison table below shows where each tool fits.

If you need to pick one tool to start with, here's the decision framework:

Start with dbt if your immediate priority is transforming raw data into reliable, tested models in the warehouse. dbt gives you version control, testing, documentation, and lineage from day one. For small teams with simple pipelines, a cron job or dbt Cloud's scheduler can handle automation in the short term.

Start with Airflow if you already have multiple systems that need coordination; extraction from SaaS sources, loading into the warehouse, transformation, and downstream activation. Airflow gives you end-to-end visibility and control across the full pipeline.

The reality: most teams outgrow a single-tool approach quickly. Once your pipeline spans more than one step, you need both. The question isn't which tool to pick, it's how soon you'll need the other one. If you're still evaluating transformation tools, check out our dbt alternatives comparison.

In a production pipeline, the tools fit together like this:

Airflow owns the "when" and "what order." dbt owns the "how" for transformation. Neither tool steps on the other's responsibilities.

The challenge is running them together. Deploying Airflow and dbt Core in a shared, production-grade environment requires Kubernetes, CI/CD, secrets management, environment provisioning, and ongoing maintenance. For teams that don't want to build and maintain that platform layer themselves, a managed solution like Datacoves provides both tools pre-integrated in your private cloud -- so your team focuses on data, not infrastructure.

dbt and Airflow are not competing tools. dbt transforms your data. Airflow orchestrates your pipeline. Trying to use one without the other leaves gaps that show up as fragile pipelines, manual workarounds, and constant firefighting. The teams that struggle most are the ones who pick the right tools but never invest in the platform layer that makes them work together.

The real question is not "dbt vs Airflow," it's how you run them together without building and maintaining a platform from scratch. That infrastructure layer (Kubernetes, CI/CD, secrets, environments, Git workflows, upgrades) is where most teams burn time and budget.

Datacoves gives you managed dbt Core and managed Airflow in a unified environment, deployed in your private cloud, with best practices and governance built in. Your team focuses on delivering data products, not maintaining the platform underneath them.

dbt testing gives data teams a way to validatedata quality, transformation logic, and governance standards directly insidetheir development workflow. Out of the box, dbt Core includes generic tests (unique, not_null, accepted_values, relationships), singular tests for custom SQL assertions, and unit tests for verifying transformation logic before it reaches production. Community packages like dbt-utils and dbt-expectations add dozens more. Combined with CI/CD tools like dbt-checkpoint, teams can enforce testing and documentation standards on every pull request.

This guide covers every layer: what ships with dbt Core, which packages to add, how to store and review results, and how to integrate testing into your CI/CD pipeline.

dbt has two main categories of tests: data tests and unit tests.

Data tests validate the integrity of actual warehouse data and run with every pipeline execution. They come in two forms: generic tests, defined in YAML and applied across models, and singular tests, written as standalone SQL assertions for specific conditions. Under the hood, dbt compiles each data test to a SQL SELECT statement and executes it against your database. If any rows are returned, dbt marks the test as failed.

Unit tests, introduced in dbt 1.8, validate transformation logic using static, predefined inputs. Unlike data tests, they are designed to run during development and CI only, not in production.

.png)

dbt Core ships with four built-in generic tests that cover the most common data quality checks.

unique verifies that every value in a column contains no duplicates. Use this on identifiers like customer_id or order_id to catch accidental duplication in your models.not_null checks that a column contains no null values. This is especially useful for catching silent upstream failures where a field stops being populated.accepted_values validates that a column only contains values from a defined list. For example, a payment_status column might only allow pending, failed, accepted, or rejected. This test will catch it if a new value like approved appears unexpectedly.relationships checks referential integrity between two tables. If an orders table references customer_id, this test verifies that every customer_id in orders has a matching record in the customers table.You apply generic tests by adding them to the model's property YAML file.

Generic tests also support additional configurations that give you more control over how and when they fail.

where limits the test to a subset of rows, useful on large tables where you want to test only recent data or exclude specific values.severity controls whether a test failure blocks execution. Set to warn to flag issues without stopping the pipeline, or keep the default error for critical checks.name overrides the auto-generated test name. Since dbt generates long default names, a custom name makes logs and audit tables much easier to read.

Singular tests are custom SQL assertions saved in your tests/ directory. Each file contains a query that returns the rows failing the test. If the query returns any rows, dbt marks the test as failed.

Use singular tests when built-in generic tests or package tests do not cover your specific business logic. A good example: verifying that sales for one product stay within +/- 10% of another product over a given period. That kind of assertion is too specific for a reusable generic test but straightforward to express in SQL.

When you find yourself writing similar singular tests repeatedly across models, that is a signal to convert the logic into a custom generic test instead.

Custom generic tests work like dbt macros. They can be stored in tests/generic/ or in your macros/ directory. Datacoves recommends tests/generic/ as the default location, but macros/ makes more sense when the test depends on complex macro logic. At minimum, a custom generic test accepts a model parameter, with an optional column_name if the test applies to a specific column. Additional parameters can be passed to make the test more flexible.

Once defined, a custom generic test can be applied across any model or column in your project, just like the built-in tests. This makes them the right choice when you have business logic that repeats across multiple models but is too specific to find in a community package.

Unit tests, available natively since dbt 1.8, validate that your SQL transformation logic produces the expected output before data reaches production.

Unlike data tests that run against live warehouse data on every pipeline execution, unit tests use static, predefined inputs defined as inline values, seeds, or SQL queries in your YAML config. Because the expected outputs don’t change between runs, there’s no value in running unit tests in production. Run them locally during development and in your CI pipeline when new transformation code is introduced.

If your project is on a version older than dbt 1.8, upgrading is the recommended path. Community packages that previously filled this gap (dbt-unit-testing, dbt_datamocktool, dbt-unittest) are no longer actively maintained and are not recommended for new projects.

Source freshness checks aren’t technically dbt tests, but they solve one of the most common silent failure modes in data pipelines: a source stops updating, but the pipeline keeps running without any errors.

Freshness checks are configured in your sources.yml file with warn_after and error_after thresholds. When dbt detects that a source has not been updated within the defined window, it raises a warning or error before your models run. This is especially critical for time-sensitive reporting, where stale data can be worse than no data at all.

dbt Core's built-in tests cover the fundamentals, but the community has built a rich ecosystem of packages that extend testing well beyond what ships out of the box. Packages are installed via your packages.yml file and sourced from dbt Hub.

dbt-utils, maintained by dbt Labs, is the most widely used dbt testing package. It adds 16 generic tests alongside SQL generators and helper macros that complement dbt Core's built-in capabilities.

not_accepted_values is the inverse of accepted_values. Use it to assert that specific values are never present in a column.equal_rowcount confirms that two tables have the same number of rows. This is particularly useful after transformation steps where row counts should be preserved.fewer_rows_than validates that a target table has fewer rows than a source table, which is the expected result after any aggregation step.For the full list of all 16 generic tests with usage examples, see the Datacoves dbt-utils cheatsheet.

dbt-expectations, maintained by Metaplane, ports the Python library Great Expectations into the dbt ecosystem. It gives analytics engineers 62 reusable generic tests without adding a separate tool to the stack.

The package covers seven categories:

The string matching and aggregate function categories in particular cover validations that would otherwise require custom singular tests. Full documentation is available on the dbt-expectations GitHub repository.

dbt_constraints, created by Snowflake, generates database-level primary key, unique key, and foreign key constraints directly from your existing dbt tests. It is primarily designed for Snowflake, with limited support on other platforms.

When added to a project, it automatically creates three types of constraints.

dbt_utils.unique_combination_of_columns.relationships tests.This package is most valuable for teams that want database constraints alongside dbt's test-based validation, not instead of it. Snowflake doesn’t enforce most constraints at write time, but the query optimizer uses them for better execution plans. Some tools also read constraints to reverse-engineer data model diagrams or add joins automatically between tables.

For most dbt projects, dbt-utils and dbt-expectations are the two packages worth adding first. Layer in dbt_constraints when your use case specifically calls for database-level constraints on Snowflake.

By default, dbt doesn’t persist test results between runs. You can change this by setting store_failures: true in your dbt_project.yml or at the individual test level.

This is useful for spot-checking failures after a run, but each execution overwrites the previous results, so it does not give you historical visibility into data quality trends over time. The tools below address that gap with lightweight reporting options.

When store_failures: true is enabled at the project or test level, dbt writes the rows that fail each test into tables in your warehouse under a schema named [your_schema]_dbt_test__audit. This creates one table per test, containing the actual records that triggered the failure.

This is a practical first step for teams that want to inspect failures without setting up a dedicated observability platform. The main limitation: each run overwrites the previous results, so you cannot track failure trends over time without additional tooling.

Datacoves recommends dbt audit tables in a separate database to keep your production environment clean. On platforms like Snowflake, this also reduces overhead for operations like database cloning.

dq-tools stores dbt test results and makes them available for visualization in a BI dashboard. If your team already has a BI layer in place, this is a lightweight way to surface data quality metrics alongside existing reporting without adding a separate observability tool.

datacompy is a Python library for migration validation. It performs detailed table comparisons, reporting on row-level differences, column mismatches, and statistical summaries. It’s not a dbt package, but it integrates naturally into migration workflows that use dbt for transformation.

Standard dbt tests aren’t designed for row-by-row comparison across systems, which makes datacompy a useful complement when you’re migrating from legacy platforms.

The tests covered so far validate data. The tools below validate your dbt project itself, catching governance and compliance issues at the development and PR stage rather than in production.

dbt-checkpoint, maintained by Datacoves, enforces project governance standards automatically so teams don’t rely on manual code review to catch missing documentation, unnamed columns, or hardcoded table references. It runs as a pre-commit hook, blocking non-compliant code before it’s pushed to the main branch, and can also run in CI/CD pipelines to enforce the same checks on every pull request.

Out of the box it validates things like whether models and columns have descriptions, whether all columns defined in SQL are present in the corresponding YAML property file, and whether required tags or metadata are in place.

It's a natural fit for dbt Core teams that want Git-native governance without additional infrastructure. The tradeoff: it requires familiarity with pre-commit configuration to set up and extend. Like all Python-based governance tools, it doesn't run inside the dbt Cloud IDE.

dbt-bouncer, maintained by Xebia, takes an artifact-based approach, validating against manifest.json, catalog.json, and run_results.json rather than running as a pre-commit hook. It requires no direct database connection and can run in any CI/CD pipeline that has access to dbt artifacts. Checks cover naming patterns, directory structure, and description coverage, and it can run as a GitHub Action or standalone Python executable.

dbt-project-evaluator, maintained by dbt Labs, takes a different approach. Rather than running as an external tool, it is a dbt package: it materializes your project's DAG structure into your warehouse and runs dbt tests against it.

It checks for DAG issues (model fanout, direct joins to sources, unused sources), testing and documentation coverage, naming convention violations, and performance problems like models that should be materialized differently.

The main limitation is adapter support: it works on BigQuery, Databricks, PostgreSQL, Redshift, and Snowflake, but not on Fabric or Synapse. Because it materializes models into your warehouse, it has a slightly higher execution cost than artifact-based tools.

dbt-score, maintained by Picnic Technologies, is a Python-based CLI linter that reads your manifest.json and assigns each model a score from 0 to 10 based on metadata quality: missing descriptions, absent owners, undocumented columns, models without tests.

The scoring approach makes it easy to track improvement over time and prioritize which models need attention, without enforcing hard pass/fail gates on every PR. Custom rules are fully supported. It requires no database connection and no dbt run, just a manifest.json.

These tools aren’t mutually exclusive, and many teams combine them. For dbt Core teams, dbt-checkpoint is the recommended starting point: it enforces governance at the PR stage with minimal setup and can run both locally and in CI/CD pipelines. dbt-bouncer is worth evaluating if your team wants artifact-based validation running in an external CI/CD pipeline. Add dbt-project-evaluator when you want DAG-level structural checks alongside documentation and testing coverage. Layer in dbt-score for ongoing visibility into metadata quality across your project without hard enforcement gates.

dbt testing isn’t an all-or-nothing investment. The most effective approach is to start with what ships in dbt Core and expand coverage as your team builds confidence.

A practical progression looks like this: start with unique, not_null, and relationships tests on source tables and mart models. Add dbt-utils and dbt-expectations as your testing needs grow beyond the basics. When your team is ready to enforce governance and metadata standards, dbt-checkpoint is the simplest starting point for dbt Core teams, with dbt-project-evaluator and dbt-score as complementary layers.

If your organization is still running transformations in legacy tools like Talend, Informatica, or Python, you do not need to wait for a full migration to start benefiting from dbt testing. dbt sources can point to any table in your warehouse, whether or not dbt created it, so you can layer dbt tests and documentation on top of your existing pipeline today. See Using dbt to Document and Test Data Transformed with Other Tools for a step-by-step walkthrough.

Where teams hit friction isn’t usually the tests themselves. It’s managing the infrastructure, environments, CI/CD pipelines, and orchestration around them. Datacoves handles that layer, giving your team a managed dbt and Airflow environment so they can focus on building and testing models rather than maintaining tooling. Learn more about how Datacoves compares to other dbt solutions.

You now know what dbt (data build tool) is all about. You are being productive, but you forgot what `dbt build` does or you forgot what the @ dbt graph operator does. This handy dbt cheat sheet has it all in one place.

With the advent of dbt 1.6, we updated the awesome dbt cheat sheet created originally by Bruno de Lima

We have also moved the dbt jinja sheet sheet to a dedicated post.

This reference summarizes all the dbt commands you may need as you run your dbt jobs or study for your dbt certification.

If you ever wanted to know what the difference between +model and @model is in your dbt run, you will find the answer. Whether you are trying to understand dbt graph operators or what the dbt retry command does, but this cheat sheet has you covered. Check it out below.

These are the principal commands you will use most frequently with dbt. Not all of these will be available on dbt Cloud

The dbt commands above have options that allow you to select and exclude models as well as deferring to another environment like production instead of building dependent models for a given run. This table shows which options are available for each dbt command

By combining the arguments above like "-s" with the options below, you can tell dbt which items you want to select or exclude. This can be a specific dbt model, everything in a specific folder, or now with the latest versions of dbt, the specific version of a model you are interested in.

dbt Graph Operator provide a powerful syntax that allow you to hone in on the specific items you want dbt to process.

The following commands are used less frequently and perform actions like initializing a dbt project, installing dependencies, or validating that you can connect to your database.

The flags below immediately follow the dbt command and go before the subcommand e.g. dbt <FLAG> run

Read the official dbt documentation

As a managed dbt Core solution, the Datacoves platform simplifies the dbt Core experience and retains its inherent flexibility. It effectively bridges the gap, capturing many benefits of dbt Cloud while mitigating the challenges tied to a pure dbt Core setup. See if Datacoves dbt pricing is right for your organization or visit our product page.

Please contact us with any errors or suggestions.

When you are learning to use a new tool or technology, one of the hardest things is learning all the new terminology. As we pick up language throughout our lives, we develop an association between words and our mental model of what they represent. The next time we see the word again that picture pops up in our head and if the word is now being used to mean something new, we must create a new mental model. . In this post, we introduce some core dbt (data building tool)terminology and how it all connects.

Language understanding is interesting in that once we have a mental model of a term, we have a hard time grasping the new association. I still remember the first time I spoke to someone about the Snowflake Data Warehouse, and they used the term warehouse. To me, the term had two mental models. One was a place where we store a lot of physical goods, type Costco Warehouse into Google and the first result is Costco Wholesale, a large retailer in the US that is so big it is literally a warehouse full of goods.

I have also worked in manufacturing, so I also associated a warehouse as the place where raw materials and finished goods are stored.

In programming, we would say we are overloading the term warehouse to mean different things.

In some programming languages, function overloading or method overloading is the ability to create multiple functions of the same name with different implementations – Wikipedia

We do this type of thing all the time and don’t think twice about it. However, if I say “I need a bass” do you know what I am talking about?

In my Snowflake example, I knew the context was technology and more specifically something to do with databases, so I already had a mental model for a warehouse. It’s even in Wikipedia's description of the company.

Snowflake Inc. is a cloud computing-based data warehousing company - Wikipedia

I knew of data warehouses from Teradata and Amazon (Redshift), so it was natural for me to think of a warehouse as a technology and a place where lots of data is stored. In my mind, I quickly thought of

For those new to the term warehouse, I may have lost you already. Maybe you are new to dbt and you come from the world of tools like Microsoft Excel, Alterix, Tableau, and PowerBI. If you know all this, grant me a few minutes to bring everyone up to speed.

Let’s step back and first define a database.

A database is an organized collection of structured information, or data, typically stored electronically in a computer system - Oracle

Ok, you probably know Excel. You have probably also seen an Excel Workbook with many sheets. If you organize your data neatly in Excel like the image below, we could consider that workbook a database.

Going back to the definition above “organized collection of structured information” you can see that we have structured information, a list of orders with a Date, Order Quantity, and Order Amount. We also have a collection of these, namely Orders and Invoices.

In database terms, we call each Excel sheet a table and each of the columns an attribute.

Now back to a warehouse. This was my mental model of a warehouse.

A data warehouse (DW or DWH), also known as an enterprise data warehouse (EDW), is a system used for reporting and data analysis and is considered a core component of business intelligence. DWs are central repositories of integrated data from one or more disparate sources. They store current and historical data in one single place that are used for creating analytical reports for workers throughout the enterprise - Wikipedia

Again, if you are new to all this jargon, the above definition might not make much sense to you. Going back to our Excel Example. In an organization, you have many people with their own “databases” like the example above. Jane has one, Mario has another, Elena has a third. All have some valuable information we want to combine in order to make better decisions. So instead of keeping these Excel workbooks separately, we put them all together into a database and now we call that a warehouse. We use this central repository for our “business intelligence”

So, knowing all of this, when I heard of a Snowflake warehouse the above is what I thought. It is the place where we have all the data, duh. Just like Redshift and Teradata. But look at what the people at Snowflake did, they changed the meaning on me.

A virtual warehouse, often referred to simply as a “warehouse”, is a cluster of compute resources in Snowflake. - Snowflake

The term warehouse here is no longer about the storage of things it now means “cluster of compute” A what of what?

Ok, let’s break this down. You are probably reading this on a laptop or some other mobile device. That device stores all your documents and when you perform some actions it “computes” certain things. Well, in Snowflake the storage of the information is separate and independent of the computation on the things that are stored. So, you can store things once and connect different “computers” to it. Imagine you were performing a task on your laptop, and it was slow. What if you could reach in your desk drawer, pull out a faster computer, and speed up the task that was slow, well, in Snowflake you can. Also, instead of just having one computer doing the work, they have a cluster of computers working together to get the job done even faster.

As you can see, language is tricky, and creating a shared understanding of it is crucial to advancing your understanding and mastery of the technology. Every Snowflake user develops the new mental model for a warehouse and using it is second nature, but we forget that these terms that are now natural to us may still be confusing to newcomers.

Let’s start with dbt. When you join the dbt Slack community you will inevitably learn that the preferred way to write dbt is all lower case. Not DBT, not Dbt, just dbt. I still don’t know why exactly, but you may have noticed that everyone in this space always puts “dbt (Data Build Tool)”

If you have some knowledge of Behavioral Therapy you may already know that DBT has a different meaning. Dialectical behavior therapy (DBT)

Dialectical behavioral therapy (DBT) is a type of cognitive-behavioral therapy. Cognitive-behavioral therapy tries to identify and change negative thinking patterns and pushes for positive behavioral changes.

Did you notice how they do the inverse? They spell out Dialectical behavior therapy and put DBT in parenthesis. So, maybe the folks at Fishtown Analytics, now dbt Labs came across this other meaning for DBT and chose to differentiate by using lowercase, or maybe it was to mess with all of the newbies lol.

So update your auto-correct and don’t let dbt become DBT or Dbt or you will hear from someone in the community, haha.

Now let’s do a quick rundown of terms you will hear in dbt land which may confuse you as you start your dbt journey. I will link to the documentation with more information. My job here is to hopefully create a good mental model for you, not to teach you all the ins and outs of all of these things.

This is simply some data that you put into a file and make it part of your project. You put it in the seeds folder within your dbt project, but don’t use this as your source to populate your data warehouse, these are typically small files you may use as lookup tables. If you are using an older version of dbt, the folder would be data instead of seeds. That was another source of confusion, so now the term seed and the directory seed are more tightly connected. The format of these files must be CSV, more information can be found via the link above.

Jinja is a templating engine with syntax similar to the Python programming language that allows you to use special placeholders in your SQL code to make it dynamic. The stuff you see with {{ }} is Jinja.

Without Jinja, there is no dbt. I mean it is the combination of Jinja with SQL that gives us the power to do things that would otherwise be very difficult. So, when you see the lineage you get in the dbt documentation, you can thank Jinja for that.

I knew you would have this question. Well, a macro is simply a reusable piece of code. This too adds to the power of dbt. Every newcomer to dbt will quickly learn about the ref and source macros. These are the cornerstone of dbt. They help capture the relationship and sequence of all your data transformations. Sometimes you are using macros and you may not even realize it. Like the not_null test in your yml file, that’s a macro.

Behind the scenes, dbt is taking information in your yml file and sending parameters to this macro. In my example, the parameter model gets replaced with base_cases (along with the database name and schema name) and colum_name gets replaced with cases. The compiled version of this test looks like this:

There are dbt packages like dbt-expectations that extend the core dbt tests by adding a bunch of test macros, so check it out.

What do you do when you have a lot of great macros that you want to share with others in the community? You create a dbt package of course.

But what is a dbt package? A package is simply a mini dbt project that can be incorporated into your dbt project via the packages.yml file. There are a ton of great packages and the first one you will likely run into is dbt-utils. These are handy utilities that will make your life easier. Trust me, go see all the great things in the dbt-utils package.

Packages don’t just have macros though. Remember, they are mini dbt projects, so some packages incorporate some data transformations to help you do your analytics faster. If you and I both need to analyze the performance of our Google Ads, why should we both have to start from scratch? Well, the fine folks over at Fivetran thought the same thing and created a Google Ads package to help.

When you run the command dbt deps, dbt will look at your packages.yml file and download the specified packages to the dbt_packages directory of your dbt project. If you are on an older version of dbt, packages will be downloaded to the dbt_modules directory instead, but again you can see how this could be confusing hence the updated directory name.

There are many packages and new ones arrive regularly. You can see a full listing on dbt hub.

This is the website maintained by dbt Labs with a listing of dbt packages.

As a side note, we at Datacoves also maintain a similar listing of Python libraries that enhance the dbt experience in our dbt Libraries page. Check out all the libraries that exist. From additional database adapters to tools that can extract data from your BI tool and connect it with dbt, there’s a wealth of great open-source projects that take dbt to another level. Keep in mind that you cannot install Python libraries on dbt Cloud.

These are the SQL files you find in the models directory. These files specify how you want to transform your data. By default, each of these files creates a view in the database, but you can change the materialization of a model to something else and for example, have dbt create a table instead.

Materializations define what dbt will do when it runs your models. Basically, when you execute dbt run this is what happens.

All the code that dbt compiles and runs can be found in the dbt target directory

This term can be ambiguous to a new dbt user. This is because in dbt we use it interchangeably to mean two different things. As I used it above, I meant the directory within your dbt project where dbt commands write their output. If you look in this directory, you will see the compiled and run directories where I found the code I showed above.

Now that you know what dbt is doing under the hood, you can look in this directory to see what will be executed in the database. When you need to do some debugging, you should be able to take code directly from the compiled directory and run it on your database.

This is the other meaning for target. It refers to where dbt will create/materialize the objects in your database.

Again, dbt first compiles your model code and creates the files in the compiled directory. It then wraps the compiled code with the specified materialization and saves the resulting code in the run directory. Finally, it executes that code in your database target. It is the final file in the run directory that is executed in your database.

The image above is the code that runs in my Snowflake instance.

But how does dbt know which database target to use? You told it when you set up your dbt profile which is normally stored in a folder called .dbt in your computer's home folder (dbt Cloud and Datacoves both abstract this complexity for you).

When you start using dbt, you learn of a file called profiles.yml This file has your connection information to the database and should be kept secret as it typically contains your username and password.

This file is called profiles, plural, because you can have more than one profile which you eventually realize is where the target database is defined. Here is a case where you can argue that a better name for this file is targets.yml, but you will learn later why the name profiles.yml was probably chosen and why this name makes sense.

Notice above that I have two different dbt targets defined below the word outputs, dev and prd. dbt can only work on one target at a time so if you want to run dbt against two different databases you can specify them here. Just copy the dev target, give it a new name, and change some of the parameters.

Think of the word outputs on line 3 above as targets. Notice in line 2 the line target: dev this tells dbt which target it should use as your default. In my case, unless I specify otherwise, dbt will use the dev target as my default connection. Hence it will replace the Jinja ref macro with my development database.

How would you use the other target? You simply pass the target parameter to the dbt command like

dbt run --target prd or dbt run -t prd

What is that default: thing on the first line of my profiles.yml file?

Well you see, that’s the name given to your dbt profile, which by default is well, default.

The dbt project is what is created when you create a project via the dbt init command. It includes all of the folders you typically associate with a dbt project and includes a configuration file called dbt_project.yml. If you look at your dbt_project.yml file, you will find something similar to this.

In line 10 you can see which profile dbt will look for in your profiles.yml file. If I change that line and try to run dbt, I will get an error.

NOTE: For those paying close attention, you may have seen I used-s and not -m when selecting a specific model to run. This is the new/preferred way to select what dbt will run.

So now you see why profiles.yml is called profiles.yml and not targets.yml, because you can have multiple profiles in the file. In practice, I think people normally only have one profile, but nothing is preventing you from creating more and it might be handy if you have multiple dbt projects each with different connection information.

Those smart folks at Fishtown Analytics build in this flexibility for a very specific use case. You see, they were originally an analytics consulting company and developed dbt to help them do their work more efficiently. You can imagine that they were working with multiple clients whose project timelines overlapped so by having multiple profiles they could point each independent dbt project to a different profile in the profiles.yml file with each client's database connection information. Something like this.

Now that I have a profile called company_a in my profiles.yml that matches what I defined in my dbt_project.yml dbt will run correctly.

There is a ton of stuff to learn in your dbt journey and starting out with a solid foundation can help you better communicate and quickly progress through the learning curve.

Fishtown Analytics, now dbt Labs, created dbt to meet a real need they had and some of their shared vocabularies made it into the names we now use in the community. Those of us who have made it past the initial learning curve sometimes forget how daunting all the terminology can be for a newcomer.

There is a wealth of information you can find in the dbt documentation and our own dbt cheat sheet, but it takes some time to get used to all the new terms and understand how it's all connected. So next time you come across a newbie, think about the term that you are about to use and the mental model they will have when you tell them to update the seed. We need to take our new dbt seeds (people) and mature them into strong trees.

In our previous article we wrote about the various dbt tests, we talked about the importance of testing data and how dbt, a tool developed by dbt Labs, helps data practitioners validate the integrity of their data. In that article we covered the various packages in the dbt ecosystem that can be used to run a variety of tests on data. Many people have legacy ETL processes and are unable to make the move to dbt quickly, but they can still leverage the power of dbt and by doing so slowly begin the transition to this tool. In this article, I’ll discuss how you can use dbt to test and document your data even if you are not using dbt for transformation.

Ideally, we can prevent erroneous data from ever reaching our decision makers and this is what dbt was created to do. dbt allows us to embed software engineering best practices into data transformation. It is the “T” in ELT (Extract, Load, and Transform) and it also helps capture documentation, testing, and lineage. Since dbt uses SQL as the transformation language, we can also add governance and collaboration via DataOps, but that’s a topic for another post.

I often talk to people who find dbt very appealing, but they have a lot of investment in existing tools like Talend, Informatica, SSIS, Python, etc. They often have gaps in their processes around documentation and data quality and while other tools exist, I believe dbt is a good alternative and by leveraging dbt to fill the gaps in your current data processes, you open the door to incrementally moving your transformations to dbt,

Eventually dbt can be fully leveraged as part of the modern data workflow to produce value from data in an agile way. The automated and flexible nature of dbt allows data experts to focus more on exploring data to find insights.

The term ELT can be confusing, some people hear ELT and ETL and think they are fundamentally the same thing. This is muddied by marketers who try to appeal to potential customers by suggesting their tool can do it all. The way I define ELT is by making sure that data is loaded from the source without any filters or transformation. This is EL (Extract and Load). We keep all rows and all columns. Data is replicated even if there is no current need. While this may seem wasteful at first, it allows Analytic and Data Engineers to quickly react to business needs. Have you ever faced the need to answer a question only to find that the field you need was never imported into the data warehouse? This is common especially in traditional thinking where it was costly to store data or when companies had limited resources due to data warehouses that coupled compute with storage. Today, warehouses like Snowflake have removed this constraint so we can load all the data and keep it synchronized with the sources. Another aspect of modern EL solutions is making the process to load and synchronize data simple. Tools like Fivetran and Airbyte allow users to easily load data by simply selecting pre-build connectors for a variety of sources and selecting the destination where the data should land. Gone are the days of creating tables in target data warehouses and dealing with changes when sources add or remove columns. The new way of working is helping users set it and forget it.

In an environment where other transformation tools are used, you can still leverage dbt to address gaps in testing. There are over 70 pre-built tests that can be leveraged, and custom tests can be created by just using SQL. dbt can test data anywhere in the transformation lifecycle. It can be used at the beginning of the workflow to test or verify assumptions about data sources and the best part is that these data sources or models do not need to be a part of any ongoing project within dbt. Imagine you have a raw customer table you are loading into Snowflake. We can connect this table to dbt by creating a source yml file where we tell dbt where to find the table by providing the name of the database, schema, and table. We can then add the columns to the table and while we are at it, we can add descriptions.

The image below illustrates how test would be added for a CUSTOMER table in the SNOWFLAKE_SAMPLE_DATA database in the TPCH_SF100 schema.

So far we have done what you would learn on a standard dbt tutorial, you start with some source, connect it to dbt, and add some tests. But the reality is, dbt doesn’t really care if the table that we are pointing to is a true "source" table or not. To dbt, any table can be a source, even an aggregation, reporting table, or view. The process is the same. You create a yml file, specify the “source” and add tests.

Let’s say we have a table that is an aggregate for the number of customers by market segment. We can add a source that points to this table and check for the existence of specific market segments and a range of customers by segment.

Using this approach, we can leverage the tests available in dbt anywhere in the data transformation pipeline. We can use dbt_utils.equal_rowcount to validate that two relations have the same number of rows to assure that a transformation step does not inadvertently drop some rows.

When we are aggregating, we can also check that the resulting table has fewer rows than the table we are aggregating by using the dbt_utils.fewer_rows_than test.

Notice that you can use the source macro when referring to another model outside of dbt. As long as you register both models as sources, you can refer to them. So when you see documentation that refers to the ref() macro, just substitute with the source macro as I did above.

Also, note that even though documentation may say this is a model test, you can use this in your source: definition as I have done above.

In dbt sources, we can also add documentation like so:

These descriptions will then show up in the dbt docs.

dbt is a great tool for transforming data, capturing documentation, and lineage, but if your company has a lot of transformation scripts using legacy tools, the migration to dbt may seem daunting and you may think you cannot leverage the benefits of dbt.

By leveraging source definitions you can take advantage of dbt’s ecosystem of tests and ability to document even if transformations are done using other tools.

Gradually the organization will realize the power of dbt and you can gradually migrate to dbt. For the data to be trusted, it needs to be documented and tested and dbt can help you in this journey.

.png)

dbt Core and dbt Cloud both run the same transformation engine. The difference is in who manages the infrastructure around it.

dbt Core is open-source and free. It gives you full control over your environment but requires your team to build and maintain orchestration, CI/CD, developer environments, and secrets management.

dbt Cloud is a managed SaaS platform built on dbt Core. It simplifies setup with a built-in IDE, job scheduler, and CI/CD, but limits flexibility, restricts private cloud deployment, and can get expensive at scale.

Managed dbt Core platforms like Datacoves offer a third path: the operational simplicity of dbt Cloud with the flexibility and security of dbt Core, deployed in your own private cloud.

The right choice depends on your team's engineering capacity, security requirements, and how much infrastructure you want to own.

dbt Core and dbt Cloud both run the same transformation engine. The difference is in who manages the infrastructure around it.

dbt (data build tool) is an open-source transformation framework for building, testing, and deploying SQL-based data models. When people say "dbt," they're almost always talking about dbt Core, the engine that everything else is built on.

dbt Core is the open-source CLI tool maintained by dbt Labs. It's free, runs in any environment, and gives teams full control over their setup. Scheduling, CI/CD, and developer tooling are not included. Teams assemble those separately.

dbt Cloud is a managed SaaS platform built on dbt Core. It adds a web IDE, job scheduler, CI/CD integrations, a proprietary semantic layer, and metadata APIs. Setup is faster, but flexibility and private cloud deployment are limited.

Managed dbt platforms like Datacoves run dbt inside your own cloud with the surrounding infrastructure already in place: IDE, orchestration, CI/CD, secrets management, all managed for you.

All three run the same transformation engine. Everything else is a platform decision.

The table below covers the key decision points. Sections that follow go deeper on each one.

With dbt Core, every developer sets up their own environment. That means installing dbt, configuring a connection to the warehouse, managing Python versions, and handling dependencies like SQLFluff or dbt Power User. On paper, straightforward. In practice, setup can take anywhere from a few hours to several days depending on the developer's experience and the organization's IT constraints.

Pre-configured company laptops often ship with software that may conflict with dbt. Proxy settings, restricted package registries, and corporate firewall rules add friction before a developer writes a single line of SQL.

The upside is full control. Teams can use any IDE they prefer: VS Code, Cursor, PyCharm, or whatever fits their workflow. There are no constraints on tooling choices, and developers who already have strong local environment preferences can keep working the way they work best.

The maintenance challenge grows with team size. Every dbt version upgrade needs to happen in sync across all developers. On a small team that's manageable. On a team of 20 or more, someone is always on a different version, and those mismatches cause inconsistent behavior, failed CI runs, and debugging sessions that should never have happened. Organizations that skip upgrades to avoid the coordination cost accumulate technical debt that gets harder to unwind over time.

dbt Cloud's web IDE lets developers log in through a browser and start writing SQL without installing anything locally. No Python, no CLI, no profiles.yml. For analytics engineers who are new to dbt or unfamiliar with command-line tools, this is a genuine advantage.

The trade-off is flexibility. The web IDE does not support VS Code extensions or custom Python libraries. Teams that rely on SQLFluff configurations, internal Python packages, or warehouse-specific extensions like the Snowflake VS Code plugin will find it limiting.

dbt Cloud also offers a CLI option that lets developers work locally in VS Code while dbt Cloud handles compute. Many teams end up running both: newer analysts in the web IDE, senior engineers on the CLI. But the CLI path reintroduces the local environment problems the web IDE was supposed to solve. SQLFluff versions, Python dependencies, and VS Code extensions still need to be installed and kept in sync across every developer's machine. On larger teams, that version drift shows up quickly.

Datacoves provides VS Code running in the browser, fully managed and pre-configured. Developers get the VS Code they already know, without any local installation. Warehouse connections, Git configuration, Python environments, and tooling like SQLFluff are set up out of the box.

Where Datacoves differs from dbt Cloud's web IDE: the environment is fully extensible. Teams can install any VS Code extension, add internal Python libraries, and configure the workspace to match their standards. Organizations with proprietary packages or warehouse-specific tooling can bring those into the environment without workarounds.

Onboarding a new developer is a matter of clicks, not days. When dbt or a dependent library needs an upgrade, Datacoves handles it. Developers work in a consistent, current environment without touching it.

dbt Core has no built-in scheduler. Teams choose their own orchestration tool, with Apache Airflow being the most common choice in enterprise environments. This gives full flexibility: you can connect ingestion, transformation, and downstream activation steps into a single pipeline, trigger internal tools behind the firewall, and orchestrate anything in your stack.

That flexibility comes with real cost. Airflow is not simple to operate. Running it reliably at scale requires Kubernetes knowledge, careful resource management, and dedicated engineering attention. A production-grade Airflow setup with separate local development, testing, and production environments is a multi-month investment for most teams. When you add advanced features like external secrets management, alerting, and DAG version control and the scope grows further.

Teams that underestimate this often end up with a fragile single-environment setup or become dependent on the key people who understand how everything works until it doesn't.

dbt Cloud includes a built-in job scheduler with a clean UI for configuring run frequency, retries, and alerts. For teams that only need to run dbt on a schedule, it works well and requires no additional tooling.

The limitation becomes clear when pipelines grow beyond dbt. If you need to connect an ingestion step before transformation, trigger a downstream tool after a model run, or orchestrate anything outside dbt's scope, the built-in scheduler is not enough. dbt Cloud offers an API to trigger jobs from an external orchestrator, but that adds integration overhead and means maintaining two systems.

Enterprise teams with existing Airflow infrastructure often end up running dbt Cloud jobs triggered by Airflow anyway, which raises the question of why they're paying for a scheduler they're not using.

Datacoves includes managed Airflow as part of the platform. Two environments come pre-configured: a personal Airflow sandbox for each developer to test DAGs without affecting anyone else, and a shared Teams Airflow for production workflows. Both are pre-integrated with dbt and Airflow, so DAG creation for dbt runs is straightforward without custom operators or glue code.

Because Airflow runs inside your private cloud alongside dbt, it can reach internal systems, on-premise databases, and tools behind the corporate firewall. End-to-end pipelines that include ingestion, transformation, and activation steps all run in one orchestration layer without external API calls or cross-network dependencies.

Spinning up additional Airflow environments takes minutes, so enterprises can provision separate development, testing, and production environments without infrastructure work. Teams with complex testing requirements or multiple projects can have as many environments as they need.

Datacoves also supports simplified DAG creation using YAML, reducing the Python burden on teams that are primarily SQL-focused.

dbt Cloud covers transformation and scheduling, but it does not cover orchestration of the broader pipeline. Teams still need to run and maintain Airflow or another orchestrator alongside it.

dbt Core gives teams complete control over their CI/CD pipeline. Any Git provider works: GitHub, GitLab, Bitbucket, Azure DevOps, or internal systems like Bitbucket Server. Any CI tool works too: GitHub Actions, GitLab CI, Jenkins, CircleCI, or whatever the organization already runs behind the firewall.

That flexibility is genuinely valuable for enterprises that have invested in internal tooling. A team on Jenkins with Bitbucket can build a world-class dbt CI pipeline without compromising on either tool.

The cost is setup time. Docker images need to be built and maintained with the right dbt version, SQLFluff configuration, and Python dependencies. CI runners need to be provisioned and kept current. Notification routing to Slack, MS Teams, or email needs to be configured separately. None of this is insurmountable, but it adds up fast and requires platform engineering skills that not every data team has.

Developers also have no way to run CI checks locally before pushing, which means failed CI runs often require multiple commits to fix, slowing down the feedback loop.

dbt Cloud has built-in CI that automatically triggers a run when a pull request is opened. It builds only the modified models and their downstream dependencies in a temporary schema, posts results back to the PR, and cleans up when the PR is merged or closed. For teams on GitHub or GitLab, this works well and requires minimal configuration.

The constraints appear quickly in enterprise contexts. Native automated CI only works with GitHub, GitLab, and Azure DevOps on Enterprise plans. Teams on Bitbucket, AWS CodeCommit, Jenkins, or any internal Git or CI system get no automated CI. They can use the dbt API to trigger jobs manually, but that requires custom integration work that undermines the simplicity dbt Cloud is supposed to provide.

Customization is also limited. The CI pipeline runs dbt checks. Adding custom steps, internal validation scripts, or governance checks outside of what dbt Cloud natively supports requires workarounds. Teams with mature DataOps practices often find the built-in CI too rigid to fit their standards.

Datacoves provides pre-built CI/CD pipelines that work with any Git provider and any CI tool, including Jenkins and internal enterprise systems behind the firewall. The pipeline comes configured with dbt testing, SQLFluff linting, dbt-checkpoint governance checks, and deployment steps out of the box.

Developers can run the same CI checks locally before pushing changes, which catches issues before they reach the pipeline and dramatically reduces the back-and-forth of fixing failed CI runs. When the local check passes, the CI check passes.

Because the pipeline is fully customizable, teams can add any step they need: internal approval workflows, custom validation scripts, notifications to MS Teams, or integration with ticketing systems like Jira. There are no constraints on providers or tools.

dbt Core has no built-in semantic layer. Teams choose from several mature options depending on their warehouse and BI tool preferences.

Cube.dev is the most widely adopted open-source choice. It provides a headless semantic layer with its own API, caching, and broad BI tool support. Lightdash and Omni are strong alternatives that integrate tightly with dbt models and work well for teams that want metric definitions to live close to their transformation code.

For Snowflake users, the dbt_semantic_view package lets teams manage Snowflake Semantic Views directly from their dbt project. Metrics defined this way live in the warehouse itself and are accessible to any tool connected to Snowflake, without routing data through a third-party service.

The open-source path requires more setup and maintenance than a managed semantic layer, but it gives teams full control over where metrics are defined, how they are served, and which tools consume them.

dbt Cloud includes a hosted semantic layer powered by MetricFlow. MetricFlow was acquired from Transform in 2023 and open-sourced under Apache 2.0 at Coalesce 2025. The engine itself is now free to use. The hosted service in dbt Cloud is a paid feature available on Starter plans and above. Usage is metered by queried metrics per month and caching, which reduces repeated warehouse hits, is an Enterprise-only feature.

Supported BI integrations include Tableau, Power BI, Google Sheets, and Excel, among others. Most are generally available. The exception is Power BI, which is still in public preview and requires additional setup through an On-premises Data Gateway for Power BI Service.

Warehouse support is incomplete. Microsoft Fabric is not supported. When queries run through the dbt Cloud semantic layer, data passes through dbt Labs servers on the way back from the warehouse. For organizations in regulated industries with strict data residency requirements, that is a hard blocker.

The spec itself is also in flux. dbt Labs recently modernized the MetricFlow YAML spec with the Fusion engine, and the new spec is coming to dbt Core in version 1.12. dbt Labs has also joined the Open Semantic Interchange initiative alongside Snowflake, Salesforce, BlackRock, and others to work toward an open standard, though no engine is fully OSI compliant yet. Teams investing heavily in the dbt Cloud semantic layer today should be aware that the spec is still evolving.

Datacoves does not lock teams into a single semantic layer approach. Depending on your warehouse and BI stack, you can use Snowflake Semantic Views via a dbt package, Cube.dev, Lightdash, or Omni. All options run inside your private environment, with no query data passing through third-party servers.

Because Datacoves runs dbt Core, teams can adopt MetricFlow natively when dbt Core 1.12 ships the new spec. No migration friction, no proprietary hosting layer to work around, and no metered query limits to plan around.

The OSI standard is still developing. Until compliance is widespread across tools, flexibility is the lower-risk position. Datacoves gives you that flexibility without requiring a bet on any single vendor's implementation.

dbt Core generates documentation automatically from your project: model descriptions, column definitions, tests, and a DAG showing upstream and downstream dependencies. You run dbt docs generate to build the static site and dbt docs serve to view it locally.

The limitation is hosting. dbt Core produces a static artifact. Your team is responsible for serving it somewhere accessible, keeping it updated after each run, and managing access controls. Many teams end up with stale docs because the pipeline to publish and refresh them is never properly automated. As projects grow across multiple teams and hundreds of models, the static site format also becomes a constraint. Navigation slows down, search is limited, and there is no real multi-project support.

dbt Cloud hosts your documentation automatically and updates it after each production run. On Starter plans, teams get dbt Catalog rather than the static dbt Docs experience. The features that matter most at enterprise scale, including column-level lineage, multi-project lineage, and project recommendations, are gated behind the Enterprise plan.

It is also worth noting that Snowflake now provides native lineage including column-level lineage directly in the platform, which covers a significant portion of what teams historically needed a separate docs tool to provide.

Datacoves automates documentation generation and hosting as part of the CI/CD pipeline. Docs are updated on every merge without manual intervention, and the hosted site is available to your full team inside your private environment at no additional cost.

For teams that have outgrown the static dbt docs experience, Datacoves also offers TributaryDocs. Unlike the default dbt docs site, TributaryDocs is a client-server application, which means it scales to enterprise-sized projects without the performance and navigation limitations of a static site. It includes an MCP server, enabling AI tools to query your documentation directly and making your data catalog part of your AI-assisted development workflow.

Datacoves customers can also connect external catalogs like Alation or Atlan, or use the catalog built into their warehouse. Snowflake, for example, includes native column-level lineage directly in the platform.

dbt Core produces a set of artifacts after every run: manifest.json, catalog.json, and run_results.json. These files contain your full project metadata and are the foundation for any custom tooling, observability integrations, or downstream automation you want to build.

Because dbt Core is open source, you have complete access to these artifacts and full control over how you use them. The tradeoff is that everything is self-managed. Parsing artifacts, building pipelines around them, and integrating with other systems requires custom engineering work that your team owns and maintains.

dbt Cloud exposes a set of APIs including the Discovery API for metadata queries, the Administrative API for managing jobs and environments, and webhooks for event-driven automation. These are well-documented and cover most standard integration scenarios.

The limitations show up at the edges. CI/CD integrations are constrained to supported Git providers. Some API capabilities are plan-gated, with full access requiring Enterprise. Teams building complex internal tooling or integrating with systems outside dbt's supported ecosystem may find the platform less flexible than working directly with dbt Core artifacts.

Datacoves runs dbt Core, so all native artifacts are available with no restrictions. Teams can build against manifest.json and run_results.json directly, integrate with any internal system, and use any CI tool or Git provider without platform constraints.

Datacoves also provides a dbt API that enables pushing and pulling artifacts programmatically. This is particularly useful for slim CI, where only changed models are tested, and for deferral, where development runs reference production state without rebuilding the entire project.

On the orchestration side, Datacoves exposes the Airflow API, giving teams full programmatic control over their pipelines. This enables event-driven architectures using Airflow datasets, where DAGs trigger based on data availability rather than fixed schedules. Datacoves also uses run_results.json within Airflow to enable retries from the point of failure, so when a model fails mid-run, the DAG resumes from that model rather than restarting the entire pipeline.

For teams that want API-driven metadata beyond what dbt Core artifacts provide, TributaryDocs exposes an MCP server that makes your documentation and lineage queryable by AI tools and external systems.

dbt Core has no built-in AI capabilities. Teams can integrate any AI tool they choose by connecting it to their local development environment. VS Code extensions like GitHub Copilot, Cursor, or any MCP-compatible client can work alongside dbt Core projects with full access to your codebase.

The flexibility is real, but so is the setup overhead. Each developer configures their own AI tooling independently, which means inconsistent experiences across the team and no centralized control over which models or providers are in use.

dbt Cloud includes dbt Copilot, an AI assistant built into the Cloud IDE. Copilot can generate documentation, tests, semantic models, and SQL based on the context of your dbt project. It is generally available on Enterprise plans and available in limited form on Starter.

The constraint is that Copilot is tied to OpenAI. Teams cannot bring their own LLM or route requests through their own Azure OpenAI instance unless they are on Enterprise and configure bring-your-own-key. Usage is also metered: 100 actions per month on Developer, 5,000 on Starter, and 10,000 on Enterprise. dbt Cloud also provides its own MCP server for integrating dbt context into AI workflows, but does not support connecting arbitrary third-party MCP servers within the platform. For organizations with strict data governance policies around which AI providers can touch their code and metadata, the lack of model choice is a hard limitation.

Datacoves supports any LLM your organization has approved. Teams can connect Anthropic, OpenAI, Azure OpenAI, GitHub Copilot, or Snowflake Cortex CLI directly to the VS Code environment without platform restrictions. Snowflake Cortex CLI also supports skills, enabling teams to build custom AI-powered workflows grounded in their warehouse data. There are no metered AI actions and no dependency on a single provider.

Because Datacoves provides VS Code in the browser, teams can configure any MCP server alongside their dbt project, not just a single platform-provided one. This means connecting Snowflake's MCP server, TributaryDocs' MCP server, or any other MCP-compatible tool is a configuration choice, not a platform constraint.

For organizations in regulated industries where AI provider choice is a compliance requirement, the bring-your-own-LLM architecture is not a nice-to-have. It is a prerequisite.

dbt Core has no built-in security controls. All security decisions sit with your team: where the environment runs, how credentials are managed, who has access, and how secrets are stored. For teams with the engineering capacity to implement this properly, that is complete flexibility. For everyone else, it is undifferentiated heavy lifting.

The most common gaps are secrets management, environment isolation, and consistent access controls across developers. These are solvable problems, but solving them requires deliberate investment and ongoing maintenance.

dbt Cloud is a SaaS product. Your data stays in your warehouse, but your code, metadata, and credentials pass through dbt Labs infrastructure. For many teams that is an acceptable tradeoff. For organizations in regulated industries such as pharma, healthcare, finance, and government, it often is not.

dbt Cloud offers SSO, role-based access control, and SOC 2 Type II compliance. PrivateLink and IP restrictions are available, but only on Enterprise+ plans. Teams that need their entire development and orchestration environment to remain inside their own network perimeter will find that dbt Cloud cannot meet that requirement regardless of plan.

Datacoves can be deployed in your private cloud account. Your code, your credentials, your metadata, and your pipeline execution all stay inside your own network. There is no VPC peering required and no data transiting a third-party SaaS environment.

Datacoves integrates with your existing identity provider via SSO and SAML, connects to your secrets management system such as AWS Secrets Manager, and supports your organization's logging and audit requirements. Security controls are not bolt-ons, they are part of the deployment architecture from day one.

For organizations in regulated industries, this is the architecture that passes security reviews without exceptions. You are not asking your security team to approve a SaaS vendor touching your pipeline. You are showing them that everything runs in your own account, under your own controls.

dbt Core is free. The cost is everything around it. A team that builds its own platform on dbt Core needs to provision and maintain developer environments, stand up and operate Airflow, build CI/CD pipelines, manage secrets, handle upgrades, and onboard every new developer into a custom setup.

That work falls on your most senior engineers. It is not a one-time cost. Every version upgrade, every new team member, and every incident that traces back to environment inconsistency is time your team is not spending on data products. Open source looks free the way a free puppy looks free.

dbt Cloud starts at $100 per developer seat per month on the Starter plan, capped at five developers. Full enterprise capabilities require an Enterprise contract with custom pricing. Semantic Layer usage is metered separately. Copilot usage is metered separately. Teams that grow beyond five developers or need features like multi-project lineage, column-level lineage, or advanced CI/CD will find that the total bill looks very different from the entry price.

There is also an indirect cost. dbt Cloud covers transformation and scheduling, but it does not cover orchestration of the broader pipeline. Teams still need to run and maintain Airflow or another orchestrator alongside it, which means the dbt Cloud platform cost is only part of the picture.

Datacoves provides the full environment: VS Code, dbt Core, Airflow, CI/CD, secrets management, documentation hosting, and governance guardrails. There is no separate orchestration bill, no environment infrastructure to maintain, and no platform engineering team required to keep it running.

Onboarding a new developer takes minutes, not days. Datacoves customers report reducing onboarding time by approximately 30 hours per developer. At scale, across a team of 20 or 30 engineers, that compounds quickly.

The right comparison is not Datacoves versus dbt Cloud's license fee. It is Datacoves versus the total cost of dbt Cloud plus Airflow infrastructure plus the engineering time to build and maintain the environment around them.

Most comparisons of dbt Core and dbt Cloud treat the choice as binary. It is not.

dbt Core gives you full control and zero cost, but leaves your team responsible for building and maintaining everything around it. dbt Cloud removes that burden but constrains your tooling, your security posture, and your budget as you scale. Both options make tradeoffs that many enterprise teams cannot accept.

The third option is a managed dbt platform that runs in your own cloud, on your own terms.

A managed dbt platform provides the operational simplicity of dbt Cloud with the flexibility and security of dbt Core, deployed in your own private cloud.

Datacoves delivers the operational simplicity of dbt Cloud without the SaaS architecture, the vendor lock-in, or the platform constraints. Your team gets a fully configured environment from day one: VS Code in the browser, dbt Core, managed Airflow, CI/CD pipelines, secrets management, and governance guardrails, all running inside your private cloud account.

You keep full ownership of your code and your data. You choose your warehouse, your Git provider, your CI tool, your LLM, and your BI stack. When your requirements change, the platform adapts. There is no migration to a new vendor and no renegotiation of what the platform will and will not support.

For enterprise teams in regulated industries, for organizations that have outgrown dbt Cloud's constraints, and for data leaders who want the best-practice foundation of a managed platform without surrendering control, Datacoves is the path that does not require a compromise.

Datacoves doesn't replace your tools. It gives them a proper home.

The right choice depends on your team's size, security requirements, and how much of the platform you want to own.

Choose dbt Core if:

Choose dbt Cloud if:

Choose Datacoves if:

If you are evaluating dbt Core and dbt Cloud and neither feels quite right, that is usually a signal. Most enterprise teams do not lack good tools. They lack a proper platform to run them in.

Ready to see how Datacoves works in your environment?

Book a demo to walk through the platform with a Datacoves expert.