Organizations often opt for open-source tools because "free" seems like an easy decision, especially compared to the higher price of managed versions of the same tooling. However, as with many things, there is no such thing as a free lunch. It is easy to say that Airflow and dbt cost $0 and are therefore the cost-saving choice, but hidden expenses that are hard to ignore quickly reveal themselves.

dbt Core and Apache Airflow are a natural pair in modern data analytics. dbt Core simplifies SQL-based data transformations, empowering data teams to create and maintain clean, well-documented, structured pipelines. Apache Airflow takes care of orchestrating these workflows, automating the movement and processing of data through the data engineering life cycle. Together, they can drive a powerful analytics stack that’s flexible and scalable—when used correctly. But this flexibility often comes at a price.

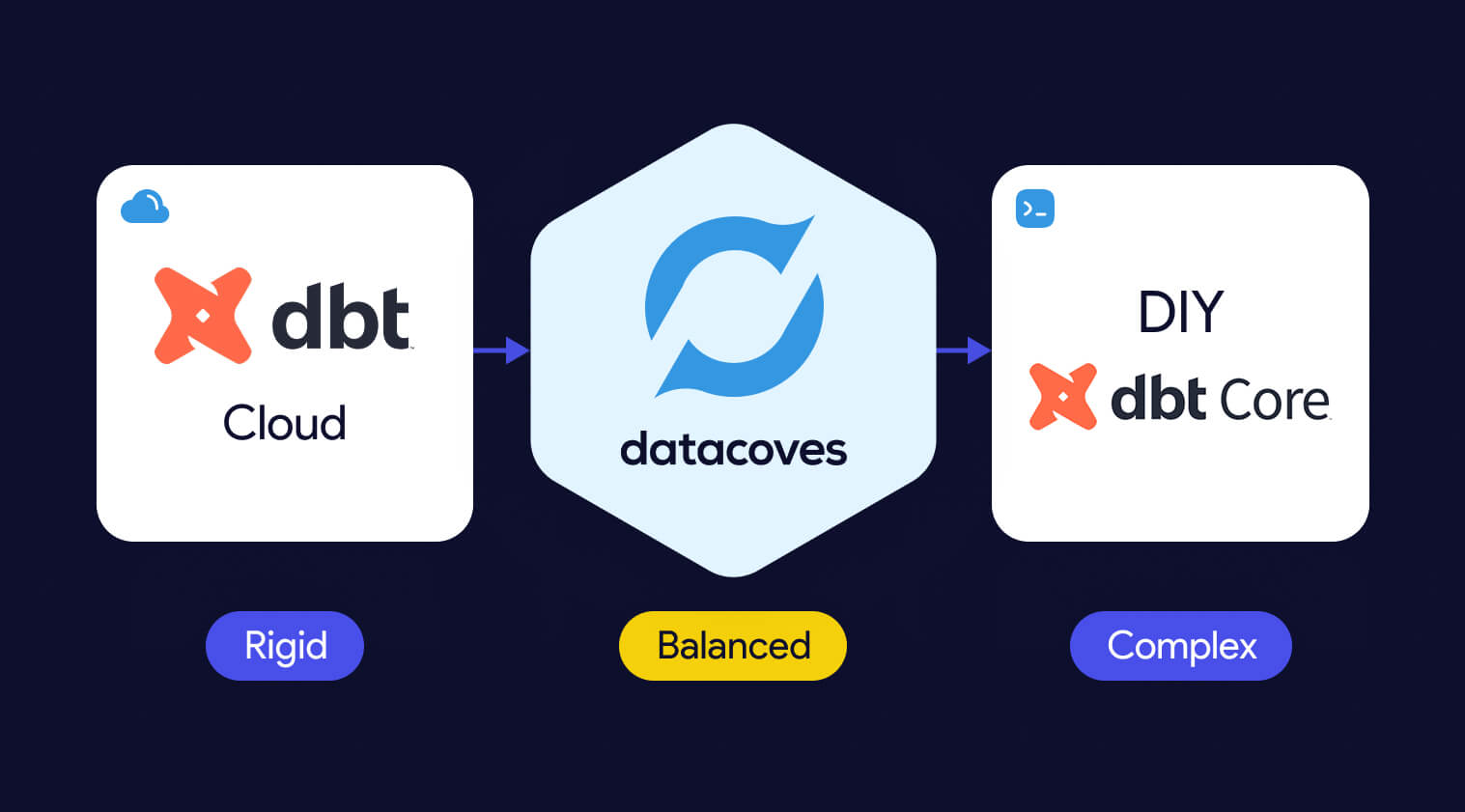

In this article, we’ll examine the build vs. buy dilemma, highlighting the flexibility and true costs of open-source tools like dbt Core and Apache Airflow. We’ll also compare them to the pricing of managed solutions such as dbt Cloud and Datacoves, providing the insights you need to evaluate the trade-offs and choose the best option for your organization.

The open-source tool dbt is free to download and use. However, the actual cost emerges when considering the technical resources required for effective implementation and management. Tasks such as setting up infrastructure, ensuring scalability, and maintaining the tool demand skilled engineers.

Assuming a team of 2–4 engineers is responsible for these tasks, with annual salaries ranging from $120,000 to $160,000 (approximately $10,000 to $13,000 per month), even dedicating 25–50% of their time to managing dbt Core results in a monthly cost of $5,000 to $26,000. As your use of dbt scales, you may need to hire a dedicated team to manage the open-source solution full-time, leading to costs equating to 100% of their combined salaries.
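The arithmetic behind that range can be sketched in a few lines. The function name and its parameters are illustrative assumptions; the inputs are the figures quoted above.

```python
def monthly_labor_cost(engineers, monthly_salary, time_share):
    """Monthly cost of the engineering time spent maintaining a tool."""
    return engineers * monthly_salary * time_share

# Low end: 2 engineers at about $10,000/month, spending 25% of their time
low = monthly_labor_cost(2, 10_000, 0.25)
# High end: 4 engineers at about $13,000/month, spending 50% of their time
high = monthly_labor_cost(4, 13_000, 0.50)

print(f"${low:,.0f} to ${high:,.0f} per month")
```

Setting `time_share` to 1.0 models the dedicated-team scenario, where the cost equals the full combined salaries.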

So we can begin to see the true price of open-source dbt, especially at scale. In addition to engineering labor, there are other costs, such as the time and effort required to maintain and scale the platform. More on that later.

On engineering costs alone, we can begin to compare the open-source and managed solutions. dbt Labs offers a hosted solution, dbt Cloud, with added features and tiered pricing options.

Opting for a managed solution allows your organization to cut engineering costs or free your engineers to focus on other projects. However, while dbt Cloud reduces the infrastructure burden somewhat, it only covers the T of ELT. You still need engineers to manage the other pieces of the stack, which can result in a disconnected data pipeline.

It is worth noting that some companies choose dbt Cloud for its scheduler, which can quickly become limiting as workflows grow more complex. The next step is typically a full-fledged orchestrator such as Airflow.

Just like dbt Core, Apache Airflow is also free to use, but the true cost comes from deploying and maintaining it securely and at scale, which requires significant expertise, particularly in areas like Kubernetes, dependency management, and high-availability configurations.

Assuming 2–4 engineers with annual salaries between $130,000 and $170,000 (around $11,000 to $14,000 per month) dedicate 25–50% of their time to Airflow, the monthly cost ranges from $5,500 to $28,000. The pattern we saw with dbt Core rings true here as well. As your workflows grow, hiring a dedicated team to manage Airflow becomes necessary, leading to costs equating to 100% of their salaries.

For teams looking to sidestep the complexities of managing Airflow in-house, managed solutions provide an appealing alternative:

A managed Airflow solution typically costs between $5,000 and $15,000 per year, depending on workload, resource requirements, and the number of Airflow instances. By choosing a managed solution, organizations can save on infrastructure maintenance and reduce the operational stress that comes with it.
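To put the two Airflow figures on the same footing, the self-managed labor estimate can be annualized and set next to the managed fee. This is a rough sketch using only the numbers quoted above; the function name is an illustrative assumption, and the comparison ignores any engineering time a managed service still requires.

```python
def annual_labor_cost(engineers, monthly_salary, time_share):
    """Yearly cost of the engineering time spent running Airflow in-house."""
    return engineers * monthly_salary * time_share * 12

# Self-managed: 2 to 4 engineers, $11,000 to $14,000/month, 25% to 50% of their time
self_managed_low = annual_labor_cost(2, 11_000, 0.25)
self_managed_high = annual_labor_cost(4, 14_000, 0.50)

# Managed Airflow fee range per year, as quoted above
managed_low, managed_high = 5_000, 15_000

print(f"Self-managed labor: ${self_managed_low:,.0f} to ${self_managed_high:,.0f} per year")
print(f"Managed fee:        ${managed_low:,.0f} to ${managed_high:,.0f} per year")
```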

Setting up and managing infrastructure for Airflow and dbt Core isn’t as straightforward, or as “free”, as it might seem. The day-to-day work of managing Python virtual environments, keeping dependencies in check, and tackling scaling challenges requires ongoing expertise and attention. On top of salaries and benefits, what starts as an open-source experiment can quickly morph into significant operational overhead full of hidden costs. Let’s look at three areas: time and expertise, security and compliance, and scaling complexity. If overlooked, each can lead to side effects such as extended downtime and security issues.

The time it takes to configure, customize, and maintain a complex open-source solution is often underestimated. It’s not until your team is deep in the weeds (resolving issues, figuring out integrations, and troubleshooting configurations) that the actual costs start to surface. With each passing day, your ROI is threatened. You want to start gathering insights from your data as soon as possible. For comparison, Datacoves helped Johnson and Johnson set up their data stack in weeks.

And then there’s the learning curve. Not all engineers on your team will be senior, and turnover is inevitable. New hires will need time to get up to speed before they can contribute effectively. This is the human side of technology: while the tools themselves might move fast, people don’t. That ramp-up period, filled with training and trial-and-error, represents yet another hidden cost.

Security and compliance add another layer of complexity. With open-source tools, your team is responsible for implementing best practices, such as securely managing sensitive credentials with a solution like AWS Secrets Manager. Unlike with managed solutions, these features don’t come prepackaged and need to be built and integrated with the system.

Compliance is no different. Ensuring your solution meets enterprise governance requirements takes time, research, and careful implementation. It’s a process of iteration and refinement, and every hour spent here is another hidden cost. Done incorrectly, it also puts security at risk.

Scaling open-source tools is where things often get complicated. Beyond everything already mentioned, your team will need to ensure the solution can handle growth. For many organizations, this means deploying on Kubernetes. But with Kubernetes come steep learning curves and operational challenges. Making sure a knowledgeable engineer is always available to handle unexpected issues and downtime can become a challenge. Extended downtime is a hidden cost in itself, since business users who have come to rely on your insights are directly impacted.

Throughout this article, we have uncovered the true costs of open-source tools, bringing us to the critical decision between building in-house or buying a managed solution. Even with the actual cost of open source uncovered, the decision isn’t just about price. It’s also about the flexibility a custom build offers.

Managed solutions often adopt a one-size-fits-all approach designed to attract the widest range of customers. While this can simplify implementation for many organizations, it may not always meet the specific needs of your team. To make an informed decision, let’s examine the key advantages and challenges of each approach.

Pros:

Cons:

Example:

A team building Airflow in-house may spend weeks configuring a Kubernetes-backed deployment, managing Python dependencies, and setting up DAG file synchronization via S3 or Git. While the outcome can be tailored to their needs, the time and expertise required represent a significant investment.

Pros:

Cons:

Example:

Using a solution like MWAA, teams can leverage managed Airflow and stop worrying about infrastructure. However, it may lack the flexibility or interoperability they need with other parts of their stack.

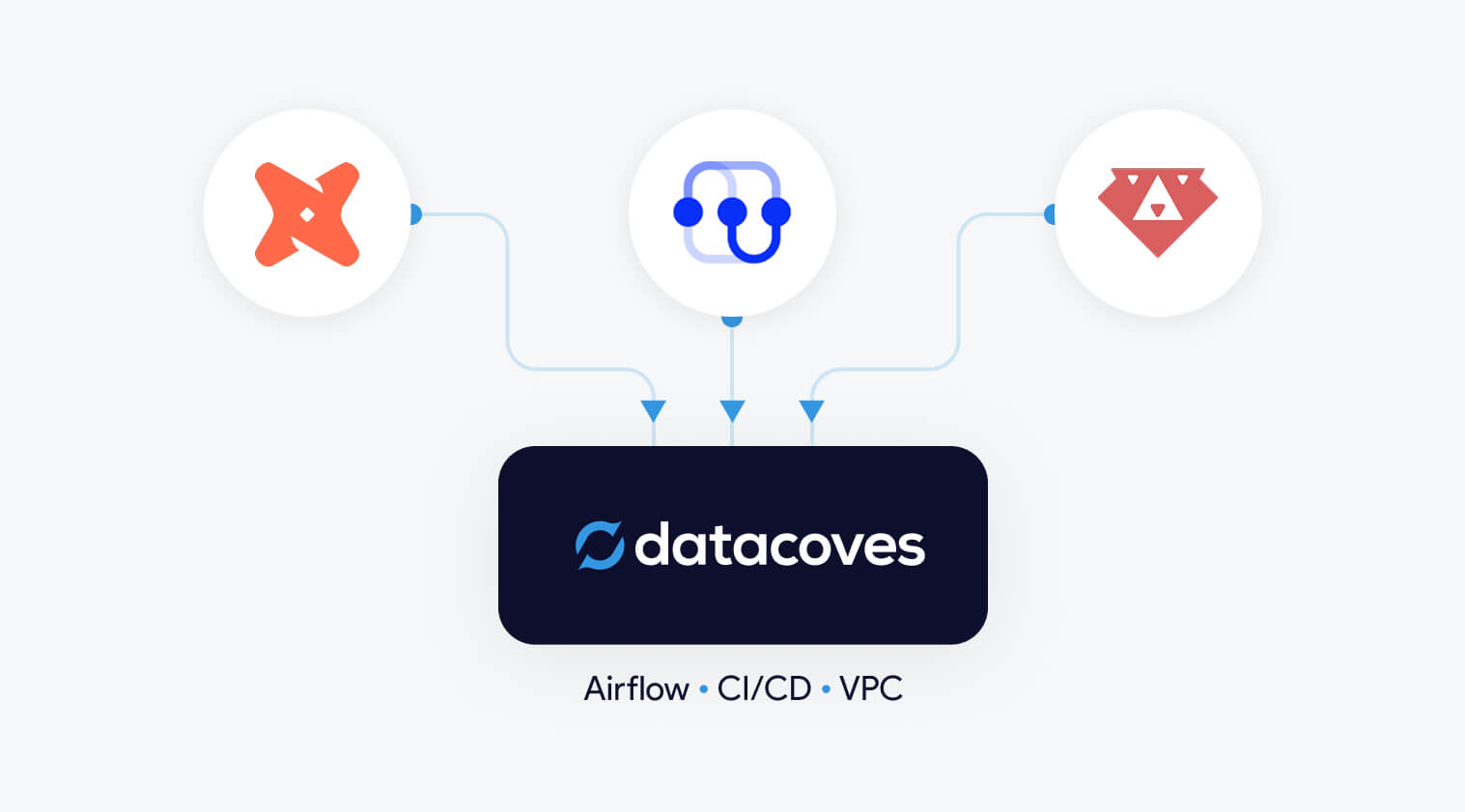

By contrast, using a solution like Datacoves, teams can leverage managed Airflow and pre-configured environments for dbt Core. This eliminates the need for infrastructure setup, simplifies day-to-day operations, and allows teams to focus on deriving value from their analytics, not maintaining the tools that support them.

There is no universal right answer to the build vs. buy dilemma—every use case is unique. However, it’s important to recognize that many problems have already been solved. Unless there is a compelling reason to reinvent the wheel, leveraging existing solutions can save time, money, and effort.

In Fundamentals of Data Engineering, Joe Reis and Matt Housley emphasize the importance of focusing on delivering insights rather than getting entangled in the complexities of building and maintaining data infrastructure. They advocate for using existing solutions wherever possible to streamline processes and allow teams to concentrate on extracting value from data. The key question to ask is: Will building this solution provide your organization with a competitive edge? If the answer is no, it’s worth seeking out an existing solution that fits your needs. Managed platforms can reduce the need for dedicated personnel as we saw above and provide predictable costs, making them an attractive option for many teams.

This philosophy underpins why we built Datacoves. We believe data teams shouldn’t be bogged down by the operational complexities of tools like dbt and Airflow. We also believe that data teams should have access to the flexibility that a custom-built solution offers. Datacoves offers the flexibility these tools are known for while removing the infrastructure burden, enabling your team to focus on what really matters: generating actionable insights that drive your organization forward.

Datacoves delivers the best of both worlds: the flexibility of a custom-built open-source solution combined with the rich features and zero-infrastructure maintenance of a managed platform—all with minimal vendor lock-in. How does Datacoves achieve this? By focusing on open-source tools and eliminating the burden of maintenance. Datacoves has already done the challenging work of identifying the best tools for the job, configuring them to work seamlessly together, and optimizing performance.

With Datacoves, your team can stop worrying about infrastructure and focus entirely on generating insights. The platform includes bundled in-browser VS Code, dbt Core, and Python extensions, alongside ready-to-use virtual environments tailored to analytics needs. Add to this a fully managed Airflow experience, and you have a solution where the code remains yours, but the operational headaches are gone.

Datacoves has enhanced Airflow with features designed to make DAG development more intuitive and enjoyable:

One of the key benefits of Datacoves is the elimination of hidden costs through its all-in-one platform approach. Teams often realize too late that piecing together the modern data stack—combining open-source tools, hosting solutions, and server infrastructure—results in unpredictable costs. A single misstep in configuration can lead to high cloud bills.

Datacoves removes the guesswork. Its optimized infrastructure provides predictable billing for non-variable services, along with clear guidelines for variable costs. By implementing best practices and optimizations, Datacoves ensures that your costs remain as low as possible without sacrificing performance.

Datacoves makes it easier for teams to harness the power of open-source tools like dbt and Airflow, without the operational burden. From simplifying complex workflows to delivering enterprise-grade infrastructure and predictable costs, Datacoves empowers teams to focus on what matters most: driving insights and business value.

Open-source tools like Airflow are incredibly powerful, offering flexibility and extensibility that modern analytics teams need. However, as we have seen, the initial promise of "free" tools does not hold up. There are direct costs in the form of salaries and benefits, and the hidden costs of implementation, scaling, and long-term maintenance are very real and expensive. Paid solutions exist for a reason, and finding the one that best suits your needs is essential.

If your team is looking to scale its analytics stack without the operational burden of managing open-source tools, Datacoves offers the perfect balance of flexibility, simplicity, and cost-efficiency. Explore Datacoves to learn more about our all-in-one platform for dbt Core and Airflow or check out our case studies and testimonials to see how other teams have accelerated their analytics engineering journey with Datacoves.

Any experienced data engineer will tell you that efficiency and resource optimization are always top priorities. One powerful feature that can significantly optimize your dbt CI/CD workflow is dbt Slim CI. However, despite its benefits, some limitations have persisted. Fortunately, the recent addition of the --empty flag in dbt 1.8 addresses these issues. In this article, we will share a GitHub Actions workflow and demonstrate how the new --empty flag can save you time and resources.

dbt Slim CI is designed to make your continuous integration (CI) process more efficient by running only the models that have been changed and their dependencies, rather than running all models during every CI build. In large projects, this feature can lead to significant savings in both compute resources and time.

dbt Slim CI is implemented efficiently using these flags:

--select state:modified: The state:modified selector chooses the models whose "state" has changed (been modified) so they are included in the run/build. Adding the + suffix, as in state:modified+, tells dbt to run the modified models together with their downstream dependencies.

--state <path to production manifest>: The --state flag specifies the directory where the artifacts from a previous dbt run are stored, i.e., the production dbt manifest. By comparing the current branch's manifest with the production manifest, dbt can identify which models have been modified.

--defer: The --defer flag tells dbt to pull upstream models that have not changed from a different environment (database). Why rebuild something that exists somewhere else? For this to work, dbt will need access to the dbt production manifest.

One additional flag is commonly included in the Slim CI command alongside these.

--fail-fast: The --fail-fast flag is an example of an optimization flag that is not essential to a barebones Slim CI but can provide powerful cost savings. This flag stops the build as soon as an error is encountered instead of allowing dbt to continue building downstream models, thereby reducing wasted builds. To learn more about these arguments, have a look at our dbt cheatsheet.
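Put together, the flags described above form a single dbt invocation. The following is a minimal sketch that assembles it as an argument list; the helper name and the `logs` directory holding the production manifest are assumptions for illustration, not part of any particular workflow.

```python
import shlex

def slim_ci_command(state_dir, fail_fast=True):
    """Assemble a Slim CI `dbt build` command from the flags described above."""
    cmd = ["dbt", "build"]
    if fail_fast:
        cmd.append("--fail-fast")          # stop at the first error
    cmd += [
        "--select", "state:modified+",     # modified models plus downstream
        "--defer",                         # resolve unchanged upstream models elsewhere
        "--state", state_dir,              # directory with the production manifest
    ]
    return cmd

# "logs" is a hypothetical location for the downloaded production artifacts
command = slim_ci_command("logs")
print(shlex.join(command))
```

Building the command as a list rather than a string keeps it safe to pass to `subprocess.run` without shell quoting concerns.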

The following sample GitHub Actions workflow is executed when a Pull Request is opened, i.e., when you have a feature branch that you want to merge into main.

Checkout Branch: The workflow begins by checking out the branch associated with the pull request to ensure that the latest code is being used.

Set Secure Directory: This step ensures the repository directory is marked as safe, preventing potential issues with Git operations.

List of Files Changed: This command lists the files changed between the PR branch and the base branch, providing context for the changes, which is helpful for debugging.

Install dbt Packages: This step installs all required dbt packages, ensuring the environment is set up correctly for the dbt commands that follow.

Create PR Database: This step creates a dedicated database for the PR, isolating the changes and tests from the production environment.

Get Production Manifest: Retrieves the production manifest file, which will be used for deferred runs and governance checks in the following steps.

Run dbt Build in Slim Mode or Run dbt Build Full Run: If a manifest is present in production, dbt will be run in slim mode with deferred models. This build includes only the modified models and their dependencies. If no manifest is present in production, we will do a full run.

Grant Access to PR Database: Grants the necessary access to the new PR database for end user review.

Generate Docs Combining Production and Branch Catalog: If a dbt test is added to a YAML file, the model will not be run, meaning it will not be present in the PR database. However, governance checks (dbt-checkpoint) will need the model in the database for some checks and if not present this will cause a failure. To solve this, the generate docs step is added to merge the catalog.json from the current branch with the production catalog.json.

Run Governance Checks: Executes governance checks such as SQLFluff and dbt-checkpoint.
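The choice between the slim and full build in the steps above comes down to whether a production manifest was retrieved. A minimal sketch of that decision follows; the function name is hypothetical, and the check simply looks for a `manifest.json` file in the state directory.

```python
import tempfile
from pathlib import Path

def choose_build_command(state_dir):
    """Return a slim build if a production manifest exists, else a full run."""
    manifest = Path(state_dir) / "manifest.json"
    if manifest.is_file():
        # Slim mode: only modified models and their downstream dependencies
        return ["dbt", "build", "--fail-fast",
                "--select", "state:modified+",
                "--defer", "--state", str(state_dir)]
    # No production manifest to compare against: build everything
    return ["dbt", "build", "--fail-fast"]

with tempfile.TemporaryDirectory() as tmp:
    print(choose_build_command(tmp))                 # no manifest yet: full run
    (Path(tmp) / "manifest.json").write_text("{}")
    print(choose_build_command(tmp))                 # manifest present: slim run
```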

As mentioned at the beginning of the article, there is a limitation to this setup. In the existing workflow, governance checks need to run after the dbt build step. This is because dbt-checkpoint relies on the manifest.json and catalog.json. However, if these governance checks fail, the dbt build step will need to run again once the governance issues are fixed. As shown in the diagram below, after running our dbt build, we proceed with governance checks. If these checks fail, we need to resolve the issue and re-trigger the pipeline, leading to another dbt build. This cycle can lead to unnecessary model builds even when leveraging dbt Slim CI.

The solution to this problem is the --empty flag in dbt 1.8. This flag allows dbt to perform schema-only dry runs without processing large datasets. It's like building the wooden frame of a house—it sets up the structure, including the metadata needed for governance checks, without filling it with data. The framework is there, but the data itself is left out, enabling you to perform governance checks without completing an actual build.

Let’s see how we can rework our GitHub Actions workflow:

Checkout Branch: The workflow begins by checking out the branch associated with the pull request to ensure that the latest code is being used.

Set Secure Directory: This step ensures the repository directory is marked as safe, preventing potential issues with Git operations.

List of Files Changed: This step lists the files changed between the PR branch and the base branch, providing context for the changes, which is helpful for debugging.

Install dbt Packages: This step installs all required dbt packages, ensuring the environment is set up correctly for the dbt commands that follow.

Create PR Database: This command creates a dedicated database for the PR, isolating the changes and tests from the production environment.

Get Production Manifest: Retrieves the production manifest file, which will be used for deferred runs and governance checks in the following steps.

*NEW* Governance Run of dbt (Slim or Full) with EMPTY Models: If there is a manifest in production, this step runs dbt in slim mode with the --empty flag. The models are built in the PR database with no data inside, and we can now use the catalog.json to run our governance checks. Since the models are empty and we have everything we need to run our checks, we save on compute costs as well as run time.

Generate Docs Combining Production and Branch Catalog: If a dbt test is added to a YAML file, the model will not be run, meaning it will not be present in the PR database. However, governance checks (dbt-checkpoint) will need the model in the database for some checks and if not present this will cause a failure. To solve this, the generate docs step is added to merge the catalog.json from the current branch with the production catalog.json.

Run Governance Checks: Executes governance checks such as SQLFluff and dbt-checkpoint.

Run dbt Build: Runs dbt build using either slim mode or full run after passing governance checks.

Grant Access to PR Database: Grants the necessary access to the new PR database for end user review.
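The ordering of the dbt-related steps above can be sketched as a plan of commands. This is an illustrative outline only: the function name, the `logs` state directory, the use of `dbt docs generate` for the docs step, and `pre-commit run --all-files` as the governance runner are assumptions, and the catalog merge is not shown.

```python
def ci_steps(has_prod_manifest, state_dir="logs"):
    """Ordered CI plan: empty build, docs, governance checks, then the real build."""
    # Slim CI selection flags only apply when a production manifest exists
    slim = (
        ["--select", "state:modified+", "--defer", "--state", state_dir]
        if has_prod_manifest
        else []
    )
    return [
        # Schema-only build: creates the models without processing data
        ("empty build", ["dbt", "build", "--empty", "--fail-fast", *slim]),
        # Produces the catalog.json that the governance checks rely on
        ("generate docs", ["dbt", "docs", "generate"]),
        # Assumed runner for SQLFluff and dbt-checkpoint hooks
        ("governance checks", ["pre-commit", "run", "--all-files"]),
        # The only step that processes real data, reached after checks pass
        ("real build", ["dbt", "build", "--fail-fast", *slim]),
    ]

for name, command in ci_steps(has_prod_manifest=True):
    print(f"{name}: {' '.join(command)}")
```

A runner would execute these in order and stop at the first failure, so a failed governance check never reaches the real build.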

By leveraging the dbt --empty flag, we can materialize models in the PR database without the computational overhead, as the actual data is left out. We can then use the metadata that was generated during the empty build. If any checks fail, we can repeat the process without worrying about wasting computational resources on an actual build. The cycle still exists, but we have moved our real build outside of it and replaced it with an empty, or fake, build. Once all governance checks have passed, we can proceed with the real dbt build of the dbt models as seen in the diagram below.
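The saving can be made concrete with a simple count. The sketch below assumes every failed governance check triggers one more pipeline run and that the real build itself succeeds; the function name is illustrative.

```python
def build_counts(governance_failures, use_empty_flag):
    """Count empty and real builds across all pipeline runs for one pull request."""
    pipeline_runs = governance_failures + 1   # each failure is retried, plus the passing run
    if use_empty_flag:
        # Every run does a cheap empty build; only the passing run does a real one
        return {"empty": pipeline_runs, "real": 1}
    # Without --empty, every run pays for a real build before the checks
    return {"empty": 0, "real": pipeline_runs}

print(build_counts(3, use_empty_flag=False))
print(build_counts(3, use_empty_flag=True))
```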

dbt Slim CI is a powerful addition to the dbt toolkit, offering significant benefits in terms of speed, resource savings, and early error detection. However, we still faced the issue of wasted model builds when governance checks failed. By incorporating dbt 1.8’s --empty flag into your CI/CD workflows, you can reduce wasted model builds to zero, improving the efficiency and reliability of your data engineering processes.

🔗 Watch the video where Noel explains the --empty flag implementation in GitHub Actions:

Jinja templating in dbt offers flexibility and expressiveness that can significantly improve SQL code organization and reusability. There is a learning curve, but this cheat sheet is designed to be a quick reference for data practitioners, helping to streamline the development process and reduce common pitfalls.

Whether you're troubleshooting a tricky macro or just brushing up on syntax, bookmark this page. Trust us, it will come in handy and help you unlock the full potential of Jinja in your dbt projects.

If you find this cheat sheet useful, be sure to check out our Ultimate dbt Jinja Functions Cheat Sheet. It covers the specialized Jinja functions created by dbt, designed to enhance versatility and expedite workflows.

This is the foundational syntax of Jinja, from how to comment to the difference between statements and expressions.

Define and assign variables in different data types such as strings, lists, and dictionaries.

Jinja allows fine-grained control over white spaces in compiled output. Understand how to strategically strip or maintain spaces.

In dbt, conditional structures guide the flow of transformations. Grasp how to integrate these structures seamlessly.

Discover how to iterate over lists and dictionaries. Understand simple loop syntax and how to access loop properties.

These logical and comparison operators come in handy, especially when defining tests or setting up configurations in dbt.

Within dbt, you may need to validate whether a variable is defined or whether a value is odd or even. These Jinja variable tests allow you to validate with ease.

Macros are the backbone of advanced dbt workflows. Review how to craft these reusable code snippets and also how to enforce data quality with tests.

Fine-tune your dbt data models with these transformation and formatting utilities.

Please contact us with any errors or suggestions.

Implementing dbt (data build tool) can revolutionize your organization's data maturity. However, if your organization is not ready to take advantage of the benefits of dbt, it might not be the right time to start. Why? Because the success of data initiatives often hinges on aspects beyond the tooling itself.

Many companies rush into implementing dbt without assessing their organization’s maturity, and this leads to poor implementation. A poorly implemented dbt initiative can leave the organization frustrated, overwhelmed with technical debt, and short on resources it has wasted. To avoid these pitfalls and ensure your organization is truly ready for dbt, you should complete an assessment of your organization's readiness by answering the questions presented later in this article.

Before diving into the maturity assessment questions, it’s important to understand what data maturity means. Data maturity is the extent to which an organization can effectively leverage its data to drive business value. It encompasses multiple areas, including:

Data-Driven Culture: Fostering an environment where data is integral to decision-making processes.

Data Quality: Ensuring data is accurate, consistent, and reliable.

Data Governance: Implementing policies and procedures to manage data assets.

Data Integration: Seamlessly combining data from various sources for a unified view.

A mature data organization not only ensures data accuracy and consistency but also embeds data-driven decision-making into its core operations.

By leveraging dbt's features, organizations can significantly enhance their data maturity, leading to better decision-making, improved data quality, robust governance, and seamless integration. For example:

Data-Driven Culture: By using dbt, you can improve many aspects that contribute to creating a data-driven culture within an organization. One way is by encouraging business users to be involved in providing or reviewing accurate model and column descriptions, which are embedded in dbt. You can also involve them in defining what data to test with dbt. Better data quality improves trust in the data, and more trust in the data leads to more frequent use of and reliance on it.

Data Quality and Observability: dbt enables automated testing and validation of data transformations. This ensures data quality by catching issues like schema changes or data anomalies early in the pipeline. As your data quality and data observability needs grow you can assess where you are on the data maturity curve. For example, in a sales data model, we can write tests to ensure there are no negative order quantities and that each order has a valid customer ID. With dbt you can also understand data lineage and this can improve impact and root cause analysis when insights don’t seem quite right.
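The two sales-model checks mentioned above can be illustrated with plain Python. This is not dbt syntax: in a dbt project they would be declared as tests on the model (the customer check, for instance, maps to dbt's built-in relationships test). The sample rows and function names below are made up for illustration.

```python
# Hypothetical sample data
orders = [
    {"order_id": 1, "customer_id": 10, "quantity": 2},
    {"order_id": 2, "customer_id": 11, "quantity": -1},  # negative quantity
    {"order_id": 3, "customer_id": 99, "quantity": 5},   # unknown customer
]
customer_ids = {10, 11}

def negative_quantities(rows):
    """Return the order IDs that violate the 'no negative quantity' rule."""
    return [row["order_id"] for row in rows if row["quantity"] < 0]

def unknown_customers(rows, valid_ids):
    """Return the order IDs whose customer_id has no matching customer."""
    return [row["order_id"] for row in rows if row["customer_id"] not in valid_ids]

print(negative_quantities(orders))              # [2]
print(unknown_customers(orders, customer_ids))  # [3]
```

As with a dbt test, each check returns the failing records, and an empty result means the check passes.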

Data Governance: dbt facilitates version control and documentation for all transformations, enhancing transparency and accountability. Organizations can track changes to data models ensuring compliance with data governance policies.

Data Integration: dbt supports the integration of data from multiple sources by providing a framework for consistent and reusable transformations. This allows for the creation of unified data models that provide a holistic view of business operations.

Now that we understand what data maturity is and how dbt can help improve it, you might be ready to jump on the dbt bandwagon. But first, we encourage you to assess your organization’s readiness for dbt. The journey to data maturity involves not only choosing the right tools but also ensuring that your organization is philosophically and operationally prepared to take full advantage of these tools. It is important to recognize that dbt’s approach requires a shift in mindset towards modern data practices, emphasizing transparency, collaboration, and automation.

To determine if your organization is mature enough for dbt or if dbt is the right fit, consider the following assessment questions:

dbt requires a philosophical alignment with its principles, such as ELT (Extract, Load, Transform) instead of the traditional ETL (Extract, Transform, Load) approach. dbt is also based on idempotency, meaning that given the same input, you will always get the same output. This differs from traditional ETL, which may rely on incompatible constructs such as auto-incrementing primary keys. If your organization prefers processes that are incompatible with dbt’s methodology, you will end up fighting the dbt framework to make it do something it was not intended to do.
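To see why auto-incrementing keys clash with idempotency, compare them with a key derived from the data itself. The sketch below is plain Python rather than dbt code, and the function names and sample values are illustrative assumptions: a hashed key depends only on the business key, while a counter-based key depends on the order in which rows happen to arrive.

```python
import hashlib
from itertools import count

def surrogate_key(*business_key_parts):
    """Deterministic key: the same input values always produce the same key."""
    raw = "|".join(str(part) for part in business_key_parts)
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()

def auto_increment_keys(rows):
    """Counter-based keys: the result depends on row arrival order."""
    counter = count(start=1)
    return {row: next(counter) for row in rows}

customers = ["acme", "globex", "initech"]
reordered = ["globex", "initech", "acme"]   # same data, different load order

hashed_first = {c: surrogate_key(c) for c in customers}
hashed_second = {c: surrogate_key(c) for c in reordered}
print(hashed_first == hashed_second)   # True: rebuilding gives identical keys

seq_first = auto_increment_keys(customers)
seq_second = auto_increment_keys(reordered)
print(seq_first == seq_second)         # False: keys changed between runs
```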

Simply migrating existing processes and code to dbt without rethinking them won’t leverage dbt’s full potential. Assess whether you’re ready to redesign your workflows to take advantage of dbt’s capabilities such as incremental tables, snapshots, seeds, etc.

dbt offers excellent features for data quality and documentation. Evaluate if your team is prepared to prioritize the utilization of these features to enhance transparency and trust in your data. Tests and model descriptions will not write themselves. When it comes to good descriptions, they shouldn't come from a data engineering team that does not know how the data is used or the best data quality rules to implement. Good descriptions must involve business user review at a minimum.

The goal of dbt is to empower various teams including IT and business users by using the same tooling. Consider if your organization is ready to foster this cross-functional collaboration. When you implement dbt correctly, you will empower anyone who knows SQL to contribute. You can have multiple teams contribute to the insight delivery process and still ensure proper governance and testing before updating production.

Automation is key to achieving efficiency with dbt. Implementing automated deployment, testing, and CI/CD pipelines can significantly improve your workflows. If you aren’t ready to automate, the benefits of dbt may not be fully realized. If you simply drop dbt in without thinking about the end-to-end process and its failure points, you will miss opportunities to catch errors. The spaghetti code you have today didn't happen just because you were not using dbt.

dbt is a framework, not a silver bullet. Merely changing tools without altering your underlying processes will not solve existing issues. This is a huge issue with organizations that have not done the work to create a data-driven culture. Assess if your team is ready to adopt better naming conventions and more structured processes to make data more understandable.

Data immaturity might manifest as a reliance on manual processes, lack of data quality controls, or poor documentation practices. These factors can derail the effective implementation of dbt since dbt thrives in environments where data practices are robust and standardized. In other words, dbt alone will not solve these problems.

Ensuring your organization is ready for the changes that come with implementing dbt is not just best practice, it is essential for success. By thoroughly assessing your readiness, you can avoid technical debt, optimize your workflows, and fully harness the power of dbt. Remember, dbt is a powerful tool, but its effectiveness depends on the readiness of your organization to improve data practices and its alignment with dbt’s philosophy.

The top dbt alternatives include Datacoves, SQLMesh, Bruin Data, Dataform, and visual ETL tools such as Alteryx, Matillion, and Informatica. Code-first engines offer stronger rigor, testing, and CI/CD, while GUI platforms emphasize ease of use and rapid prototyping. Teams choose these alternatives when they need more security, governance, or flexibility than dbt Core or dbt Cloud provide.

The top dbt alternatives include Datacoves, SQLMesh, Bruin Data, Dataform, and GUI-based ETL tools such as Alteryx, Matillion, and Informatica.

Teams explore dbt alternatives when they need stronger governance, private deployments, or support for Python and code-first workflows that go beyond SQL. Many also prefer GUI-based ETL tools for faster onboarding. Recent market consolidation, including Fivetran acquiring SQLMesh and merging with dbt Labs, has increased concerns about vendor lock-in, which makes tool neutrality and platform flexibility more important than ever.

Teams look for dbt alternatives when they need stronger orchestration, consistent development environments, Python support, or private cloud deployment options that dbt Cloud does not provide.

Organizations evaluating dbt alternatives typically compare tools across three categories. Each category reflects a different approach to data transformation, development preferences, and organizational maturity.

Organizations consider alternatives to dbt Cloud when they need more flexibility, stronger security, or support for development workflows that extend beyond dbt. Teams comparing platform options often begin by evaluating the differences between dbt Cloud and dbt Core.

Running enterprise-scale ELT pipelines often requires a full orchestration layer, consistent development environments, and private deployment options that dbt Cloud does not provide. Costs can also increase at scale (see our breakdown of dbt pricing considerations), and some organizations prefer to avoid features that are not open source to reduce long-term vendor lock-in.

This category includes platforms that deliver the benefits of dbt Cloud while providing more control, extensibility, and alignment with enterprise data platform requirements.

Datacoves provides a secure, flexible platform that supports dbt, SQLMesh, and Bruin in a unified environment with private cloud or VPC deployment.

Datacoves is an enterprise data platform that serves as a secure, flexible alternative to dbt Cloud. It supports dbt Core, SQLMesh, and Bruin inside a unified development and orchestration environment, and it can be deployed in your private cloud or VPC for full control over data access and governance.

Benefits

Flexibility and Customization:

Datacoves provides a customizable in-browser VS Code IDE, Git workflows, and support for Python libraries and VS Code extensions. Teams can choose the transformation engine that fits their needs without being locked into a single vendor.

Handling Enterprise Complexity:

Datacoves includes managed Airflow for end-to-end orchestration, making it easy to run dbt and Airflow together without maintaining your own infrastructure. It standardizes development environments, manages secrets, and supports multi-team and multi-project workflows without platform drift.

Cost Efficiency:

Datacoves reduces operational overhead by eliminating the need to maintain separate systems for orchestration, environments, CI, logging, and deployment. Its pricing model is predictable and designed for enterprise scalability.

Data Security and Compliance:

Datacoves can be deployed fully inside your VPC or private cloud. This gives organizations complete control over identity, access, logging, network boundaries, and compliance with industry and internal standards.

Reduced Vendor Lock-In:

Datacoves supports dbt, SQLMesh, and Bruin Data, giving teams long-term optionality. This avoids being locked into a single transformation engine or vendor ecosystem.

Running dbt Core yourself is a flexible option that gives teams full control over how dbt executes. It is also the most resource-intensive approach. Teams choosing DIY dbt Core must manage orchestration, scheduling, CI, secrets, environment consistency, and long-term platform maintenance on their own.

Benefits

Full Control:

Teams can configure dbt Core exactly as they want and integrate it with internal tools or custom workflows.

Cost Flexibility:

There are no dbt Cloud platform fees, but total cost of ownership often increases as the system grows.

Considerations

High Maintenance Overhead:

Teams must maintain Airflow or another orchestrator, build CI pipelines, manage secrets, and keep development environments consistent across users.

Requires Platform Engineering Skills:

DIY dbt Core works best for teams with strong Kubernetes, CI, Python, and DevOps expertise. Without this expertise, the environment becomes fragile over time.

Slow to Scale:

As more engineers join the team, keeping dbt environments aligned becomes challenging. Onboarding, upgrades, and platform drift create operational friction.

Security and Compliance Responsibility:

Identity, permissions, logging, and network controls must be designed and maintained internally, which can be significant for regulated organizations.

Teams that prefer code-first tools often look for dbt alternatives that provide strong SQL modeling, Python support, and seamless integration with CI/CD workflows and automated testing. These are part of a broader set of data transformation tools. Code-based ETL tools give developers greater control over transformations, environments, and orchestration patterns than GUI platforms. Below are four code-first contenders that organizations should evaluate.

Code-first dbt alternatives like SQLMesh, Bruin Data, and Dataform provide stronger CI/CD integration, automated testing, and more control over complex transformation workflows.

SQLMesh is an open-source framework for SQL and Python-based data transformations. It provides strong visibility into how changes impact downstream models and uses virtual data environments to preview changes before they reach production. SQLMesh was originally developed by Tobiko Data, which Fivetran acquired in 2025, and the project was donated to the Linux Foundation in March 2026.

Benefits

Efficient Development Environments:

Virtual environments reduce unnecessary recomputation and speed up iteration.

Community Governance Under the Linux Foundation:

In March 2026, Fivetran contributed SQLMesh to the Linux Foundation, establishing an open community governance model. Founding members including Benzinga, CloudKitchens, Harness, and others joined to support its ongoing development. The project remains publicly available on GitHub, which increases its neutrality and long-term independence from any single vendor.

Considerations

Governance Is New:

While Linux Foundation stewardship is a positive signal for openness, the community governance model is still in its early stages. It remains to be seen how active and independent the contributor community will become over time.

Dataform is a SQL-based transformation framework built specifically for BigQuery. It enables teams to create table definitions, manage dependencies, document models, and configure data quality tests inside the Google Cloud ecosystem. It also provides version control and integrates with GitHub and GitLab.

Benefits

Centralized BigQuery Development:

Dataform keeps all modeling and testing within BigQuery, reducing context switching and making it easier for teams to collaborate using familiar SQL workflows.

Considerations

Focused Only on the GCP Ecosystem:

Because Dataform is geared toward BigQuery, it may not be suitable for organizations that use multiple cloud data warehouses.

AWS Glue is a serverless data integration service that supports Python-based ETL and transformation workflows. It works well for organizations operating primarily in AWS and provides native integration with services like S3, Lambda, and Athena.

Benefits

Python-First ETL in AWS:

Glue supports Python scripts and PySpark jobs, making it a good fit for engineering teams already invested in the AWS ecosystem.

Considerations

Requires Engineering Expertise:

Glue can be complex to configure and maintain, and its Python-centric approach may not be ideal for SQL-first analytics teams.

Bruin is a modern SQL-based data modeling framework designed to simplify development, testing, and environment-aware deployments. It offers a familiar SQL developer experience while adding guardrails and automation to help teams manage complex transformation logic.

Benefits

Modern SQL Modeling Experience:

Bruin provides a clean SQL-first workflow with strong dependency management and testing.

Considerations

Growing Ecosystem:

Bruin is newer than dbt and has a smaller community and fewer third-party integrations.

While code-based transformation tools provide the most flexibility and long-term maintainability, some organizations prefer graphical user interface (GUI) tools. These platforms use visual, drag-and-drop components to build data integration and transformation workflows. Many of these platforms fall into the broader category of no-code ETL tools. GUI tools can accelerate onboarding for teams less comfortable with code editors and may simplify development in the short term. Below are several GUI-based options that organizations often consider as dbt alternatives.

GUI-based dbt alternatives such as Matillion, Informatica, and Alteryx use drag-and-drop interfaces that simplify development and accelerate onboarding for mixed-skill teams.

Matillion is a cloud-based data integration platform that enables teams to design ETL and transformation workflows through a visual, drag-and-drop interface. It is built for ease of use and supports major cloud data warehouses such as Amazon Redshift, Google BigQuery, and Snowflake.

Benefits

User-Friendly Visual Development:

Matillion simplifies pipeline building with a graphical interface, making it accessible for users who prefer low-code or no-code tooling.

Considerations

Limited Flexibility for Complex SQL Modeling:

Matillion’s visual approach can become restrictive for advanced transformation logic or engineering workflows that require version control and modular SQL development.

Informatica is an enterprise data integration platform with extensive ETL capabilities, hundreds of connectors, data quality tooling, metadata-driven workflows, and advanced security features. It is built for large and diverse data environments.

Benefits

Enterprise-Scale Data Management:

Informatica supports complex data integration, governance, and quality requirements, making it suitable for organizations with large data volumes and strict compliance needs.

Considerations

High Complexity and Cost:

Informatica’s power comes with a steep learning curve, and its licensing and operational costs can be significant compared to lighter-weight transformation tools.

Alteryx is a visual analytics and data preparation platform that combines data blending, predictive modeling, and spatial analysis in a single GUI-based environment. It is designed for analysts who want to build workflows without writing code and can be deployed on-premises or in the cloud.

Benefits

Powerful GUI Analytics Capabilities:

Alteryx allows users to prepare data, perform advanced analytics, and generate insights in one tool, enabling teams without strong coding skills to automate complex workflows.

Considerations

High Cost and Limited SQL Modeling Flexibility:

Alteryx is one of the more expensive platforms in this category and is less suited for SQL-first transformation teams who need modular modeling and version control.

Azure Data Factory (ADF) is a fully managed, serverless data integration service that provides a visual interface for building ETL and ELT pipelines. It integrates natively with Azure storage, compute, and analytics services, allowing teams to orchestrate and monitor pipelines without writing code.

Benefits

Strong Integration for Microsoft-Centric Teams:

ADF connects seamlessly with other Azure services and supports a pay-as-you-go model, making it ideal for organizations already invested in the Microsoft ecosystem.

Considerations

Limited Transformation Flexibility:

ADF excels at data movement and orchestration but offers limited capabilities for complex SQL modeling, making it less suitable as a primary transformation engine.

Talend provides an end-to-end data management platform with support for batch and real-time data integration, data quality, governance, and metadata management. Talend Data Fabric combines these capabilities into a single low-code environment that can run in cloud, hybrid, or on-premises deployments.

Benefits

Comprehensive Data Quality and Governance:

Talend includes built-in tools for data cleansing, validation, and stewardship, helping organizations improve the reliability of their data assets.

Considerations

Broad Platform, Higher Operational Complexity:

Talend’s wide feature set can introduce complexity, and teams may need dedicated expertise to manage the platform effectively.

SQL Server Integration Services (SSIS) is part of the Microsoft SQL Server ecosystem and provides data integration and transformation workflows. It supports extracting, transforming, and loading data from a wide range of sources, and offers graphical tools and wizards for designing ETL pipelines.

Benefits

Strong Fit for SQL Server-Centric Teams:

SSIS integrates deeply with SQL Server and other Microsoft products, making it a natural choice for organizations with a Microsoft-first architecture.

Considerations

Not Designed for Modern Cloud Data Warehouses:

SSIS is optimized for on-premises SQL Server environments and is less suitable for cloud-native architectures or modern ELT workflows.

Recent consolidation, including Fivetran acquiring SQLMesh and merging with dbt Labs, has increased concerns about vendor lock-in and pushed organizations to evaluate more flexible transformation platforms.

Organizations explore dbt alternatives when dbt no longer meets their architectural, security, or workflow needs. As teams scale, they often require stronger orchestration, consistent development environments, mixed SQL and Python workflows, and private deployment options that dbt Cloud does not provide.

Some teams prefer code-first engines for deeper CI/CD integration, automated testing, and strong guardrails across developers. Others choose GUI-based tools for faster onboarding or broader integration capabilities. Recent market consolidation, including Fivetran acquiring SQLMesh and merging with dbt Labs, has also increased concerns about vendor lock-in.

These factors lead many organizations to evaluate tools that better align with their governance requirements, engineering preferences, and long-term strategy.

DIY dbt Core offers full control but requires significant engineering work to manage orchestration, CI/CD, security, and long-term platform maintenance.

Running dbt Core yourself can seem attractive because it offers full control and avoids platform subscription costs. However, building a stable, secure, and scalable dbt environment requires significantly more than executing dbt build on a server. It involves managing orchestration and CI/CD, keeping development environments consistent, and handling long-term platform maintenance, all of which require mature DataOps practices.

The real question for most organizations is not whether they can run dbt Core themselves, but whether it is the best use of engineering time. This is essentially a question of whether to build or buy your data platform. DIY dbt platforms often start simple and gradually accumulate technical debt as teams grow, pipelines expand, and governance requirements increase.

For many organizations, DIY works in the early stages but becomes difficult to sustain as the platform matures.

The right dbt alternative depends on your team’s skills, governance requirements, pipeline complexity, and long-term data platform strategy.

Selecting the right dbt alternative depends on your team’s skills, security requirements, and long-term data platform strategy. Each category of tools solves different problems, so it is important to evaluate your priorities before committing to a solution.

If these are priorities, a platform with secure deployment options or multi-engine support may be a better fit than dbt Cloud.

Recent consolidation in the ecosystem has raised concerns about vendor dependency. Organizations that want long-term flexibility often look for:

Consider platform fees, engineering maintenance, onboarding time, and the cost of additional supporting tools such as orchestrators, IDEs, and environment management.

dbt remains a strong choice for SQL-based transformations, but it is not the only option. As organizations scale, they often need stronger orchestration, consistent development environments, Python support, and private deployment capabilities that dbt Cloud or DIY dbt Core may not provide. Evaluating alternatives helps ensure that your transformation layer aligns with your long-term platform and governance strategy.

Code-first tools like SQLMesh, Bruin Data, and Dataform offer strong engineering workflows, while GUI-based tools such as Matillion, Informatica, and Alteryx support faster onboarding for mixed-skill teams. The right choice depends on the complexity of your pipelines, your team’s technical profile, and the level of security and control your organization requires.

Datacoves provides a flexible, secure alternative that supports dbt, SQLMesh, and Bruin in a unified environment. With private cloud or VPC deployment, managed Airflow, and a standardized development experience, Datacoves helps teams avoid vendor lock-in while gaining an enterprise-ready platform for analytics engineering.

Selecting the right dbt alternative is ultimately about aligning your transformation approach with your data architecture, governance needs, and long-term strategy. Taking the time to assess these factors will help ensure your platform remains scalable, secure, and flexible for your future needs.

dbt is wildly popular and has become a fundamental part of many data stacks. While it’s easy to spin up a project and get things running on a local machine, taking the next step and deploying dbt to production isn’t quite as simple.

In this article we will discuss options for deploying dbt to production, comparing some high, medium, and low effort options so that you can find which works best for your business and team. You might be deploying dbt using one of these patterns already; if you are, hopefully this guide will help highlight some improvements you can make to your existing deployment process.

We're going to assume you know how to run dbt on your own computer (aka your local dbt setup). We’re also going to assume that you either want to or need to run dbt in a “production” environment – a place where other tools and systems make use of the data models that dbt creates in your warehouse.

The deployment process for dbt jobs extends beyond basic scheduling and involves a multifaceted approach. This includes establishing various dbt environments with distinct roles and requirements, ensuring the reliability and scalability of these environments, integrating dbt with other tools in the (EL)T stack, and implementing effective scheduling strategies for dbt tasks. By focusing on these aspects, a comprehensive and robust dbt deployment strategy can be developed. This strategy will not only address current data processing needs but also adapt to future challenges and changes in your data landscape, ensuring long-term success and reliability.

Deploying dbt involves creating and managing several dbt environments. The development environment is the initial testing ground for creating and refining dbt models. It allows for experimentation without impacting production data. Following this, the testing environment, including stages like UAT and regression testing, rigorously evaluates the models for accuracy and performance. Finally, the production environment is where these models are executed on actual data, demanding high stability and performance.
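To make the environment split concrete, here is a minimal sketch of how a deployment script might pick the dbt target for each environment. The target names (dev, uat, prod) and the `dbt_command` helper are assumptions for illustration; they only work if matching targets exist in your own profiles.yml.

```python
# Assumed target names; they must match targets defined in your profiles.yml.
TARGETS = {"development": "dev", "testing": "uat", "production": "prod"}


def dbt_command(environment, select=None):
    """Build the argument list for a dbt build against one environment."""
    if environment not in TARGETS:
        raise ValueError(f"unknown environment: {environment}")
    cmd = ["dbt", "build", "--target", TARGETS[environment]]
    if select:
        # Optional node selection, for example "tag:nightly".
        cmd += ["--select", select]
    return cmd
```

Keeping the mapping in one place means the same code path runs in every environment and only the target changes.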

Reliability and scalability of data models are also important. Ensuring that the data models produce accurate and consistent results is essential for maintaining trust in your data. As your data grows, the dbt deployment should be capable of scaling, handling increased volumes, and maintaining performance.

Integration with other data tools and systems is another key aspect. A seamless integration of dbt with EL tools, data visualization platforms, and data warehouses ensures efficient data flow and processing, making dbt a harmonious component of your broader data stack.

Effective dbt scheduling goes beyond mere time-based scheduling. It involves context-aware execution, such as triggering jobs based on data availability or other external events. Managing dependencies within your data models is critical to ensure that transformations occur in the correct sequence. Additionally, adapting to varying data loads is necessary to scale resources effectively and maintain the efficiency of dbt job executions.
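dbt works out the run order of your models on its own from the references between them, so you rarely compute it by hand. Still, it helps to see what "the correct sequence" means. The sketch below uses Python's standard-library `graphlib` on a made-up dependency map; the model names are hypothetical and this is not how dbt is implemented internally.

```python
from graphlib import TopologicalSorter

# Hypothetical models; each key maps to the set of models it depends on.
dependencies = {
    "stg_orders": set(),
    "stg_customers": set(),
    "int_customer_orders": {"stg_orders", "stg_customers"},
    "fct_revenue": {"int_customer_orders"},
}


def execution_order(deps):
    """Return model names so that every model comes after its dependencies."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
```

Any orchestrator that coordinates steps outside of dbt (loads, exports, notifications) has to respect the same kind of ordering.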

Each deployment option has its place, and the trade-offs between setup costs and long-term maintainability are important to consider when you’re choosing one versus another.

Cron jobs are scripts that run at a set schedule. They can be defined in any language. For instance, we can use a simple bash script to run dbt. It’s just like running the CLI commands, but instead of you running them by hand, a computer process would do it for you.

Here’s a simple cron script:

In order to run on schedule, you’ll need to add this file to your system’s crontab.

As you can tell, this is a very basic dbt run script; we are doing the bare minimum to run the project. There is no consideration for tagged models, tests, alerting, or more advanced checks.

Even though cron jobs are the most basic way to deploy dbt, there is still a learning curve. It requires some technical skills to set up this deployment. Additionally, because of its simplicity, it is pretty limited. If you are thinking of using crons for multi-step deployments, you might want to look elsewhere.

While it's relatively easy to set up a cron job to run on your laptop, this defeats the purpose of using a cron altogether. Crons will only run when the daemon is running, so unless you plan on never turning off your laptop, you’ll want to set up the cron on an EC2 instance (or another server). Now you have infrastructure to support and added complexity to keep in mind when making changes. Running a cron on an EC2 instance is certainly doable, but likely not the best use of resources. Just because it can be done does not mean it should be done. At this point, you’re better off using a different deployment method.

The biggest downside, however, is that your cron script must handle any edge cases or errors gracefully. If it doesn’t, you might wind up with silent failures – a data engineer’s worst enemy.
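As a rough sketch of what handling failures "gracefully" can look like, the wrapper below runs each command in order, stops at the first failure, and returns a non-zero status so cron mail or a monitoring tool can notice. The step list (dbt deps, then dbt build) and the `run_steps` and `main` names are assumptions for illustration, not the script from the original post.

```python
import subprocess
import sys


def run_steps(steps):
    """Run each command in order and stop at the first failure.

    Returns (ok, failed_command). A non-zero exit code and a missing
    executable both count as failures, so nothing fails silently.
    """
    for cmd in steps:
        try:
            result = subprocess.run(cmd, capture_output=True, text=True)
        except FileNotFoundError:
            print(f"command not found: {cmd[0]}", file=sys.stderr)
            return False, cmd
        if result.returncode != 0:
            print(f"step failed (exit {result.returncode}): {' '.join(cmd)}", file=sys.stderr)
            print(result.stderr, file=sys.stderr)
            return False, cmd
    return True, None


# Assumed steps for illustration; adjust to your project.
DBT_STEPS = [["dbt", "deps"], ["dbt", "build"]]


def main():
    """Return a process exit status: 0 on success, 1 on any failure."""
    ok, _ = run_steps(DBT_STEPS)
    return 0 if ok else 1
```

A script scheduled by cron would end with `sys.exit(main())`. Alerting, retries, and log shipping are still yours to build, which is exactly the overhead described above.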

Cron jobs might serve you well if you have some running servers you can use, have a strong handle on the types of problems your dbt runs and cron executions might run into, and you can get away with a simple deployment with limited dbt steps. It is also a solid choice if you are running a small side-project where missed deployments are probably not a big deal.

Use crons for anything more complex, and you might be setting yourself up for future headaches.

Ease of Use / Implementation – You need to know what you’re doing

Required Technical Ability – Medium / High

Configurability – High, but with the added complexity of managing more complex code

Customization – High, but with a lot of overhead. Best to keep things very simple

Best for End-to-End Deployment – Low

Cloud Service Runners like dbt Cloud are probably the most obvious way to deploy your dbt project without writing code for those deployments, but they are not perfect.

dbt Cloud is a product from dbt Labs, the creators of dbt. The platform has some out-of-the-box integrations, such as GitHub Actions and Webhooks, but anything more will have to be managed by your team. While there is an IDE (Integrated Development Environment) that allows the user to write new dbt models, you are adding a layer of complexity by orchestrating your deployments in another tool. If you are only orchestrating dbt runs, dbt Cloud is a reasonable choice – it's designed for just that.

However, when you want to orchestrate more than just your dbt runs – for instance, kick off multiple Extract / Load (EL) processes or trigger other jobs after dbt completes – you will need to look elsewhere.

dbt Cloud will host your project documentation and provide access to its APIs. But that is the lion’s share of the offering. Unless you spring for the Enterprise Tier, you will not be able to do custom deployments or trigger dbt runs based on incoming data with ease.

Deploying your dbt project with dbt Cloud is straightforward, though. And that is its best feature. All deployment commands use native dbt command line syntax, and you can create various "Jobs" through their UI to run specific models at different cadences.

If you are a data team with data pipelines that are not too complex and you are looking to handle dbt deployments without the need for standing up infrastructure or stringing together advanced deployment logic, then dbt Cloud will work for you. If you are interested in more complex triggers to kick off your dbt runs – for instance, triggering a run immediately after your data is loaded – there are other options which natively support patterns like that. The most important factor is the complexity of the pieces you need to coordinate, not necessarily the size of your team or organization.

Overall, it is a great choice if you’re okay working within its limitations and only need to support a simple workflow. As soon as you reach any scale, however, the cost may be too high.

Ease of Use / Implementation – Very easy

Required Technical Ability – Low

Configurability – Low / Medium

Customization – Low

Best for End-to-End Deployment – Low

The Modern Data Stack is a composite of tools. Unfortunately, many of those tools are disconnected because they specialize in handling one of the steps in the ELT process. Only after working with them do you realize that there are implicit dependencies between these tools. Tools like Datacoves bridge the gaps between the tools in the Modern Data Stack and enable some more flexible dbt deployment patterns. Additionally, they cover the End-to-End solution, from Extraction to Visualization, meaning they can handle steps before and after Transformation.

If you are loading your data into Snowflake with Fivetran or Airbyte, your dbt runs need to be coordinated with those EL processes. Often, this is done by manually setting the ETL schedule and then defining your dbt run schedule to coincide with your ETL completion. It is not a hard dependency, though. If you’re processing a spike in data or running a historical re-sync, your ETL pipeline might take significantly longer than usual. Your normal dbt run won’t play nicely with this extended ETL process, and you’ll wind up using Snowflake credits for nothing.

This is a common issue for companies moving from early stage / MVP data warehouses into more advanced patterns. There are ways to connect your EL processes and dbt deployments with code, but Datacoves makes it much easier. Datacoves will trigger the right dbt job immediately after the load is complete. No need to engineer a solution yourself. The value of the Modern Data Stack is being able to mix and match tools that are fit for purpose.
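As a rough illustration of what connecting the load and the dbt run with code can look like, the sketch below turns the soft, schedule-based dependency into a hard one: dbt runs only once the load reports completion, and the run is skipped if the load never finishes in time. The `is_load_complete` and `run_dbt` callables are hypothetical placeholders; in practice they might query your EL tool's API or a load-status table and invoke your dbt job. This is not how Datacoves implements it.

```python
import time


def wait_for_load(is_load_complete, timeout_s=3600, poll_s=60,
                  sleep=time.sleep, clock=time.monotonic):
    """Poll until the load reports completion or the timeout passes."""
    deadline = clock() + timeout_s
    while True:
        if is_load_complete():
            return True
        if clock() >= deadline:
            return False
        sleep(poll_s)


def run_after_load(is_load_complete, run_dbt, **wait_kwargs):
    """Run dbt only if the load finished; otherwise skip the run."""
    if wait_for_load(is_load_complete, **wait_kwargs):
        run_dbt()
        return "ran"
    return "skipped"
```

Skipping the run when the load is late avoids spending warehouse credits on stale data, at the price of writing and maintaining the polling logic yourself.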

Meeting established data freshness and quality SLAs is challenging enough, but with Datacoves, you’re able to skip building custom solutions for these problems. Every piece of your data stack is integrated and working together. If you are orchestrating with Airflow, then you’re likely running a Docker container which may or may not have added dependencies. That’s one common challenge teams managing their own instances of Airflow will meet, but with Datacoves, container / image management and synchronization between EL and dbt executions are all handled on the platform. The setup and maintenance of the scalable Kubernetes infrastructure necessary to run Airflow is handled entirely by the Datacoves platform, which gives you flexibility but with a lower learning curve. And, it goes without saying that this works across multiple environments like development, UAT, and production.

With the End-to-End Pipeline in mind, one of the convenient features is that Datacoves provides a singular place to access all the tools within your normal analytics workflow - extraction + load, transformation, orchestration, and security controls are in a single place. The implicit dependencies are now codified; it is clear how a change to your dbt model will flow through to the various pieces downstream.

Datacoves is for teams who want to introduce a mature analytics workflow without the weight of adopting and integrating a new suite of tools on their own. This might mean you are a small team at a young company, or an established analytics team at an enterprise looking to simplify and reduce platform complexity and costs.

There are some prerequisites, though. To make use of Datacoves, you do need to write some code, but you’ll likely already be used to writing configuration files and dbt models that Datacoves expects. You won't be starting from scratch because best practices, accelerators, and expertise are already provided.

Ease of Use / Implementation – You can use YAML to generate DAGs for a simpler approach, but you also have the option to use Python DAGs for added flexibility and complexity in your pipelines.

Required Technical Ability – Medium

Configurability – High

Customization – High. Datacoves is modular, allowing you to embed the tools you already use

Best for End-to-End Deployment – High. Datacoves takes into account all of the factors of dbt Deployment

What do you use to deploy your dbt project when you have a large, complex set of models and dependencies? An orchestrator like Airflow is a popular choice, with many companies opting to use managed deployments through services such as Astronomer.

For many companies – especially in the enterprise – this is familiar territory. Adoption of these orchestrators is widespread. The tools are stable, but they are not without some downsides.

These orchestrators require a lot of setup and maintenance. If you’re not using a managed service, you’ll need to deploy the orchestrator yourself, and handle the upkeep of the infrastructure running your orchestrator, not to mention manage the code your orchestrator is executing. It’s no small feat, and a large part of the reason that many large engineering groups have dedicated data engineering and infrastructure teams.

Running your dbt deployment through Airflow or any other orchestrator is the most flexible option you can find, though. That flexibility means more overhead in setting up and maintaining the systems this architecture runs on. You might need to get DevOps involved, you’ll need to package your dbt project into a Docker image and manage the resulting containers, you’ll want an airtight CI/CD process, and ultimately you’ll need well-defined SLAs. There can be a steep learning curve, especially if you’re unfamiliar with what’s needed to take an Airflow instance to a stable production release.

There are three ways to run Airflow: deploying it on your own, using a managed service, or using an integrated platform like Datacoves. When using a managed service or an integrated platform like Datacoves, you need to consider a few factors:

Airflow is a multi-purpose tool. It’s not just for dbt deployments. Many organizations run complex data engineering pipelines with Airflow, and by design, it is flexible. If your use of Airflow extends well beyond dbt deployments or ELT jobs oriented around your data warehouse, you may be better suited for a dedicated managed service.

Similarly, if your organization has numerous teams dedicated to designing, building and maintaining your data infrastructure, you may want to use a dedicated Airflow solution. However, not every organization is able to stand up platform engineering teams or DevOps squads dedicated to the data infrastructure. Regardless of the size of your team, you will need to make sure that your data infrastructure needs do not outmatch your team’s ability to support and maintain that infrastructure.

Every part of the Modern Data Stack relies on other tools performing their jobs; data pipelines, transformations, data models, BI tools - they are all connected. Using Airflow for your dbt deployment adds another link in the dependency chain. Coordinating dbt deployments via Airflow can always be done through writing additional code, but this is an additional overhead you will need to design, implement, and maintain. With this approach, you begin to require strong software engineering and design principles. Your data models are only as useful as your data is fresh; meeting your required SLAs will require significant cross-tool integration and customization.

If you are a small team looking to deploy dbt, there are likely better options. If you are a growing team, there are certainly simpler options with less infrastructure overhead. For data teams with complex data workflows that combine multiple tools and transformation technologies such as Python, Scala, and dbt, however, Airflow and other orchestrators can be a good choice.

Ease of Use / Implementation – Can be quite challenging starting from scratch

Required Technical Ability – High

Configurability – High

Customization – High, but build time and maintenance costs can be prohibitive

Best for End-to-End Deployment – High, but requires a lot of resources to set up and maintain

The way you should deploy your dbt project depends on a handful of factors – how much time you’re willing to invest up front, your level of technical expertise, as well as how much configuration and customization you need.

Small teams might have high technical acumen but not enough capacity to manage a deployment on their own. Enterprise teams might have enough resources but maintain disparate, interdependent analytics projects. Thankfully, there are several options for moving your project beyond your local machine and into a production environment. And while specific tools like Airflow have their own pros and cons, it is becoming increasingly important to evaluate your data stack as a whole rather than one vendor solution at a time. Ultimately, there are many ways to deploy dbt to production, and the decision comes down to spending time building a robust deployment pipeline or spending that time on analytics.

Jinja is the game-changing feature of dbt Core that allows us to create dynamic SQL code. In addition to the standard Jinja library, dbt Core includes extra functions and variables that make working with dbt even more powerful out of the box.

See our original post, The Ultimate dbt Jinja Cheat Sheet, to get started with Jinja fundamentals like syntax, variable assignment, looping and more. Then dive into the information below, which covers the Jinja additions provided by dbt Core.

This cheat sheet references the extra functions, macros, filters, variables and context methods that are specific to dbt Core.

Enjoy!

These pre-defined dbt Jinja functions are essential to the dbt workflow: they allow you to reference models and sources, write documentation, print, write to the log and more.

These macros are provided in dbt Core to improve the dbt workflow.

These dbt Jinja filters are used to modify data types.

These dbt Core "variables" such as config, target, source, and others contain values that provide contextual information about your dbt project and configuration settings. They are typically used to access project-specific and environment-specific information and to customize the behavior of your dbt models based on it.

These special variables provide information about the current context in which your dbt code is running, such as the model, schema, or project name.

These methods allow you to retrieve information about models, columns, or other elements of your project.

Please contact us with any errors or suggestions.

The dbt-utils package enhances the dbt experience by offering a suite of utility macros. Designed to tackle common SQL modeling patterns, it streamlines complex operations, allowing users to focus on data transformation rather than the intricacies of SQL. dbt-utils is a must-have tool for dbt aficionados!

The dbt-utils package is a gem in the world of data transformations. Let this cheat sheet guide you swiftly through its features, ensuring you get the most out of dbt-utils. Enjoy!

The SQL generators in the dbt-utils package streamline your modeling tasks. By automating common SQL patterns, they reduce manual coding and help keep queries consistent. Think of them as a handy toolkit for every dbt user's SQL endeavors!

Within the dbt-utils package lies a set of generic tests, designed to validate your data effortlessly. These tests ensure consistency and quality, checking for common issues without the need to craft custom validation rules. It's data integrity made simple for dbt users.

The introspective macros within the dbt-utils package are a window into your data's metadata. They empower you to dynamically interact with and understand the underlying structure of your datasets. It's like having a magnifying glass for the intricacies of your dbt projects!