dbt’s built-in tests cover the fundamentals: uniqueness, nulls, referential integrity, accepted values. But as your project grows, so do the gaps. Anomalies that no one wrote a test for. Code changes that silently break downstream models. Production pipelines that look healthy until a stakeholder finds stale data in a dashboard.

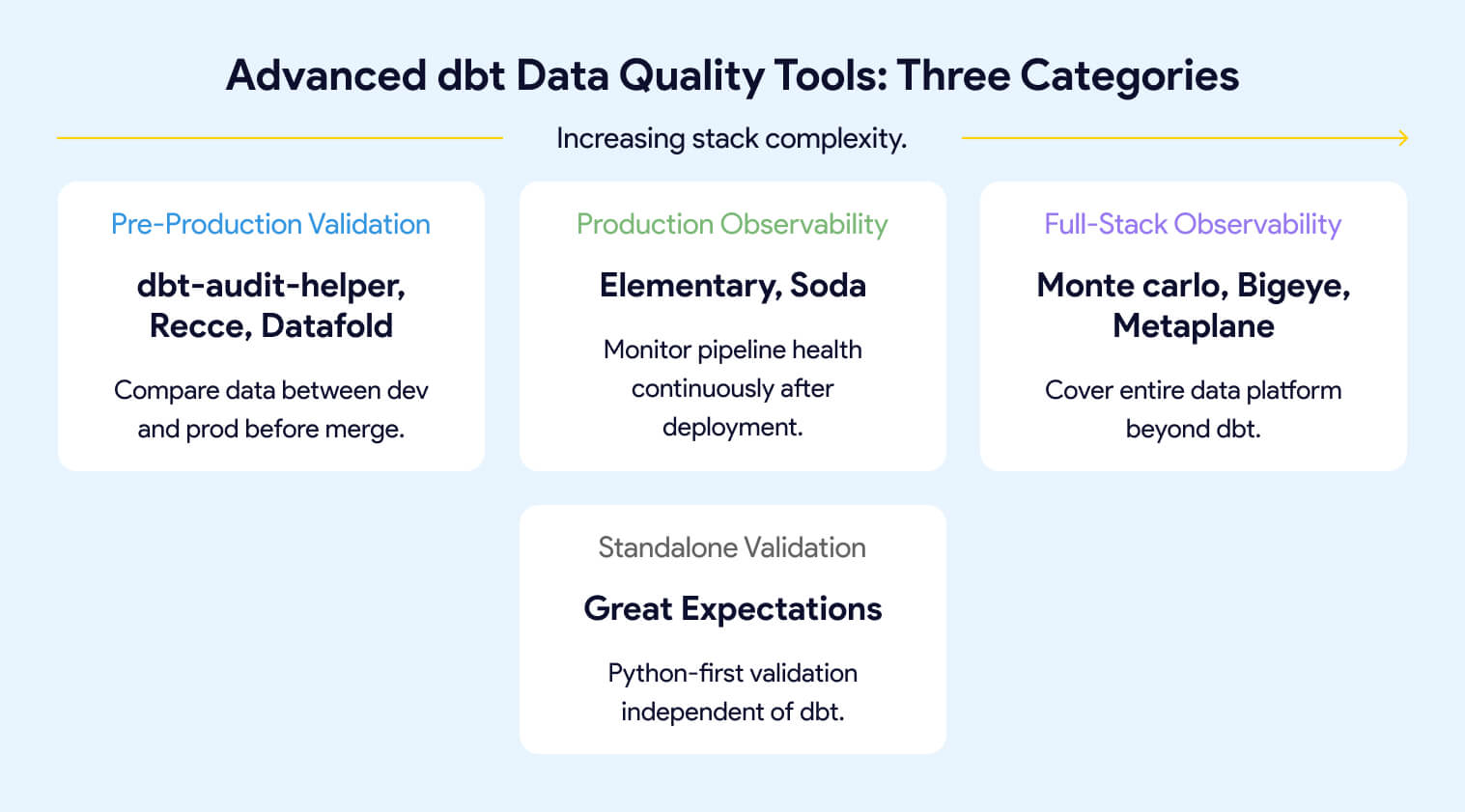

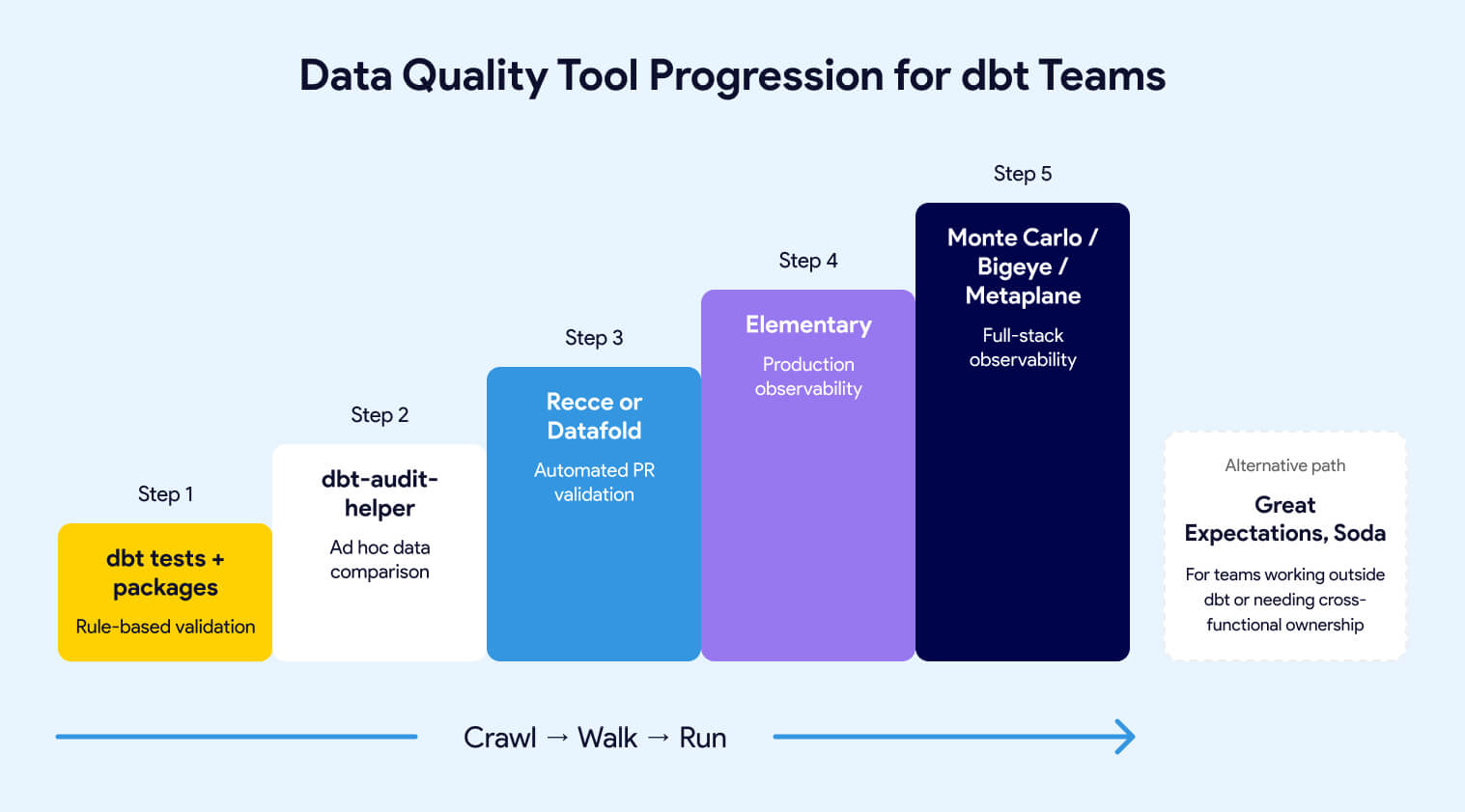

The tools in this guide pick up where basic dbt tests stop. They fall into three categories: pre-production validation (comparing data between environments before code merges), production observability (continuous monitoring of pipeline health over time), and full-stack observability (commercial platforms covering your entire data platform beyond dbt). Some tools span more than one category. The right combination depends on your team's maturity, stack complexity, and where you're losing the most time to data issues today.

This is the companion to An Overview of Testing Options for dbt, which covers everything that ships with dbt Core and the most common testing packages. If you haven’t built out your test suite yet, start there. This guide assumes you have already progressed past dbt data testing.

If you’ve followed the dbt testing guide, your project already has generic tests, singular tests, and probably packages like dbt-utils or dbt-expectations for richer assertions. That coverage handles a lot. But it has a ceiling.

Rule-based tests catch what you anticipated. They won’t tell you that row volumes dropped 40% overnight, that a source table stopped arriving on schedule, that a gradual shift in null rates is slowly corrupting a downstream report, or that your “harmless” model refactor just changed 15,000 values in a column no one thought to test.

The tools in this guide fill those gaps. They fall into three categories:

Pre-production validation compares data between your development and production environments before code merges. If a model refactor changes row counts, adds or removes rows, shifts column values, or alters schema structure, these tools surface the specific differences in your PR so reviewers can see the data impact alongside the code change. Tools: dbt-audit-helper, Recce, Datafold.

Production observability monitors your pipeline health continuously after deployment. Instead of testing specific conditions, it builds statistical baselines over time and alerts you when behavior deviates: freshness failures, volume anomalies, schema changes, distribution drift. Tools: Elementary, Soda.

Full-stack observability extends monitoring beyond dbt to cover your entire data platform, including ingestion tools, warehouses, BI layers, and AI workloads. These are commercial platforms for teams where dbt is one piece of a larger stack. Tools: Monte Carlo, Bigeye, Metaplane.

A complete observability layer tracks four dimensions:

Freshness monitors whether models and sources are updating on schedule. A freshness failure often means an upstream pipeline broke before any dbt test had a chance to run.

Volume tracks whether row counts and event rates behave as expected. Sudden drops or spikes frequently signal upstream issues before any explicit test fires.

Schema detects column additions, removals, renames, and data type changes that can silently break downstream models and dashboards.

Distribution watches the statistical properties of your data over time: null rates, cardinality, value ranges. Gradual drift here can corrupt reports without triggering a single test failure.
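To make two of these dimensions concrete, here is a minimal Python sketch of a freshness check and a schema comparison. It is an illustration only, not how any tool in this guide implements them: the function names, the 24-hour threshold, and the column metadata are all hypothetical.

```python
from datetime import datetime, timedelta, timezone


def is_stale(last_loaded_at, max_age, now=None):
    """Return True when the newest load is older than the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - last_loaded_at > max_age


def diff_schema(baseline, current):
    """Compare two {column: data_type} mappings and list the changes."""
    added = sorted(set(current) - set(baseline))
    removed = sorted(set(baseline) - set(current))
    type_changed = sorted(
        col for col in set(baseline) & set(current)
        if baseline[col] != current[col]
    )
    return {"added": added, "removed": removed, "type_changed": type_changed}


# Hypothetical metadata, as it might be read from a warehouse's information schema.
now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
last_load = datetime(2025, 1, 1, 6, 0, tzinfo=timezone.utc)
stale = is_stale(last_load, max_age=timedelta(hours=24), now=now)

baseline = {"order_id": "integer", "amount": "numeric", "status": "varchar"}
current = {"order_id": "integer", "amount": "varchar", "channel": "varchar"}
schema_changes = diff_schema(baseline, current)

print(stale)
print(schema_changes)
```

Note that a rename shows up here as one removed column plus one added column. Telling the two cases apart takes extra context that this sketch does not attempt.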

Some tools span categories. Elementary installs as a dbt package (making it feel like an extension of your test suite) but its core value is production observability. Datafold started as a data diffing tool but now includes production monitors. The categories describe what problem you’re solving, not rigid product boundaries.

Your dbt tests pass. Your CI pipeline is green. You merge the PR. And then a stakeholder reports that revenue numbers shifted by 12% in a dashboard no one connected to the model you changed.

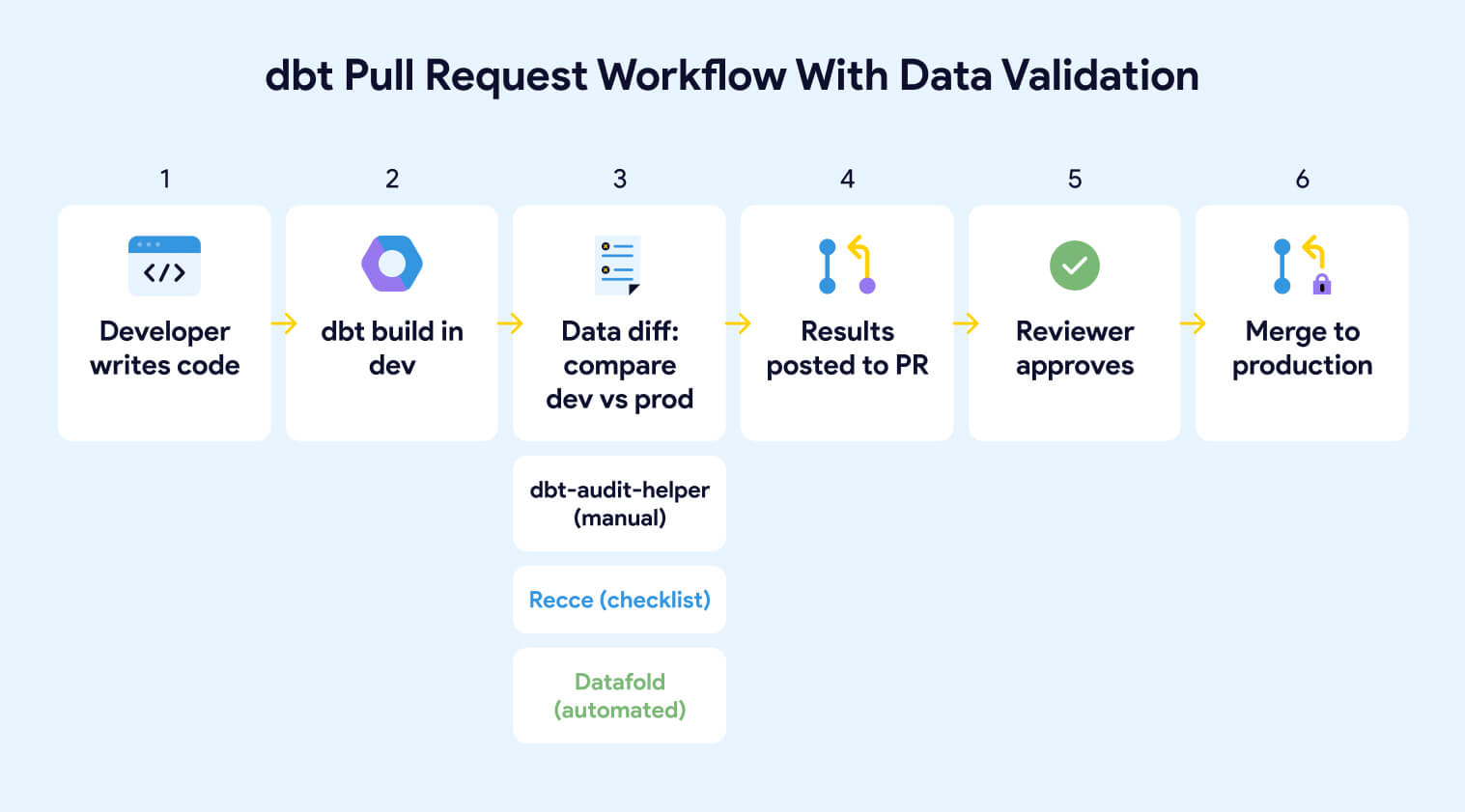

This happens because dbt tests validate conditions you defined, not the data impact of your code change. A model can pass every test and still produce different data than it did yesterday. Pre-production validation tools close that gap by comparing data between environments before code reaches production.

dbt-audit-helper, maintained by dbt Labs, is a dbt package that compares two relations or queries row by row and column by column. It’s the simplest way to validate that a model refactor, migration, or logic change didn’t introduce unintended differences.

The package provides 10 active macros organized into four groups:

A typical workflow: you refactor a model, run it against your dev environment, then use compare_and_classify_relation_rows to compare the dev output against the production version. If rows show as modified, you drill in with compare_which_relation_columns_differ to find which columns changed, then compare_column_values to understand the specific discrepancies.
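The idea behind that workflow can be sketched in plain Python. This is not dbt-audit-helper's implementation (the package does the comparison in SQL inside your warehouse), and the function names and sample rows below are hypothetical. It only shows what classifying rows and then narrowing down to the differing columns means.

```python
def classify_rows(prod_rows, dev_rows, key):
    """Label each primary key as identical, modified, added, or removed."""
    prod = {row[key]: row for row in prod_rows}
    dev = {row[key]: row for row in dev_rows}
    result = {}
    for pk in sorted(prod.keys() | dev.keys()):
        if pk not in dev:
            result[pk] = "removed"
        elif pk not in prod:
            result[pk] = "added"
        elif prod[pk] == dev[pk]:
            result[pk] = "identical"
        else:
            result[pk] = "modified"
    return result


def columns_that_differ(prod_rows, dev_rows, key):
    """Return the columns whose values differ for keys present in both sets."""
    prod = {row[key]: row for row in prod_rows}
    dev = {row[key]: row for row in dev_rows}
    differing = set()
    for pk in prod.keys() & dev.keys():
        for col in prod[pk].keys() | dev[pk].keys():
            if prod[pk].get(col) != dev[pk].get(col):
                differing.add(col)
    return sorted(differing)


# Hypothetical production and development outputs of the same model.
prod_rows = [
    {"id": 1, "amount": 10, "status": "paid"},
    {"id": 2, "amount": 20, "status": "paid"},
    {"id": 3, "amount": 30, "status": "open"},
]
dev_rows = [
    {"id": 1, "amount": 10, "status": "paid"},
    {"id": 2, "amount": 25, "status": "paid"},
    {"id": 4, "amount": 40, "status": "open"},
]

classification = classify_rows(prod_rows, dev_rows, key="id")
changed_columns = columns_that_differ(prod_rows, dev_rows, key="id")
print(classification)
print(changed_columns)
```

In this sample, row 2 is modified and the only differing column is amount, which is the column you would inspect next.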

dbt-audit-helper is free, open source, and runs entirely inside your dbt project. The tradeoff is that everything is manual. You write SQL files using the macros, run them one model at a time, and read the output in your terminal or warehouse. There's no UI, no PR integration, no automated detection of which models changed. For ad hoc validation during refactoring or migration, it's excellent. For ongoing change management across a team, you'll want Recce or Datafold.

Recce is an open-source data validation toolkit built specifically for dbt PR workflows. Where dbt-audit-helper requires you to write macros and run them manually, Recce automates the comparison and packages the results into a format designed for PR review.

When a developer opens a PR, Recce compares a production baseline against the development branch using a suite of checks:

As you run checks, Recce lets you add each result to a validation checklist with notes explaining your findings. When you’re ready for review, you export the checklist to your PR comment. The reviewer gets a curated summary of the data impact rather than raw output they have to interpret themselves.

Recce OSS includes all the diff tools, the checklist workflow, and a CLI for CI/CD integration. Recce Cloud (commercial version) adds an AI Data Review Agent that auto-summarizes data impact on every PR, real-time collaboration, automatic checklist sync, and PR gating. For a detailed walkthrough of the workflow, see Recce's data validation toolkit guide.

Datafold is a commercial data engineering platform that automates data diffing as part of your CI/CD pipeline. Both Recce and Datafold run automatically on PRs, but they follow different philosophies: Recce lets developers scope and choose which diffs matter, while Datafold diffs every changed model on every PR by default. Datafold's approach gives full coverage with less manual decision-making; Recce's reduces noise by keeping humans in the loop.

Datafold integrates deeply with both dbt Core and dbt Cloud. Its core capabilities:

Datafold supports Snowflake, BigQuery, Redshift, Databricks, PostgreSQL, and DuckDB, with cross-database diffing for migrations. VPC deployment is available for teams with strict security requirements.

The open-source data-diff CLI that Datafold previously maintained was deprecated in May 2024. All diffing capabilities now require Datafold Cloud.

Pre-production validation catches problems before merge. But not every data issue originates from a code change. Sources stop updating. Upstream systems introduce silent schema changes. Row volumes drift gradually over weeks until a report breaks. These are production problems, and they require tools that monitor your pipeline continuously, not just when someone opens a PR.

Elementary is an open-source observability tool built natively on dbt. It installs as a dbt package, runs as part of your project, and stores all observability data directly in your warehouse. No separate infrastructure, no additional warehouse connection. Elementary supports Snowflake, BigQuery, Redshift, Databricks, and PostgreSQL.

Elementary does three things:

Collects and stores test result history. Every dbt test run, including pass/fail status, failure counts, execution time, and the rows that failed, gets written to queryable tables in your warehouse. This gives you trend visibility that dbt’s native artifacts don’t provide.
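Why does queryable history matter? The sketch below uses an in-memory SQLite database as a stand-in for your warehouse. The table name, columns, and test names are hypothetical and are not Elementary's actual schema. The point is only that once results are stored as rows, trends become a simple query.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE test_results (
        run_date TEXT,
        test_name TEXT,
        status TEXT,
        failed_rows INTEGER
    )
    """
)

# Hypothetical results from three daily runs of two tests.
runs = [
    ("2025-01-01", "not_null_orders_customer_id", "pass", 0),
    ("2025-01-02", "not_null_orders_customer_id", "fail", 12),
    ("2025-01-03", "not_null_orders_customer_id", "fail", 48),
    ("2025-01-01", "unique_orders_order_id", "pass", 0),
    ("2025-01-02", "unique_orders_order_id", "pass", 0),
    ("2025-01-03", "unique_orders_order_id", "pass", 0),
]
conn.executemany("INSERT INTO test_results VALUES (?, ?, ?, ?)", runs)

# Count failing runs and the worst failure size per test.
failure_trend = conn.execute(
    """
    SELECT test_name,
           SUM(status = 'fail') AS failed_runs,
           MAX(failed_rows) AS worst_failure
    FROM test_results
    GROUP BY test_name
    ORDER BY test_name
    """
).fetchall()

print(failure_trend)
```

A test that fails with a growing number of rows on consecutive days tells a different story than a one-off failure, and that difference is invisible if you only look at the latest run.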

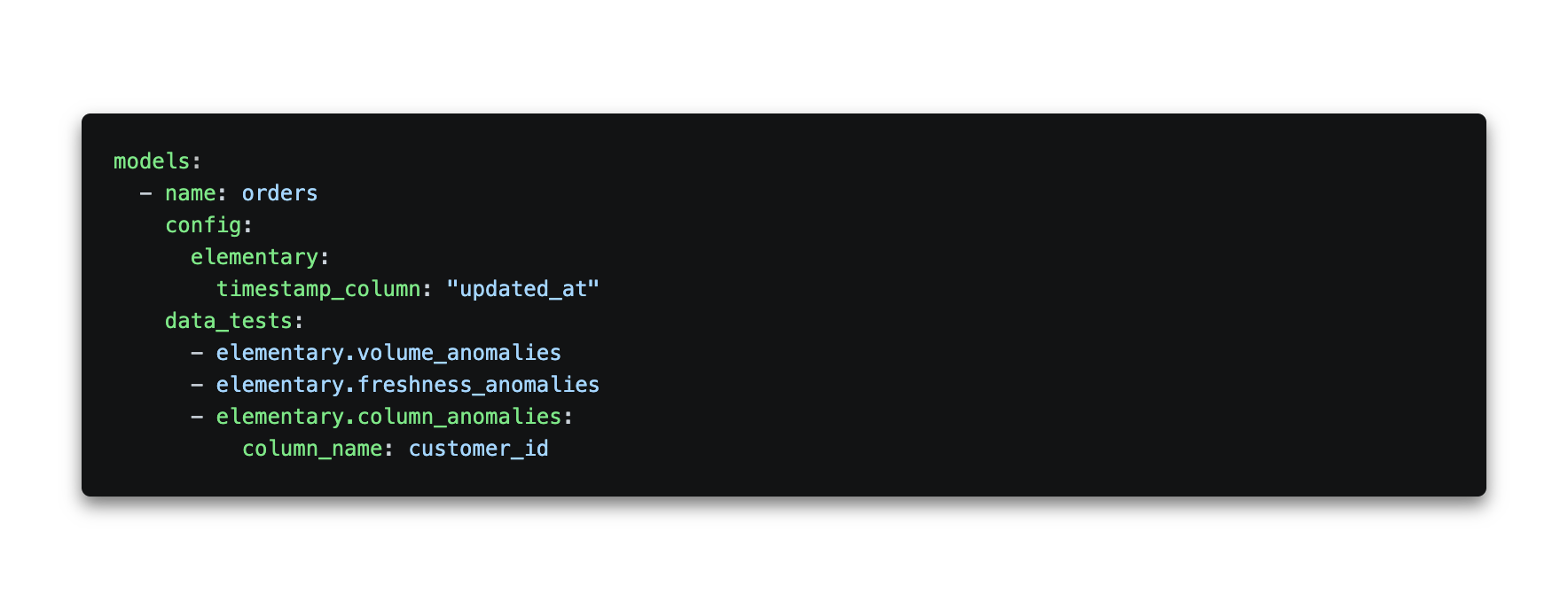

Adds anomaly detection monitors. Elementary provides dbt-native monitors you configure in YAML, covering row count anomalies, freshness, event freshness (for streaming data), null rate changes, cardinality shifts, and dimension distribution. These use Z-score based statistical detection: Elementary builds a baseline from your historical data (default 14-day training period) and flags values that fall outside the expected range. You can tune sensitivity, time buckets, and training windows per test.
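As a rough illustration of Z-score detection, the sketch below builds a baseline from 14 daily row counts and flags a new value that sits too many standard deviations from the mean. It is a simplified stand-in, not Elementary's code: the function name, the sample counts, and the sensitivity threshold of 3 are assumptions made for the example.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, sensitivity=3.0):
    """Flag `latest` when its z-score against `history` exceeds `sensitivity`."""
    baseline_mean = mean(history)
    baseline_std = stdev(history)
    if baseline_std == 0:
        # A perfectly flat baseline: any change at all is unexpected.
        return latest != baseline_mean
    z_score = (latest - baseline_mean) / baseline_std
    return abs(z_score) > sensitivity


# Hypothetical daily row counts for a 14-day training window.
training_counts = [1000, 1020, 980, 1010, 990, 1005, 995,
                   1015, 985, 1000, 1010, 990, 1002, 998]

normal_day = is_anomalous(training_counts, latest=1008)
dropped_day = is_anomalous(training_counts, latest=600)

print(normal_day, dropped_day)
```

Raising the sensitivity value makes the check more tolerant, and lowering it makes it noisier. Lengthening the training window smooths out short-lived patterns at the cost of reacting more slowly to a legitimate new normal.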

Elementary OSS also includes an AI-powered test (ai_data_validation), currently in beta, that lets you define expectations in plain English. For example, expectation_prompt: "There should be no contract date in the future". Instead of running its own LLM, Elementary uses the AI functions built into your warehouse (Snowflake Cortex, Databricks AI Functions, or BigQuery Vertex AI), so your data never leaves your environment. Setup requires enabling the relevant LLM service in your warehouse first.

An Elementary monitor configuration looks like this:

Generates a self-hosted observability report. The Elementary CLI produces a rich HTML report you can host on S3, an internal server, or any static file host. It shows model lineage, test results over time, and anomaly alerts in one place. Alerts can be sent to Slack or Microsoft Teams. Full configuration options are in the Elementary docs.

Elementary also includes schema validation tests (detecting deleted or added columns, data type changes, deviations from a configured baseline, JSON schema violations) and exposure validation (detecting column changes that break downstream BI dashboards).

OSS vs. Cloud: The features above are all available in Elementary OSS. Elementary Cloud adds automated monitors that require no YAML configuration, column-level lineage extending to BI tools, a built-in data catalog, incident management, AI agents for triage and test recommendations, and a collaborative UI for non-technical users.

Elementary is the right starting point for most dbt teams because it fits inside a workflow you already have. Adding it requires a package installation and a few lines of YAML. If your needs grow beyond what OSS provides, the Cloud tier is the upgrade path.

Soda is an open-core data quality platform designed so that analysts and business stakeholders can write and own quality checks alongside the engineering team. Where Elementary is built for engineers working inside dbt, Soda is built for shared ownership of data quality across roles.

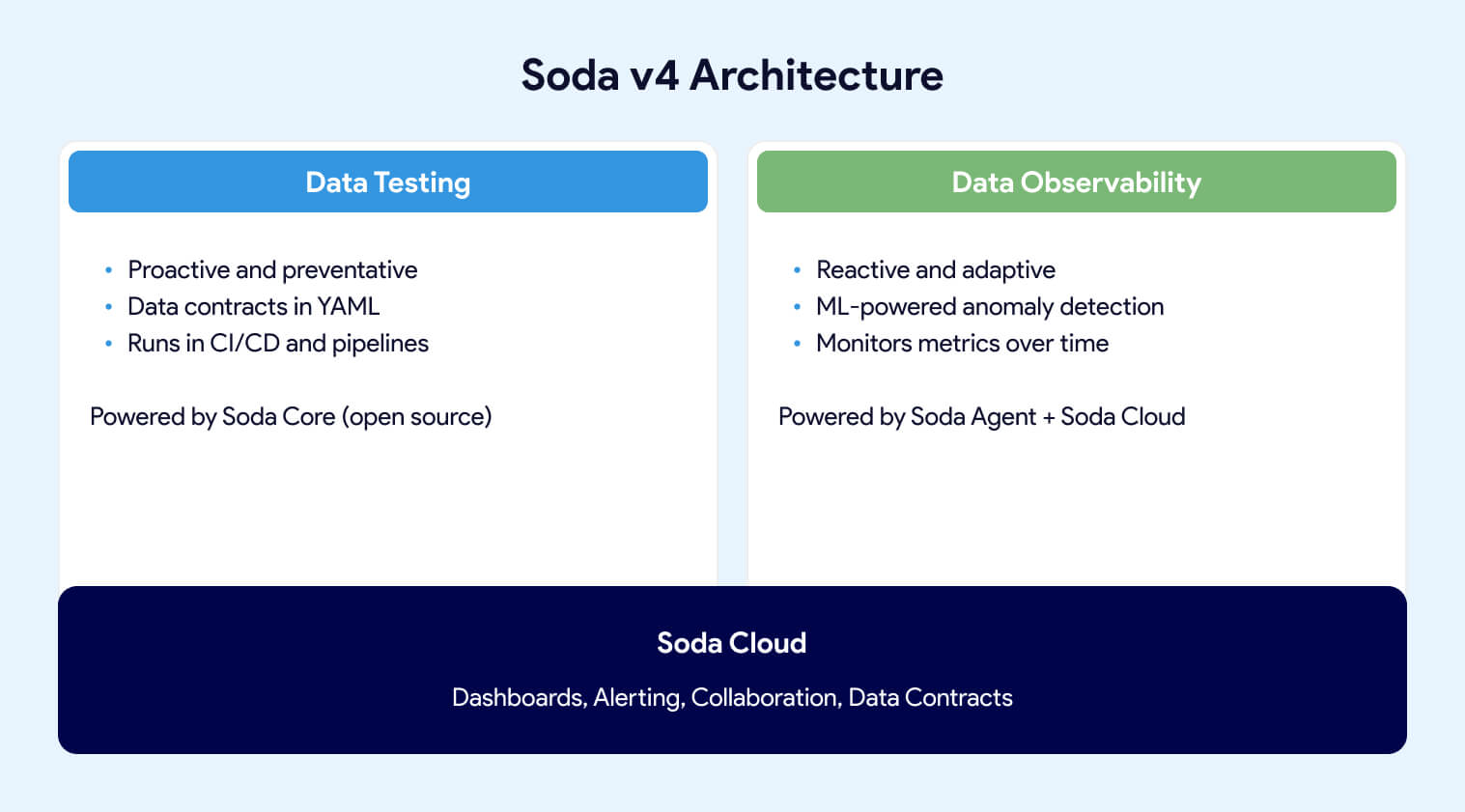

With the release of Soda v4, the platform has two pillars: Data Testing (proactive, contract-based validation) and Data Observability (reactive, ML-powered monitoring in production). This marks a shift from the earlier CLI-centric approach toward a unified data quality platform.

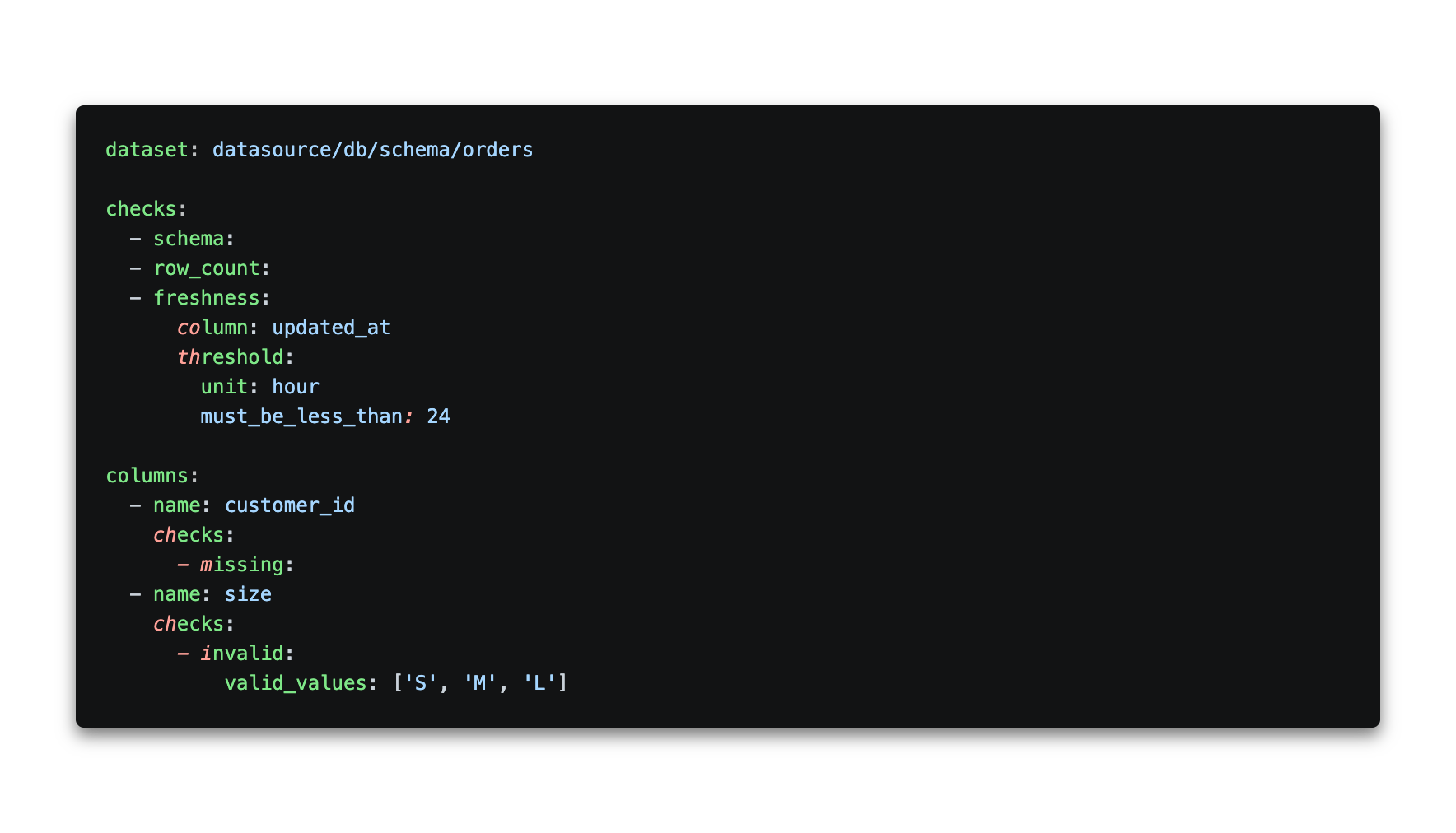

Soda v4 introduces a Contract Language, a YAML-based format for defining data quality expectations as enforceable agreements between data producers and consumers. A data contract looks like this:

Contracts are verified using Soda Core v4, the open-source Python engine that now functions as a Data Contract Engine. It runs contract verifications locally or in pipelines and supports 50+ built-in data quality checks. Soda Core v4 does not include observability features; those require Soda Cloud or a Soda Agent.
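To show what verifying a contract means in practice, here is a minimal plain-Python sketch. It does not use Soda's Contract Language or the Soda Core API. The contract structure, rule names, and sample rows are all hypothetical, chosen only to show expectations being declared once and then checked against data.

```python
def verify_contract(rows, contract):
    """Return a list of violations; an empty list means the data passes."""
    violations = []
    for column, rules in contract["columns"].items():
        values = [row.get(column) for row in rows]
        if rules.get("required") and any(v is None for v in values):
            violations.append(f"{column}: missing values")
        present = [v for v in values if v is not None]
        if rules.get("unique") and len(present) != len(set(present)):
            violations.append(f"{column}: duplicate values")
        allowed = rules.get("allowed")
        if allowed is not None and any(v not in allowed for v in present):
            violations.append(f"{column}: value outside allowed set")
    return violations


# Hypothetical contract agreed between a data producer and its consumers.
contract = {
    "dataset": "orders",
    "columns": {
        "order_id": {"required": True, "unique": True},
        "status": {"required": True, "allowed": {"open", "paid", "refunded"}},
    },
}

good_rows = [
    {"order_id": 1, "status": "paid"},
    {"order_id": 2, "status": "open"},
]
bad_rows = [
    {"order_id": 1, "status": "paid"},
    {"order_id": 1, "status": "shipped"},
    {"order_id": None, "status": "open"},
]

good_result = verify_contract(good_rows, contract)
bad_result = verify_contract(bad_rows, contract)
print(good_result)
print(bad_result)
```

The useful property is that the expectations live in one declarative object that both producers and consumers can read, while the verification logic stays generic.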

Teams still using SodaCL (the v3 check language) can continue doing so, but new development is centered on the Contract Language. SodaCL documentation is maintained under the Soda v3 docs.

Soda's deployment model has three tiers. Soda Core (open source) runs contract verifications in your pipelines. Soda-hosted Agent is a managed runner that adds observability, scheduling, and the ability to create checks from the Soda Cloud UI. Self-hosted Agent provides the same capabilities deployed in your own Kubernetes environment. Observability features (anomaly detection, metric trending, automated monitoring) require either Agent option plus Soda Cloud.

Soda Cloud is the commercial SaaS layer that adds dashboards, alerting (Slack, MS Teams, Jira, PagerDuty, ServiceNow), collaborative data contracts with role-based ownership, and a UI for both technical and non-technical users.

Soda isn't dbt-native. It works independently and can ingest dbt test results into Soda Cloud for visualization rather than replacing your dbt tests. It integrates with Airflow, Dagster, Prefect, and Azure Data Factory for orchestration, and with Atlan, Alation, and Collibra for data cataloging. It supports Snowflake, BigQuery, Redshift, Databricks, PostgreSQL, DuckDB, and more.

If data quality ownership needs to extend beyond your engineering team, or you need a warehouse-agnostic quality layer that works both inside and outside dbt, Soda is built for that. The producer/consumer contract model is its most meaningful distinction from Elementary.

Elementary and Soda work well when dbt is the center of your data stack. But many organizations run pipelines that span ingestion tools, multiple transformation layers, legacy ETL platforms, and BI tools that dbt never touches. When a data quality issue could originate anywhere in that chain, you need observability that covers the full stack, not just the dbt layer.

Monte Carlo is a commercial observability platform that connects directly to your warehouse and automatically learns the baseline behavior of your tables using ML. No manual threshold configuration, no YAML. It supports Snowflake, BigQuery, Redshift, and Databricks across all three clouds, plus data lakes via Hive and Glue metastores.

Where Elementary requires you to define each monitor, Monte Carlo deploys monitoring out of the box. It provides automated field-level lineage across your entire stack (not just dbt), integrates with Airflow, Fivetran, Azure Data Factory, Informatica, Databricks Workflows, Prefect, Looker, Tableau, and dbt, and includes centralized incident management.

In 2025, Monte Carlo launched Observability Agents: a Monitoring Agent that recommends and deploys monitors automatically based on data profiling, and a Troubleshooting Agent that investigates root causes by testing hundreds of hypotheses across related tables in parallel. Monte Carlo now also extends monitoring to AI agent inputs and outputs alongside traditional pipeline health.

Monte Carlo’s value compounds as your stack grows beyond dbt. For teams running primarily dbt workloads, the overhead and cost typically outweigh the benefits compared to Elementary. But for large, multi-tool platforms with SLA requirements and dedicated data reliability teams, Monte Carlo is purpose-built.

Bigeye is a commercial observability platform that differentiates on lineage depth. After acquiring Data Advantage Group, Bigeye offers end-to-end column-level lineage across both modern cloud warehouses and legacy ETL platforms including Informatica, Talend, SSIS, and IBM DataStage. That makes it a strong fit for enterprises running hybrid stacks where not everything lives in Snowflake or Databricks.

Bigeye provides 70+ data quality monitoring metrics with ML-powered anomaly detection, and supports join-based rules that validate data across tables in different databases. Recent additions include customizable data quality dimensions, PII/PHI detection for sensitive data classification, and an AI Trust platform that applies runtime enforcement to AI data policies.

If your observability needs span legacy ETL systems alongside modern cloud warehouses, or you need cross-database data quality rules and sensitive data detection, Bigeye covers territory that Monte Carlo and Elementary don’t.

Metaplane takes a different approach: self-service observability with minimal setup. Connect your warehouse, BI tool, and dbt repo, and Metaplane’s ML engine starts learning from your metadata and generating alerts within days. No manual thresholds, no engineering effort to configure. It was acquired by Datadog in 2025, positioning it as the bridge between application observability and data observability.

Metaplane provides anomaly detection, column-level lineage, schema change detection, and CI/CD support for dbt (impact previews and regression tests in PRs). It also offers a Snowflake native app that lets you pay with existing Snowflake credits.

Metaplane is optimized for modern cloud stacks. Its integrations cover the core of a typical modern data platform: Snowflake, BigQuery, Redshift, Databricks, ClickHouse, and S3 for warehouses and data lakes; PostgreSQL, MySQL, and SQL Server for transactional databases; Fivetran and Airbyte for ingestion; dbt Core and dbt Cloud for transformation; Airflow for orchestration; Census and Hightouch for reverse ETL; Looker, Tableau, Power BI, Metabase, Mode, Sigma, and Hex for BI; Slack and Jira for notifications.

The tradeoff is scope. Metaplane doesn't cover legacy ETL systems like Informatica, Talend, or SSIS, and its orchestration support is limited to Airflow. For teams with complex hybrid stacks, Bigeye or Monte Carlo may fit better. For modern cloud-native stacks where fast setup matters more than exhaustive coverage, Metaplane is hard to beat. Pricing starts with a free tier, with team plans scaling based on usage.

Great Expectations (GX) is the most widely used open-source Python framework for data validation. It’s not dbt-native and it’s not an observability platform. It’s a standalone validation engine for teams that need to define, execute, and document data quality checks across any Python-accessible data source.

GX Core (open source, Apache 2.0) lets you define “Expectations” (data assertions) and run them against Pandas DataFrames, Spark, or any database supported by SQLAlchemy. Results are rendered as auto-generated “Data Docs,” human-readable HTML documentation of what passed and failed. GX integrates with Airflow, Databricks, Snowflake, BigQuery, Redshift, PostgreSQL, and Microsoft Fabric.

GX Cloud (commercial) adds a web UI for managing expectations without code, scheduled validations, alerting, Data Health dashboards, and ExpectAI, which generates expectations from natural language prompts. Currently ExpectAI supports Snowflake, PostgreSQL, Databricks SQL, and Redshift.

The tradeoff is complexity. GX has a steeper learning curve than Elementary or Soda. Its architecture (DataContext, DataSources, ExpectationSuites, Checkpoints, Stores) requires more setup and conceptual overhead than adding a dbt package or writing SodaCL checks. For teams with strong Python skills who want deep, standalone validation across multiple data sources independent of dbt, it remains a solid choice. For dbt-centric teams, Elementary or Soda will get you to value faster.

The right tooling depends on where your team sits on the data quality maturity curve. A five-person analytics engineering team running 50 dbt models doesn’t need Monte Carlo. A platform team managing hundreds of models across multiple ingestion tools, transformation layers, and BI dashboards probably can’t get by with just Elementary.

For most dbt teams, the progression looks like this:

Already have dbt tests and packages? Add dbt-audit-helper for ad hoc data comparison when you refactor models or migrate from legacy SQL. It costs nothing and runs inside your project.

Merging dbt changes regularly and want a safety net? Add Recce if you want an open-source, developer-controlled workflow. Choose Datafold if you want fully automated diffing on every PR with lineage into BI tools.

Need to know when production data goes wrong between deploys? Deploy Elementary. It covers anomaly detection, test result history, and alerting with no infrastructure outside your warehouse.

Data quality ownership extends beyond engineering? Evaluate Soda for its human-readable checks and data contracts.

Stack extends well beyond dbt? Evaluate Monte Carlo for ML-based full-stack coverage, Bigeye for hybrid modern/legacy environments, or Metaplane for fast self-service setup on modern stacks.

These tools aren’t mutually exclusive. The strongest data teams typically run two or three: one for pre-production validation, one for production observability, and sometimes a commercial platform on top for cross-stack coverage. The maturity curve gives you the order. Don’t try to run before you’ve learned to walk.

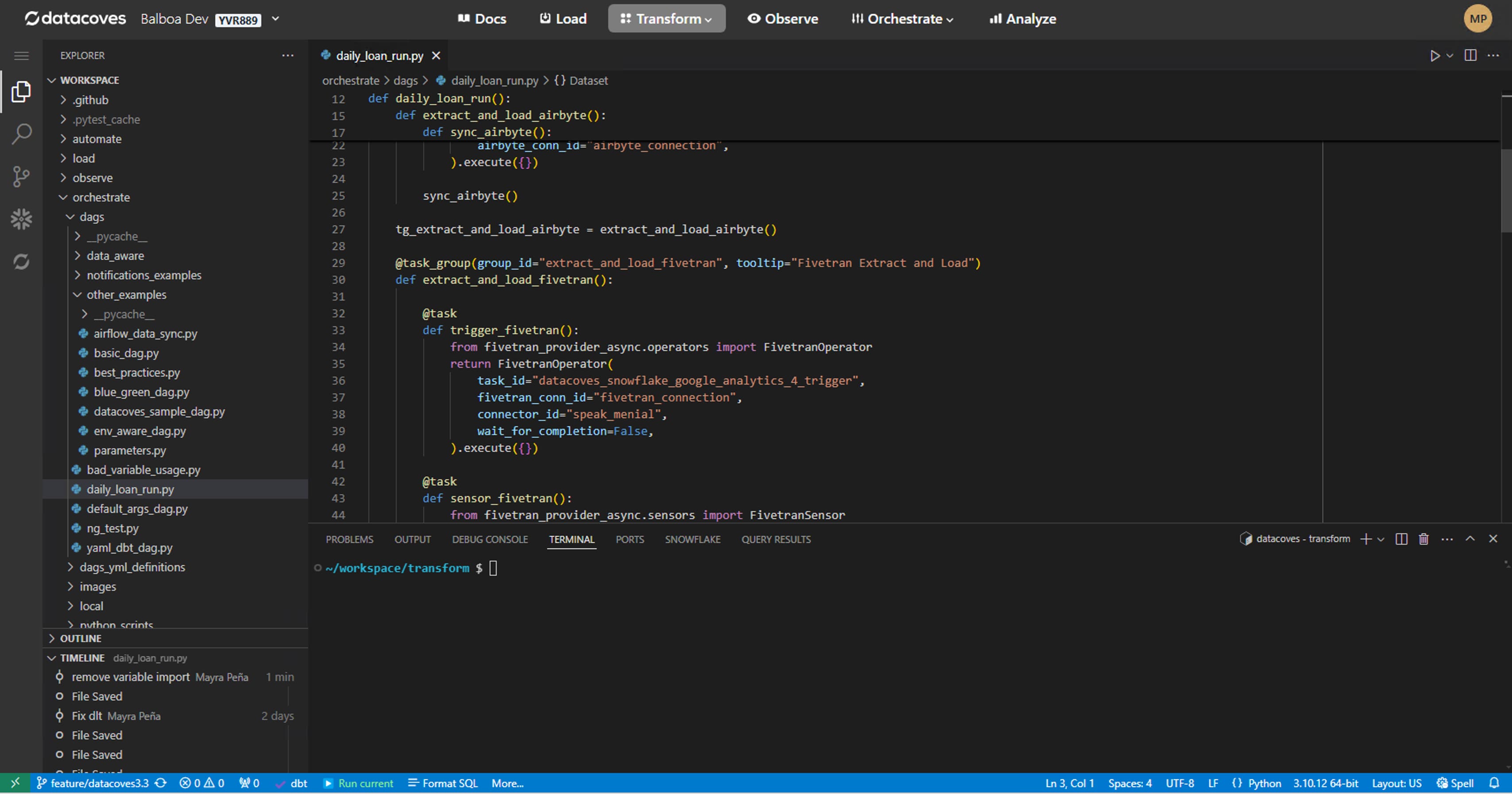

Datacoves doesn't bundle or pre-configure any of the tools in this guide. What it does is provide a managed dbt and Airflow environment that's compatible with all of them. If your team already uses Elementary, Soda, Recce, or any other package, Datacoves supports that workflow without getting in the way.

For example, if a client is running Elementary, they can keep running it inside the Datacoves environment. The same applies to Recce in CI/CD, dbt-audit-helper in development, or any other dbt package or external integration. Datacoves doesn't own or maintain these tools, but it ensures they work within a governed, orchestrated platform where your team can connect observability data to Airflow DAG runs, version control history, and deployment pipelines.

The value isn't in pre-installing packages. It's in providing the environment where these tools run reliably alongside everything else your data team needs.

If your dbt project has basic tests in place and you’re still getting surprised by data issues, you don’t need more tests. You need coverage at different points in the lifecycle.

Before merge: start with dbt-audit-helper for ad hoc comparison, then graduate to Recce or Datafold when your team needs automated PR-level validation.

After deployment: Elementary gives you production anomaly detection, test result history, and alerting inside your existing dbt workflow. It’s the lowest-friction path to observability for most teams.

Beyond dbt: if your stack spans ingestion tools, legacy ETL, and BI layers that dbt doesn’t touch, Monte Carlo, Bigeye, and Metaplane provide the cross-stack coverage. Soda and Great Expectations fit teams that need quality ownership or validation logic outside the dbt ecosystem.

The teams that build the most reliable data platforms aren’t the ones running the most tools. They’re the ones that picked the right tools for the right problems at the right stage of their maturity curve.

This guide is the companion to An Overview of Testing Options for dbt. If you haven’t built your test suite yet, start there. The tools in this article are most valuable when they sit on top of a solid testing foundation.

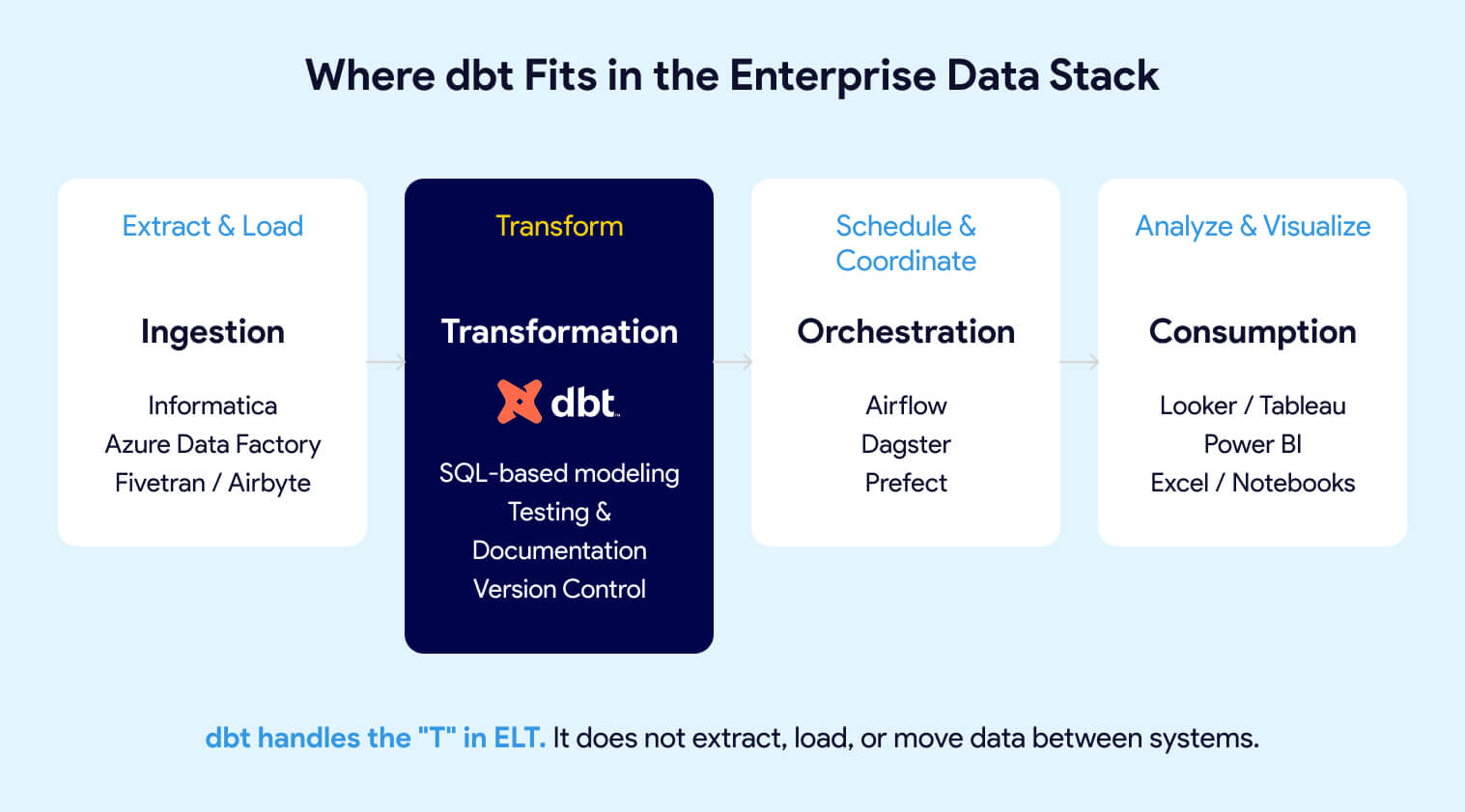

dbt (data build tool) is a SQL-based transformation framework that turns raw data into trusted, analytics-ready datasets directly inside your data warehouse. It brings software engineering discipline to analytics: version control, automated testing, CI/CD, and auto-generated documentation. dbt handles the "T" in ELT. It does not extract, load, or move data.

dbt focuses exclusively on the transformation layer of ELT (Extract, Load, Transform). Unlike traditional ETL tools that handle the entire pipeline, dbt assumes data already exists in your warehouse. Ingestion tools like Informatica, Azure Data Factory, or Fivetran load the raw data. dbt transforms it into trusted, analytics-ready datasets.

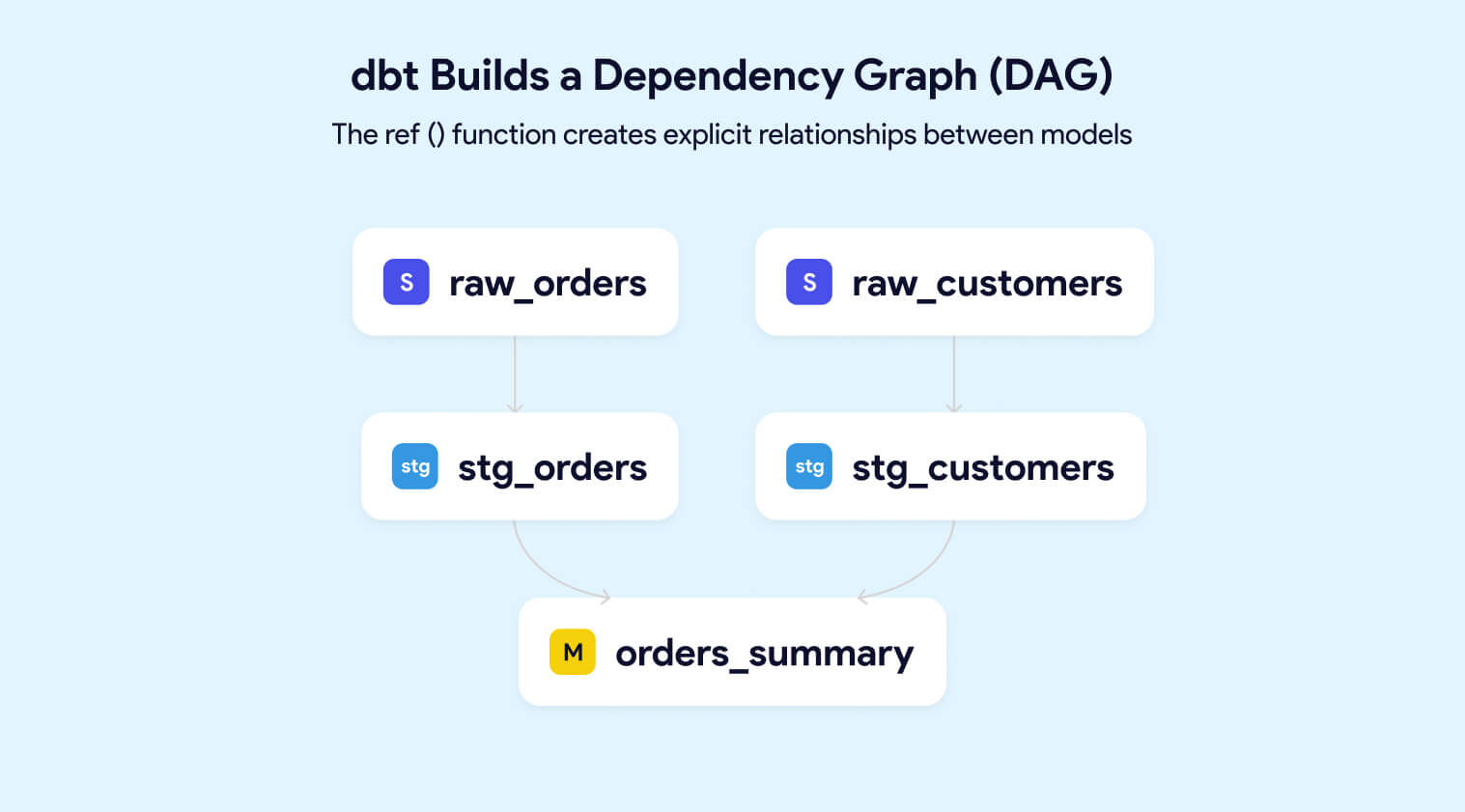

A dbt project consists of SQL files called models. Each model is a SELECT statement that defines a transformation. When you run dbt, it compiles these models, resolves dependencies, and executes the SQL directly in your warehouse. The results materialize as tables or views. Data never leaves your warehouse.

Example: A Simple dbt Model (models/marts/orders_summary.sql)

    SELECT
        customer_id,
        COUNT(*) AS total_orders,
        SUM(order_amount) AS lifetime_value,
        MIN(order_date) AS first_order_date
    FROM {{ ref('stg_orders') }}
    GROUP BY customer_id

The {{ ref('stg_orders') }} syntax creates an explicit dependency. dbt uses these references to build a dependency graph (DAG) of your entire pipeline, ensuring models run in the correct order.
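How ref() calls turn into an execution order can be sketched in a few lines of Python. This is a simplified illustration, not dbt's actual parser: the regular expression, the model names, and the SQL strings are hypothetical, and real dbt resolves much more (sources, macros, packages).

```python
import re
from graphlib import TopologicalSorter

# Matches {{ ref('model_name') }} with optional whitespace and either quote style.
REF_PATTERN = re.compile(r"\{\{\s*ref\(\s*['\"]([^'\"]+)['\"]\s*\)\s*\}\}")

# Hypothetical project: model name -> model SQL.
models = {
    "stg_orders": "SELECT * FROM raw.orders",
    "stg_customers": "SELECT * FROM raw.customers",
    "orders_summary": (
        "SELECT customer_id, COUNT(*) AS total_orders "
        "FROM {{ ref('stg_orders') }} GROUP BY customer_id"
    ),
    "customer_360": (
        "SELECT * FROM {{ ref('stg_customers') }} "
        "JOIN {{ref('orders_summary')}} USING (customer_id)"
    ),
}

# Each model depends on the models it references.
dependencies = {name: set(REF_PATTERN.findall(sql)) for name, sql in models.items()}

# A topological sort yields an order in which every dependency runs first.
run_order = list(TopologicalSorter(dependencies).static_order())
print(run_order)
```

Models with no dependency on each other (the two staging models here) can appear in either order, which is also why they can safely run in parallel.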

For large datasets, dbt supports incremental models that process only new or changed data. This keeps pipelines fast and warehouse costs controlled as data volumes grow.
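The core idea of incremental processing is a high-water mark: find the newest record already in the target, then process only source records that are newer. The Python sketch below shows that idea with hypothetical data and function names. It is append-only and is not dbt's incremental materialization, which runs as SQL in your warehouse and can also update existing rows.

```python
def incremental_load(target, source, timestamp_key):
    """Append only source rows newer than the target's latest timestamp."""
    high_water_mark = max((row[timestamp_key] for row in target), default=None)
    new_rows = [
        row for row in source
        if high_water_mark is None or row[timestamp_key] > high_water_mark
    ]
    target.extend(new_rows)
    return len(new_rows)


# Hypothetical target table and source data; ISO dates compare correctly as strings.
target = [
    {"id": 1, "loaded_at": "2025-01-01"},
    {"id": 2, "loaded_at": "2025-01-02"},
]
source = [
    {"id": 1, "loaded_at": "2025-01-01"},
    {"id": 2, "loaded_at": "2025-01-02"},
    {"id": 3, "loaded_at": "2025-01-03"},
    {"id": 4, "loaded_at": "2025-01-04"},
]

first_run = incremental_load(target, source, "loaded_at")
second_run = incremental_load(target, source, "loaded_at")
print(first_run, second_run, len(target))
```

The second run processes nothing because no source row is newer than the high-water mark. That is the property that keeps run time proportional to new data rather than total data.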

With dbt, teams can:

dbt handles the "T" in ELT. It does not extract, load, or move data between systems.

Misaligned expectations are a primary cause of failed dbt implementations. Knowing what dbt does not do matters as much as knowing what it does.

This separation of concerns is intentional. By focusing exclusively on transformation, dbt allows enterprises to evolve their ingestion, orchestration, and visualization layers independently. You can swap Informatica for Azure Data Factory or migrate from Redshift to Snowflake without rewriting your business logic.

dbt is a tool, not a strategy. Organizations with unclear data ownership, no governance framework, or misaligned incentives will not solve those problems by adopting dbt. They will simply have the same problems with versioned SQL.

For a deeper comparison, see dbt vs Airflow: Which data tool is best for your organization?

More than 30,000 companies use dbt weekly, including JetBlue, HubSpot, Roche, J&J, Block, and Nasdaq (dbt Labs, 2024 State of Analytics Engineering).

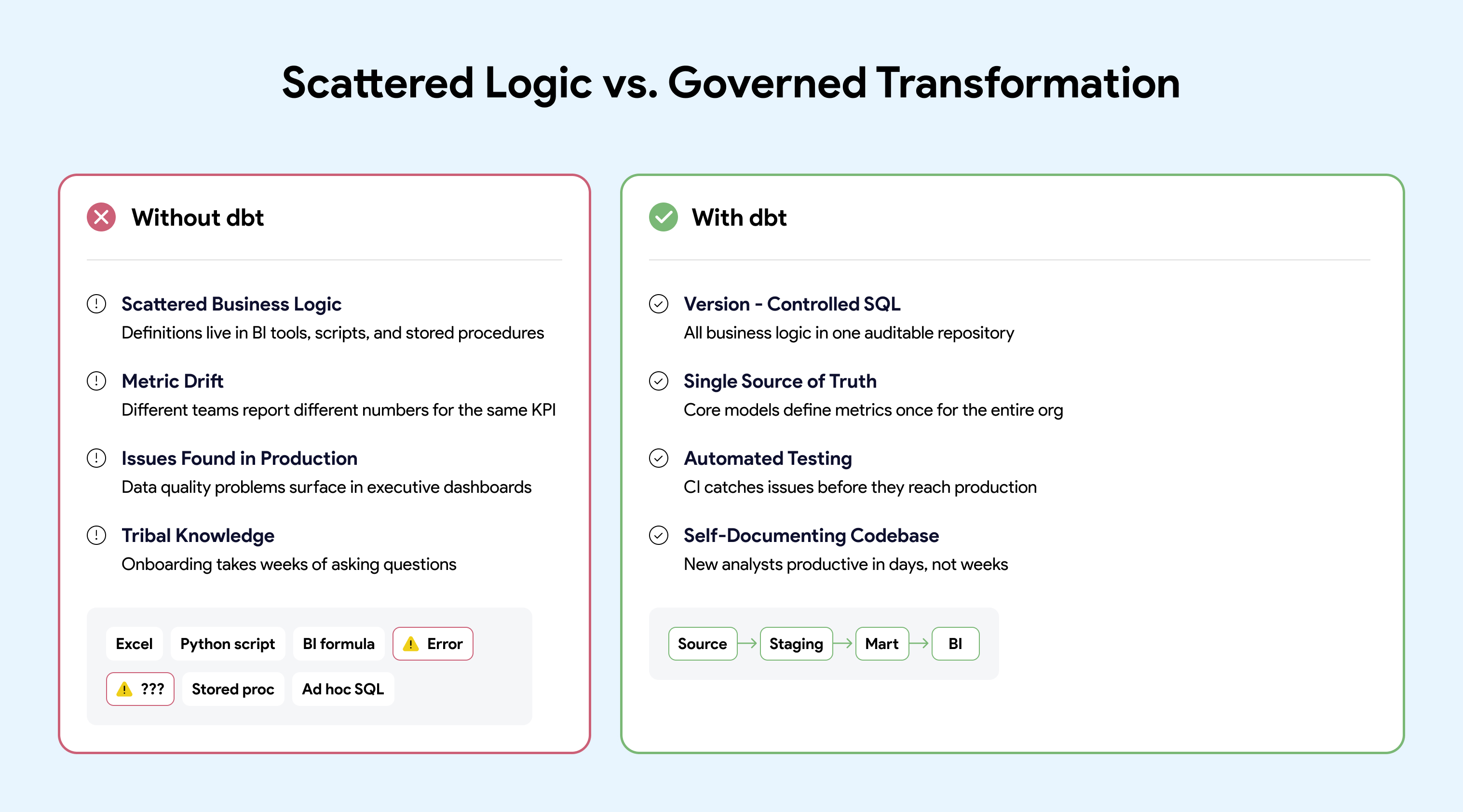

Enterprise adoption of dbt has accelerated because it solves problems that emerge specifically at scale. Small teams can manage transformation logic in spreadsheets and ad hoc scripts. At enterprise scale, that approach creates compounding risk.

dbt has moved well beyond startups into regulated, enterprise environments:

Life Sciences: Roche, Johnson & Johnson (See how J&J modernized their data stack with dbt), and pharmaceutical companies with strict compliance requirements

These are not proof-of-concept deployments. These are production systems powering executive dashboards, regulatory reporting, and customer-facing analytics.

Without a standardized transformation layer, enterprise analytics fails in predictable ways:

Organizations report that 45% of analyst time is spent finding, understanding, and fixing data quality issues (Gartner Data Quality Market Survey, 2023).

dbt addresses these problems by treating transformation logic as production code:

One of the most underappreciated reasons enterprises adopt dbt is leverage. dbt is not just a transformation framework. It sits at the center of a broad ecosystem that reduces implementation risk and accelerates delivery.

dbt packages are reusable projects available at hub.getdbt.com. They provide pre-built tests, macros, and modeling patterns that let teams leverage proven approaches instead of building from scratch.

Popular packages include:

Using packages signals operational maturity. It reflects a preference for shared, tested patterns over bespoke solutions that create maintenance burden. Mature organizations also create internal packages they can share across teams to leverage learnings across the company.

dbt integrates with the broader data stack through its rich metadata (lineage, tests, documentation):

Because dbt produces machine-readable metadata, it acts as a foundation that other tools build on. This makes dbt a natural anchor point for enterprise data platforms.

The dbt Slack community has 100,000+ members sharing patterns, answering questions, and debugging issues (dbt Labs Community Stats, 2024).

For enterprises, community size matters because:

When you adopt dbt, you are not just adopting a tool. You are joining an ecosystem with momentum.

A typical dbt workflow follows software engineering practices familiar to any developer:

    models:
      - name: orders_summary
        description: "Customer-level order aggregations"
        columns:
          - name: customer_id
            description: "Primary key from source system"
            tests:
              - unique
              - not_null
          - name: lifetime_value
            description: "Sum of all order amounts in USD"

For executives and data leaders, dbt is less about SQL syntax and more about risk reduction and operational efficiency.

Organizations implementing dbt with proper DataOps practices report:

dbt supports enterprise governance requirements by making transformations explicit and auditable:

The question for enterprise leaders is not "Should we use dbt?" The question is "How do we operate dbt as production infrastructure?"

dbt Core is open source, and many teams start by running it on a laptop. But open source looks free the way a free puppy looks free. The cost is not in the acquisition. The cost is in the care and feeding.

For a detailed comparison, see Build vs Buy Analytics Platform: Hosting Open-Source Tools.

The hard part is not installing dbt. The complexity comes from everything around it:

Building your own dbt platform is like wiring your own home: possible, but very few teams should. Most enterprises find that building and maintaining this infrastructure becomes a distraction from their core mission of delivering data products.

dbt delivers value when supported by clear architecture, testing standards, CI/CD automation, and a platform that enables teams to work safely at scale.

Skip the Infrastructure. Start Delivering.

Datacoves provides managed dbt and Airflow deployed in your private cloud, with pre-built CI/CD, VS Code environments, and best-practice architecture out of the box. Your data never leaves your network. No VPC peering required.


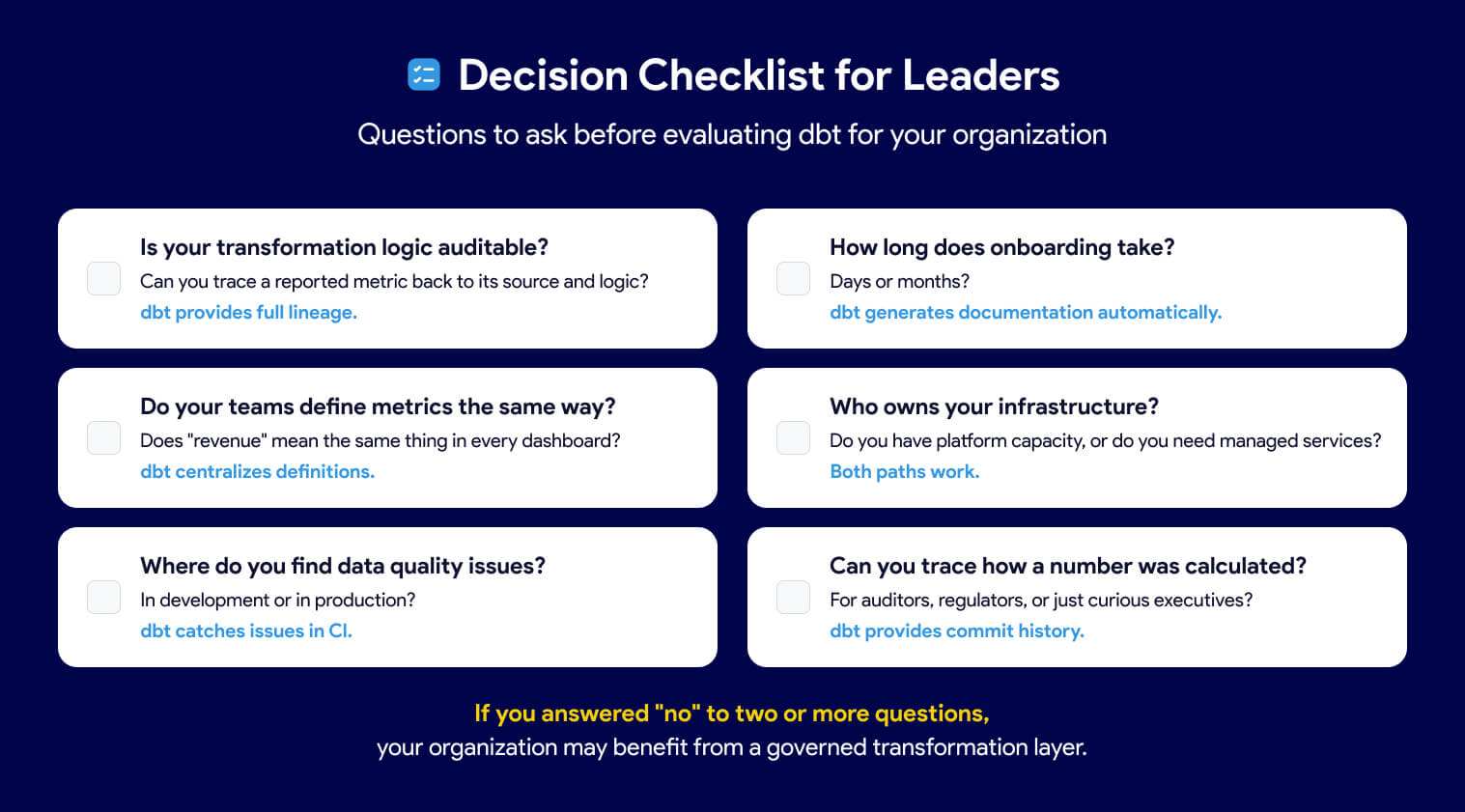

Before adopting or expanding dbt, leaders should ask:

Is your transformation logic auditable? If business rules live in dashboards, stored procedures, or tribal knowledge, the answer is no. dbt makes every transformation visible, version-controlled, and traceable.

Do your teams define metrics the same way? If "revenue" or "active user" means different things to different teams, you have metric drift. dbt centralizes definitions in code so everyone works from a single source of truth.

Where do you find data quality issues? If problems surface in executive dashboards instead of in daily data quality checks, you lack automated testing. dbt runs tests on every build, catching issues before they reach end users.

How long does onboarding take? If new analysts spend weeks decoding tribal knowledge, your codebase is not self-documenting. dbt generates documentation and lineage automatically from code.

Who owns your infrastructure? Decide whether your engineers should be building platforms or building models. Operating dbt at scale requires CI/CD, orchestration, environments, and security. That work must live somewhere.

Can you trace how a number was calculated? If auditors or regulators ask how a reported figure was derived, you need full lineage from source to dashboard. dbt provides that traceability by design.

dbt has become the standard for enterprise data transformation because it makes business logic visible, testable, and auditable. But the tool alone is not the strategy. Organizations that treat dbt as production infrastructure, with proper orchestration, CI/CD, and governance, unlock its full value. Those who skip the foundation often find themselves rebuilding later.

Ready to skip the infrastructure complexity? See how Datacoves helps enterprises operate dbt at scale

The merger of dbt Labs and Fivetran (which we refer to as dbt Fivetran for simplicity) represents a new era in enterprise analytics. The combined company is expected to create a streamlined, end-to-end data workflow consolidating data ingestion, transformation, and activation with the stated goal of reducing operational overhead and accelerating delivery. Yet, at the dbt Coalesce conference in October 2025 and in ongoing conversations with data leaders, many are voicing concerns about price uncertainty, reduced flexibility, and the long-term future of dbt Core.

As enterprises evaluate the implications of this merger, understanding both the opportunities and risks is critical for making informed decisions about their organization's long-term analytics strategy.

In this article, you’ll learn:

1. What benefits could the dbt Fivetran merger offer enterprise data teams

2. Key risks and lessons from past open-source acquisitions

3. How enterprises can manage risks and challenges

4. Practical steps dbt Fivetran can take to address community anxiety

For enterprise data teams, the dbt Fivetran merger may bring compelling opportunities:

1. Integrated Analytics Stack:

The combination of ingestion, transformation, and activation (reverse ETL) processes may enhance onboarding by streamlining contract management, security evaluations, and user training.

2. Resource Investment:

The merged company has the potential to speed up feature development across the data landscape. Open data standards like Iceberg could see increased adoption, fostering interoperability between platforms such as Snowflake and Databricks.

While these prospects are enticing, they are not guaranteed. The newly formed organization now faces the non-trivial task of merging various teams, including Fivetran, HVR (Oct 2021), Census (May 2025), SQLMesh/Tobiko (Sept 2025), and dbt Labs (Oct 2025). Successfully integrating their tools, development practices, and support functions will be crucial. To create a truly seamless, end-to-end platform, alignment of product roadmaps, engineering standards, and operational processes will be necessary. Enterprises should carefully assess the execution risks when considering the promised benefits of this merger, as these advantages hinge on Fivetran's ability to effectively integrate these technologies and teams.

The future openness and flexibility of dbt Core is being questioned, with significant consequences for enterprise data teams that rely on open-source tooling for agility, security, and control.

dbt’s rapid adoption, now exceeding 80,000 projects, was fueled by its permissive Apache License and a vibrant, collaborative community. This openness allowed organizations to deploy, customize, and extend dbt to fit their needs, and enabled companies like Datacoves to build complementary tools, sponsor open-source projects, and simplify enterprise data workflows.

However, recent moves by dbt Labs, accelerated by the Fivetran merger, signal a natural evolution toward monetization and enterprise alignment:

1. Licensing agreement with Snowflake

2. Rewriting dbt Core as dbt Fusion under a more restrictive ELv2 license

3. Introducing a “freemium” model for the dbt VS Code Extension, limiting free use to 15 registered users per organization

While these steps are understandable from a business perspective, they introduce uncertainty and anxiety within the data community. The risk is that the balance between open innovation and commercial control could tip, raising understandable questions about long-term flexibility that enterprises have come to expect from dbt Core.

dbt Labs and Fivetran have both stated that dbt Core's license will not change, and I believe them. The vast majority of dbt users are using dbt Core, and changing the license risks fragmentation and loss of goodwill in the community. That said, the future vision for dbt is not dbt Core, but dbt Fusion.

While I see a future for dbt Core, I don't feel the same about SQLMesh. There is little chance that the dbt Fivetran organization would continue to invest in two open-source projects. It is also unlikely that SQLMesh innovations would make their way into dbt Core, as that would directly compete with dbt Fusion.

Recent history offers important cautionary tales for enterprises. While not a direct parallel, it’s worth learning from:

1. Terraform: A license change led to fragmentation and the creation of OpenTofu, eroding trust in the original steward.

2. Elasticsearch: License restrictions resulted in the OpenSearch fork, dividing the community and increasing support risks.

3. Redis and MongoDB: Similar license shifts caused forks or migrations to alternative solutions, increasing risk and migration costs.

For enterprise data leaders, these precedents highlight the dangers of vendor fragmentation, increased migration costs, and uncertainty around long-term support. When foundational tools become less open, organizations may face difficult decisions about adapting, migrating, or seeking alternatives. If you're considering your options, check out our Platform Evaluation Worksheet.

On the other hand, there are successful models where open-source projects and commercial offerings coexist and thrive:

1. Airflow: Maintains a permissive license, with commercial providers offering managed services and enterprise features.

2. GitLab, Spark, and Kafka: Each has built a sustainable business around a robust open-source core, monetizing through value-added services and features.

These examples show that a healthy open-source core, supported by managed services and enterprise features, can benefit all stakeholders, provided the commitment to openness remains.

To navigate the evolving landscape, enterprises should:

1. Monitor licensing and governance changes closely.

2. Engage in community and governance discussions to advocate for transparency.

3. Plan for contingencies, including potential migration or multi-vendor strategies.

4. Diversify by avoiding over-reliance on a single vendor or platform.

Avoid Vendor Lock-In:

1. Continue to leverage multiple tools for data ingestion and orchestration (e.g., Airflow) instead of relying solely on a single vendor’s stack.

2. Why? This preserves your ability to adapt as technology and vendor priorities evolve. While tighter tool integration is a potential promise of consolidation, options exist to reduce the burden of a multi-tool architecture.

For instance, Datacoves is built to help enterprises maintain governance, reliability, and freedom of choice to deploy securely in their own network, specifically supporting multi-tool architectures and open standards to minimize vendor lock-in risk.

Demand Roadmap Transparency:

1. Engage with your vendors about their product direction and advocate for community-driven development.

2. Why? Transparency helps align vendor decisions with your business needs and reduces the risk of disruptive surprises.

Participate in Open-Source Communities:

1. Contribute to and help maintain the open-source projects that underpin your data platform.

2. Why? Active participation ensures your requirements are heard and helps sustain the projects you depend on.

Attend and Sponsor Diverse Conferences:

1. Support and participate in community-driven events (such as Airflow Summit) to foster innovation and avoid concentration of influence.

2. Why? Exposure to a variety of perspectives leads to stronger solutions and a healthier ecosystem.

Support OSS Creators Financially and Through Advocacy:

1. Sponsor projects or directly support maintainers of critical open-source tools.

2. Why? Sustainable funding and engagement are vital for the health and reliability of the open-source ecosystem.

Encourage Openness and Diversity

1. Champion Diversity in OSS Governance: Advocate for broad, meritocratic project leadership and a diverse contributor base.

2. Why? Diverse stewardship drives innovation, resilience, and reduces the risk of any one entity dominating the project’s direction.

Long-term analytics success isn’t just about technology selection. It’s about actively shaping the ecosystem through strategic diversification, transparent vendor engagement, and meaningful support of open standards and communities. Enterprises that invest in these areas will be best equipped to thrive, no matter how the vendor landscape evolves.

While both dbt Labs and Fivetran have stated that the dbt Core license would remain permissive, to preserve trust and innovation in the data community, dbt Fivetran should commit to neutral governance and open standards for dbt Core, ensuring it remains a true foundation for collaboration, not fragmentation.

The dbt community has powered a remarkable flywheel of innovation, career growth, and ecosystem expansion. Disrupting this momentum risks technical fragmentation and loss of goodwill, outcomes that benefit no one in the analytics landscape.

To maintain community trust and momentum, dbt Fivetran should:

1. Establish Neutral Governance:

Place dbt Core under independent oversight, where its roadmap is shaped by a diverse set of contributors, not just a single commercial entity. Projects like Iceberg have shown that broad-based governance sustains engagement and innovation, compared to more vendor-driven models like Delta Lake.

2. Consider Neutral Stewardship Models:

One possible long-term approach that has been seen in projects like Iceberg and OpenTelemetry is to place an open-source core under neutral foundation governance (for example, the Linux Foundation or Apache Software Foundation).

While dbt Labs and Fivetran have both reaffirmed their commitment to keeping dbt Core open, exploring such models in the future could further strengthen community trust and ensure continued neutrality as the platform evolves.

3. Encourage Meritocratic Development: Empower a core team representing the broader community to guide dbt Core’s future. This approach minimizes the risk of forks and fragmentation and ensures that innovation is driven by real-world needs.

4. Apply Lessons from MetricFlow: When dbt Labs acquired MetricFlow and changed its license to BSL, it led to further fragmentation in the semantic layer space. Now, with MetricFlow relicensed as Apache and governed by the Open Semantic Interchange (OSI) initiative (including dbt Labs, Snowflake, and Tableau), the project is positioned as a vendor-neutral standard. This kind of model should be considered for dbt Core as well.

1. Technical teams: By ensuring continued access to an open, extensible framework, and reducing the risk of disruptive migration.

2. Business leaders: By protecting investments in analytics workflows and minimizing vendor lock-in or unexpected costs.

Solidifying dbt Core as a true open standard benefits the entire ecosystem, including dbt Fivetran, which is building its future, dbt Fusion, on this foundation. Taking these steps would not only calm community anxiety but also position dbt Fivetran as a trusted leader for the next era of enterprise analytics.

The dbt Fivetran merger represents a defining moment for the modern data stack, promising streamlined workflows while simultaneously raising critical questions about vendor lock-in, open-source governance, and long-term flexibility. Successfully navigating this shift requires a proactive, diversified strategy, one that champions open standards and avoids over-reliance on any single vendor. Enterprises that invest in active community engagement and robust contingency planning will be best equipped to maintain control and unlock maximum value from their analytics platforms.

If your organization is looking for a way to mitigate these risks and secure your workflows with enterprise-grade governance and multi-tool architecture, Datacoves offers a managed platform designed for maximum flexibility and control. For a deeper look, find out what Datacoves has to offer.

Ready to take control of your data future? Contact us today to explore how Datacoves allows organizations to take control while still simplifying platform management and tool integration.

In Apache Airflow, scheduling workflows has traditionally been managed using the schedule_interval parameter, which accepts definitions such as cron expressions or timedelta objects to establish time-based intervals for DAG (Directed Acyclic Graph) executions. Airflow was already a powerful scheduler, and it became even more efficient with the introduction of Datasets. This enhancement enables data-driven DAG execution, allowing workflows to be triggered by specific data updates rather than by predetermined time intervals.

In this article, we'll dive into the concept of Airflow datasets, explore their transformative impact on workflow orchestration, and provide a step-by-step guide to schedule your DAGs using Datasets!

DAG scheduling in Airflow was primarily time-based, relying on parameters like schedule_interval and start_date to define execution times. With this setup, there were three ways to schedule your DAGs: cron expressions, presets, or timedelta objects. Let's examine each one.

Cron expressions: A standard cron string sets the schedule. For example, schedule_interval='5 4 * * *' runs the DAG every day at 04:05.

Presets: Airflow provides named shortcuts for common schedules.

@hourly: Runs the DAG at the beginning of every hour.

@daily: Runs the DAG at midnight every day.

@weekly: Runs the DAG at midnight on the first day of the week.

@monthly: Runs the DAG at midnight on the first day of the month.

@yearly: Runs the DAG at midnight on January 1st.

Timedelta objects: A timedelta sets a fixed gap between runs. For example, schedule_interval=timedelta(hours=6) would schedule the DAG every six hours.

While effective for most jobs, time-based scheduling had some limitations:

Fixed Timing: DAGs ran at predetermined times, regardless of data readiness (this is the key to Datasets). If data wasn't available at the scheduled time, tasks could fail or process incomplete data.

Sensors and Polling: To handle data dependencies, sensors were employed to wait for data availability. However, sensors often relied on continuous polling, which could be resource-intensive and lead to inefficiencies.

Airflow Datasets were created to overcome these scheduling limitations.
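To make the three time-based options above concrete, here is a minimal sketch that runs without Airflow. The `describe_cron` helper and the `PRESET_TO_CRON` table are illustrative names of our own, not Airflow imports; the cron equivalents simply restate the preset meanings listed earlier.

```python
from datetime import timedelta

# Cron equivalents of the presets described above (illustrative table).
PRESET_TO_CRON = {
    "@hourly": "0 * * * *",
    "@daily": "0 0 * * *",
    "@weekly": "0 0 * * 0",
    "@monthly": "0 0 1 * *",
    "@yearly": "0 0 1 1 *",
}

CRON_FIELDS = ("minute", "hour", "day_of_month", "month", "day_of_week")


def describe_cron(expression: str) -> dict:
    """Split a five-field cron expression (or a preset) into named fields."""
    expression = PRESET_TO_CRON.get(expression, expression)
    parts = expression.split()
    if len(parts) != 5:
        raise ValueError(f"expected 5 cron fields, got {len(parts)}: {expression!r}")
    return dict(zip(CRON_FIELDS, parts))


# '5 4 * * *' means minute 5 of hour 4, every day.
print(describe_cron("5 4 * * *"))
print(describe_cron("@daily"))

# A timedelta schedule is just a fixed gap between runs.
every_six_hours = timedelta(hours=6)
print(every_six_hours.total_seconds() / 3600)
```

All three forms answer the same question, "when should this run?", and none of them knows whether the data is ready.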

A Dataset is a way to represent a specific set of data. Think of it as a label or reference to a particular data resource. This can be anything: a CSV file, an S3 bucket, or a SQL table. A Dataset is defined by passing a string path to the Dataset() object. This path acts as an identifier. It doesn't have to be a real file or URL, but it should be consistent, unique, and ideally in ASCII format (plain English letters, numbers, slashes, underscores, etc.).

    from airflow.datasets import Dataset

    my_dataset = Dataset("s3://my-bucket/my-data.csv")

    # or

    my_dataset = Dataset("my_folder/my_file.txt")

When using Airflow Datasets, remember that Airflow does not monitor the actual contents of your data. It doesn’t check if a file or table has been updated.

Instead, it tracks task completion. When a task that lists a Dataset in its outlets finishes successfully, Airflow marks that Dataset as “updated.” This means the task doesn’t need to actually modify any data — even a task that only runs a print() statement will still trigger any Consumer DAGs scheduled on that Dataset. It’s up to your task logic to ensure the underlying data is actually being modified when necessary. Even though Airflow isn’t checking the data directly, this mechanism still enables event-driven orchestration because your workflows can run when upstream data should be ready.

For example, if one DAG has a task that generates a report and writes it to a file, you can define a Dataset for that file. Another DAG that depends on the report can be triggered automatically as soon as the first DAG’s task completes. This removes the need for rigid time-based scheduling and reduces the risk of running on incomplete or missing data.
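The point that Airflow reacts to task completion rather than to the data itself can be shown with a toy model. This is a simplified sketch, not Airflow's implementation: `ToyScheduler`, `schedule_on`, and `run_task` are hypothetical names invented for illustration.

```python
from collections import defaultdict


class ToyScheduler:
    """A toy stand-in for the scheduler; it never inspects the data itself."""

    def __init__(self):
        self.consumers = defaultdict(list)  # dataset URI -> consumer DAG ids
        self.triggered = []                 # consumer runs queued so far

    def schedule_on(self, dag_id, dataset_uri):
        """Register a consumer DAG against a dataset URI."""
        self.consumers[dataset_uri].append(dag_id)

    def run_task(self, func, outlets=()):
        """Run a task; only on success is each outlet treated as updated."""
        try:
            func()
        except Exception:
            return False  # failed task: no dataset update, no consumer runs
        for uri in outlets:
            self.triggered.extend(self.consumers[uri])
        return True


def failing_export():
    raise RuntimeError("upstream export failed")


scheduler = ToyScheduler()
scheduler.schedule_on("consumer_dag", "/path/to/report.csv")

# This task only prints, yet the consumer is still triggered.
scheduler.run_task(lambda: print("no data written"), outlets=["/path/to/report.csv"])
print(scheduler.triggered)

# A failing task produces no update and triggers nothing new.
scheduler.run_task(failing_export, outlets=["/path/to/report.csv"])
print(scheduler.triggered)
```

The matching is done purely on the URI string, which is why the producer and consumer must reference the identical path.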

Datasets give you a new way to schedule your DAGs: based on when upstream work completes, not just on a time interval. Rather than relying only on schedule_interval, you can use the schedule parameter, which Airflow introduced to support both time-based and dataset-driven workflows. When a producer task finishes and "updates" a dataset, any DAGs that depend on that dataset can be triggered automatically. And if you want even more control, you can update your Dataset externally using the Airflow API.
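As a rough sketch of the external update mentioned above, the snippet below builds (but does not send) an HTTP request that reports a dataset update. It assumes an Airflow version whose stable REST API exposes a dataset-event endpoint (2.9 or later, to the best of our knowledge); the endpoint path and payload shape should be checked against the API reference for your version. The host name and the `build_dataset_event_request` helper are hypothetical.

```python
import json
from urllib.request import Request


def build_dataset_event_request(base_url, dataset_uri, headers=None):
    """Build (but do not send) a request that reports a dataset update."""
    body = json.dumps({"dataset_uri": dataset_uri}).encode("utf-8")
    request = Request(
        url=f"{base_url.rstrip('/')}/api/v1/datasets/events",  # assumed endpoint
        data=body,
        method="POST",
    )
    request.add_header("Content-Type", "application/json")
    for name, value in (headers or {}).items():
        request.add_header(name, value)  # e.g. an Authorization header
    return request


request = build_dataset_event_request(
    "https://airflow.example.com",  # hypothetical host
    "/path/to/your_dataset.csv",    # must match the URI the consumer is scheduled on
)
print(request.get_method(), request.full_url)
# Sending it would be: urllib.request.urlopen(request)
```

Authentication is deliberately left out because it depends on how your Airflow deployment is configured.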

When using Datasets in Airflow, you'll typically work with two types of DAGs: Producer and Consumer DAGs.

Producer DAG: A DAG responsible for defining and "updating" a specific Dataset. We say "updating" because Airflow considers a Dataset "updated" simply when a task that lists it in its outlets completes successfully, regardless of whether the data was truly modified.

A Producer DAG:

✅ Must have the Dataset variable defined or imported

✅ Must include a task with the outlets parameter set to that Dataset

Consumer DAG: A DAG that is scheduled to execute once the Producer DAG successfully completes.

A Consumer DAG:

✅ Must reference the same Dataset using the schedule parameter

It’s this producer-consumer relationship that enables event-driven scheduling in Airflow — allowing workflows to run as soon as the data they're dependent on is ready, without relying on fixed time intervals.

1. Define your Dataset.

In a new DAG file, define a variable using the Dataset object and pass in the path to your data as a string. In this example, it’s the path to a CSV file.

    # producer.py
    from airflow.datasets import Dataset

    # Define the dataset representing the CSV file
    csv_dataset = Dataset("/path/to/your_dataset.csv")

2. Create a DAG with a task that updates the CSV dataset.

We’ll use the @dag and @task decorators for a cleaner structure. The key part is passing the outlets parameter to the task. This tells Airflow that the task updates a specific dataset. Once the task completes successfully, Airflow will consider the dataset "updated" and trigger any dependent DAGs.

We’re also using csv_dataset.uri to get the path to the dataset—this is the same path you defined earlier (e.g., "/path/to/your_dataset.csv").

    # producer.py
    from airflow.decorators import dag, task
    from airflow.datasets import Dataset
    from datetime import datetime
    import pandas as pd
    import os

    # Define the dataset representing the CSV file
    csv_dataset = Dataset("/path/to/your_dataset.csv")

    @dag(
        dag_id='producer_dag',
        start_date=datetime(2025, 3, 31),
        schedule='@daily',
        catchup=False,
    )
    def producer_dag():

        # Listing the dataset in outlets marks it as updated when this task succeeds
        @task(outlets=[csv_dataset])
        def update_csv():
            data = {'column1': [1, 2, 3], 'column2': ['A', 'B', 'C']}
            df = pd.DataFrame(data)
            file_path = csv_dataset.uri

            # Check if the file exists to append or write
            if os.path.exists(file_path):
                df.to_csv(file_path, mode='a', header=False, index=False)
            else:
                df.to_csv(file_path, index=False)

        update_csv()

    producer_dag()

Now that we have a producer DAG that updates a Dataset, we can create the consumer DAG that depends on it. This is where the magic happens, since the consumer DAG will no longer be time dependent but rather Dataset dependent.

1. Instantiate the same Dataset used in the Producer DAG

In a new DAG file (the consumer), start by defining the same Dataset that was used in the Producer DAG. This ensures both DAGs are referencing the exact same dataset path.

    # consumer.py
    from airflow.datasets import Dataset

    # Define the dataset representing the CSV file
    csv_dataset = Dataset("/path/to/your_dataset.csv")

2. Set the schedule to the Dataset

Create your DAG and set the schedule parameter to the Dataset you instantiated earlier (the one being updated by the producer DAG). This tells Airflow to trigger this DAG only when that dataset is updated—no need for time-based scheduling.

    # consumer.py
    import datetime

    from airflow.decorators import dag, task
    from airflow.datasets import Dataset

    csv_dataset = Dataset("/path/to/your_dataset.csv")

    @dag(
        default_args={
            "start_date": datetime.datetime(2024, 1, 1, 0, 0),
            "owner": "Mayra Pena",
            "email": "mayra@example.com",
            "retries": 3
        },
        description="Sample Consumer DAG",
        # Run whenever the dataset is updated instead of on a time interval
        schedule=[csv_dataset],
        tags=["transform"],
        catchup=False,
    )
    def data_aware_consumer_dag():

        @task
        def run_consumer():
            print("Processing updated CSV file")

        run_consumer()

    dag = data_aware_consumer_dag()

That's it! 🎉 Now this DAG will run whenever the Producer DAG completes (updates the file).

When using Datasets, you may end up using the same Dataset across multiple DAGs and therefore defining it many times. There is a simple DRY (Don't Repeat Yourself) way to overcome this.

1. Create a central datasets.py file

To follow DRY principles, centralize your dataset definitions in a utility module.

Simply create a utils folder and add a datasets.py file.

If you're using Datacoves, your Airflow-related files typically live in a folder named orchestrate, so your path might look like: orchestrate/utils/datasets.py

2. Import the Dataset object

Inside your datasets.py file, import the Dataset class from Airflow:

    from airflow.datasets import Dataset

3. Define your Dataset in this file

Now that you’ve imported the Dataset object, define your dataset as a variable. For example, if your DAG writes to a CSV file:

    from airflow.datasets import Dataset

    # Define the dataset representing the CSV file
    CSV_DATASET = Dataset("/path/to/your_dataset.csv")

Notice we’ve written the variable name in all caps (CSV_DATASET). This follows Python convention for constants, signaling that the value shouldn’t change. This makes your code easier to read and maintain.

4. Import the Dataset in your DAG

In your DAG file, simply import the dataset you defined in your utils/datasets.py file and use it as needed.

    from airflow.decorators import dag, task
    from orchestrate.utils.datasets import CSV_DATASET
    from datetime import datetime
    import pandas as pd
    import os

    @dag(
        dag_id='producer_dag',
        start_date=datetime(2025, 3, 31),
        schedule='@daily',
        catchup=False,
    )
    def producer_dag():

        @task(outlets=[CSV_DATASET])
        def update_csv():
            data = {'column1': [1, 2, 3], 'column2': ['A', 'B', 'C']}
            df = pd.DataFrame(data)
            file_path = CSV_DATASET.uri

            # Check if the file exists to append or write
            if os.path.exists(file_path):
                df.to_csv(file_path, mode='a', header=False, index=False)
            else:
                df.to_csv(file_path, index=False)

        update_csv()

    producer_dag()

Now you can reference CSV_DATASET in your DAG's schedule or as a task outlet, keeping your code clean and consistent across projects.🎉

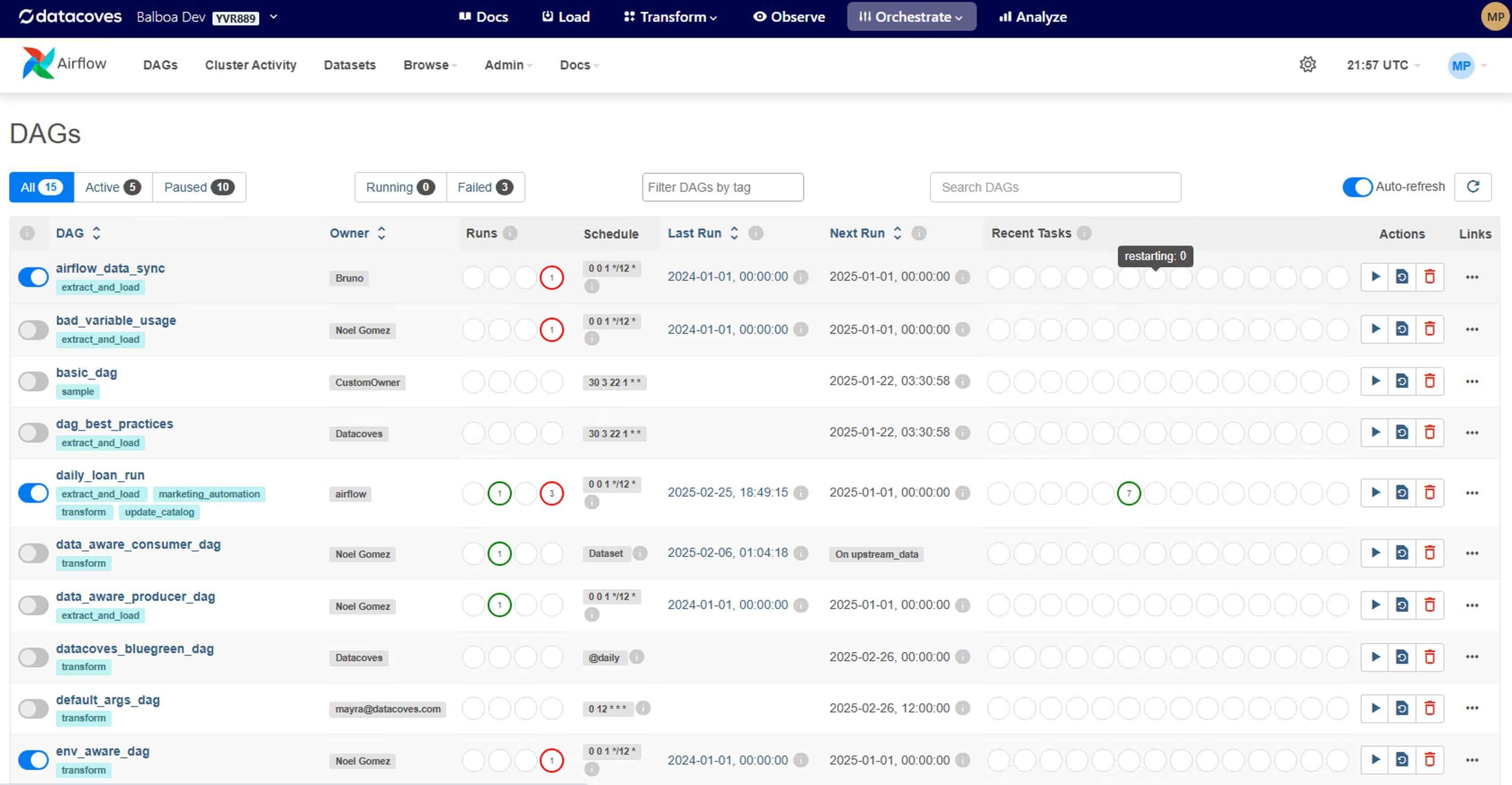

You can visualize your Datasets, as well as the events they trigger, in the Airflow UI. There are three tabs that will prove helpful when implementing and debugging your event-triggered pipelines:

Dataset Events

The Dataset Events sub-tab shows a chronological list of recent events associated with datasets in your Airflow environment. Each entry details the dataset involved, the producer task that updated it, the timestamp of the update, and any triggered consumer DAGs. This view is important for monitoring the flow of data, ensuring that dataset updates occur as expected, and helps with prompt identification and resolution of issues within data pipelines.

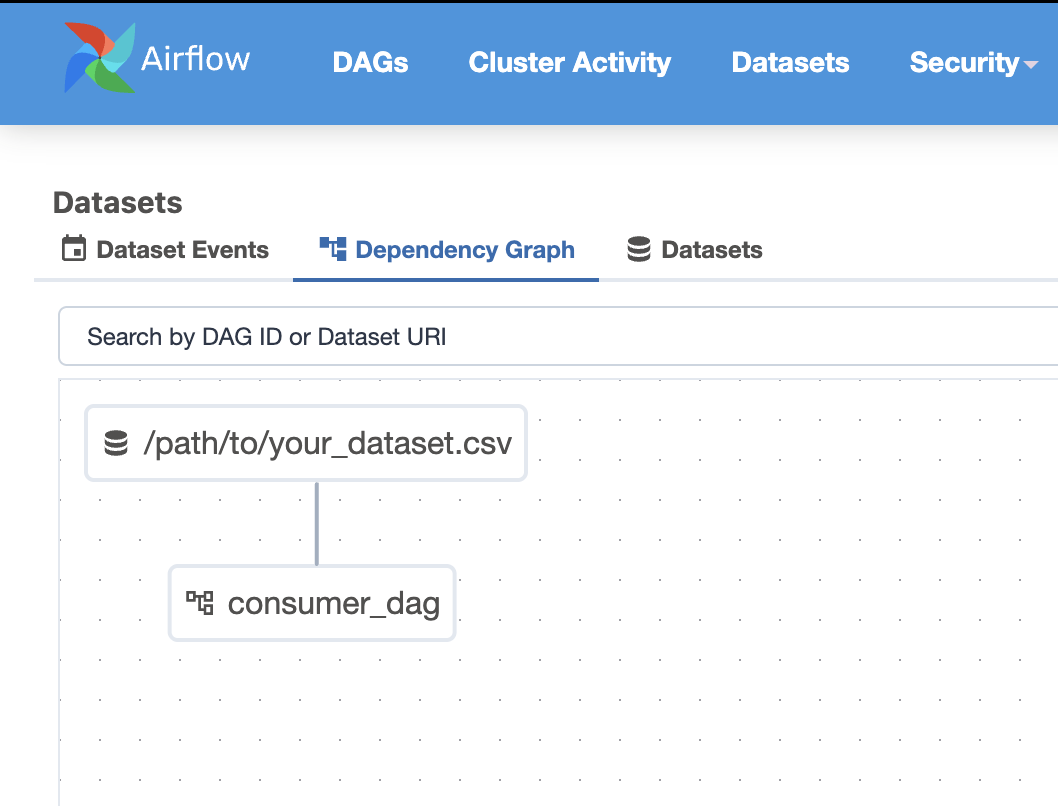

Dependency Graph

The Dependency Graph is a visual representation of the relationships between datasets and DAGs. It illustrates how producer tasks, datasets, and consumer DAGs interconnect, providing a clear overview of data dependencies within your workflows. This graphical depiction helps visualize the structure of your data pipelines to identify potential bottlenecks and optimize your pipeline.

Datasets

The Datasets sub-tab provides a list of all datasets defined in your Airflow instance. For each dataset, it shows important information such as the dataset's URI, associated producer tasks, and consumer DAGs. This centralized view provides efficient management of datasets, allowing users to track dataset usage across various workflows and maintain organized data dependencies.

When working with Datasets, there are a couple of things to take into consideration to maintain readability.

Naming datasets meaningfully: Ensure your names are verbose and descriptive. This will help the next person who is looking at your code and even future you.

Avoid overly granular datasets: While Datasets are a great tool, too many of them become hard to manage, so try to strike a balance.

Monitor for dataset DAG execution delays: It is important to keep an eye out for delays since this could point to an issue in your scheduler configuration or system performance.

Task Completion Signals Dataset Update: It’s important to understand that Airflow doesn’t actually check the contents of a dataset (like a file or table). A dataset is considered “updated” only when a task that lists it in its outlets completes successfully. So even if the file wasn’t truly changed, Airflow will still assume it was. At Datacoves, you can also use the Airflow API with an AWS Lambda function to trigger your DAG externally once data lands in an S3 bucket.
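For the S3 case, a Lambda function first has to work out which object landed. The sketch below shows only that step, under the assumption that the function receives a standard S3 event notification; `dataset_uris_from_s3_event` is a hypothetical helper, and the bucket and key are made up. A real handler would go on to call the Airflow API with each URI.

```python
from urllib.parse import unquote_plus


def dataset_uris_from_s3_event(event):
    """Map each object in an S3 event notification to an s3:// URI string."""
    uris = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 event notifications.
        key = unquote_plus(record["s3"]["object"]["key"])
        uris.append(f"s3://{bucket}/{key}")
    return uris


def lambda_handler(event, context):
    """Entry point in the shape AWS Lambda expects for Python handlers."""
    uris = dataset_uris_from_s3_event(event)
    # A real handler would now notify Airflow for each URI.
    return {"dataset_uris": uris}


sample_event = {
    "Records": [
        {"s3": {"bucket": {"name": "my-bucket"}, "object": {"key": "landing/my+data.csv"}}}
    ]
}
print(lambda_handler(sample_event, None))
```

Whatever URI the function derives has to match the string used in the consumer DAG's schedule exactly, otherwise nothing is triggered.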

Datacoves provides a scalable Managed Airflow solution and handles Airflow upgrades for you. This alleviates the stress of managing Airflow infrastructure so your data teams can focus on their pipelines. Check out how Datadrive saved 200 hours yearly by choosing Datacoves.

The introduction of data-aware scheduling with Datasets in Apache Airflow is a big advancement in workflow orchestration. By enabling DAGs to trigger based on data updates rather than fixed time intervals, Airflow has become more adaptable and efficient in managing complex data pipelines.

By adopting Datasets, you can enhance the maintainability and scalability of your workflows, ensuring that tasks are executed exactly when the upstream data is ready. This not only optimizes resource utilization but also simplifies dependency management across DAGs.

Give it a try! 😎


Enterprises are increasingly relying on dbt (Data Build Tool) for their data analytics; however, dbt wasn’t designed to be an enterprise-ready platform on its own. This leads to struggles with scalability, orchestration, governance, and operational efficiency when implementing dbt at scale. But if dbt is so amazing, why is this the case? As our title suggests, you need more than just dbt to have a successful dbt analytics implementation. Keep reading to learn exactly what you need to supercharge your data analytics with dbt.

dbt is popular because it solves problems facing the data analytics world. Enterprises today are dealing with growing volumes of data, making efficient data transformation a critical part of their analytics strategy. Traditionally, data transformation was handled using complex ETL (Extract, Transform, Load) processes, where data engineers wrote custom scripts to clean, structure, and prepare data before loading it into a warehouse. However, this approach has several challenges:

dbt (Data Build Tool) transforms this paradigm by enabling SQL-based, modular, and version-controlled transformations directly inside the data warehouse. By following the ELT (Extract, Load, Transform) approach, dbt allows raw data to be loaded into the warehouse first, then transformed within the warehouse itself—leveraging the scalability and processing power of modern cloud data platforms.

Unlike traditional ETL tools, dbt applies software engineering best practices to SQL-based transformations, making it easier to develop, test, document, and scale data pipelines. This shift has made dbt a preferred solution for enterprises looking to empower analysts, improve collaboration, and create maintainable data workflows.

With these benefits it is clear why over 40,000 companies are leveraging dbt today!

Despite dbt’s strengths, enterprises face several challenges when implementing it at scale for a variety of reasons:

Running dbt in production requires robust orchestration beyond simple scheduled jobs. dbt only manages transformations, but a complete end-to-end pipeline includes Extracting, Loading and Visualizing of data. To manage the full end-to-end data pipeline (ELT + Viz) organizations will need a full-fledged orchestrator like Airflow. While there are other orchestration options on the market, Airflow and dbt are a common pattern.

CI/CD pipelines are essential for dbt at the enterprise level, yet one of dbt Core’s major limitations is the lack of a built-in CI/CD pipeline for managing deployments. This makes workflows more complex and increases the likelihood of errors reaching production. To address this, teams can implement external tools like Jenkins, GitHub Actions, or GitLab CI/CD that provide a flexible and customizable CI/CD process to automate deployments and enforce best practices.

While dbt Cloud does offer an out-of-the-box CI/CD solution, it lacks customization options. Some organizations find that their use cases demand greater flexibility, requiring them to build their own CI/CD processes instead.

Enterprises seek alternative solutions that provide greater control, scalability, and security over their data platform. However, this comes with the responsibility of managing their own infrastructure, which introduces significant operational overhead ($$$). Solutions like dbt Cloud do not offer Virtual Private Cloud (VPC) deployment, full CI/CD flexibility, or a fully-fledged orchestrator, leaving organizations to handle additional platform components.

We saw a need for a middle ground that combined the best of both worlds; something as flexible as dbt Core and Airflow, but fully managed like dbt Cloud. This led to Datacoves which provides a seamless experience with no platform maintenance overhead or onboarding hassles. Teams can focus on generating insights from data and not worry about the platform.

Vendor lock-in is a major concern for organizations that want to maintain flexibility and avoid being tied to a single provider. The ability to switch out tools easily without excessive cost or effort is a key advantage of the modern data stack. Enterprises benefit from mixing and matching best-in-class solutions that meet their specific needs.

Datacoves is a fully managed enterprise platform for dbt, solving the challenges outlined above. Below is how Datacoves' features align with enterprise needs:

Datacoves offers flexible deployment and pricing options to accommodate various enterprise needs:

Datacoves is committed to delivering enterprise-grade support and resources through our white-glove service:

Enterprises need more than just dbt to achieve scalable and efficient analytics. While dbt is a powerful tool for data transformation, it lacks the infrastructure, governance, and orchestration capabilities required for enterprise-level deployments. Datacoves fills these gaps by providing a fully managed environment that integrates dbt Core, VS Code, Airflow, and Kubernetes-based deployments. Datacoves is the ultimate solution for organizations looking to scale dbt successfully.

The latest release, dbt 1.9, introduces some exciting features and updates meant to enhance functionality and tackle some pain points of dbt. With improvements like the microbatch incremental strategy, snapshot enhancements, Iceberg table format support, and streamlined CI workflows, dbt 1.9 continues to help data teams work smarter, faster, and with greater precision. All the more reason to start using dbt today!

We looked through the release notes, so you don’t have to. This article highlights the key updates in dbt 1.9, giving you the insights needed to upgrade confidently and unlock new possibilities for your data workflows. If you need a flexible dbt and Airflow experience, Datacoves might be right for your organization. Lower total cost of ownership by 50% and shorten your time to market today!

If you are upgrading from dbt 1.7 or earlier, you will need to install both dbt-core and the appropriate adapter. This requirement stems from the decoupling introduced in dbt 1.8, a change that enhances modularity and flexibility in dbt’s architecture. These updates demonstrate dbt’s commitment to providing a streamlined and adaptable experience for its users while ensuring compatibility with modern tools and workflows.

    pip install dbt-core dbt-snowflake

In dbt 1.9, the microbatch incremental strategy is a new way to process massive datasets. In earlier versions of dbt, incremental materialization was available to process datasets which were too large to drop and recreate at every build. However, it struggled to efficiently manage very large datasets that are too large to fit into one query. This limitation led to timeouts and complex query management.

The microbatch incremental strategy comes to the rescue by breaking large datasets into smaller chunks for processing. It uses the batch_size, event_time, and lookback configurations to automatically generate the necessary filters for you. However, at the time of this publication, this feature is only available on the following adapters: Postgres, Redshift, Snowflake, BigQuery, Spark, and Databricks, with more on the way.

- Using the event_time, lookback, and batch_size configurations, dbt will generate the necessary filters for each batch. One less thing to worry about!
- dbt splits the data into batches based on the batch_size you set. Each batch is processed separately and in parallel, unless you disable this feature using the +concurrent_batches config. This independence in batch processing improves performance, minimizes the risk of query failures, allows you to retry failed batches using the dbt retry command, and provides the granularity to load specific batches. Gotta love the control without the extra leg work!
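As a minimal sketch of the configurations described above, a microbatch model could be set up in a properties file along these lines. The model name `page_views`, the column `viewed_at`, and the start date are hypothetical placeholders. `begin` does not appear in the text above; it is the additional setting that tells dbt where the first batch starts.

```yaml
# models/page_views.yml (hypothetical file and model names)
models:
  - name: page_views
    config:
      materialized: incremental
      incremental_strategy: microbatch
      event_time: viewed_at     # timestamp column dbt uses to build each batch filter
      batch_size: day           # one batch per day of data
      lookback: 3               # also reprocess the 3 batches before the latest one
      begin: "2024-01-01"       # assumed earliest date to process
```

With a setup like this, the model SQL itself does not need a hand-written incremental filter; dbt generates the batch filters from these settings.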

To take advantage of the microbatch incremental strategy, first upgrade to dbt 1.9 and ensure your project is configured correctly. By default, dbt will handle the microbatch logic for you, as explained above. However, if you’re using custom logic, such as a custom microbatch macro, don’t forget to set the require_batched_execution_for_custom_microbatch_strategy behavior flag to True in your dbt_project.yml file. This prevents deprecation warnings and ensures dbt knows how to handle your custom configuration.
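For projects that do rely on a custom microbatch macro, the behavior flag mentioned above would be set roughly as follows. This is a sketch of the relevant fragment only; the rest of your dbt_project.yml stays as it is.

```yaml
# dbt_project.yml (fragment)
flags:
  # Only needed when the project defines its own microbatch macro
  require_batched_execution_for_custom_microbatch_strategy: true
```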

If you have a custom microbatch setup and wish to migrate, it's important to note that earlier versions required setting the environment variable DBT_EXPERIMENTAL_MICROBATCH to enable microbatching, but this is no longer needed. Starting with Core 1.9, the microbatch strategy works seamlessly out of the box, so you can remove the variable.

With dbt 1.9, snapshots have become easier to use than ever! This is great news for dbt users since snapshots in dbt allow you to capture the state of your data at specific points in time, helping you track historical changes and maintain a clear picture of how your data evolves. Below are a couple of improvements to implement or be aware of.

- With the snapshot_meta_column_names config, you now have the option to rename metadata fields to match your project's naming conventions. This added flexibility helps ensure consistency across your data models and simplifies collaboration within teams.
- By default, the dbt_valid_to column is set to NULL for current records, but you can now configure it to a date with the dbt_valid_to_current config. It is important to note that dbt will not automatically adjust the current value in the existing dbt_valid_to column. This means any existing current records will still have dbt_valid_to set to NULL, while new records will have this value set to your configured date. You will have to manually update existing data to match. Fewer NULL values to handle downstream!
- The --empty flag is now supported for the dbt snapshot command, allowing you to execute snapshot operations without processing data. This enhancement is particularly useful in Continuous Integration (CI) environments, enabling the execution of unit tests for models downstream of snapshots without requiring actual data processing, streamlining the testing process. The empty flag, introduced in dbt 1.8, also has some powerful applications in Slim CI to optimize your CI/CD that are worth checking out.
- The new hard_deletes configuration enhances the management of deleted records in snapshots. This feature offers three methods: the default ignore, which takes no action on deleted records; invalidate, which replaces the invalidate_hard_deletes=true config and marks deleted records as invalid by setting their dbt_valid_to timestamp to the current time; and lastly new_record, which tracks deletions by inserting a new record with a dbt_is_deleted column set to True.
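The snapshot settings above can be sketched in a YAML snapshot definition like the one below. The snapshot name, source, key column, and renamed metadata columns are all hypothetical, and the far-future date expression is an assumption that depends on your warehouse's SQL dialect.

```yaml
# snapshots/orders_snapshot.yml (hypothetical names throughout)
snapshots:
  - name: orders_snapshot
    relation: source('jaffle_shop', 'orders')
    config:
      unique_key: id
      strategy: timestamp
      updated_at: updated_at
      # Rename dbt's metadata columns to match project conventions
      snapshot_meta_column_names:
        dbt_valid_from: valid_from
        dbt_valid_to: valid_to
        dbt_scd_id: scd_id
        dbt_updated_at: snapshot_updated_at
      # Current records get this value instead of NULL in the valid-to column
      dbt_valid_to_current: "to_date('9999-12-31')"
```

Existing current rows keep NULL in the valid-to column until you update them yourself, as noted above.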

It's important to note some migration efforts will be required for this. While the invalidate_hard_deletes configuration is still supported for existing snapshots, it cannot be used alongside hard_deletes. For new snapshots, it's recommended to use hard_deletes instead of the legacy invalidate_hard_deletes. If you switch an existing snapshot to use hard_deletes without migrating your data, you may encounter inconsistent or incorrect results, such as a mix of old and new data formats. Keep this in mind when implementing these new configs.
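As an illustration of the migration described above, a snapshot that previously used the legacy setting might be changed as follows. The snapshot and model names are hypothetical, and this sketch does not cover migrating the existing snapshot data.

```yaml
# snapshots/customers_snapshot.yml (hypothetical names)
snapshots:
  - name: customers_snapshot
    relation: ref('stg_customers')
    config:
      unique_key: customer_id
      strategy: timestamp
      updated_at: updated_at
      # invalidate_hard_deletes: true   # legacy setting; cannot be combined with hard_deletes
      hard_deletes: invalidate          # same behavior under the new config
```

Choosing new_record instead of invalidate changes the shape of the snapshot table, so treat that as a separate, deliberate migration.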

Testing is a vital part of maintaining high data quality and ensuring your data models work as intended. Unit testing was introduced in dbt 1.8 and has seen continued improvement in dbt 1.9.

- You can now run specific unit tests with the unit_test: selector. This feature enables more granular control over test execution, allowing you to focus on particular tests without running the entire suite, thereby saving time and resources.

      dbt test --select unit_test:my_project.my_unit_test
      dbt build --select unit_test:my_project.my_unit_test

- The results of dbt list --resource-type test now correctly include only data tests, excluding unit tests. This distinction enhances clarity and precision when managing different test types within your project.

      dbt ls --select unit_test:my_project.my_unit_test

In dbt version 1.9, the state:modified selector has been enhanced to improve the accuracy of Slim CI workflows. Previously, dynamic configurations, such as setting the database based on the environment, could lead dbt to perceive changes in models even when the actual model remained unchanged. These false positives caused Slim CI to rebuild all models unnecessarily.

By comparing unrendered configuration values, dbt now accurately detects genuine modifications, eliminating false positives during state comparisons. This improvement ensures that only truly modified models are selected for rebuilding, streamlining your CI processes.

To enable this feature, set the state_modified_compare_more_unrendered_values flag to True in your dbt_project.yml file:

    flags:
      state_modified_compare_more_unrendered_values: True

In dbt 1.9, the dbt docs serve command now has more customization abilities with a new --host flag. This flag allows users to specify the host address for serving documentation. Previously, dbt docs serve defaulted to binding the server to 127.0.0.1 (localhost) without an option to override this setting.

Users can now specify a custom host address using the --host flag when running dbt docs serve. This enhancement provides the flexibility to bind the documentation server to any desired address, accommodating various deployment needs. When the flag is omitted, the server continues to bind to 127.0.0.1, ensuring backward compatibility and secure defaults.

dbt 1.9 includes several updates aimed at improving performance, usability, and compatibility across projects. These changes ensure a smoother experience for users while keeping dbt aligned with modern standards.

- The dbt clone command now executes clone operations concurrently, enhancing efficiency and reducing execution time.
- The dbt show and dbt compile commands now support parseable JSON and text outputs when run in quiet mode, facilitating easier integration with other tools and scripts by providing machine-readable outputs.
- skip_nodes_if_on_run_start_fails Behavior Change Flag: A new behavior change flag, skip_nodes_if_on_run_start_fails, has been introduced to gracefully handle failures in on-run-start hooks. When enabled, if an on-run-start hook fails, subsequent hooks and nodes are skipped, preventing partial or inconsistent runs.

dbt 1.9 introduces a range of powerful features and enhancements, reaffirming its role as a cornerstone tool for modern data transformations. The enhancements in this release reflect the community's commitment to innovation and excellence as well as its strength and vitality. There's no better time to join this dynamic ecosystem and elevate your data workflows!

If you're looking to implement dbt efficiently, consider partnering with Datacoves. We can help you reduce your total cost of ownership by 50% and accelerate your time to market. Book a call with us today to discover how we can help your organization in building a modern data stack with minimal technical debt.

Check out the full release notes.

dbt and Airflow are cornerstone tools in the modern data stack, each excelling in different areas of data workflows. Together, dbt and Airflow provide the flexibility and scalability needed to handle complex, end-to-end workflows.

This article delves into what dbt and Airflow are, why they work so well together, and the challenges teams face when managing them independently. It also explores how Datacoves offers a fully managed solution that simplifies operations, allowing organizations to focus on delivering actionable insights rather than managing infrastructure.

dbt (Data Build Tool) is an open-source analytics engineering framework that transforms raw data into analysis-ready datasets using SQL. It enables teams to write modular, version-controlled workflows that are easy to test and document, bridging the gap between analysts and engineers.

Apache Airflow is an open-source platform designed to orchestrate workflows and automate tasks. Initially created for ETL processes, it has evolved into a versatile solution for managing any sequence of tasks in data engineering, machine learning, or beyond.

While dbt excels at SQL-based data transformations, it has no built-in scheduler, and solutions like dbt Cloud’s scheduling capabilities are limited to triggering jobs in isolation or getting a trigger from an external source. This approach risks running transformations on stale or incomplete data if upstream processes fail. Airflow eliminates this risk by orchestrating tasks across the entire pipeline, ensuring transformations occur at the right time as part of a cohesive, integrated workflow.

Tools like Airbyte and Fivetran also provide built-in schedulers, but these are designed for loading data at a given time and optionally triggering a dbt pipeline. As complexity grows and organizations need to trigger dbt pipelines after data loads from several tools, such as dlt and Fivetran, this simple approach does not scale. It is also common to trigger operations after a dbt pipeline finishes, and scheduling from the data loading tool will not handle that complexity. With dbt and Airflow, a team can connect the entire process and ensure that processes don’t run if upstream tasks fail or are delayed.

Airflow centralizes orchestration, automating the timing and dependencies of tasks—extracting and loading data, running dbt transformations, and delivering outputs. This connected approach reduces inefficiencies and ensures workflows run smoothly with minimal manual intervention.

Modern data workflows extend beyond SQL transformations. Airflow complements dbt by supporting complex, multi-stage processes such as integrating APIs, executing Python scripts, and training machine learning models. This flexibility allows pipelines to adapt as organizational needs evolve.

Airflow also provides a centralized view of pipeline health, offering data teams complete visibility. With its ability to trace issues and manage dependencies, Airflow helps prevent cascading failures and keeps workflows reliable.

By combining dbt’s transformation strengths with Airflow’s orchestration capabilities, teams can move past fragmented processes. Together, these tools enable scalable, efficient analytics workflows, helping organizations focus on delivering actionable insights without being bogged down by operational hurdles.

In our previous article, we discussed building vs buying your Airflow and dbt infrastructure. There are many downsides to self-hosting these two tools, but Datacoves takes the complexity out of managing dbt and Airflow by offering a fully integrated, managed solution. Datacoves has given many organizations the flexibility of open-source tools with the freedom of managed tools. See how we helped Johnson and Johnson MedTech migrate to our managed dbt and Airflow platform.

Datacoves offers the most flexible and robust managed dbt Core environment on the market, enabling teams to fully harness the power of dbt without the complexities of infrastructure management, environment setup, or upgrades. Here’s why our customers choose Datacoves to implement dbt:

Datacoves offers a fully managed Airflow environment, designed for scalability, reliability, and simplicity. Whether you're orchestrating complex ETL workflows, triggering dbt transformations, or integrating with third-party APIs, Datacoves takes care of the heavy lifting by managing the Kubernetes infrastructure, monitoring, and scaling. Here’s what sets Datacoves apart as a managed Airflow solution:

dbt and Airflow are a natural pair in the Modern Data Stack. dbt’s powerful SQL-based transformations enable teams to build clean, reliable datasets, while Airflow orchestrates these transformations within a larger, cohesive pipeline. Their combination allows teams to focus on delivering actionable insights rather than managing disjointed processes or stale data.

However, managing these tools independently can introduce challenges, from infrastructure setup to scaling and ongoing maintenance. That’s where platforms like Datacoves make a difference. For organizations seeking to unlock the full potential of dbt and Airflow without the operational overhead, solutions like Datacoves provide the scalability and efficiency needed to modernize data workflows and accelerate insights.

Book a call today to see how Datacoves can help your organization realize the power of Airflow and dbt.
