A Data Operating Model is the set of decisions that define how a company delivers value from data. It covers ownership, team topology, workflows, standards, SLAs, governance, and the platform layer underneath all of it. The tools sit inside the operating model, not above it.

Most enterprises invest heavily in the tool layer and leave the operating model to emerge on its own during the build. That's the pattern behind nearly every frustrated data leader I talk to: the warehouse works, the transformation tool runs, the SI delivered on the statement of work, and the business still isn't getting what it expected. The absence of a defined operating model before the build started is the usual cause.

This article explains what a Data Operating Model is, what it includes, why foundational gaps compound instead of resolving themselves, and what to do if you're already mid-build and seeing the symptoms.

A Data Operating Model is the blueprint for how your organization turns data into business value. It defines who owns what, how work moves through the system, what standards apply, what "good" looks like, and what the platform underneath must enforce. It sits above the tools and above the architecture. The tools exist to serve the operating model, not the other way around.

Most executives have never been shown a Data Operating Model in concrete terms, so the concept stays abstract. It shouldn't. An operating model is a finite set of decisions that can be written down, agreed on, and enforced. The reason most enterprises don't have one isn't that it's hard to build. It's that it wasn’t scoped and nobody owned the outcome.

A mature Data Operating Model answers seven questions: who owns what, how teams are structured, how work moves through the system, what standards apply, what SLAs the business expects, how governance is enforced, and what the platform layer underneath has to automate.

1. Ownership. Who owns each data product? Who owns each source? Who owns the model that joins them? When something breaks, who is accountable? When something needs to change, whose approval is required? Ownership isn't an org chart. It's the map of accountability across every data asset in the business.

2. Team topology. How do data teams align to the business? Do you have a central data team that services everyone, embedded analytics engineers inside each domain, or a hybrid mesh model? Which decisions are centralized and which are distributed? Team topology is the hardest component to change later, which is why it should be the first decision made.

3. Workflows. How does a request become a data product? How does a code change get from a developer's laptop to production? How do business users request a new metric? How do downstream teams get access to upstream data? These workflows should be documented, repeatable, and the same across every team. When every team invents its own, you get the naming drift, the cross-team gaps, and the late-surfacing issues that frustrate the business.

4. Standards. Naming conventions. Layering semantics. Documentation expectations. Testing requirements. Code review rules. Branching strategy. These are the things that make a platform legible to a new engineer on day one instead of week six. Standards that live only in a Confluence page are not standards. They're suggestions.

5. SLAs. What does the business expect for data freshness? How fast should a new KPI ship? How fast should a new source onboard? What's the acceptable recovery time when a pipeline fails? Without explicit SLAs, every request becomes a negotiation, and every failure becomes a fire drill.

6. Governance. Who can approve a production deployment? Who signs off on a new data product? How is access granted and reviewed? How are sensitive fields handled? Governance isn't a separate project to start next quarter. It's a dimension of every decision the operating model makes.

7. Platform layer. The infrastructure underneath all the above. Git workflows. CI/CD. Orchestration. Development environments. Secrets management. Deployment conventions. This layer exists to enforce the operating model automatically, so the team doesn't have to remember to follow the rules.

Every enterprise already has answers to these seven questions. The difference between a mature operating model and an immature one is whether those answers were decided deliberately, written down, and enforced by the system, or whether they emerged ad hoc as the build progressed.

These terms get used interchangeably in executive conversations and they shouldn't be.

If the operating model is this important, why don't enterprises start there? Because the path to a data platform almost never runs through the operating model. It runs through a tool purchase, a vendor pitch, or a business crisis that demands a fast answer. The operating model is the thing that gets skipped because nobody in the room knows to ask for it, and the people selling the build aren't incentivized to slow things down.

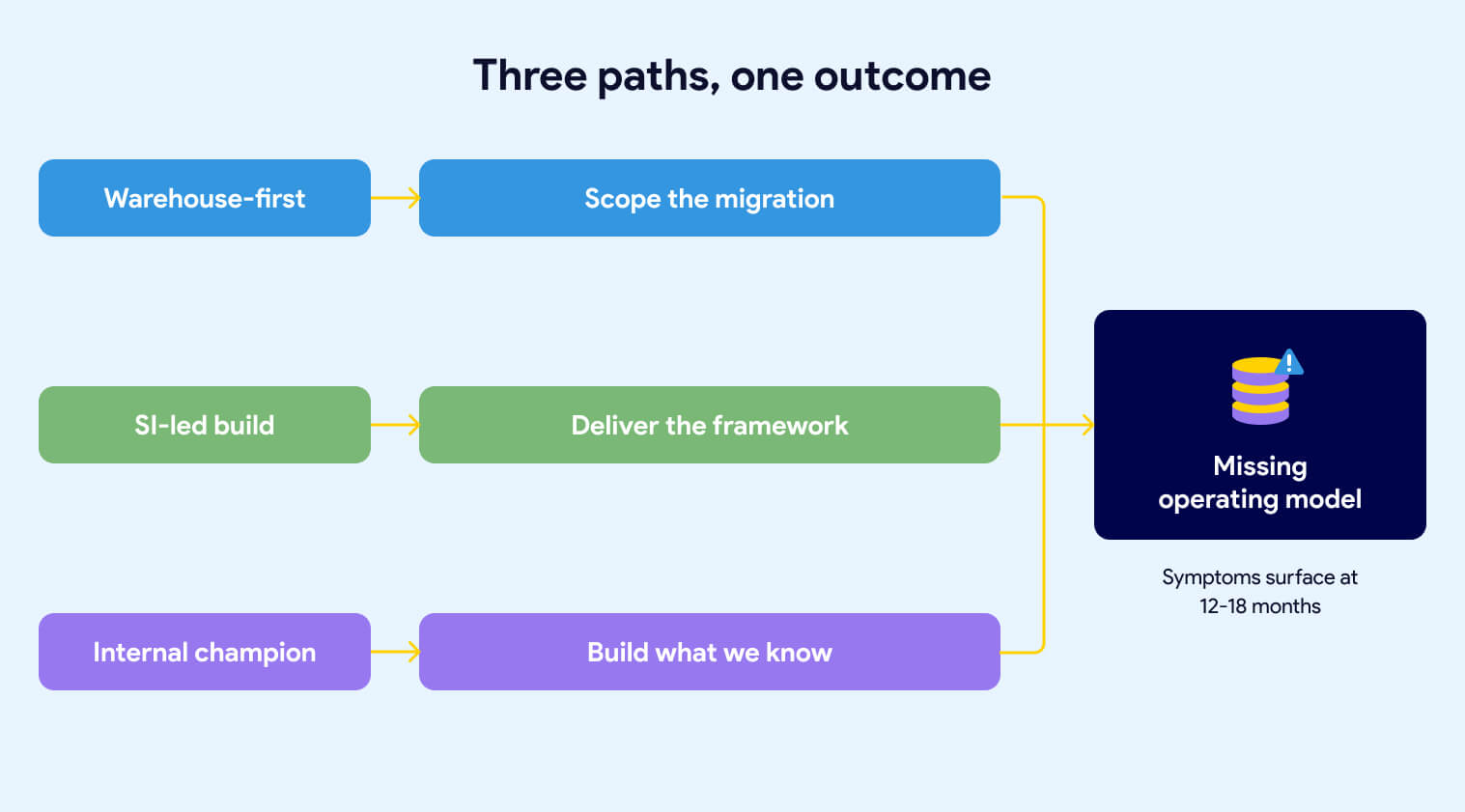

Three patterns show up repeatedly. Each one produces the same outcome: a platform that works technically but doesn't deliver on the business intent.

The first pattern starts with the warehouse. Leadership identifies that the current data infrastructure is too slow, too expensive, or too old. Someone comes back from a Snowflake conference. A decision gets made to modernize. The procurement process kicks off. Within a few months, Snowflake is signed and an implementation partner is scoped.

The scope is the migration. Move data from the legacy system into Snowflake. Replicate the existing transformation logic. Hit the go-live date. That's what the statement of work says, and that's what gets delivered.

What isn't in the scope: the operating model. Nobody wrote into the contract that the team would emerge from the engagement with agreed-upon naming conventions, a defined ownership map, documented SLAs, or a governance framework. The warehouse goes live on schedule. The operating model questions are still open eighteen months later, because nobody owned them and nobody was paid to answer them. We've covered what gets missed when the implementation is scoped around the warehouse in more depth.

The second pattern hands the build to a systems integrator (SI) and watches them default to what they know. Every SI has a playbook. Some propose a custom metadata-driven framework. Some build their own Python-based orchestration layer. Some fall back on what they've shipped at ten other clients: heavy stored procedure logic, ELT patterns from a previous engagement, or a homegrown configuration system that mirrors whatever the team's senior architect built fifteen years ago.

The specific build doesn't matter as much as what the SI is focused on. They're focused on delivering the build. They're not focused on the business outcome the build is supposed to produce. We've seen this pattern documented in detail across enterprise implementations.

That distinction is the source of the problem.

When the engagement is scoped around the framework, the team's energy goes into framework decisions. How should the config tables be structured? What's the deployment mechanism? How do we handle environment promotion? Those are real questions, and they take real effort to answer. What doesn't get asked in the same meetings: Which business units are going to use this, and do they agree on naming? Who owns the data products once they're live? What SLAs is the business expecting? How will cross-team collaboration work when the second and third business units come online?

The framework ships. The first use cases deliver. The demo goes well. Then the symptoms start.

The internal team can read the framework but can't extend it without the SI. Framework changes require a new engagement. New capabilities that land in the open-source ecosystem (new Airflow features, new dbt patterns, new CI/CD tooling) don't land in the custom framework unless someone pays the SI to add them. The team is now operating on two clocks: the clock of the open-source world moving forward, and the clock of the custom build moving only when budget is available.

Meanwhile, the operating model gaps that existed before the SI arrived are still there. The SI wasn't asked to define naming conventions across business units, or to specify how cross-team collaboration should work, or to document who owns what. They were asked to build. So the build got built, the delivery team uses it, and the foundational questions remain unanswered. Now they're harder to address because the system is already in production and the vendor who understands it best is billing by the hour.

None of this is a critique of SIs as a category. Good SIs exist, and they can deliver real value inside a well-defined operating model. The problem is asking an SI to build a platform before the organization has decided what the platform is supposed to enforce. Under those conditions, the SI will default to what they know how to build. And what they know how to build will calcify around their way of working long after they've rolled off.

The third pattern doesn't require an SI. It happens when an internal data leader, often passionate and well-intentioned, drives the modernization themselves. They know the business problem. They've seen the pain. They've done their research on the modern data stack. They build the business case and get the budget.

What they often don't have is deep production experience running a data platform at enterprise scale. They know what outcomes good platforms produce. They haven't necessarily been inside one long enough to see the operating model decisions that make those outcomes possible.

So the modernization gets shaped around what they know: the warehouse, the transformation tool, maybe a basic orchestration layer. The harder operating model questions (ownership, team topology, SLAs, standards enforcement, cross-team workflows) don't get asked because nobody in the room has been burned by skipping them before. The team inherits a modern tool stack and an immature operating model, and the symptoms start showing up twelve to eighteen months in.

Buying Snowflake, buying dbt, and hiring an SI does not give you a Data Operating Model. The tools sit inside the operating model, not above it. Starting the build before the operating model is defined produces a platform that works technically but doesn't deliver on the business intent.

All three patterns share the same structural problem. The build starts before the operating model is defined, and the operating model is expected to emerge on its own during delivery. It doesn't. Operating models don't emerge. They get decided, or they get compensated for.

The teams that end up with mature operating models aren't the ones who got lucky with their tool choices or their SI. They're the ones who treated the operating model as an explicit deliverable, owned by leadership, scoped at the start of the project, and refined over time as the business learned. That work is not glamorous. It doesn't show up in a conference talk. It's the difference between a platform the business trusts and a platform the business works around.

The symptoms of a missing operating model are concrete, repeatable, and visible without technical expertise. If your platform has any of them, the operating model is doing less work than the team thinks it is.

The same concept gets six different names. CUSTOMER_ORDERS_MONTHLY_US, CUSTOMER_ORDERS_US_MONTHLY, CUSTOMER_ORDERS_MONTHLY_US_FINAL, CUSTOMER_ORDERS_US_MTHLY, and two more variations depending on which team built the model. Every variation is defensible in isolation. Together they make the platform illegible to a new engineer, impossible to govern, and fragile to extend. Naming is the most visible tell of a missing operating model because naming is decided by the operating model. When the operating model is absent, naming is decided by whoever gets there first.
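One way to turn naming from a first-come decision into a system decision is a check that runs before a change can merge. The sketch below assumes a hypothetical convention (subject, then region, then grain, in upper snake case). The allowed regions and grains, and the function names, are illustrative assumptions rather than the rules of any particular tool.

```python
import re

# Hypothetical convention: <SUBJECT>_<REGION>_<GRAIN>, upper snake case.
# The allowed values below are illustrative assumptions.
ALLOWED_REGIONS = {"US", "EU", "APAC"}
ALLOWED_GRAINS = {"DAILY", "WEEKLY", "MONTHLY"}
UPPER_SNAKE = re.compile(r"[A-Z]+(?:_[A-Z]+){2,}")


def check_name(name):
    """Return a reason string if the name breaks the convention, else None."""
    if not UPPER_SNAKE.fullmatch(name):
        return "must be upper snake case with at least three parts"
    # The last two parts are read as region and grain; the rest is the subject.
    *_, region, grain = name.split("_")
    if region not in ALLOWED_REGIONS:
        return f"unknown region '{region}'"
    if grain not in ALLOWED_GRAINS:
        return f"unknown grain '{grain}'"
    return None


def naming_violations(names):
    """Map each non-conforming name to the reason it was rejected."""
    violations = {}
    for name in names:
        reason = check_name(name)
        if reason is not None:
            violations[name] = reason
    return violations
```

Under this assumed convention, only CUSTOMER_ORDERS_US_MONTHLY from the four names above passes. A CI job built on a check like this would block the merge whenever the returned mapping is non-empty.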

A team needs to answer an ad-hoc question using data that exists in the platform but wasn't shaped for their use case. They can't use the curated layer, so they go upstream and query raw tables directly. They build parallel logic. They duplicate transformations. The platform was supposed to be the source of truth. It's now one of three sources, and the business users don't know which one to trust.

This is a cross-team workflow problem. The operating model was supposed to define how downstream teams extend the platform, how they request new data products, and what process turns an ad-hoc query into a curated asset. It didn't, so each team invented its own answer.

Wide tables work reasonably well for operational reporting. A business analyst can find their way around a hundred-column table if they know what they're looking for. GenAI can't. Large language models answering business questions need narrow, purpose-built tables with clean column-level documentation, consistent naming, and traceable lineage. None of that comes from the warehouse. All of it comes from the operating model.

Enterprises that deferred documentation, skipped column-level descriptions, and let naming drift for three years are discovering that their AI initiative is surfacing every gap at once. The foundation they never built is now the thing blocking the board-mandated priority.

Requirements that should have been caught in the design phase land in UAT instead. The business user sees the data and says "that's not what I asked for." The team goes back to rework. The go-live date slips. The credibility of the delivery process erodes. Everyone agrees that requirements gathering needs to be better next time.

Requirements gathering isn't the problem. The problem is that the operating model never defined how business users participate in data product design, who validates the model before build starts, or what the acceptance criteria look like before UAT begins. Without that definition, the feedback loop closes at the wrong end of the project.

The executive summary lists "governance" as a Q2 initiative, then a Q3 initiative, then a Q1-next-year initiative. It keeps getting pushed because nobody owns it, nobody scoped it, and it doesn't have a clear business sponsor. Meanwhile, the platform is live. Data products are shipping. Access is being granted through manual tickets. Metadata is being maintained by whoever remembers to maintain it.

Governance deferred is governance that never happens. The operating model defines governance as a dimension of every decision, not a separate project. When it lives in the future, it stays there.

Nobody decided to skip documentation. It just wasn't on the project plan. Column-level descriptions don't exist because writing them wasn't part of anyone's definition of done. Lineage isn't captured because the framework doesn't surface it automatically and no one has time to maintain it manually. Business users asking "where does this number come from?" get an answer from whichever engineer built the model, if that engineer is still on the team.

Documentation that depends on discipline is documentation that degrades. The operating model is supposed to make documentation a byproduct of the build, not a deferred task.
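As a rough illustration of documentation as a byproduct of the build, the sketch below refuses to build a model whose description or column descriptions are missing. The dictionary shape of `model` and the names `require_documentation` and `DocumentationError` are hypothetical, chosen for this example only.

```python
class DocumentationError(Exception):
    """Raised when a model is missing required documentation."""


def undocumented_columns(model):
    """Return names of columns whose description is missing or blank."""
    return [
        column["name"]
        for column in model["columns"]
        if not (column.get("description") or "").strip()
    ]


def require_documentation(model):
    """Fail the build step if the model or any column lacks a description."""
    problems = []
    if not (model.get("description") or "").strip():
        problems.append("model description")
    problems.extend(f"column '{name}'" for name in undocumented_columns(model))
    if problems:
        raise DocumentationError(
            f"{model['name']} is missing: {', '.join(problems)}"
        )
```

Calling `require_documentation` as the first step of a build means an undocumented column stops the build instead of becoming a task for later.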

The internal team can use the platform but can't extend it. Every new data source, every new transformation pattern, every new capability requires going back to the SI or the original architect. This dependency was never called out explicitly, but it's now the single biggest constraint on the team's ability to move. And it gets more expensive every quarter.

A new table or view is created. Someone is supposed to configure it so the right roles get access. Sometimes that step gets skipped. When it does, the object exists in the warehouse, but access doesn't propagate. Users can't see the new object. Someone spends a morning figuring out why. The fix is trivial. The pattern repeats next month with a different table.

The operating model was supposed to decide whether access assignment happens through automation or through a manual checklist. Either answer is defensible. With no answer, the step happens when someone remembers it and gets skipped when they don't.
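If the decision is automation, one possible shape is a small set of rules that derive access from the object's name, so a new table never waits on someone to update a configuration entry. The sketch below is a minimal illustration. The rule patterns, role names, and function names are hypothetical, and applying the resulting grants to a warehouse is left out.

```python
from fnmatch import fnmatchcase

# Hypothetical rules: object-name pattern -> roles that should be able to read it.
ACCESS_RULES = [
    ("SALES.*", ["SALES_ANALYST", "FINANCE_ANALYST"]),
    ("FINANCE.*", ["FINANCE_ANALYST"]),
]


def roles_for(object_name, rules=ACCESS_RULES):
    """Return the roles granted read access to an object, in rule order."""
    roles = []
    for pattern, rule_roles in rules:
        if fnmatchcase(object_name, pattern):
            for role in rule_roles:
                if role not in roles:
                    roles.append(role)
    if not roles:
        # Fail loudly instead of creating an object nobody can see.
        raise LookupError(f"no access rule matches {object_name}")
    return roles


def planned_grants(object_names, rules=ACCESS_RULES):
    """Return (role, object) pairs to apply for a batch of new objects."""
    return [
        (role, name)
        for name in object_names
        for role in roles_for(name, rules)
    ]
```

The design choice worth noting is the `LookupError`: an object that matches no rule fails at deployment time, not a month later when a user reports that they can't see it.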

DEV is refreshed on an ad-hoc cadence. PRE-PROD is "closer" to PROD but still out of sync. A change passes testing, hits production, and behaves differently because the data shape in production isn't what the team tested against. The business finds out. Trust erodes.

Environment parity is an operating model decision. Without one, every team defaults to "good enough for today" and the divergence between environments becomes structural.
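A parity decision can be backed by a scheduled comparison between environments. The sketch below checks structure only: it compares table and column snapshots, assumed here to be plain dictionaries exported from each environment. Differences in data volume or distribution would need their own checks, and the function name is hypothetical.

```python
def schema_drift(prod, lower):
    """Compare {table: {column: type}} snapshots; return {table: problem}."""
    drift = {}
    for table, prod_columns in prod.items():
        lower_columns = lower.get(table)
        if lower_columns is None:
            drift[table] = "missing in lower environment"
            continue
        # A column differs if it is absent on one side or its type changed.
        differing = sorted(
            column
            for column in prod_columns.keys() | lower_columns.keys()
            if prod_columns.get(column) != lower_columns.get(column)
        )
        if differing:
            drift[table] = "columns differ: " + ", ".join(differing)
    return drift
```

An empty result means the lower environment matches production structurally. Anything else is either fixed or recorded as a known, accepted limitation.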

Pipeline dependencies live in configuration files that developers update as they remember. If an upstream dependency is missing from the config, the data quality checks are the last safety net. When DQ coverage has gaps, the pipeline runs on incomplete data and nobody notices until a downstream user raises a ticket.

The operating model should have decided whether dependencies are inferred from the code or declared in configuration, and whether a missed declaration fails the build or silently succeeds. Without that decision, the default is "silently succeeds," which is the failure mode nobody wants and everybody ends up with.
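To make the "fails the build" option concrete, the sketch below infers upstream tables from a SQL string and compares them with the declared list. It is deliberately simplified: a regular expression that looks for identifiers after FROM or JOIN, which will misread constructs such as CTEs, subqueries, and `EXTRACT(... FROM ...)`. A real implementation would rely on a SQL parser. All names are hypothetical.

```python
import re

# Simplified: captures the identifier that follows FROM or JOIN.
# A production check would use a SQL parser, not a regular expression.
TABLE_REF = re.compile(r"\b(?:from|join)\s+([A-Za-z_][\w.]*)", re.IGNORECASE)


class MissingDependencyError(Exception):
    """Raised when code reads a table that the config does not declare."""


def inferred_dependencies(sql):
    """Return the lower-cased table names referenced in the SQL text."""
    return {name.lower() for name in TABLE_REF.findall(sql)}


def check_dependencies(sql, declared):
    """Fail if the SQL reads a table missing from the declared dependencies."""
    missing = inferred_dependencies(sql) - {name.lower() for name in declared}
    if missing:
        raise MissingDependencyError(
            "undeclared upstream tables: " + ", ".join(sorted(missing))
        )
```

With a check like this in the build, a forgotten declaration stops the change before it ships, and the data quality checks are no longer the only safety net.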

If three or more of these are familiar, the root cause is a missing operating model. The symptoms are the system telling you so.

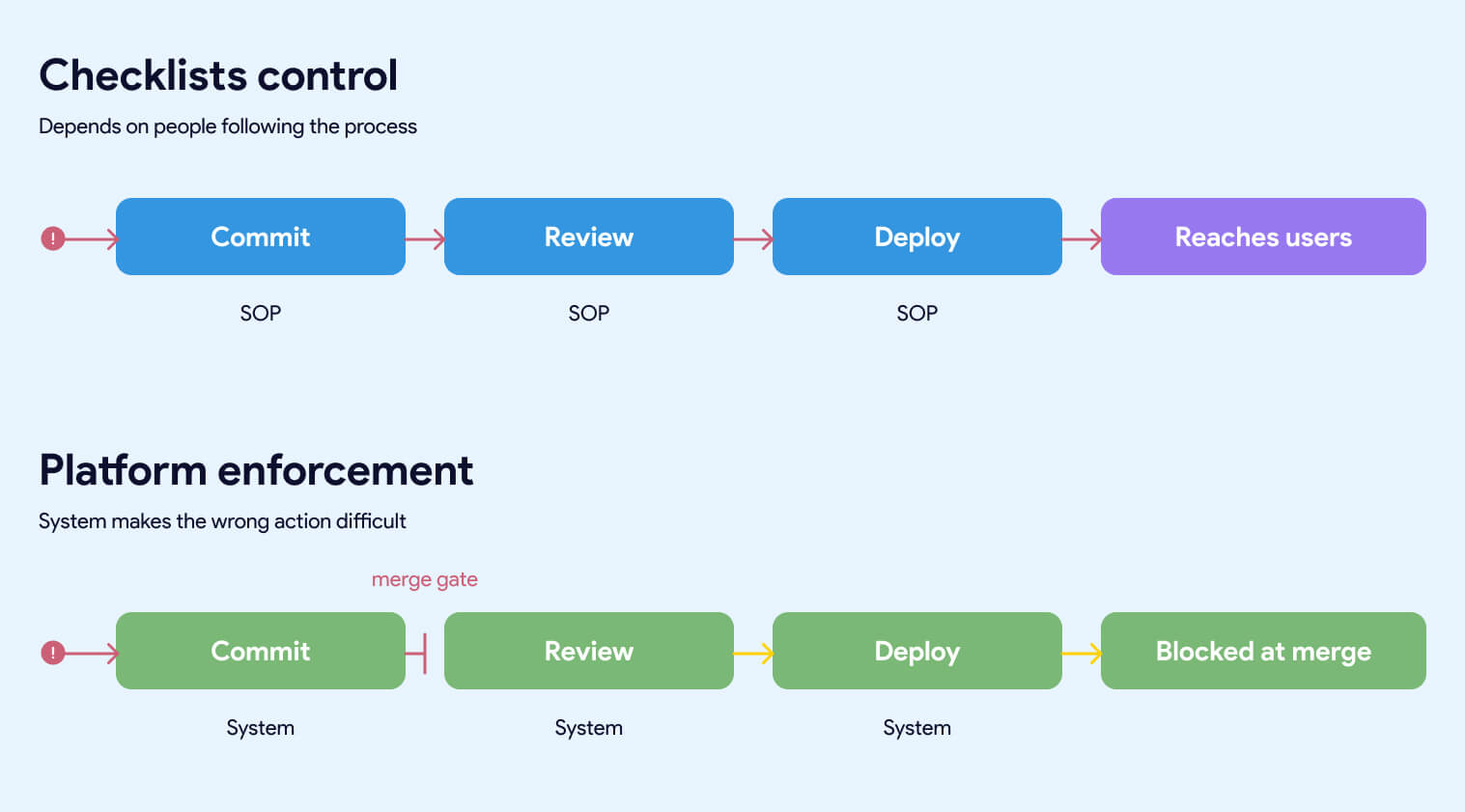

The single most useful frame for diagnosing a data platform is whether the controls that matter are enforced by the system or by people remembering to follow a process. This distinction cuts through every conversation about tools, frameworks, and team maturity. It's also the fastest way to predict how a platform will behave under growth, turnover, and pressure.

Most enterprise data platforms are checklist-controlled and presented as if they were platform-enforced. The gap between the two is where the symptoms in the previous section come from.

A checklist-controlled platform depends on people doing the right thing every time. Naming conventions live in a document that gets read once during onboarding. Access assignment requires someone to update a configuration table after creating a new object. Code quality depends on the reviewer having a good day. Dependencies get declared when the developer remembers to declare them. Documentation happens when there's time.

This works when the team is small, experienced, and under no time pressure. It degrades the moment any of those three conditions change. A new hire inherits the SOPs but not the instincts. A team lead rolls off and takes the context with them. A deadline compresses and the first thing that gets skipped is whatever depends on discipline rather than on the build itself.

Every failure in a checklist-controlled platform produces the same diagnosis: someone didn't follow the process. Which is accurate, and beside the point. The real diagnosis is that the platform was designed to require people to follow a process in a place where the system could have enforced it automatically.

A platform-enforced system makes the wrong action difficult, obvious, or impossible. Naming conventions are validated by CI/CD before a pull request can merge. Access is granted by the system based on rules, not by someone updating a table after the fact. Code quality is enforced by automated linting, testing, and review requirements that run on every commit. Dependencies are inferred from the code and validated against the actual pipeline. Documentation is required for a model to build, not requested after the fact.

The team doesn't have to remember the rules. The rules are the system.

This is the difference between a platform that scales and one that doesn't. A platform that depends on discipline gets more fragile as the team grows. A platform that enforces the rules gets stronger as the team grows, because every new engineer inherits the guardrails on day one without reading a document or asking anyone how things work.

The comparison that matters spans five dimensions:

Who enforces the control. Checklist platforms rely on people. Enforced platforms rely on the system. People get tired, leave, and forget. Systems don't.

The most useful test of a data platform is this: if a person fails to follow the process, does the system stop them, or does the defect propagate? If the defect propagates, the control is a checklist. It may work today. It won't work at scale.

A data leader reading a platform architecture document usually can't tell whether the platform is checklist-controlled or enforced. The document will describe controls either way. The test is to read every control and ask: "if a person fails to follow this, does the system stop them, or does the defect propagate?"

Platforms that look mature in a demo and degrade in production are almost always checklist-controlled platforms. The demo is run by the people who wrote the checklist. The production team is everyone else.

The assumption behind most enterprise data platforms is that the foundational issues surfacing today are growing pains. They'll get fixed as the team matures, as the next phase of the build lands, as the governance workstream finally kicks off. This assumption is wrong.

Foundational gaps don't resolve themselves as the platform grows. They compound. Every new hire inherits the SOPs. Every new business unit multiplies the manual steps. The window to fix foundational issues cheaply closes quickly after go-live.

Four mechanisms make them worse.

Every new engineer who joins the team inherits whatever controls are in place on their start date. If the controls are platform-enforced, they inherit the guardrails automatically. The system makes the right action easy and the wrong action difficult. Onboarding becomes a matter of learning the business, not learning which of fourteen naming conventions applies to which business unit.

If the controls are checklist-based, new engineers inherit a document. Or a wiki. Or a Slack message from someone who remembers how things worked six months ago. The quality of their work becomes a function of how thorough their onboarding was and how carefully they read a Confluence page that may or may not be up to date.

The more engineers you onboard, the more variation accumulates. Naming drift gets worse with every new hire. Documentation gaps multiply. Cross-team conventions diverge. The team isn't doing anything wrong. They're just operating in a system that produces drift as its default behavior, and the drift is proportional to team size.

A platform serving one business unit can absorb a surprising amount of process debt. The people involved know each other. Context gets shared informally. Workarounds get remembered.

A platform serving four business units cannot. Every manual step that exists in the operating model (registering a new source, assigning access to a new object, declaring a pipeline dependency, updating a config table, reviewing a model against naming conventions) has to happen four times, by four different teams, under four different sets of pressures. The error rate doesn't stay constant. It grows.

The platform was built to handle the first business unit. The second business unit stressed it. The third exposed the gaps. By the fourth, the team is spending more time coordinating across business units than building for any of them. None of this was visible in the original design. It becomes visible only at the scale where the gaps matter.

Platforms built around a custom SI-delivered framework, a proprietary metadata layer, or a heavily customized orchestration stack produce a specific kind of debt: the debt of vendor knowledge. The people who built the system understand it. Nobody else does. As time passes, the system gets larger, the edges get more ornate, and the cost of explaining it to a new team gets higher.

The organization reaches a point where it can't extend the platform without the original builder. Every change requires a new engagement. Every new capability has a price tag attached. The open-source world is shipping new Airflow features, new dbt patterns, new CI/CD tooling, and new governance capabilities, none of which land inside the custom framework unless someone pays for the port. The gap between what's possible and what the team can actually use widens every quarter.

This is not a problem you can engineer your way out of once you're in it. The only way to solve it is to replace the custom layer with something the internal team can own, which is a second transformation program on top of the first.

Manual processes produce manual records. Agile board tickets. IT change logs. A spreadsheet that tracks who has access to what. Each of these is updated by a person, which means each of them can drift from the actual state of the system without anyone noticing.

In a small, well-disciplined team, the drift is minor. At enterprise scale, it's structural. Documented controls say one thing. The system is configured another way. Nobody notices until a compliance review surfaces the discrepancy, or until an incident makes it obvious that the access model on paper doesn't match the access model in production.

The teams that avoid this don't have better discipline. They have infrastructure as code, automated audit trails, and platform-enforced access management. The audit log is a byproduct of the system itself, not a ledger someone has to maintain.
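Where manual records still exist, drift can at least be detected automatically. The sketch below compares documented access with what is actually granted. Both inputs are assumed to be sets of (role, object) pairs, and how the actual grants are exported from the warehouse is outside the sketch. The function name is hypothetical.

```python
def access_drift(documented, actual):
    """Compare sets of (role, object) pairs and report drift in both directions."""
    return {
        # Access that exists in the system but appears in no record.
        "granted_but_undocumented": sorted(actual - documented),
        # Access the records promise but the system does not provide.
        "documented_but_not_granted": sorted(documented - actual),
    }
```

Run on a schedule, a comparison like this surfaces the gap between paper and production before a compliance review or an incident does.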

The most dangerous assumption about foundational gaps is that they can be addressed later, once the delivery pressure eases. Delivery pressure never eases. The backlog grows. The business adds new use cases. The board adds an AI mandate. The team that was going to refactor the foundation in Q3 is now fighting fires through Q4.

Meanwhile, every new data product built on top of the existing foundation inherits the same gaps. Refactoring gets more expensive every month, not less. The window to fix foundational issues cheaply closes quickly after go-live. After that, every fix is a migration, and every migration competes with the delivery work the business is asking for.

The teams that treat operating model gaps as technical debt to be addressed later are making a bet about time that almost never pays off. The teams that treat operating model gaps as blockers to be addressed now are the ones that come out of the next three years with a platform the business trusts.

Every CEO has a GenAI mandate. Every board is asking about it. And yet a July 2025 MIT NANDA study found that 95% of enterprise GenAI pilots delivered no measurable P&L impact, despite $30–40 billion in enterprise spending. The default assumption behind those investments was that the data foundation was ready. It almost never is.

GenAI is the forcing function that makes operating model gaps impossible to hide. Wide tables, missing column descriptions, undocumented lineage, and manual access management all break AI workloads before they break human users.

A business analyst can work with a hundred-column table. They know what they're looking for, they skip the columns that don't matter, and they ignore the fields with unclear definitions. A large language model can't. When an LLM is given a wide table with inconsistent naming and missing column descriptions, it hallucinates. It picks the column that sounds right. It joins on a field that looks like a key and isn't. The output is confident and wrong.

The fix is narrow, purpose-built tables with clean semantics. Column names that describe what they contain. Column descriptions that explain business meaning. Consistent naming across related tables. Clear primary and foreign key relationships. These aren't data engineering niceties. They're the minimum viable inputs for AI that produces trustworthy answers.

Enterprises that spent three years building wide, denormalized operational reporting tables are now discovering that those tables can't be pointed at GenAI directly. They need a second modeling layer, often called a semantic layer, built for AI consumption. That layer takes real work to build. It's a project nobody scoped, running parallel to the existing delivery pressure.

For years, column descriptions were a nice-to-have. Data catalogs had them when a team made the effort. Documentation quality varied by business unit, by team lead, by quarter. The business mostly worked around the gaps.

GenAI changes that math. An LLM answering a business question needs to know what every column means. If the column descriptions are missing, stale, or wrong, the model fills in the gaps with plausible-sounding guesses. The answers come back polished and authoritative. The errors are invisible until a business user acts on a wrong number.

The operating model was supposed to decide that column descriptions are a requirement, not an afterthought. Most operating models didn't. So now the team is writing three years of back-documentation under board pressure, on top of the existing delivery work, for data products that have been live for months.

When a business user asks an LLM "why is our Q3 revenue in the Northeast region down?", the LLM's answer is only as trustworthy as the lineage of the data it's querying. Where did the number come from? What source fed it? What transformations were applied? Which version of the transformation logic was in effect when the number was computed?

Platforms without end-to-end lineage can't answer those questions. The business user doesn't know what to trust. The data team can't validate the AI's output. The GenAI initiative produces answers that are confidently wrong, fails an executive review, and gets shelved.

Lineage is an operating model decision. Platforms that made the decision to capture lineage automatically as part of the build have it. Platforms that deferred lineage to a future governance project don't. And the second category is scrambling.

Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. In addition, 63% of organizations either don't have or are unsure about having the right data management practices for AI. This is not theory. It's already arriving on board agendas.

Operational reporting has predictable access patterns. A business analyst queries the tables they've been granted access to. A dashboard uses a service account with a defined permission scope. Everyone knows what's authorized and what isn't.

GenAI workloads don't behave that way. An LLM with access to "the sales data" may try to answer a question by joining across tables that sit in different access tiers. Natural language queries don't respect the access boundaries that were designed for structured SQL. Platforms with manual access assignment and checklist-controlled permissions produce one of two outcomes: AI that can't answer the question because it can't access the data, or AI that answers the question by accessing data it shouldn't have seen.

Both outcomes are failures. The fix is access management that's granular, automated, and enforced by the system. The operating model was supposed to define that. If it didn't, the GenAI initiative is about to expose exactly which data is governed and which data is governed by accident.

The operating model problems that felt tolerable in 2023 are intolerable in 2026. The board isn't giving the data team three years to refactor. They're asking for GenAI pilots in six months and production AI in twelve.

Teams with a defined operating model and a platform that enforces it are shipping those pilots already. They're not scrambling to back-fill documentation, rebuild wide tables into semantic layers, or retrofit access management. The work was done during the build, because the operating model made it part of the build.

Teams without that foundation are rediscovering every gap under deadline pressure. The AI initiative is failing because the foundation underneath it was never ready, and GenAI is the first workload that refuses to work around the foundation's problems.

If your data platform has the symptoms described earlier in this article, your GenAI initiative will surface every one of them. On a timeline the business is about to compress.

Most executives reading this article are not at the start of a data platform project. They're twelve, eighteen, twenty-four months in. The warehouse is live. The framework is in production. The first business unit is using it. The symptoms are real, and the question isn't whether the operating model should have been defined earlier. It's what to do now.

The answer is not to rip everything out. It's also not to accept the current trajectory and hope the next phase of the build compensates for the gaps in the current one. There's a middle path, and it starts with changing what the team is working on, not what it's working with.

Decisions first. Build second. Even mid-project.

The operating model is a finite set of decisions. A working session with the right people in the room can get most of the way through the list in a week. What matters is that the decisions get made deliberately and written down, not that they get made perfectly on the first try.

The decisions that matter most, in order of impact:

Naming conventions. Pick them. Write them down. Validate them automatically in CI. Every future asset conforms. Existing assets get renamed on a defined schedule.

Ownership map. Every data product has a named owner. Every source has a named owner. Every shared model has a named owner. If ownership is unclear, that's the first decision to make, not the last.

Layering semantics. What is raw data? What is a cleaned source? What is a business entity? What is a data product? Four layers, defined crisply, consistent across business units. Not six layers with three teams using them differently.

Access and environment parity. How is access granted? How is it reviewed? What's the refresh cadence for lower environments? Are DEV and PRE_PROD in sync with PROD, and if not, is that a known and accepted limitation or a problem nobody has prioritized?

SLAs. What does the business expect? For a new KPI. For a source onboarding. For a production incident. These get documented. Trade-offs get discussed explicitly instead of assumed.

Cross-team workflows. When the second and third business units onboard, how do they request data products from the central team? How do they extend models the central team owns? How do they avoid duplicating logic that already exists? This is the workflow that scales the platform beyond its first success.

Governance. Not as a future project. As a dimension of every decision already on this list. Ownership, access, naming, and lineage are all governance. If "governance" is still on the roadmap as a separate workstream, it's already too late.

The output of this work is a document. Short, explicit, and owned by a named executive. Not a deck. Not a wiki page. A written operating model that the team can point to when decisions come up, and that the platform can enforce.
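As an illustration of what "the platform can enforce" might look like for the naming and layering decisions, here is a small sketch of a check a CI job could run. The convention itself (`<layer>_<business_unit>__<entity>`) and the four layer prefixes are assumptions made up for this example. Your operating model document would define the real ones.

```python
import re
from typing import NamedTuple, Optional

# Hypothetical convention: <layer>_<business_unit>__<entity>
# The prefixes are illustrative stand-ins for the four layers:
# raw data, cleaned source, business entity, data product.
NAME_PATTERN = re.compile(
    r"(?P<layer>raw|src|ent|dp)_(?P<unit>[a-z]+)__(?P<entity>[a-z][a-z0-9_]*)"
)


class TableName(NamedTuple):
    layer: str
    unit: str
    entity: str


def parse_name(name: str) -> Optional[TableName]:
    """Split a conforming table name into its parts, or return None."""
    match = NAME_PATTERN.fullmatch(name)
    if match is None:
        return None
    return TableName(**match.groupdict())


def find_violations(names: list[str]) -> list[str]:
    """Return every name that does not follow the convention."""
    return [name for name in names if parse_name(name) is None]


def ci_exit_code(names: list[str]) -> int:
    """Report violations and return a non-zero code so the CI job fails."""
    violations = find_violations(names)
    for name in violations:
        print(f"naming violation: {name}")
    return 1 if violations else 0
```

A name like `dp_finance__monthly_revenue` parses into layer, business unit, and entity. A name like `Orders_Final_v2` fails the build before it merges.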

The team's energy should go into operating model decisions, not rebuilding Git workflows, CI/CD, and orchestration from scratch.

If the operating model is a finite set of decisions, the infrastructure underneath it is the larger ongoing cost. Git workflows. CI/CD pipelines. Development environments. Secrets management. Orchestration. Deployment standards. Testing frameworks. Every team that builds a serious data platform eventually must build or buy all of it.

Teams that try to build the operating model and the infrastructure at the same time, with the same people, end up doing neither well. The operating model decisions get rushed because infrastructure is urgent. The infrastructure gets built without operating model clarity because decisions haven't been made yet. Both suffer.

The teams that succeed separate the two. The operating model is their work. The infrastructure underneath it is either delegated to a platform that's already built or scoped as a distinct workstream with its own ownership. When the team's meeting time is spent on operating model decisions instead of CI/CD configuration, the operating model gets defined faster, and the infrastructure stays consistent with it.

The hardest part of acting on a missing operating model is knowing where the gaps are. The executive asking, "is our operating model mature?" is usually not close enough to the platform to answer it. The people close enough to answer are often incentivized to say everything is under control.

A small set of diagnostic questions surfaces where the operating model is doing work and where it isn't. Answering them honestly takes an hour. The pattern of answers tells you where to focus first.

On enforcement. Which of your data platform controls are enforced by the system, and which depend on people following a process? If a team member fails to follow the process, does the system stop them, or does the defect reach production?

On ownership. For every data product in your platform, can you name the owner in under thirty seconds? If not, how many orphans are there, and who inherits them when something breaks?

On naming and layering. Can a new engineer look at a table name and know what layer it belongs to, which business unit owns it, and what it contains? If not, how much context do they have to ask for before they can do their job?

On vendor dependency. If the SI or original architect of your platform disengaged tomorrow, could your internal team extend the framework? If not, how much of your roadmap depends on their continued engagement, and what's the cost?

On governance. Is governance a live dimension of every decision, or is it a future project on a slide deck? If it's a future project, how long has it been there?

On GenAI readiness. Could your current platform support a GenAI product that a business user would trust with a strategic decision? If not, what specifically is missing, and how long would it take to build?

On the time window. If you did nothing to change the current trajectory, what does the platform look like in twelve months? If the answer is "worse than today," the operating model work isn't optional.
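Some of these questions can be answered mechanically instead of by asking around. As a rough sketch of the ownership question, assume the platform can export a list of data assets and a mapping of asset to named owner. Both inputs and the function name are hypothetical.

```python
from typing import Mapping, Optional


def find_orphans(
    assets: list[str], owners: Mapping[str, Optional[str]]
) -> list[str]:
    """Return assets with no named owner.

    An asset counts as an orphan when it is missing from the ownership
    map or when its owner entry is empty or blank.
    """
    return sorted(
        asset for asset in assets if not (owners.get(asset) or "").strip()
    )
```

If the list that comes back is longer than anyone expected, that is the answer to the thirty-second test.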

The operating model is the set of decisions. The platform layer is the system that makes those decisions automatic. Separating the two is how mature data organizations move fast without degrading quality as they scale.

Datacoves exists because most enterprise data teams are spending their time on the wrong layer. They're rebuilding Git workflows, configuring CI/CD, standing up orchestration, wiring secrets management, and writing deployment conventions from scratch, on top of running the business. That work is necessary. It's also not differentiated. Every enterprise data team needs the same underlying platform capabilities, and every team that builds them in-house takes six to twelve months to get there, plus ongoing maintenance that never ends.

Datacoves delivers those capabilities preconfigured, inside the customer's private cloud, running on open-source tools the internal team can own. The operating model decisions still belong to the organization. The infrastructure underneath them is already built.

Git workflows with branching conventions, pull request requirements, and automated validation on every commit. Naming conventions, testing requirements, and documentation expectations get enforced before code merges. A missed convention doesn't reach production because the system doesn't let it.

CI/CD pipelines that run dbt tests, SQL linting, governance checks, and deployment validation automatically. Quality becomes a property of the pipeline itself, regardless of how attentive the reviewer is that morning.

Managed Airflow for orchestration. Pipeline dependencies, retries, failure alerts, and scheduling work consistently across every team. My Airflow for developer testing, Teams Airflow for production. Engineers don't rebuild orchestration conventions for each new project.

In-browser VS Code environments that come up preconfigured with dbt, Python, SQLFluff, Git integration, and every tool the team needs. A new engineer opens their environment on day one and starts writing code. Onboarding time drops from weeks to hours.

Secrets management integrated with the customer's existing vault or AWS Secrets Manager. Credentials never live in code. Access is controlled by the system itself.

Deployment standards that promote code from development through testing to production on the same workflow every time. No manual deployment steps. No scripts that only one person knows how to run.

Governance enforcement at commit time. dbt-checkpoint catches quality issues before they reach the pipeline. SQLFluff keeps SQL consistent. Naming conventions validate in CI. The team doesn't have to remember the rules because the system enforces them.

Every control listed above is a system-enforced version of a checklist most enterprise platforms maintain manually. The difference in outcomes is structural, not incremental. A platform that enforces these controls automatically produces consistent quality at any team size. A platform that depends on discipline degrades as the team grows.

Datacoves is built around the assumption that the operating model is the customer's work, and the infrastructure that enforces the operating model should be the platform's work. That separation is what lets the customer's team spend its time on decisions that differentiate the business, not on infrastructure that every data team needs and no data team should have to build.

For a team already running on Snowflake with a custom framework or an SI-built platform, Datacoves is the alternative to a second transformation program. Instead of rebuilding the infrastructure layer internally or paying the SI to port new capabilities, the team moves to a platform that already has them. The operating model work the team needs to do anyway becomes the focus. The infrastructure underneath it is no longer the team's ongoing cost.

The customers who've made this move describe the outcome the same way: the engineering team stopped maintaining plumbing and started shipping data products. Guitar Center onboarded in days. Johnson & Johnson described it as a framework accelerator. Those aren't luck. They're the result of a platform layer that enforces the operating model by design.

If the symptoms earlier in this article match what you're seeing, the next step is a conversation about where the gaps are and what the platform layer can take off your team's plate. Book a free architecture review. The review surfaces the operating model gaps driving the symptoms the business is already complaining about, and it's the fastest way to see whether the platform layer can shorten the path to the outcomes you expected when you started the build.

A Data Operating Model is the work most enterprises skip because nobody told them it was the work. The tool purchase felt like progress. The SI engagement felt like progress. The first use cases shipping felt like progress. By the time the symptoms surfaced, the decisions that would have prevented them had been deferred long enough to become expensive.

The executives who get this right aren't smarter than the ones who don't. They're just earlier. They define the operating model before the build starts, or they stop the build long enough to define it once they realize it was never decided. The teams that do that work once ship data products for years afterward. The teams that don't spend those same years compensating for decisions that were never made.

If the symptoms in this article match what you're seeing in your own platform, the message is simple. The tools aren't failing you. The operating model underneath them is, and it will keep failing until somebody decides to define it. That work is smaller than it looks, it's faster to do than to defer, and it's the only path to the outcomes the business was expecting when the project started.

Your team has spent eighteen months proving they can build. The next eighteen months are going to be about whether the business trusts what got built. That outcome is decided at the operating model layer, not at the tool layer. The sooner leadership treats it that way, the sooner the symptoms stop.

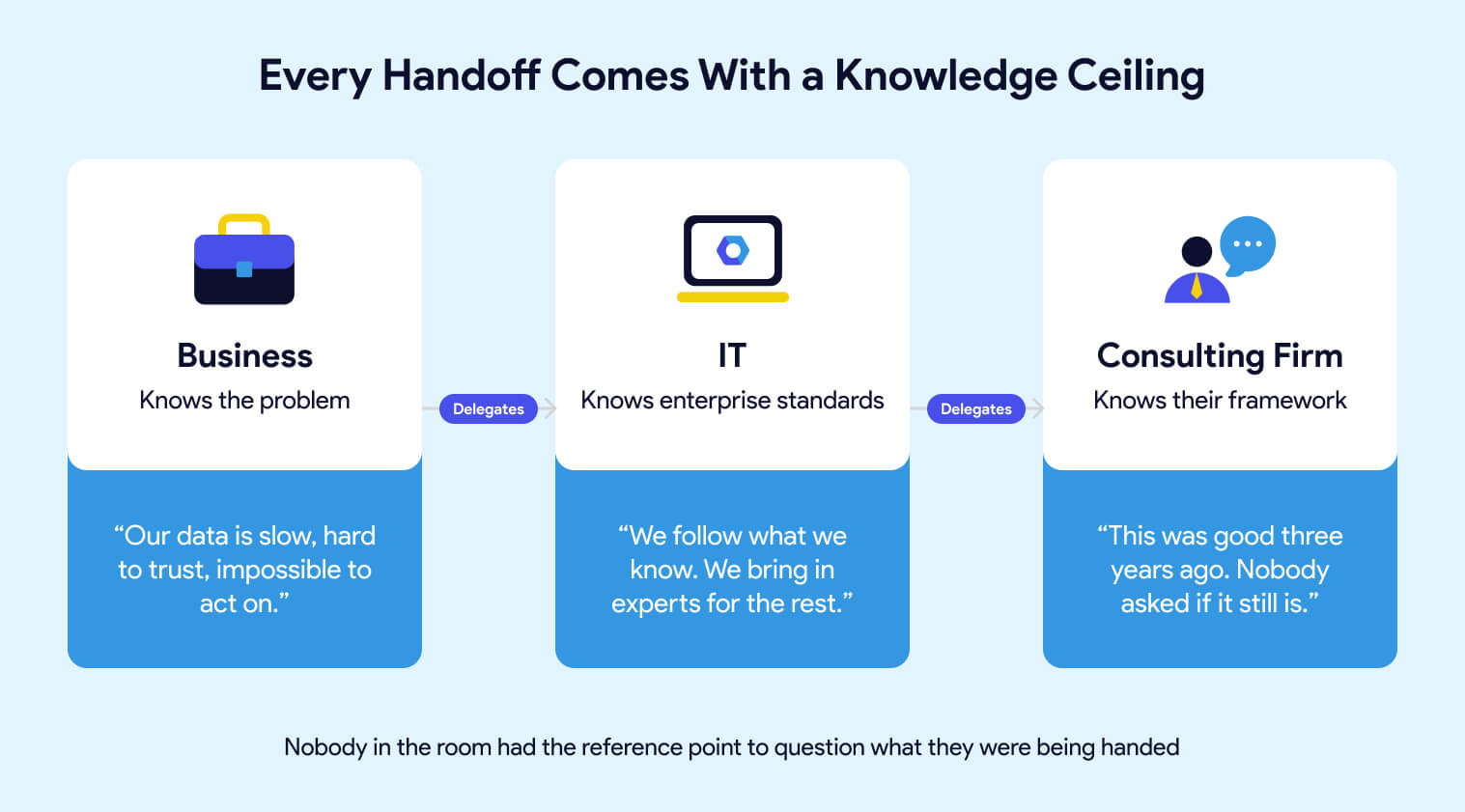

Most enterprise data platform projects don't fail because of the tools. They fail because nobody in the room, not IT, not the consulting firm, not the vendor, had ever seen what a well-built platform actually looks like. So decisions get made by people delegating to other people who are also delegating. Enterprise standards get followed without being questioned. And two years later, you have a platform that works well enough to demo, moves too slowly to trust, and can only be changed by the team that built it. That's a predictable outcome of a broken process.

This article explains why it happens, what the warning signs look like from the outside, and what you should demand before you hand the keys to anyone again.

Two years ago, you had a problem. Your data was slow to get to, hard to trust, and impossible to act on. You brought it to IT. IT brought it to a consulting firm with the right logos and the right industry experience. Contracts were signed. A roadmap was presented. Everyone nodded.

What nobody said out loud is that each handoff in that chain came with a knowledge ceiling. You knew the business problem but not the technical solution. IT knew their enterprise standards but not necessarily what best-in-class data engineering looks like. The consulting firm knew their framework, the one they'd been building for years, the one that was genuinely good when they built it. Whether it was still the right answer for where the industry is today was a question nobody asked, because nobody in the room had the reference point to ask it.

This isn't about blame. The system worked exactly as designed. It just wasn't designed to produce what you actually needed.

You don't need to understand the architecture to recognize that something is wrong. You've seen it in other ways.

Reports that take three sprints to change. Business users who have stopped asking for new metrics because the process is too slow. Analysts who still use Excel because the data in the platform doesn't match what they expect. A go-live that keeps moving. Issues that surface in final testing, never earlier. A data team that spends more time firefighting than building.

These aren't symptoms of a tool problem. They're symptoms of a platform that was built to pass a demo, not to operate at scale. The foundation looked solid in the PowerPoint. Then one corner started sinking. Someone ran outside, propped it up, called it good enough, and everyone went back inside to talk about the drapes. Until the next corner started sinking.

The business lives with the consequences of decisions that were never explained to them. That's the part that should make you uncomfortable. Not because anyone was careless, but because the people making the technical decisions had never been asked to connect them back to your actual business problem.

Consulting firms sell services. The migration is the engagement. They have every incentive to deliver something that looks complete at handoff, and very little incentive to think about what happens eighteen months later when your team needs to extend it, upgrade it, or adapt it to something they didn't anticipate. If you're evaluating migration partners, here's what to look for.

That's just the business model. A platform built on open-source tools with documented conventions, enforced by the system rather than by people remembering to follow a process, doesn't generate the same ongoing billing as one that requires the original team to come back every time something needs to change. Someone who built one of these frameworks told me it was "good three years ago." That's a telling phrase. Good three years ago means it was already aging when it was delivered.

dbt, Airflow, SQLFluff, and the open-source ecosystem around them move fast because there are thousands of contributors and companies whose entire existence depends on making them better. A proprietary consulting framework moves at the pace of whoever owns it, if it moves at all.

The gap compounds. New Airflow capabilities require the consulting firm to schedule an upgrade. Framework changes require them to build it. Every month that passes, the distance between what's possible and what your team can actually access gets wider. Your engineers know it. They read the release notes. They see what other teams are doing. They just can't do it themselves because the platform wasn't built to be owned by them.

The teams that don't have this problem didn't get lucky. They built on a foundation that was designed to evolve, with tools maintained by communities that have no interest in keeping anyone dependent.

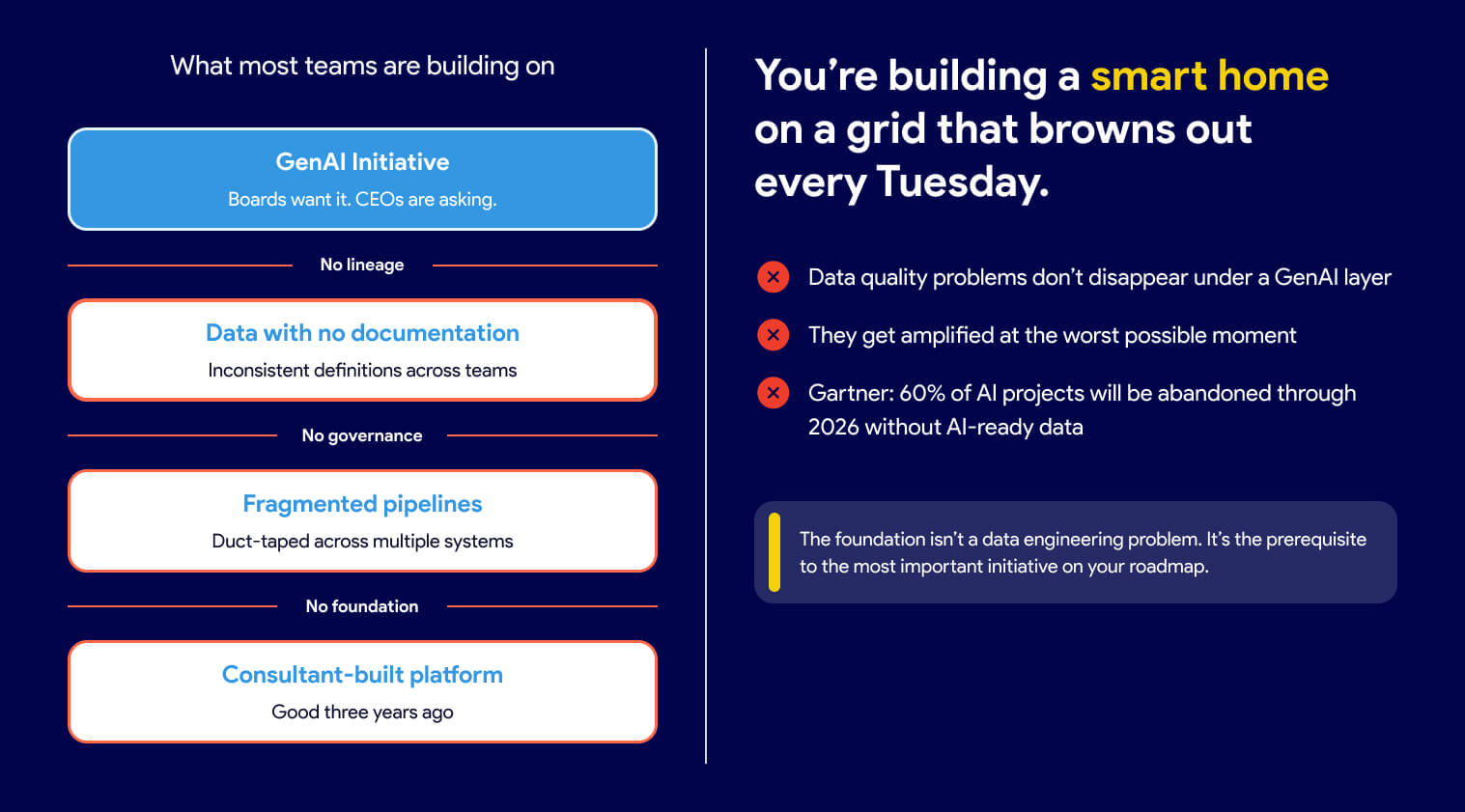

Every business leader has a GenAI mandate right now. Boards want it. CEOs are asking about it. And the instinct is to treat it as a separate initiative, something you layer on top of what you already have.

That's the mistake.

Think about your power grid. Nobody questions whether the lights will turn on when they flip the switch. That reliability exists because someone built generation, transmission, and distribution correctly before anyone thought about what to plug in. You're being asked to build a smart home on a grid that browns out every Tuesday.

AI is only as reliable as the data underneath it. If your data has no lineage, inconsistent definitions, and no documentation, your AI initiative will produce outputs nobody trusts, at scale, faster than you've ever produced untrustworthy outputs before.

According to Gartner, organizations will abandon 60% of AI projects unsupported by AI-ready data by 2026.

The data quality problems that were always there don't disappear under a GenAI layer. They get amplified and made visible in the worst possible moment, in front of the stakeholders you most need to impress.

The foundation isn't a data engineering problem. It's the prerequisite to the most important initiative on your roadmap. Getting it right isn't IT's job to delegate. It's your decision to make.

The difference between a platform that scales and one that doesn't usually isn't visible in a demo. It shows up six months after go-live, when the team doubles, when a consultant rolls off, when someone new joins and has to figure out how things work by reading documentation that may or may not exist.

A well-built platform doesn't rely on people following the process correctly every time. It makes the wrong action difficult. Automated checks run before anything can merge, so code quality doesn't depend on a reviewer having a good day. Naming conventions live in the tooling itself, not in a document that gets read once during onboarding. Pipeline dependencies are declared explicitly and validated automatically, so a missed configuration doesn't quietly cause a downstream failure three sprints later. A new engineer who joins the team inherits those guardrails on day one without reading a document or asking anyone how things work. The knowledge is in the system, not in the heads of the people who built it.
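As one hedged illustration of "declared explicitly and validated automatically," the sketch below checks a pipeline definition before anything runs. It uses Python's standard `graphlib` module (Python 3.9+). The `validate_pipeline` function and the task names are hypothetical. An orchestrator such as Airflow performs its own validation, and this is not its API.

```python
from graphlib import CycleError, TopologicalSorter


def validate_pipeline(deps: dict[str, set[str]]) -> list[str]:
    """Check a declared dependency map and return a list of problems.

    `deps` maps each task name to the set of tasks it depends on.
    An empty list means the declaration is consistent.
    """
    problems = []

    # Every upstream task must itself be declared somewhere.
    for task, upstream in deps.items():
        for name in sorted(upstream):
            if name not in deps:
                problems.append(f"{task} depends on undeclared task {name}")

    # The graph must have a valid execution order (no cycles).
    try:
        TopologicalSorter(deps).prepare()
    except CycleError as exc:
        cycle = " -> ".join(exc.args[1])
        problems.append(f"dependency cycle: {cycle}")

    return problems
```

Run as a merge check, a function like this turns a missed configuration into a failed build today instead of a downstream failure three sprints from now.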

Good data engineering is a thought-out combination of tools that work well together, each maintained by a community that cares about making it better. The people improving dbt, Airflow, and SQLFluff aren't doing it on a consulting firm's project timeline. They're doing it because their companies depend on those tools getting better continuously.

dbt-coves automates the tedious parts of dbt development. dbt-checkpoint enforces data quality standards at commit time, before bad code ever reaches a pipeline. SQLFluff keeps SQL consistent across every engineer on the team. Snowcap manages Snowflake infrastructure as code, the thing that's perpetually "on the roadmap" in consulting-built platforms but never quite arrives.

None of these tools is the answer on its own. The answer is that someone chose them deliberately, integrated them carefully, and built a platform where they work together. That's the difference between a workshop that was designed and one that just accumulated tools over time.

It also means your platform can adapt. When a better approach emerges, you adopt it. Your engineers can use Claude Code, GitHub Copilot, Snowflake Cortex, or any other AI tool that fits their workflow. They're not waiting for a vendor to build an integration or a consulting firm to schedule a framework update. Some platforms give you one AI tool and call it done. That's a product decision masquerading as a strategy.

If you're about to start a data platform engagement with a consulting firm, a platform vendor, or anyone else, these are the questions worth asking before you sign.

Can you show me a client whose team ships on their own cadence, without your firm in the critical path for day-to-day decisions? Managing infrastructure is a legitimate service. What you want to avoid is a vendor who ends up managing your decisions, your roadmap, and your team's ability to move without them. That relationship tends to get more expensive over time, not less.

What open-source tools does your framework use, and how do you handle upgrades when new versions are released? A proprietary framework that wraps open-source tools is only as current as whoever maintains the wrapper. Find out who that is and how often it happens.

What happens if a business user needs a new metric? Walk me through the process end-to-end, including who approves it, how long it takes, and what the business user can do themselves. The answer will tell you whether they're building you an industrial kitchen or planning to cook everything for you. Business users are smart enough to use a knife. A platform built on that assumption looks very different from one built on the assumption that they aren't.

Who on our team will own the business logic, the models, and the deployment process? Not the infrastructure, the work. A good partner manages the complexity underneath so your engineers can focus on delivering. If the answer to ownership is vague, the engagement was designed around their continuity, not yours.

How do you handle the Snowflake security model, and can you show me examples? This one is worth asking even if you don't fully understand the answer. Pay attention to whether they answer with confidence and specificity, or whether they say "this came directly from Snowflake" as if that settles it. It doesn't. A recommendation from a Snowflake account team is a starting point, not an architecture.

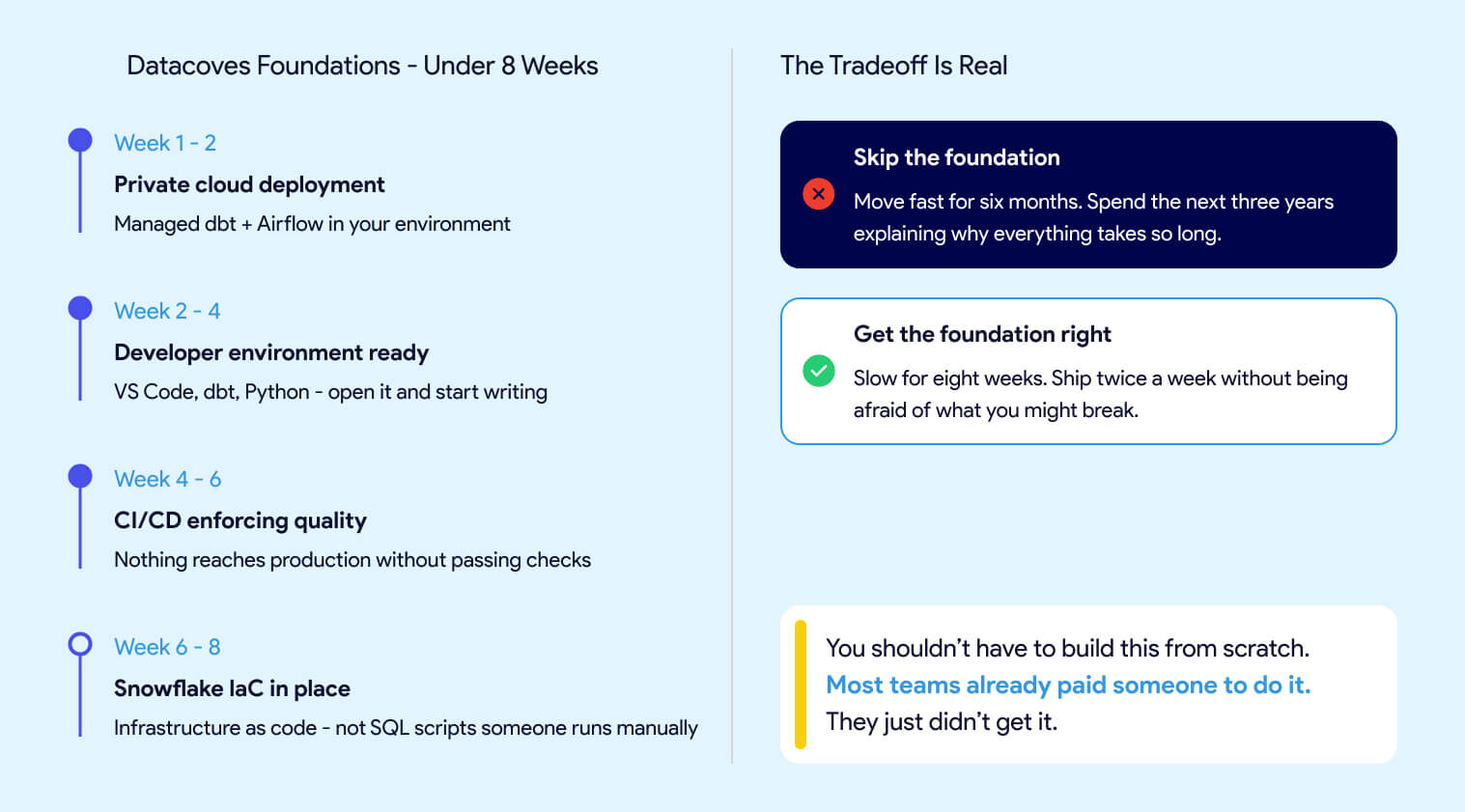

Most leaders assume that doing things right means slowing down. That getting the foundation in place before starting to deliver means months of invisible work before anyone sees results. That's not how this works.

The Datacoves Foundation engagement takes less than two months.

In that time, you get:

The goal is to move slowly to move fast. Not slow forever. Slow for eight weeks, so your team isn't spending the next three years propping up corners. Skip the foundation, and you move fast for the first six months, then spend the next three years explaining why everything takes so long. Get it right and your team ships twice a week without being afraid of what they might break.

Guitar Center onboarded in days, not months. Johnson & Johnson described it as a framework accelerator. A team at DataDrive saved over 200 hours annually by replacing a fragile self-built pipeline with something that actually held up at scale. None of them spent 18 months waiting to find out if it would work.

Without a solid foundation, your GenAI initiative will surface data problems at the worst possible moment. Your business users will keep working around the platform instead of in it. And your engineers will keep moving carefully instead of moving fast.

You shouldn't have to build this from scratch. Most teams already paid someone to do it. They just didn't get it.

Data orchestration is the foundation that ensures every step in your data value chain runs in the correct order, with the right dependencies, and with full visibility. Without it, even the best tools such as dbt, Airflow, Snowflake, or your BI platform operate in silos. This disconnect creates delays, data fires, and unreliable insights.

For executives, data orchestration is not optional. It prevents fragmented workflows, reduces operational risk, and helps teams deliver trusted insights quickly and consistently. When orchestration is built into the data platform from the start, organizations eliminate hidden technical debt, scale more confidently, and avoid the costly rework that slows innovation.

In short, data orchestration is how modern data teams deliver reliable, end-to-end value without surprises.

In today’s fast-paced business environment, executives are under increased pressure to deliver quick wins and measurable results. However, one capability that is often overlooked is data orchestration.

This oversight can sabotage progress: data modernization efforts fall short of their promise and fail to deliver the expected ROI and efficiency gains.

In this article, we will explain what data orchestration is, the risks of not implementing proper data orchestration, and how executives benefit from end-to-end data orchestration.

Data orchestration ensures every step in your data value chain runs in the right order, with the right dependencies, and with full visibility.

Data orchestration is the practice of coordinating all the steps in your organization’s data processes so they run smoothly, in the right order, and without surprises. Think of it as the conductor ensuring each instrument plays at the right time to create beautiful music.

Generating insights is a multi-tool process, and that setup has a problem: each tool may include its own scheduler, and each runs in a silo. Even if an upstream step fails or is delayed, the subsequent steps still run. This disconnect leads to surprises for executives expecting trusted insights. This, in turn, leads to delays and data fires, which are disruptive and inefficient for the organization.

Imagine you are baking a chocolate cake. You need a recipe, all the ingredients, and a functioning oven. However, you wouldn’t turn on the oven before buying the ingredients and mixing the batter. Without someone orchestrating all the steps in the right sequence, the process becomes disorganized, inefficient, and wasteful. You also know not to continue if there is a critical issue, such as spoiled milk.

Data orchestration solves the problem of having siloed tools by connecting all the steps in the data value chain. This way, if one step is delayed or fails, subsequent steps do not run. With a data orchestration tool, we can also notify someone to resolve the issue so they can act quickly, reducing fires and providing visibility to the entire process.
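The behavior described above can be sketched in a few lines of plain Python. This is an illustration of the idea only, not how Airflow or any specific orchestrator is implemented. The `run_pipeline` function, the step names, and the `notify` callback are all hypothetical, and the sketch assumes every step appears as a key in `deps`.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_pipeline(
    steps: dict[str, Callable[[], None]],
    deps: dict[str, set[str]],
    notify: Callable[[str], None],
) -> dict[str, str]:
    """Run steps in dependency order and stop work downstream of a failure.

    `deps` maps every step to the set of steps it depends on.
    Returns each step's status: "success", "failed", or "skipped".
    """
    status: dict[str, str] = {}

    # static_order() yields each step only after all of its upstream steps.
    for name in TopologicalSorter(deps).static_order():
        if any(status[up] != "success" for up in deps.get(name, ())):
            # An upstream step failed or was skipped: do not run this one.
            status[name] = "skipped"
            continue
        try:
            steps[name]()
            status[name] = "success"
        except Exception as exc:
            status[name] = "failed"
            notify(f"{name} failed: {exc}")

    return status
```

If the transformation step fails, the publishing step after it is skipped instead of running on stale or broken data, and the failure message goes to whoever `notify` reaches. In the cake analogy, this is the moment someone notices the spoiled milk and stops before turning on the oven.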

ETL (Extract, Transform, and Load) focuses on moving and transforming data, but data orchestration is about making sure everything happens in the right sequence across all tools and systems. It’s the difference between just having the pieces of a puzzle and putting them together into a clear picture.

Without data orchestration, even the best tools operate in silos, creating delays, data fires, and unreliable insights.

Executives make many decisions but rarely have the time to dive into technical details. They delegate research and expect quick wins, which often leads to mixed messaging. Leaders want resilient, scalable, future-proof solutions, yet they also pressure teams to deliver “something now.” Vendors exploit this tension. They sell tools that solve one slice of the data value chain but rarely explain that their product won't fix the underlying fragmentation. Quick wins may ship, but the systemic problems remain.

Data orchestration removes this friction. When workflows are unified, adding steps to the data flow is straightforward, pipelines are predictable, and teams deliver high-quality data products faster and with far fewer surprises.

A major Datacoves customer summarized the difference clearly:

“Before, we had many data fires disrupting the organization. Now issues still occur, but we catch them immediately and prevent bad data from reaching stakeholders.”

Without orchestration, each new tool adds another blind spot. Teams don’t see failures until they hit downstream systems or show up in dashboards. This reactive posture creates endless rework, late-night outages, and a reputation problem with stakeholders.

With orchestration, failures surface early. Dependencies, quality checks, and execution paths are clear. Teams prevent incidents instead of reacting to them.

Data orchestration isn’t just about automation; it’s about governance.

It ensures:

This visibility dramatically improves trust. Stakeholders no longer get “chocolate cake” made with spoiled milk. A new tool may bake faster, but if upstream data is broken, the final product is still compromised.

Orchestration ensures the entire value chain is healthy, not just one ingredient.

Modern data teams rely heavily on tools like dbt and Airflow, but these tools do not magically align themselves. Without orchestration:

With orchestration in place, ingestion, dbt scheduling, and activation become reliable, governed, and transparent, ensuring every step runs at the right time, in the right order, with the right dependencies. Learn more in our guide on the difference between dbt Cloud and dbt Core.

For more details on how dbt schedules and runs models, see the official dbt documentation.

To learn how Airflow manages task dependencies and scheduling, visit the official Apache Airflow documentation.

It is tempting to postpone data orchestration until the weight of data problems makes it unavoidable. Even the best tools and talented teams can struggle without a clear orchestration strategy. When data processes aren’t coordinated, organizations face inefficiencies, errors, and lost opportunities.

Implementing data orchestration early reduces hidden technical debt, prevents rework, and helps teams deliver trusted insights faster.

When data pipelines rely on multiple systems that don’t communicate well, teams spend extra time manually moving data, reconciling errors, and firefighting issues. This slows decision-making and increases operational costs.

Common symptoms of fragmented tools include:

Many organizations take a “quick wins” approach, only to discover that the cost of moving fast was a long-term loss of agility. The approach may deliver immediate results, but it leads to technical debt, wasted spend, and fragile data processes that are hard to scale. DataDrive’s journey is a great example. Before adding data orchestration, they had to debug each step of their disconnected process whenever an issue occurred. Now it is clear where an issue has occurred, so they can resolve it faster for their stakeholders.

As organizations grow, the absence of orchestration forces teams to revisit and fix processes repeatedly. Embedding orchestration from the start avoids repeated firefighting, accelerates innovation, and makes scaling smoother. Improving one step alone cannot deliver the desired outcome, just like a single egg cannot make a cake.

Organizations without data orchestration are effectively flying blind. Disconnected processes run out of order, and issues are discovered by frustrated stakeholders. Resource-constrained data teams spend their time firefighting instead of delivering new insights. The result is delays in decision-making, higher operating costs, and an erosion of trust in data.

If data orchestration is so important, why do organizations go without it? We often hear some common objections:

Many organizations have not heard of data orchestration, and tool vendors rarely highlight the need for it. Often it is only after a painful experience that they recognize how essential it is.

It’s true that data orchestration adds another layer, but without it, you have disconnected, siloed processes. The real cost comes from chaos, not from coordination.

Vendor sprawl can indeed introduce additional risks. That’s why all-in-one platforms like Datacoves reduce integration overhead by bundling enterprise-grade orchestration, such as Airflow, without increasing vendor lock-in. Explore Datacoves’ Integrated Orchestration Platform.

Data value chains are inherently complex, with multiple data sources, ingestion processes, transformations, and data consumers. Data orchestration does not introduce complexity; it provides visibility and control over this complexity.

It may seem reasonable to postpone data orchestration in the short term. But every mature data organization, large or small, eventually needs to scale. By building data orchestration into the data platform from the start, you set your teams up for success, reduce firefighting, and avoid costly and time-consuming rework. Most importantly, the business receives trustworthy insights faster.

Implementing data orchestration doesn’t have to be complicated. The key is to approach it strategically, ensuring that every process is aligned, visible, and scalable.

Begin by mapping your existing data processes and identifying where inefficiencies or risks exist. Knowing exactly how data flows across teams and tools allows you to prioritize the areas that will benefit most from orchestration.
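As a rough illustration of what mapping data flows can look like in practice, the sketch below records which steps depend on which and derives a valid run order from that map. The step names are hypothetical, and the standard-library `graphlib` module stands in for whatever orchestration tool you eventually choose.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline steps, each mapped to the steps it depends on.
dependencies = {
    "ingest_orders": set(),
    "ingest_customers": set(),
    "transform_sales": {"ingest_orders", "ingest_customers"},
    "refresh_dashboard": {"transform_sales"},
}


def execution_order(deps):
    """Return one valid run order in which every step follows its dependencies."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

Writing the dependencies down, even in a form this simple, makes it obvious which steps can never safely run before others.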

Key outcomes:

Focus first on automating repetitive and error-prone steps such as data collection, cleaning, and routing. Automation reduces manual effort, frees up your team for higher-value work, and ensures processes run consistently.

Key outcomes:

Implement dashboards or monitoring tools that provide executives and teams with real-time visibility into data flows. Early detection of errors prevents costly mistakes and increases confidence in the insights being delivered.
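To make early detection concrete, here is a minimal sketch, assuming each pipeline step is a plain Python callable. It runs steps in dependency order, records a status for each one, and skips anything downstream of a failure. The function name `run_pipeline` and the step names are hypothetical; a real orchestrator such as Airflow provides this kind of status tracking out of the box.

```python
from graphlib import TopologicalSorter


def run_pipeline(steps, deps):
    """Run each step after its dependencies; skip steps downstream of a failure.

    steps: dict of step name -> zero-argument callable (hypothetical tasks)
    deps:  dict of step name -> set of step names it depends on
    Returns a dict of step name -> "success", "failed: <reason>", or "skipped".
    """
    status = {}
    for name in TopologicalSorter(deps).static_order():
        # A step only runs if every one of its dependencies succeeded.
        if any(status.get(dep) != "success" for dep in deps.get(name, ())):
            status[name] = "skipped"
            continue
        try:
            steps[name]()
            status[name] = "success"
        except Exception as exc:
            status[name] = f"failed: {exc}"
    return status


def ok():
    pass


def broken():
    raise ValueError("source file missing")


steps = {"ingest": ok, "transform": broken, "publish": ok}
deps = {"ingest": set(), "transform": {"ingest"}, "publish": {"transform"}}
report = run_pipeline(steps, deps)
print(report)
```

The report shows exactly which step failed and which steps were held back as a result, instead of leaving stakeholders to discover the problem in a stale dashboard.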

Key outcomes:

Start small with high-impact processes and expand orchestration across more workflows over time. Scaling gradually ensures that teams adopt the changes effectively and that processes remain manageable as data volume grows.

Key outcomes:

Select tools that integrate well with your existing systems and provide flexibility for future growth. Popular tools in this space include Airflow for orchestration and dbt for transformation, but the best choice depends on your organization’s specific workflows and needs. Explore how these capabilities come packaged in the Datacoves Platform Features overview.

Key outcomes:

Investing in data orchestration delivers tangible business value. Organizations that implement orchestration gain efficiency, reliability, and confidence in their decision-making.

Data orchestration reduces manual work, prevents duplicated efforts, and streamlines processes. Teams can focus on higher-value initiatives instead of firefighting data issues.

With coordinated workflows and monitoring, executives and stakeholders can trust the data they rely on. Decisions are backed by accurate, timely, and actionable insights.

By embedding data orchestration early, organizations avoid expensive rework, reduce errors, and prevent the accumulation of technical debt from ad hoc solutions.

Data orchestration ensures that data pipelines scale smoothly as the organization grows. Teams can launch new analytics initiatives faster, confident that their underlying processes are robust and repeatable.

Executives gain a clear view of the entire data lifecycle, enabling better oversight, risk management, and strategic planning.

Data orchestration should not be seen as a “nice to have” feature that can be postponed. Mature organizations understand that it is the foundation needed to deliver trusted insights faster. Without it, companies risk siloed tools, more data firefighting, and eroding trust in both the data and the data team. With it, organizations gain visibility, agility, and the confidence that the insights fueling decisions are accurate.

The real question for strategic leaders is whether to try to piece together disconnected solutions, focusing only on short-term wins, or invest in data orchestration early and unlock the full potential of a connected ecosystem.

For executives, prioritizing data orchestration will mean fewer data fires, accelerated innovation, and an environment where trusted insights flow as reliably as the business demands.

To see how orchestration is built into the Datacoves platform, visit our Integrated Orchestration page.

Don’t wait until complexity forces your hand. Your team deserves to move faster and fight fewer fires.

Book a personalized demo to see how data orchestration with Datacoves helps leaders unlock value from day one.

Large Language Models (LLMs) like ChatGPT and Claude are becoming common in modern data workflows. From writing SQL queries to summarizing dashboards, they offer speed and support across both technical and non-technical teams. But as organizations begin to rely more heavily on these tools, the risks start to surface.

The dangers of AI are not in what it cannot do, but in what it does too confidently. LLMs are built to sound convincing, even when the information they generate is inaccurate or incomplete. In the context of data analytics, this can lead to hallucinated metrics, missed context, and decisions based on misleading outputs. Without human oversight, these issues can erode trust, waste time, and create costly setbacks.

This article explores where LLMs tend to go wrong in analytics workflows and why human involvement remains essential. Drawing from current industry perspectives and real-world examples, we will look at how to use LLMs effectively without sacrificing accuracy, accountability, or completeness.

LLMs like ChatGPT and Claude are excellent at sounding smart. That’s the problem.

They’re built to generate natural-sounding language, not truth. So, while they may give you a SQL query or dashboard summary in seconds, that doesn’t mean it’s accurate. In fact, many LLMs can hallucinate metrics, invent dimensions, or produce outputs that seem plausible but are completely wrong.

You wouldn’t hand over your revenue targets to someone who “sounds confident,” right? So why are so many teams doing that with AI?

Here's the challenge. Even experienced data professionals can be fooled. The more fluent the output, the easier it is to miss the flaws underneath.

This isn’t just theory. Castor warns that “most AI-generated queries will work, but they won’t always be right.” That tiny gap between function and accuracy is where risk lives.

If you’re leading a data-driven team or making decisions based on LLM-generated outputs, these are the real risks you need to watch out for.

LLMs can fabricate filters, columns, and logic that don’t exist. In the moment, you might not notice, but if those false insights inform a board meeting slide or a product decision, the damage is done.

Here is an example in which ChatGPT was given an image and asked to point out the three differences between the two pictures, as illustrated below:

Here is the output from ChatGPT:

Here are the 3 differences between the two images:

Let me know if you want these highlighted visually!

When asked to highlight the differences, ChatGPT produced the following image:

As you can see, ChatGPT distorted the information and exaggerated the differences in the image. Only one of its three answers (the shirt stripe) was correct. It missed the differences in the sock and the hair, and it even changed the author in the copyright!

AI doesn’t know your KPIs, fiscal calendars, or market pressures. Business context still requires a human lens to interpret properly. Without that, you risk misreading what the data is trying to say.

AI doesn’t give sources or confidence scores. You often can’t tell where a number came from. Secoda notes that teams still need to double-check model outputs before trusting them in critical workflows.
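One lightweight way to double-check a generated query is to compare the names it uses against the tables and columns you know exist. The sketch below is a deliberately crude illustration, not a SQL parser: the keyword list is intentionally small, the table and column names are hypothetical, and column aliases will be flagged too. Its only job is to surface names a human should look at before the query is trusted.

```python
import re

# Words that look like identifiers but are SQL syntax; a deliberately small list.
SQL_KEYWORDS = {
    "select", "from", "where", "group", "by", "order", "as", "and", "or",
    "sum", "count", "avg", "min", "max", "on", "join", "left", "inner",
    "desc", "asc", "limit", "having", "distinct",
}


def unknown_identifiers(sql, known_names):
    """Return identifiers in `sql` that are neither SQL keywords nor known names."""
    without_strings = re.sub(r"'[^']*'", "", sql)  # drop string literals
    tokens = re.findall(r"[A-Za-z_][A-Za-z0-9_]*", without_strings)
    known = {name.lower() for name in known_names}
    return sorted({token.lower() for token in tokens} - SQL_KEYWORDS - known)


# Hypothetical schema and a hypothetical LLM-generated query.
known = {"orders", "order_date", "region", "net_revenue"}
generated = (
    "SELECT region, SUM(gross_margin) FROM orders "
    "WHERE order_date >= '2024-01-01' GROUP BY region"
)
flagged = unknown_identifiers(generated, known)
print(flagged)
```

Here the query runs its check against a schema with no `gross_margin` column, so that name is flagged for review. A check like this does not prove a query is right; it only catches one common kind of invented detail.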

One of the great things about LLMs is how accessible they are. But that also means anyone can generate analytics even if they don’t understand the data underneath. This creates a gap between surface-level insight and actual understanding.

Over-relying on LLMs can create more cleanup work than if a skilled analyst had done the job from the start, and that cleanup slows teams down. As we noted in Modern Data Stack Acceleration, true speed comes from workflows designed with governance and best practices.

If you’ve ever skimmed an LLM-generated response and thought, “That’s not quite right,” you already know the value of human oversight.

AI is fast, but it doesn’t understand what matters to your stakeholders. It can’t distinguish between a seasonal dip and a business-critical loss. And it won’t ask follow-up questions when something looks off.

Think of LLMs like smart interns. They can help you move faster, but they still need supervision. Your team’s expertise, your mental model of how data maps to outcomes, is irreplaceable. Tools like Datacoves embed governance throughout the data journey to ensure humans stay in the loop. Or as Scott Schlesinger says, “AI is the accelerator, not the driver.” It’s a reminder that human hands still need to stay on the wheel to ensure we’re heading in the right direction.

Datacoves helps teams enforce best practices around documentation, version control, and human-in-the-loop development. By giving analysts structure and control, Datacoves makes it easier to integrate AI tools without losing trust or accountability. Here are some examples where Datacoves’ integrated GenAI can boost productivity while keeping you in control.

Want to keep the speed of LLMs and the trust of your team? Here’s how to make that balance work.

AI can enhance analytics but should not be used blindly. LLMs bring speed, scale, and support, but without human oversight they can also introduce costly errors that undermine trust in decision-making.

Human judgment remains essential. It provides the reliability, context, and accountability that AI alone cannot deliver. Confidence doesn’t equal correctness, and sounding right isn’t the same as being right.

The best results come from collaboration between humans and AI. Use LLMs as powerful partners, not replacements. How is your team approaching this balance? Explore how platforms like Datacoves can help you create workflows that keep humans in the loop.

The world of data moves at a lightning-fast pace, and you may be looking to keep up by migrating your data to a modern infrastructure. As you plan your data migration, you’ll quickly see the many moving parts involved, from data compatibility and security to performance optimization. Choosing the right partner is critical: the wrong choice can lead to data loss or corruption, compliance failures, project delays, hidden costs, and more. At worst, you could end up with a costly new process that fails to gain user adoption! This article provides 10 key factors to consider in a partner to ensure these pitfalls don’t happen to you, guiding you toward a smooth and successful migration. Let’s dive in!

Data migration is the process of moving data pipelines from one platform to another. This process can include upgrading or replacing legacy platforms, performing critical maintenance, or transitioning to new infrastructure such as a cloud platform. Whether it's moving data to a modern data center or migrating workloads to the cloud, data migration is a pivotal undertaking that demands meticulous planning and execution.

Organizations may embark on this complex journey for many reasons. A common driver is the need to modernize and adopt cutting-edge solutions like cloud platforms such as Snowflake, which offer unparalleled scalability, performance, and the flexibility of ephemeral resources. Data migration may also be necessitated by mergers and acquisitions, where consolidating and standardizing data across multiple systems becomes essential for unified operations. Additionally, organizations might pursue migration to improve security, streamline workflows, or boost analytics capabilities.

Done right, data migration can be transformative, enhancing data usage and enabling organizations to unlock new opportunities for efficiency, deeper insights, and strategic growth.

Migrating data is a complex undertaking with many moving parts that vary based on your current system and the target system. Careful assessment of your current state and your desired future state is a critical step that should never be overlooked in this planning process. Key considerations include data security, optimizing configurations in the new environment, and transitioning existing pipelines seamlessly. Joe Reis and Matt Housley often emphasize that much of data engineering revolves around "plumbing"—the foundational connections and data flows—which must be meticulously managed for any successful migration.

A lift-and-shift approach, where pipelines are simply moved without modifications, should be avoided as much as possible. This method often undermines the purpose of migrating in the first place: to capitalize on modern features and enhancements offered by newer tools, such as dbt, to improve data quality, documentation, and impact analysis. Moving to dbt without rethinking how data is cleansed and transformed can lead to outcomes that are worse than your current state, such as increased compute costs and difficulty debugging issues.

Given these complexities, detailed planning, skilled execution, prioritization, decommissioning unused assets, and effective risk management are crucial for a successful migration. Achieving this demands experienced professionals who can execute flawlessly while remaining adaptable to unexpected challenges.