Most enterprise data platform projects don't fail because of the tools. They fail because nobody in the room, not IT, not the consulting firm, not the vendor, had ever seen what a well-built platform actually looks like. So decisions get made by people delegating to other people who are also delegating. Enterprise standards get followed without being questioned. And two years later, you have a platform that works well enough to demo, moves too slowly to trust, and can only be changed by the team that built it. That's a predictable outcome of a broken process.

This article explains why it happens, what the warning signs look like from the outside, and what you should demand before you hand the keys to anyone again.

The delegation chain nobody talks about

Two years ago, you had a problem. Your data was slow to get to, hard to trust, and impossible to act on. You brought it to IT. IT brought it to a consulting firm with the right logos and the right industry experience. Contracts were signed. A roadmap was presented. Everyone nodded.

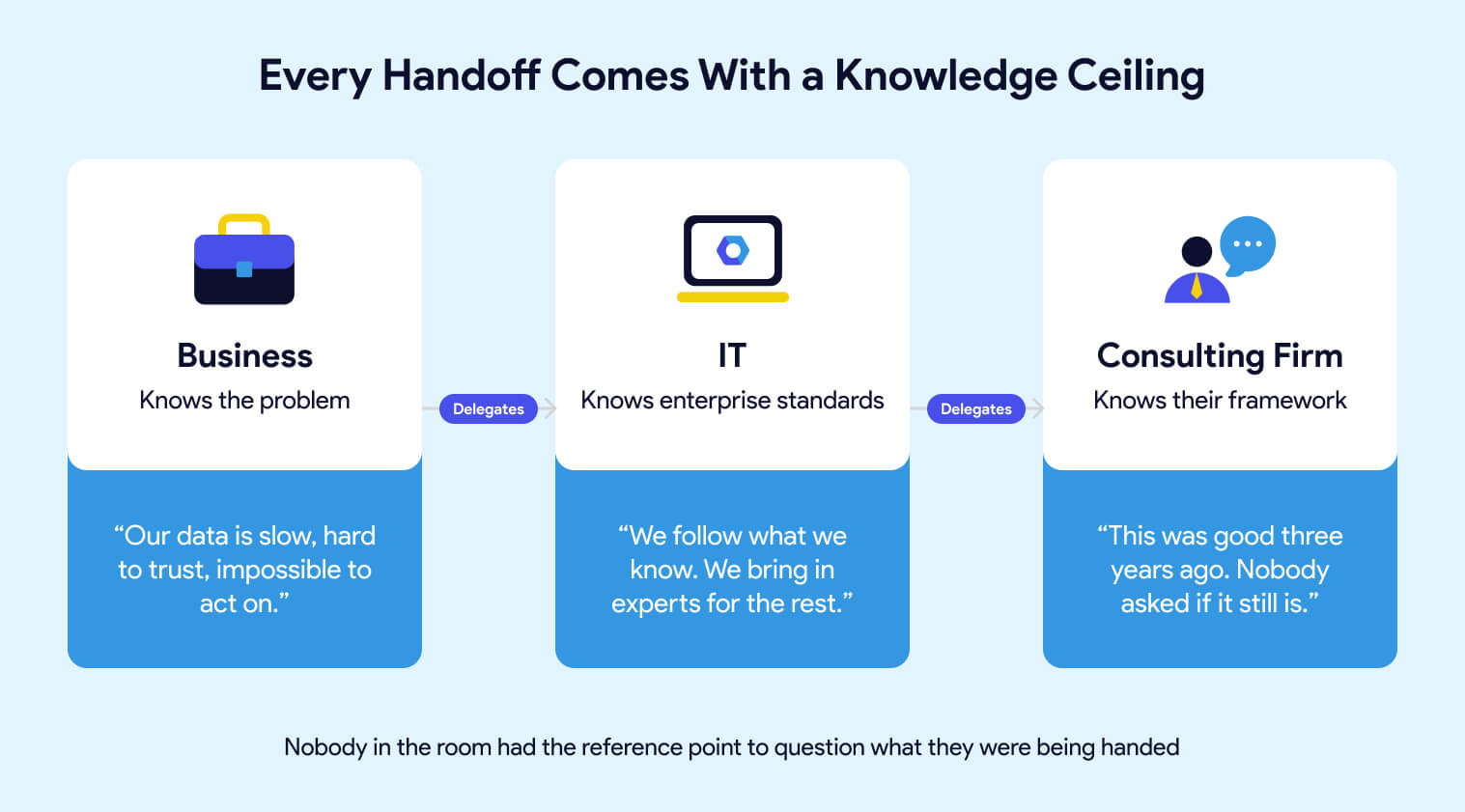

What nobody said out loud is that each handoff in that chain came with a knowledge ceiling. You knew the business problem but not the technical solution. IT knew their enterprise standards but not necessarily what best-in-class data engineering looks like. The consulting firm knew their framework, the one they'd been building for years, the one that was genuinely good when they built it. Whether it was still the right answer for where the industry is today was a question nobody asked, because nobody in the room had the reference point to ask it.

This isn't about blame. The system worked exactly as designed. It just wasn't designed to produce what you actually needed.

The warning signs you can see without being a data engineer

You don't need to understand the architecture to recognize that something is wrong. You've seen it in other ways.

Reports that take three sprints to change. Business users who have stopped asking for new metrics because the process is too slow. Analysts who still use Excel because the data in the platform doesn't match what they expect. A go-live that keeps moving. Issues that surface in final testing, never earlier. A data team that spends more time firefighting than building.

These aren't symptoms of a tool problem. They're symptoms of a platform that was built to pass a demo, not to operate at scale. The foundation looked solid in the PowerPoint. Then one corner started sinking. Someone ran outside, propped it up, called it good enough, and everyone went back inside to talk about the drapes. Until the next corner started sinking.

The business lives with the consequences of decisions that were never explained to them. That's the part that should make you uncomfortable. Not because anyone was careless, but because the people making the technical decisions had never been asked to connect them back to your actual business problem.

Why consultant-built platforms age badly

They're incentivized to build, not to maintain

Consulting firms sell services. The migration is the engagement. They have every incentive to deliver something that looks complete at handoff, and very little incentive to think about what happens eighteen months later when your team needs to extend it, upgrade it, or adapt it to something they didn't anticipate.

That's just the business model. A platform built on open-source tools with documented conventions, enforced by the system rather than by people remembering to follow a process, doesn't generate the same ongoing billing as one that requires the original team to come back every time something needs to change. Someone who built one of these frameworks told me it was "good three years ago." That's a telling phrase. Good three years ago means it was already aging when it was delivered.

The platform freezes while everything else moves forward

dbt, Airflow, SQLFluff, and the open-source ecosystem around them move fast because there are thousands of contributors and companies whose entire existence depends on making them better. A proprietary consulting framework moves at the pace of whoever owns it, if it moves at all.

The gap compounds. New Airflow capabilities require the consulting firm to schedule an upgrade. Framework changes require them to build it. Every month that passes, the distance between what's possible and what your team can actually access gets wider. Your engineers know it. They read the release notes. They see what other teams are doing. They just can't do it themselves because the platform wasn't built to be owned by them.

The teams that don't have this problem didn't get lucky. They built on a foundation that was designed to evolve, with tools maintained by communities that have no interest in keeping anyone dependent.

Your GenAI initiative won't save you. It will expose you

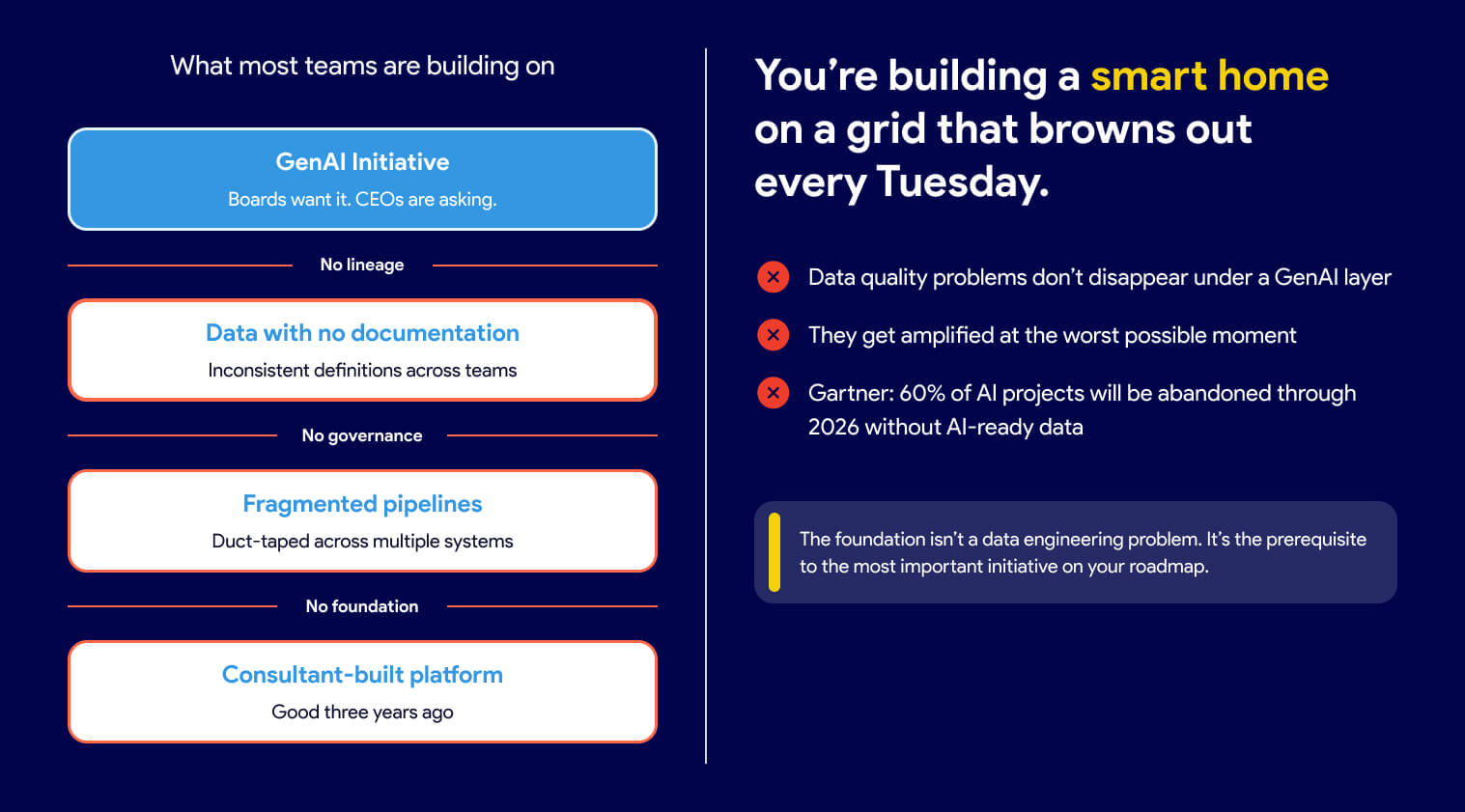

Every business leader has a GenAI mandate right now. Boards want it. CEOs are asking about it. And the instinct is to treat it as a separate initiative, something you layer on top of what you already have.

That's the mistake.

Think about your power grid. Nobody questions whether the lights will turn on when they flip the switch. That reliability exists because someone built generation, transmission, and distribution correctly before anyone thought about what to plug in. You're being asked to build a smart home on a grid that browns out every Tuesday.

AI is only as reliable as the data underneath it. If your data has no lineage, inconsistent definitions, and no documentation, your AI initiative will produce outputs nobody trusts, at scale, faster than you've ever produced untrustworthy outputs before.

According to Gartner, through 2026 organizations will abandon 60% of AI projects unsupported by AI-ready data.

The data quality problems that were always there don't disappear under a GenAI layer. They get amplified and made visible at the worst possible moment, in front of the stakeholders you most need to impress.

The foundation isn't a data engineering problem. It's the prerequisite to the most important initiative on your roadmap. Getting it right isn't IT's job to delegate. It's your decision to make.

What good actually looks like

The platform enforces good behavior. People don't have to remember it.

The difference between a platform that scales and one that doesn't usually isn't visible in a demo. It shows up six months after go-live, when the team doubles, when a consultant rolls off, when someone new joins and has to figure out how things work by reading documentation that may or may not exist.

A well-built platform doesn't rely on people following the process correctly every time. It makes the wrong action difficult. Automated checks run before anything can merge, so code quality doesn't depend on a reviewer having a good day. Naming conventions live in the tooling itself, not in a document that gets read once during onboarding. Pipeline dependencies are declared explicitly and validated automatically, so a missed configuration doesn't quietly cause a downstream failure three sprints later. A new engineer who joins the team inherits those guardrails on day one without reading a document or asking anyone how things work. The knowledge is in the system, not in the heads of the people who built it.
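To make the idea of guardrails that live in the system concrete, here is a minimal Python sketch of two checks a CI job might run before a merge: one for naming conventions and one for declared dependencies. The prefixes (`stg_`, `int_`, `fct_`, `dim_`), the function names, and the sample project are all assumptions made for illustration. They are not taken from any particular tool or from a real project.

```python
import re

# Hypothetical convention: every model name starts with a layer prefix
# and uses lowercase snake_case. Adjust to your own team's standard.
NAME_PATTERN = re.compile(r"^(stg|int|fct|dim)_[a-z0-9_]+$")


def find_badly_named_models(model_names):
    """Return the model names that break the naming convention."""
    return [name for name in model_names if not NAME_PATTERN.match(name)]


def find_missing_dependencies(dependencies):
    """Return (model, upstream) pairs where the upstream model is not declared.

    `dependencies` maps each model name to the list of models it reads from.
    """
    known = set(dependencies)
    return [
        (model, upstream)
        for model, upstreams in dependencies.items()
        for upstream in upstreams
        if upstream not in known
    ]


def run_checks(dependencies):
    """Collect readable errors; an empty list means the change may merge."""
    errors = [f"bad model name: {name}" for name in find_badly_named_models(dependencies)]
    errors += [
        f"{model} depends on undeclared model {upstream}"
        for model, upstream in find_missing_dependencies(dependencies)
    ]
    return errors


if __name__ == "__main__":
    # Illustrative project: one badly named model, one undeclared upstream.
    project = {
        "stg_orders": [],
        "fct_sales": ["stg_orders", "stg_customers"],
        "SalesReport": ["fct_sales"],
    }
    for error in run_checks(project):
        print(error)
```

The point of the sketch is where the rule lives. A new engineer does not need to know the convention in advance, because the check reports the problem before the change can merge.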

One ecosystem, not one framework

Good data engineering is a thought-out combination of tools that work well together, each maintained by a community that cares about making it better. The people improving dbt, Airflow, and SQLFluff aren't doing it on a consulting firm's project timeline. They're doing it because their companies depend on those tools getting better continuously.

dbt-coves automates the tedious parts of dbt development. dbt-checkpoint enforces data quality standards at commit time, before bad code ever reaches a pipeline. SQLFluff keeps SQL consistent across every engineer on the team. Snowcap manages Snowflake infrastructure as code, the thing that's perpetually "on the roadmap" in consulting-built platforms but never quite arrives.
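For readers unfamiliar with the phrase, "infrastructure as code" usually means declaring the state you want and letting a tool work out the changes. The Python sketch below illustrates that idea for role grants only. It does not use Snowcap's API or configuration format; the function name, the data shapes, and the sample roles are hypothetical.

```python
def plan_grants(desired, current):
    """Compare desired and current grants and return the changes to apply.

    Both arguments map a role name to a set of privilege strings.
    The result is a list of (action, privilege, role) tuples.
    """
    changes = []
    for role in sorted(set(desired) | set(current)):
        want = desired.get(role, set())
        have = current.get(role, set())
        # Privileges declared in code but missing in the warehouse.
        for privilege in sorted(want - have):
            changes.append(("GRANT", privilege, role))
        # Privileges present in the warehouse but not declared in code.
        for privilege in sorted(have - want):
            changes.append(("REVOKE", privilege, role))
    return changes


if __name__ == "__main__":
    # Hypothetical desired state, as it might be kept in version control.
    desired = {
        "analyst": {"SELECT ON analytics.marts"},
        "loader": {"INSERT ON raw.events"},
    }
    # Hypothetical current state, as it might be read from the warehouse.
    current = {
        "analyst": {"SELECT ON analytics.marts", "DELETE ON analytics.marts"},
    }
    for action, privilege, role in plan_grants(desired, current):
        print(action, privilege, "->", role)
```

Because the desired state is a reviewable file rather than a script someone runs by hand, drift such as the extra `DELETE` privilege shows up as a planned change instead of going unnoticed.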

None of these tools is the answer on its own. The answer is that someone chose them deliberately, integrated them carefully, and built a platform where they work together. That's the difference between a workshop that was designed and one that just accumulated tools over time.

It also means your platform can adapt. When a better approach emerges, you adopt it. Your engineers can use Claude Code, GitHub Copilot, Snowflake Cortex, or any other AI tool that fits their workflow. They're not waiting for a vendor to build an integration or a consulting firm to schedule a framework update. Some platforms give you one AI tool and call it done. That's a product decision masquerading as a strategy.

What to ask before you sign anything

If you're about to start a data platform engagement with a consulting firm, a platform vendor, or anyone else, these are the questions worth asking before you sign.

Can you show me a client whose team ships on their own cadence, without your firm in the critical path for day-to-day decisions? Managing infrastructure is a legitimate service. What you want to avoid is a vendor who ends up managing your decisions, your roadmap, and your team's ability to move without them. That relationship tends to get more expensive over time, not less.

What open-source tools does your framework use, and how do you handle upgrades when new versions are released? A proprietary framework that wraps open-source tools is only as current as whoever maintains the wrapper. Find out who that is and how often it happens.

What happens if a business user needs a new metric? Walk me through the process end-to-end, including who approves it, how long it takes, and what the business user can do themselves. The answer will tell you whether they're building you an industrial kitchen or planning to cook everything for you. Business users are smart enough to use a knife. A platform built on that assumption looks very different from one built on the assumption that they aren't.

Who on our team will own the business logic, the models, and the deployment process? Not the infrastructure, the work. A good partner manages the complexity underneath so your engineers can focus on delivering. If the answer to ownership is vague, the engagement was designed around their continuity, not yours.

How do you handle the Snowflake security model, and can you show me examples? This one is worth asking even if you don't fully understand the answer. Pay attention to whether they answer with confidence and specificity, or whether they say "this came directly from Snowflake" as if that settles it. It doesn't. A recommendation from a Snowflake account team is a starting point, not an architecture.

The faster path

Most leaders assume that doing things right means slowing down. That getting the foundation in place before starting to deliver means months of invisible work before anyone sees results. That's not how this works.

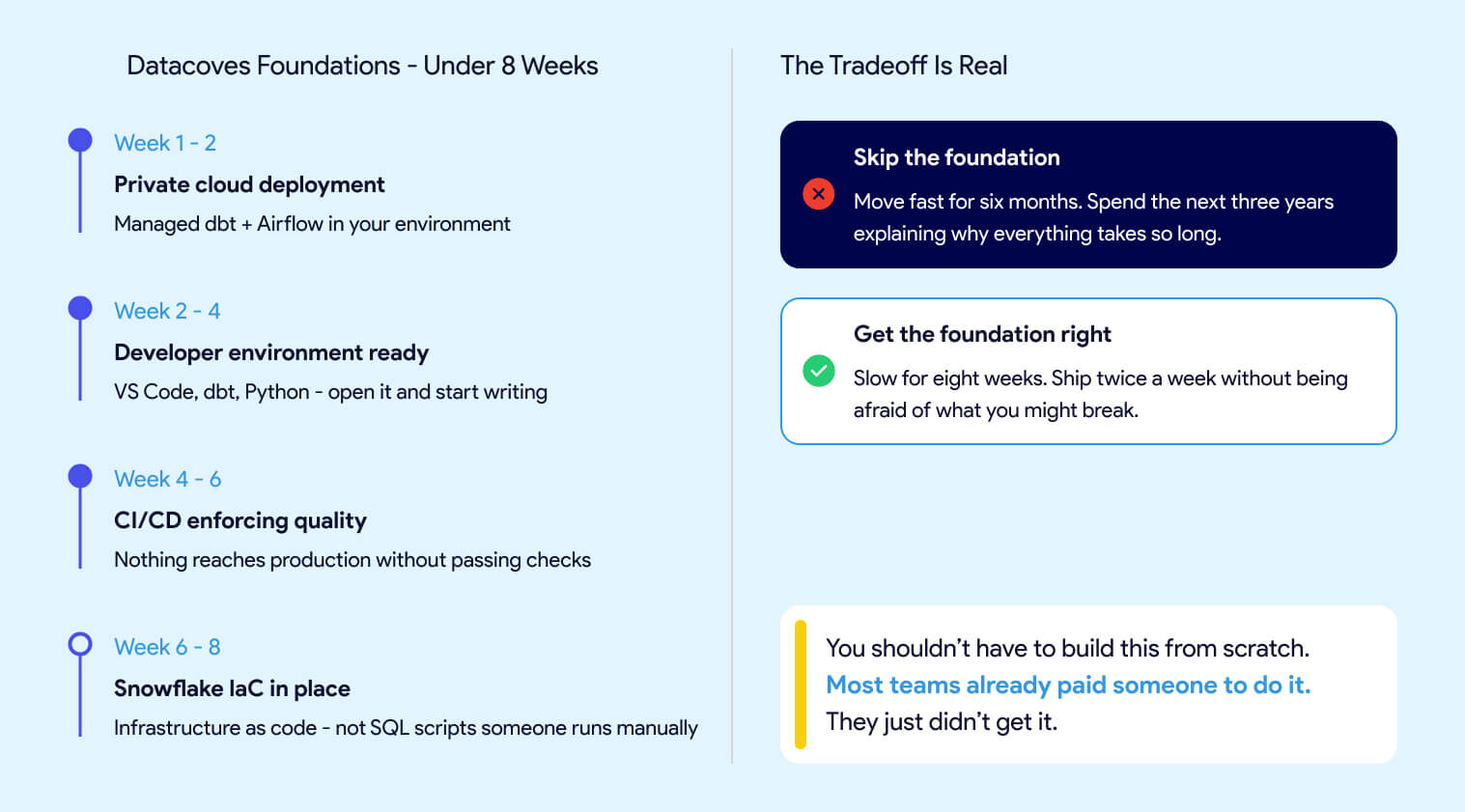

The Datacoves Foundation's engagement takes less than two months.

In that time, you get:

- Managed dbt and Airflow deployed in your private cloud

- A VS Code environment that your engineers can open on day one and start writing code in

- CI/CD pipelines that enforce quality before anything reaches production

- Snowflake infrastructure managed as code, not a collection of SQL scripts someone runs manually when they remember to

The goal is to move slowly at first so you can move fast afterward. Not slow forever. Slow for eight weeks, so your team isn't spending the next three years propping up corners. Skip the foundation, and you move fast for the first six months, then spend the next three years explaining why everything takes so long. Get it right and your team ships twice a week without being afraid of what they might break.

Guitar Center onboarded in days, not months. Johnson & Johnson described it as a framework accelerator. A team at DataDrive saved over 200 hours annually by replacing a fragile self-built pipeline with something that actually held up at scale. None of them spent 18 months waiting to find out if it would work.

Without a solid foundation, your GenAI initiative will surface data problems at the worst possible moment. Your business users will keep working around the platform instead of in it. And your engineers will keep moving carefully instead of moving fast.

You shouldn't have to build this from scratch. Most teams already paid someone to do it. They just didn't get it.

FAQ

How can I tell if my data platform has a foundation problem without being a data engineer?

The signs are visible without technical knowledge. Reports that take weeks to change, analysts reverting to Excel, a go-live date that keeps moving, and a data team that spends more time firefighting than building are all symptoms of a platform built to pass a demo, not to operate at scale.

How long does it take to get the foundation right with Datacoves?

The Datacoves Foundation's engagement takes less than two months. In that time, you get managed dbt and Airflow deployed in your private cloud, a VS Code environment your engineers can start writing code in on day one, CI/CD pipelines that enforce quality before anything reaches production, and Snowflake infrastructure managed as code.

What does a well-built data platform actually look like?

It enforces good behavior through the system itself rather than relying on people following the process correctly. Automated checks run before anything merges. Naming conventions live in the tooling, not in a document. A new engineer who joins the team inherits guardrails on day one without asking anyone how things work.

Why do consultant-built data platforms age so badly?

The consulting business model rewards delivery, not maintainability. Once the engagement ends, every future change becomes a new billable project. Meanwhile, the open-source ecosystem around dbt, Airflow, and SQLFluff keeps moving. The gap between what's possible and what your team can actually access widens every month.

Why do enterprise data platform projects fail so often?

Most failures trace back to a delegation chain where no one had the reference point to question what they were being handed. The business knew the problem. IT knew their standards. The consulting firm knew its framework. Nobody in the room could connect all three, so decisions got made by default rather than by design.

Why does getting the data foundation right matter for GenAI?

AI is only as reliable as the data underneath it. Poor lineage, inconsistent definitions, and missing documentation don't disappear when you add a GenAI layer. They get amplified. Gartner predicts that organizations will abandon 60% of AI projects unsupported by AI-ready data through 2026.
