
The modern data stack promised to simplify everything. Pick best-in-class tools, connect them, and ship insights. The reality for most data teams looks different: months spent configuring Kubernetes, debugging Airflow dependencies, and managing Python environments before a single pipeline runs in production. Who manages the infrastructure around those tools matters more than which tools you pick.

This article breaks down the build vs. buy decision for the two tools at the core of every modern data platform: dbt Core for transformation and Apache Airflow for orchestration. Both are open source. Both are powerful. And both are significantly harder and more expensive to self-host than most teams anticipate.

In the context of the modern data stack, this decision is not about building software from scratch. dbt Core and Apache Airflow already exist. They are battle-tested, open source, and free to use under permissive licenses.

The real question is: who manages the infrastructure that makes them run in production?

Building means your team owns the infrastructure. You provision and manage Kubernetes clusters, configure Git sync for DAGs, handle Python virtual environments, manage secrets, set up CI/CD pipelines, and keep everything running as tools release new versions. The tools are free. The operational burden is not.

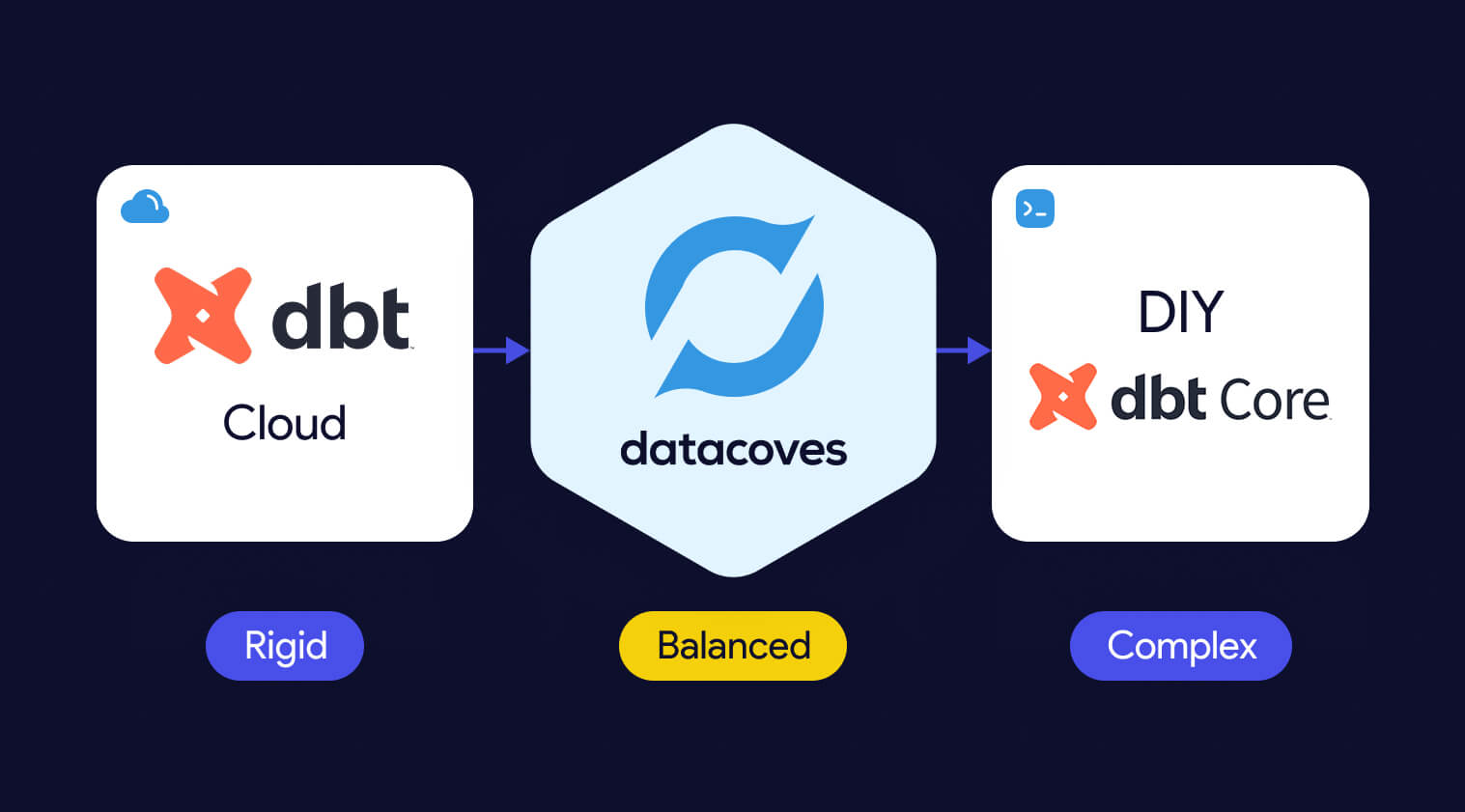

Buying means a managed platform handles that infrastructure for you. Platforms like dbt Cloud, MWAA, Astronomer, and Datacoves build on top of the open-source foundation and manage the environment so your team does not have to. For a detailed feature comparison, see dbt Core vs dbt Cloud. You trade some control for significantly less operational overhead. The key word is "some": the best managed platforms give up very little flexibility while eliminating most of the burden.

This raises the important question: should you self-manage or pay for your open-source analytics tools?

Both options have legitimate strengths. The right call depends on your team's size, technical depth, compliance requirements, and how much platform maintenance you can absorb without slowing down delivery. Here is a look at each.

The primary argument for building is control. Your team owns every configuration decision: how secrets are stored, how DAGs are synced, how environments are structured, and how tools integrate with your existing systems. For organizations with specialized workflows that no managed platform supports, this matters.

The tradeoff is real and significant. A production-grade Airflow deployment on Kubernetes requires deep DevOps expertise. You will spend weeks on initial setup before writing a single DAG. Ongoing maintenance, dependency management, version upgrades, and security hardening become a permanent part of your team's workload. And when the engineer who built it leaves, that institutional knowledge walks out the door.

Building also means your team is running version 1 of your own platform. Edge cases, security gaps, and scaling issues will surface in production. That is not a risk with a managed solution that has been hardened across many enterprise deployments.

Managed platforms eliminate the infrastructure burden so your team can focus on what actually drives business value: building data models, delivering pipelines, and getting insights to stakeholders faster.

The common concern is flexibility. Many managed platforms lock you into standardized workflows, limit your tool choices, or make migration difficult. That concern is valid for some vendors, not the category as a whole. The right question is not "build or buy" but "which managed platform gives us the control we need without the overhead we do not want."

A well-chosen managed platform gets your team writing and running code in days, not months. It handles upgrades, secrets management, CI/CD scaffolding, and environment consistency. And unlike version 1 of your homegrown solution, it has already solved the edge cases you have not encountered yet.

Open source looks free the way a free puppy looks free. The license costs nothing. Everything that comes after it costs something. For most data teams, self-hosting dbt Core and Airflow on Kubernetes carries high hidden costs in engineering time alone, before infrastructure spend.

For dbt and Airflow, the real costs fall into three categories: engineering time, security and compliance, and scaling complexity. Most teams underestimate all three.

Before diving into each category, here is what self-hosting dbt Core and Airflow actually costs your team:


Setting up a production-grade Airflow environment on Kubernetes is not a weekend project. Teams routinely spend weeks configuring DAG sync via Git or S3, managing Python virtual environments, wiring up secrets management, and debugging dependency conflicts before anything runs reliably.

Then there is the ongoing cost. Upgrades, incident response, onboarding new engineers, and keeping the environment consistent across developers all consume time that could be spent delivering data products. A senior data engineer earns between $126,000 and $173,000 per year (Glassdoor, ZipRecruiter). For a team of two to four engineers spending 25 to 50 percent of their time on platform management, that's $5,250 to $28,830 per month in engineering costs alone, before a dollar of infrastructure spend. And that's assuming no one leaves. For a deeper breakdown of what these tools actually cost to run, see what open source analytics tools really cost.
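The monthly range above can be reproduced with a few lines of arithmetic. The sketch below is illustrative only: the function name `monthly_platform_cost` is hypothetical, and it assumes salary is the only cost counted (no benefits, taxes, or infrastructure).

```python
def monthly_platform_cost(annual_salary, engineers, share_of_time):
    """Monthly salary cost of the time a team spends on platform management.

    Assumption: only base salary is counted; benefits and infrastructure are excluded.
    """
    return annual_salary / 12 * engineers * share_of_time


# Low end: 2 engineers at $126,000 spending 25% of their time on the platform.
low = monthly_platform_cost(126_000, engineers=2, share_of_time=0.25)

# High end: 4 engineers at $173,000 spending 50% of their time on the platform.
high = monthly_platform_cost(173_000, engineers=4, share_of_time=0.50)

print(f"${low:,.0f} to ${high:,.0f} per month")
```

The low end works out to exactly $5,250. The high end comes to roughly $28,833, which the figure quoted above rounds to $28,830.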

A managed platform can have your team writing and running code in days. Datacoves helped J&J set up their data stack in weeks, with full visibility and automation from day one.

With open-source tools, your team is responsible for implementing security best practices from the ground up. Secrets management, credential rotation, SSO integration, audit logging, and network isolation do not come preconfigured. Each one requires research, implementation, and ongoing maintenance.

For regulated industries like healthcare, finance, or government, compliance requirements add another layer. Meeting HIPAA, SOX, or internal governance standards through a self-managed stack is a process of iteration and refinement. Every hour spent here is an hour not spent on data products, and every gap is a potential audit finding.

Scaling a self-hosted Airflow deployment means scaling your Kubernetes expertise alongside it. As DAG count grows, as team size increases, and as pipeline complexity compounds, the operational surface area expands. Memory issues, worker contention, and environment drift become recurring problems.

Extended downtime at scale is not just an engineering problem. Business users who depend on fresh data feel it directly. The hidden cost is not just the engineering hours spent fixing it. It is the trust lost with stakeholders when the data is late or wrong.

The strongest argument for a managed platform is compounding speed, not convenience.

Every week your team spends managing infrastructure is a week not spent building data products. That gap compounds. A team that gets into production in days instead of months delivers more value, builds more trust with stakeholders, and develops faster than one still debugging Kubernetes configurations three months in.

Managed platforms handle the infrastructure layer your team should not be owning: upgrades, secrets management, environment consistency, CI/CD scaffolding, and scaling. What used to take months of setup is available on day one. And because you are running a platform that has been hardened across many enterprise deployments, the edge cases have already been solved.

The reliability argument matters too. Your homegrown solution is version 1. A mature managed platform is version 1,000. The difference shows up in production at the worst possible times.

The most common objection to buying is vendor lock-in. It is a legitimate concern, and it applies to some platforms more than others.

The risk is real when a managed platform abstracts away the underlying tools with a proprietary layer, when you do not own your code and metadata, or when switching providers requires a full rebuild. Some vendors in this space do exactly that.

The risk is low when the platform is built on open-source tooling at the core, when you retain full ownership of your code, models, and DAGs, and when the architecture is designed to be warehouse and tool agnostic. Before signing with any vendor, ask three questions: Can I see the underlying dbt Core and Airflow configurations? Do I own everything I build? Can I swap components as my stack evolves?

If the answers are yes, lock-in is not the risk. Slow delivery is.

Pipeline orchestration and transformation do not exist in isolation. For a deeper look at how dbt and Airflow work together as a unified pair, see dbt and Airflow: The Natural Pair for Data Analytics.

Not all managed platforms are built for enterprise complexity. Some are designed for fast starts, not long-term scale. The most common failure modes are rigid workflow standardization that does not match how your team actually works, SaaS-only deployment that cannot meet strict data sovereignty requirements, and limited support once the contract is signed.

MWAA, for example, manages Airflow infrastructure but still requires significant configuration to integrate with dbt and handle memory issues at scale. dbt Cloud covers the transformation layer well but uses per-seat pricing that scales steeply for larger teams and does not address orchestration. Neither covers the full data engineering lifecycle in a unified environment.

The right managed platform gives your tools a proper home.

Datacoves was designed so you don't have to sacrifice control to get rid of the overhead.

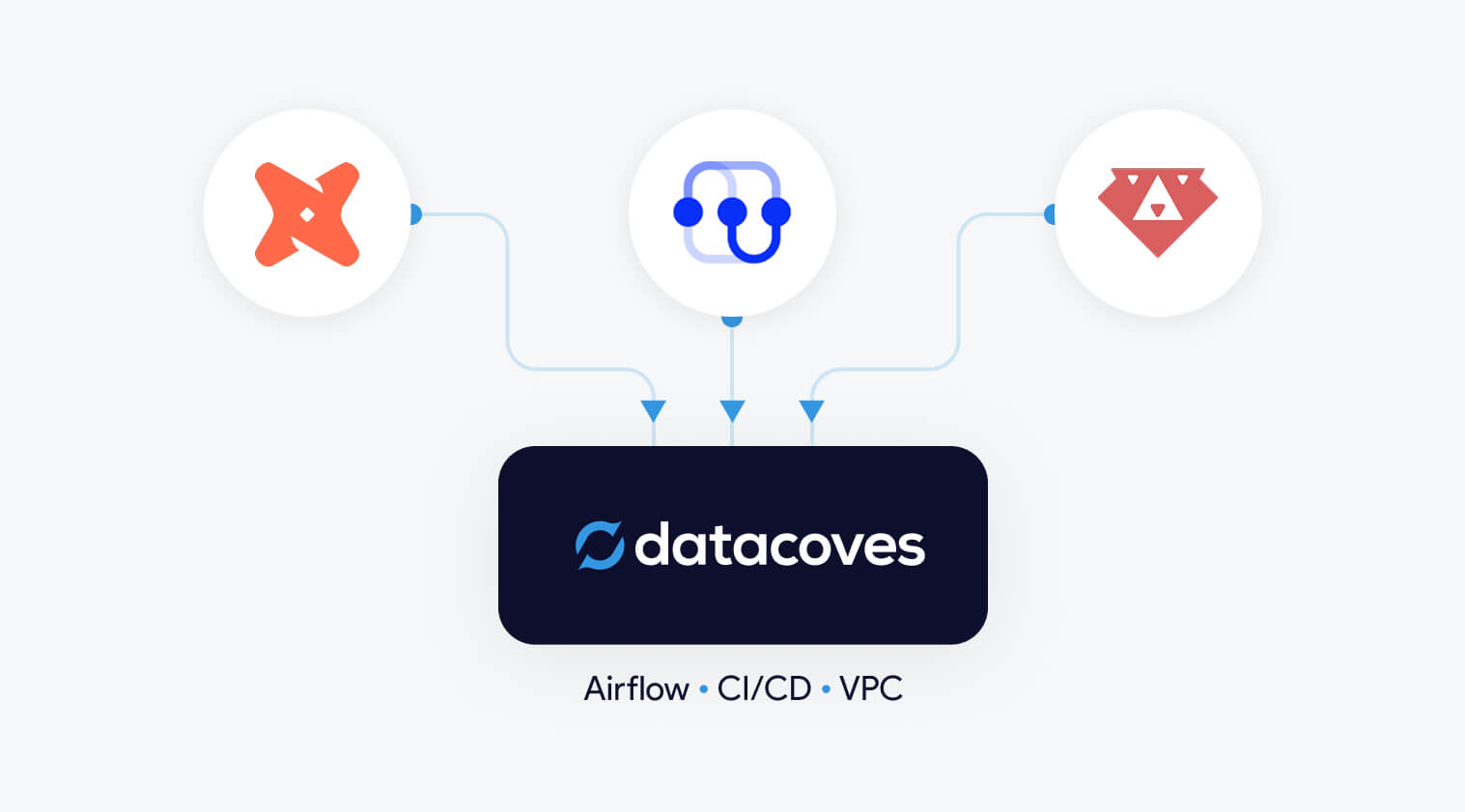

Datacoves is an end-to-end data engineering platform that runs entirely inside your cloud, under your security controls, and adapts to the tools your team already uses. It manages the infrastructure layer so your team does not have to, without locking you into a rigid workflow or a proprietary toolchain.

Every developer gets the same consistent workspace from day one: in-browser VS Code, dbt Core, Python virtual environments, Git integration, CI/CD pipelines, and secrets management, all preconfigured and aligned to best practices. There is no weeks-long setup. There is no "figure it out yourself" onboarding. Your team opens the environment and everything works.

Managed Airflow covers both development and production. My Airflow gives individual developers a personal sandbox for fast iteration. Teams Airflow handles shared production orchestration, with DAG syncing from Git, built-in dbt operators, and simplified retry logic. Troubleshooting across the full pipeline, from ingestion through transformation to deployment, happens in one place.

Datacoves is warehouse agnostic. It works with Snowflake, Databricks, BigQuery, Redshift, DuckDB, and any database with a dbt adapter. It supports dbt Mesh for multi-project, multi-team setups. It integrates with your existing identity provider, logging systems, and ingestion tools. You bring what you have. Datacoves manages the rest.

Unlike dbt Cloud, which is locked to its own runtime and per-seat pricing, or MWAA, which still requires significant configuration work, Datacoves covers the full data engineering lifecycle in a single environment. And because it is built entirely on open-source tooling, there is no proprietary layer trapping your code or your team.

For security-conscious and regulated organizations, Datacoves is the only managed platform in this category that can be deployed entirely within your private cloud account. Your data never leaves your environment. No VPC peering required. No external access to internal resources. Full SSO and role-based access integration with your existing security controls.

This is the difference between a platform that asks you to trust their security and one that puts security entirely in your hands. For teams in healthcare, finance, pharma, or government, that distinction is not a nice-to-have. It is a requirement.

Beyond infrastructure, Datacoves brings a proven architecture foundation. Branching standards, CI/CD enforcement, secrets management patterns, deployment guardrails, and onboarding templates are all pre-baked into the platform. Your team does not need to research and implement best practices from scratch. They inherit them on day one.

Dedicated onboarding, a Resident Solutions Architect on call, and white-glove support mean that best practices do not stay with the champion who led the evaluation. They spread across the whole team. Most tool purchases don't change how a team works. This one does.

Standardized environments and templates reduce onboarding time significantly. Guitar Center onboarded in days, not months, with their full data stack running on Datacoves from the start.

The build vs. buy question is really a resource allocation question. What should your team own, and what should be managed for you?

The answer for most data teams is clear. Own your data models, your business logic, your stakeholder relationships, and your architecture decisions. Do not own Kubernetes clusters, Airflow upgrades, and CI/CD pipeline scaffolding. That work consumes engineering time without delivering business value, and it compounds the longer you wait to address it.

As Joe Reis and Matt Housley argue in Fundamentals of Data Engineering, data teams should prioritize extracting value from data rather than managing the tools that support them. The teams that move fastest are not the ones who built the most. They are the ones who made smart decisions about what not to build.

Open source isn't free, and self-hosting is harder than it looks. And the gap between a working proof of concept and a production-grade, secure, scalable data platform is wider than most teams expect until they are already in it.

Datacoves closes that gap. It gives your team the flexibility of a custom build, the reliability of a mature platform, and the security of a private cloud deployment, without the operational burden that makes building so expensive. Your team focuses on data products. Datacoves handles everything underneath them.

If your team is spending more time managing infrastructure than building pipelines, that’s the signal. See Datacoves in action and discover how teams simplify their data platform so they can focus on building, not maintaining.

The top dbt alternatives include Datacoves, SQLMesh, Bruin Data, Dataform, and visual ETL tools such as Alteryx, Matillion, and Informatica. Code-first engines offer stronger rigor, testing, and CI/CD, while GUI platforms emphasize ease of use and rapid prototyping. Teams choose these alternatives when they need more security, governance, or flexibility than dbt Core or dbt Cloud provide.


Teams explore dbt alternatives when they need stronger governance, private deployments, or support for Python and code-first workflows that go beyond SQL. Many also prefer GUI-based ETL tools for faster onboarding. Recent market consolidation, including Fivetran acquiring SQLMesh and merging with dbt Labs, has increased concerns about vendor lock-in, which makes tool neutrality and platform flexibility more important than ever.

Teams look for dbt alternatives when they need stronger orchestration, consistent development environments, Python support, or private cloud deployment options that dbt Cloud does not provide.

Organizations evaluating dbt alternatives typically compare tools across three categories. Each category reflects a different approach to data transformation, development preferences, and organizational maturity.

Organizations consider alternatives to dbt Cloud when they need more flexibility, stronger security, or support for development workflows that extend beyond dbt. Teams comparing platform options often begin by evaluating the differences between dbt Cloud vs dbt Core.

Running enterprise-scale ELT pipelines often requires a full orchestration layer, consistent development environments, and private deployment options that dbt Cloud does not provide. Costs can also increase at scale (see our breakdown of dbt pricing considerations), and some organizations prefer to avoid features that are not open source to reduce long-term vendor lock-in.

This category includes platforms that deliver the benefits of dbt Cloud while providing more control, extensibility, and alignment with enterprise data platform requirements.

Datacoves provides a secure, flexible platform that supports dbt, SQLMesh, and Bruin in a unified environment with private cloud or VPC deployment.

Datacoves is an enterprise data platform that serves as a secure, flexible alternative to dbt Cloud. It supports dbt Core, SQLMesh, and Bruin inside a unified development and orchestration environment, and it can be deployed in your private cloud or VPC for full control over data access and governance.

Benefits

Flexibility and Customization:

Datacoves provides a customizable in-browser VS Code IDE, Git workflows, and support for Python libraries and VS Code extensions. Teams can choose the transformation engine that fits their needs without being locked into a single vendor.

Handling Enterprise Complexity:

Datacoves includes managed Airflow for end-to-end orchestration, making it easy to run dbt and Airflow together without maintaining your own infrastructure. It standardizes development environments, manages secrets, and supports multi-team and multi-project workflows without platform drift.

Cost Efficiency:

Datacoves reduces operational overhead by eliminating the need to maintain separate systems for orchestration, environments, CI, logging, and deployment. Its pricing model is predictable and designed for enterprise scalability.

Data Security and Compliance:

Datacoves can be deployed fully inside your VPC or private cloud. This gives organizations complete control over identity, access, logging, network boundaries, and compliance with industry and internal standards.

Reduced Vendor Lock-In:

Datacoves supports dbt, SQLMesh, and Bruin Data, giving teams long-term optionality. This avoids being locked into a single transformation engine or vendor ecosystem.

Running dbt Core yourself is a flexible option that gives teams full control over how dbt executes. It is also the most resource-intensive approach. Teams choosing DIY dbt Core must manage orchestration, scheduling, CI, secrets, environment consistency, and long-term platform maintenance on their own.

Benefits

Full Control:

Teams can configure dbt Core exactly as they want and integrate it with internal tools or custom workflows.

Cost Flexibility:

There are no dbt Cloud platform fees, but total cost of ownership often increases as the system grows.

Considerations

High Maintenance Overhead:

Teams must maintain Airflow or another orchestrator, build CI pipelines, manage secrets, and keep development environments consistent across users.

Requires Platform Engineering Skills:

DIY dbt Core works best for teams with strong Kubernetes, CI, Python, and DevOps expertise. Without this expertise, the environment becomes fragile over time.

Slow to Scale:

As more engineers join the team, keeping dbt environments aligned becomes challenging. Onboarding, upgrades, and platform drift create operational friction.

Security and Compliance Responsibility:

Identity, permissions, logging, and network controls must be designed and maintained internally, which can be a significant effort for regulated organizations.

Teams that prefer code-first tools often look for dbt alternatives that provide strong SQL modeling, Python support, and seamless integration with CI/CD workflows and automated testing. These are part of a broader set of data transformation tools. Code-based ETL tools give developers greater control over transformations, environments, and orchestration patterns than GUI platforms. Below are four code-first contenders that organizations should evaluate.

Code-first dbt alternatives like SQLMesh, Bruin Data, and Dataform provide stronger CI/CD integration, automated testing, and more control over complex transformation workflows.

SQLMesh is an open-source framework for SQL and Python-based data transformations. It provides strong visibility into how changes impact downstream models and uses virtual data environments to preview changes before they reach production. SQLMesh was originally developed by Tobiko Data, which Fivetran acquired in 2025. Fivetran then donated the project to the Linux Foundation in March 2026.

Benefits

Efficient Development Environments:

Virtual environments reduce unnecessary recomputation and speed up iteration.

Community Governance Under the Linux Foundation:

In March 2026, Fivetran contributed SQLMesh to the Linux Foundation, establishing an open community governance model. Founding members including Benzinga, CloudKitchens, Harness, and others joined to support its ongoing development. The project remains publicly available on GitHub, which increases its neutrality and long-term independence from any single vendor.

Considerations

Governance Is New:

While Linux Foundation stewardship is a positive signal for openness, the community governance model is still in its early stages. It remains to be seen how active and independent the contributor community will become over time.

Dataform is a SQL-based transformation framework built specifically for BigQuery. It enables teams to create table definitions, manage dependencies, document models, and configure data quality tests inside the Google Cloud ecosystem. It also provides version control and integrates with GitHub and GitLab.

Benefits

Centralized BigQuery Development:

Dataform keeps all modeling and testing within BigQuery, reducing context switching and making it easier for teams to collaborate using familiar SQL workflows.

Considerations

Focused Only on the GCP Ecosystem:

Because Dataform is geared toward BigQuery, it may not be suitable for organizations that use multiple cloud data warehouses.

AWS Glue is a serverless data integration service that supports Python-based ETL and transformation workflows. It works well for organizations operating primarily in AWS and provides native integration with services like S3, Lambda, and Athena.

Benefits

Python-First ETL in AWS:

Glue supports Python scripts and PySpark jobs, making it a good fit for engineering teams already invested in the AWS ecosystem.

Considerations

Requires Engineering Expertise:

Glue can be complex to configure and maintain, and its Python-centric approach may not be ideal for SQL-first analytics teams.

Bruin is a modern SQL-based data modeling framework designed to simplify development, testing, and environment-aware deployments. It offers a familiar SQL developer experience while adding guardrails and automation to help teams manage complex transformation logic.

Benefits

Modern SQL Modeling Experience:

Bruin provides a clean SQL-first workflow with strong dependency management and testing.

Considerations

Growing Ecosystem:

Bruin is newer than dbt and has a smaller community and fewer third-party integrations.

While code-based transformation tools provide the most flexibility and long-term maintainability, some organizations prefer graphical user interface (GUI) tools. These platforms use visual, drag-and-drop components to build data integration and transformation workflows. Many of these platforms fall into the broader category of no-code ETL tools. GUI tools can accelerate onboarding for teams less comfortable with code editors and may simplify development in the short term. Below are several GUI-based options that organizations often consider as dbt alternatives.

GUI-based dbt alternatives such as Matillion, Informatica, and Alteryx use drag-and-drop interfaces that simplify development and accelerate onboarding for mixed-skill teams.

Matillion is a cloud-based data integration platform that enables teams to design ETL and transformation workflows through a visual, drag-and-drop interface. It is built for ease of use and supports major cloud data warehouses such as Amazon Redshift, Google BigQuery, and Snowflake.

Benefits

User-Friendly Visual Development:

Matillion simplifies pipeline building with a graphical interface, making it accessible for users who prefer low-code or no-code tooling.

Considerations

Limited Flexibility for Complex SQL Modeling:

Matillion’s visual approach can become restrictive for advanced transformation logic or engineering workflows that require version control and modular SQL development.

Informatica is an enterprise data integration platform with extensive ETL capabilities, hundreds of connectors, data quality tooling, metadata-driven workflows, and advanced security features. It is built for large and diverse data environments.

Benefits

Enterprise-Scale Data Management:

Informatica supports complex data integration, governance, and quality requirements, making it suitable for organizations with large data volumes and strict compliance needs.

Considerations

High Complexity and Cost:

Informatica’s power comes with a steep learning curve, and its licensing and operational costs can be significant compared to lighter-weight transformation tools.

Alteryx is a visual analytics and data preparation platform that combines data blending, predictive modeling, and spatial analysis in a single GUI-based environment. It is designed for analysts who want to build workflows without writing code and can be deployed on-premises or in the cloud.

Benefits

Powerful GUI Analytics Capabilities:

Alteryx allows users to prepare data, perform advanced analytics, and generate insights in one tool, enabling teams without strong coding skills to automate complex workflows.

Considerations

High Cost and Limited SQL Modeling Flexibility:

Alteryx is one of the more expensive platforms in this category and is less suited for SQL-first transformation teams who need modular modeling and version control.

Azure Data Factory (ADF) is a fully managed, serverless data integration service that provides a visual interface for building ETL and ELT pipelines. It integrates natively with Azure storage, compute, and analytics services, allowing teams to orchestrate and monitor pipelines without writing code.

Benefits

Strong Integration for Microsoft-Centric Teams:

ADF connects seamlessly with other Azure services and supports a pay-as-you-go model, making it ideal for organizations already invested in the Microsoft ecosystem.

Considerations

Limited Transformation Flexibility:

ADF excels at data movement and orchestration but offers limited capabilities for complex SQL modeling, making it less suitable as a primary transformation engine.

Talend provides an end-to-end data management platform with support for batch and real-time data integration, data quality, governance, and metadata management. Talend Data Fabric combines these capabilities into a single low-code environment that can run in cloud, hybrid, or on-premises deployments.

Benefits

Comprehensive Data Quality and Governance:

Talend includes built-in tools for data cleansing, validation, and stewardship, helping organizations improve the reliability of their data assets.

Considerations

Broad Platform, Higher Operational Complexity:

Talend’s wide feature set can introduce complexity, and teams may need dedicated expertise to manage the platform effectively.

SQL Server Integration Services is part of the Microsoft SQL Server ecosystem and provides data integration and transformation workflows. It supports extracting, transforming, and loading data from a wide range of sources, and offers graphical tools and wizards for designing ETL pipelines.

Benefits

Strong Fit for SQL Server-Centric Teams:

SSIS integrates deeply with SQL Server and other Microsoft products, making it a natural choice for organizations with a Microsoft-first architecture.

Considerations

Not Designed for Modern Cloud Data Warehouses:

SSIS is optimized for on-premises SQL Server environments and is less suitable for cloud-native architectures or modern ELT workflows.

Recent consolidation, including Fivetran acquiring SQLMesh and merging with dbt Labs, has increased concerns about vendor lock-in and pushed organizations to evaluate more flexible transformation platforms.

Organizations explore dbt alternatives when dbt no longer meets their architectural, security, or workflow needs. As teams scale, they often require stronger orchestration, consistent development environments, mixed SQL and Python workflows, and private deployment options that dbt Cloud does not provide.

Some teams prefer code-first engines for deeper CI/CD integration, automated testing, and strong guardrails across developers. Others choose GUI-based tools for faster onboarding or broader integration capabilities. Recent market consolidation, including Fivetran acquiring SQLMesh and merging with dbt Labs, has also increased concerns about vendor lock-in.

These factors lead many organizations to evaluate tools that better align with their governance requirements, engineering preferences, and long-term strategy.

DIY dbt Core offers full control but requires significant engineering work to manage orchestration, CI/CD, security, and long-term platform maintenance.

Running dbt Core yourself can seem attractive because it offers full control and avoids platform subscription costs. However, building a stable, secure, and scalable dbt environment requires significantly more than executing dbt build on a server. It involves managing orchestration and CI/CD, keeping development environments consistent, and maintaining the platform over the long term, all of which require mature DataOps practices.

The true question for most organizations is not whether they can run dbt Core themselves, but whether it is the best use of engineering time. This is essentially a question of whether to build vs buy your data platform. DIY dbt platforms often start simple and gradually accumulate technical debt as teams grow, pipelines expand, and governance requirements increase.

For many organizations, DIY works in the early stages but becomes difficult to sustain as the platform matures.

The right dbt alternative depends on your team’s skills, governance requirements, pipeline complexity, and long-term data platform strategy.

Selecting the right dbt alternative depends on your team’s skills, security requirements, and long-term data platform strategy. Each category of tools solves different problems, so it is important to evaluate your priorities before committing to a solution.

If these are priorities, a platform with secure deployment options or multi-engine support may be a better fit than dbt Cloud.

Recent consolidation in the ecosystem has raised concerns about vendor dependency. Organizations that want long-term flexibility often look for:

Consider platform fees, engineering maintenance, onboarding time, and the cost of additional supporting tools such as orchestrators, IDEs, and environment management.

dbt remains a strong choice for SQL-based transformations, but it is not the only option. As organizations scale, they often need stronger orchestration, consistent development environments, Python support, and private deployment capabilities that dbt Cloud or DIY dbt Core may not provide. Evaluating alternatives helps ensure that your transformation layer aligns with your long-term platform and governance strategy.

Code-first tools like SQLMesh, Bruin Data, and Dataform offer strong engineering workflows, while GUI-based tools such as Matillion, Informatica, and Alteryx support faster onboarding for mixed-skill teams. The right choice depends on the complexity of your pipelines, your team’s technical profile, and the level of security and control your organization requires.

Datacoves provides a flexible, secure alternative that supports dbt, SQLMesh, and Bruin in a unified environment. With private cloud or VPC deployment, managed Airflow, and a standardized development experience, Datacoves helps teams avoid vendor lock-in while gaining an enterprise-ready platform for analytics engineering.

Selecting the right dbt alternative is ultimately about aligning your transformation approach with your data architecture, governance needs, and long-term strategy. Taking the time to assess these factors will help ensure your platform remains scalable, secure, and flexible for your future needs.

dbt testing gives data teams a way to validate data quality, transformation logic, and governance standards directly inside their development workflow. Out of the box, dbt Core includes generic tests (unique, not_null, accepted_values, relationships), singular tests for custom SQL assertions, and unit tests for verifying transformation logic before it reaches production. Community packages like dbt-utils and dbt-expectations add dozens more. Combined with CI/CD tools like dbt-checkpoint, teams can enforce testing and documentation standards on every pull request.

This guide covers every layer: what ships with dbt Core, which packages to add, how to store and review results, and how to integrate testing into your CI/CD pipeline.

dbt has two main categories of tests: data tests and unit tests.

Data tests validate the integrity of actual warehouse data and run with every pipeline execution. They come in two forms: generic tests, defined in YAML and applied across models, and singular tests, written as standalone SQL assertions for specific conditions. Under the hood, dbt compiles each data test to a SQL SELECT statement and executes it against your database. If any rows are returned, dbt marks the test as failed.

Unit tests, introduced in dbt 1.8, validate transformation logic using static, predefined inputs. Unlike data tests, they are designed to run during development and CI only, not in production.


dbt Core ships with four built-in generic tests that cover the most common data quality checks.

- unique verifies that a column contains no duplicate values. Use this on identifiers like customer_id or order_id to catch accidental duplication in your models.
- not_null checks that a column contains no null values. This is especially useful for catching silent upstream failures where a field stops being populated.
- accepted_values validates that a column only contains values from a defined list. For example, a payment_status column might only allow pending, failed, accepted, or rejected. This test will catch it if a new value like approved appears unexpectedly.
- relationships checks referential integrity between two tables. If an orders table references customer_id, this test verifies that every customer_id in orders has a matching record in the customers table.

You apply generic tests by adding them to the model's property YAML file.
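As a minimal sketch, the four built-in tests could be declared as follows. The file path, model names (orders, customers), and column names are hypothetical, chosen to match the examples above. The data_tests key is used here; dbt versions before 1.8 use tests instead, and some newer versions may prefer test arguments nested under an arguments key.

```yaml
# models/schema.yml (hypothetical file and model names)
version: 2

models:
  - name: orders
    columns:
      - name: order_id
        data_tests:          # `tests:` on dbt versions before 1.8
          - unique
          - not_null
      - name: payment_status
        data_tests:
          - accepted_values:
              values: ['pending', 'failed', 'accepted', 'rejected']
      - name: customer_id
        data_tests:
          # every orders.customer_id must exist in customers.customer_id
          - relationships:
              to: ref('customers')
              field: customer_id
```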

Generic tests also support additional configurations that give you more control over how and when they fail.

- where limits the test to a subset of rows, useful on large tables where you want to test only recent data or exclude specific values.
- severity controls whether a test failure blocks execution. Set to warn to flag issues without stopping the pipeline, or keep the default error for critical checks.
- name overrides the auto-generated test name. Since dbt generates long default names, a custom name makes logs and audit tables much easier to read.
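A sketch of how these three options might be combined on a single test. The model, column, test name, and date filter are all hypothetical and only illustrate where each setting goes.

```yaml
# models/schema.yml (hypothetical names and filter)
models:
  - name: orders
    columns:
      - name: order_id
        data_tests:
          - not_null:
              # custom name replaces the long auto-generated one
              name: orders_order_id_not_null_recent
              config:
                # only test recent rows (assumes an order_date column)
                where: "order_date >= '2024-01-01'"
                # report failures as warnings instead of stopping the run
                severity: warn
```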

Singular tests are custom SQL assertions saved in your tests/ directory. Each file contains a query that returns the rows failing the test. If the query returns any rows, dbt marks the test as failed.

Use singular tests when built-in generic tests or package tests do not cover your specific business logic. A good example: verifying that sales for one product stay within +/- 10% of another product over a given period. That kind of assertion is too specific for a reusable generic test but straightforward to express in SQL.

When you find yourself writing similar singular tests repeatedly across models, that is a signal to convert the logic into a custom generic test instead.

Custom generic tests work like dbt macros. They can be stored in tests/generic/ or in your macros/ directory. Datacoves recommends tests/generic/ as the default location, but macros/ makes more sense when the test depends on complex macro logic. At minimum, a custom generic test accepts a model parameter, with an optional column_name if the test applies to a specific column. Additional parameters can be passed to make the test more flexible.

Once defined, a custom generic test can be applied across any model or column in your project, just like the built-in tests. This makes them the right choice when you have business logic that repeats across multiple models but is too specific to find in a community package.

Unit tests, available natively since dbt 1.8, validate that your SQL transformation logic produces the expected output before data reaches production.

Unlike data tests that run against live warehouse data on every pipeline execution, unit tests use static, predefined inputs defined as inline values, seeds, or SQL queries in your YAML config. Because the expected outputs don’t change between runs, there’s no value in running unit tests in production. Run them locally during development and in your CI pipeline when new transformation code is introduced.
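To illustrate, a unit test with inline inputs might look like the sketch below. The model fct_orders, its upstream stg_order_items, and the column names are hypothetical; the example assumes fct_orders sums amount per order_id.

```yaml
# models/unit_tests.yml (hypothetical file, model, and column names)
unit_tests:
  - name: test_order_total_is_sum_of_items
    model: fct_orders
    given:
      # static rows stand in for the upstream model
      - input: ref('stg_order_items')
        rows:
          - {order_id: 1, amount: 10}
          - {order_id: 1, amount: 15}
    expect:
      # rows the model should produce from the inputs above
      rows:
        - {order_id: 1, order_total: 25}
```

Because the inputs never change, the result is the same on every run, which is why these tests belong in development and CI rather than in production jobs.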

If your project is on a version older than dbt 1.8, upgrading is the recommended path. Community packages that previously filled this gap (dbt-unit-testing, dbt_datamocktool, dbt-unittest) are no longer actively maintained and are not recommended for new projects.

Source freshness checks aren’t technically dbt tests, but they solve one of the most common silent failure modes in data pipelines: a source stops updating, but the pipeline keeps running without any errors.

Freshness checks are configured in your sources.yml file with warn_after and error_after thresholds. When you run dbt source freshness and a source has not been updated within the defined window, dbt raises a warning or error. Running the check ahead of your models keeps stale data from flowing downstream. This is especially critical for time-sensitive reporting, where stale data can be worse than no data at all.
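A minimal sketch of a freshness configuration. The source name, database, schema, table, timestamp column, and thresholds are all hypothetical; the thresholds should reflect how often the source is actually expected to load.

```yaml
# models/sources.yml (hypothetical source and thresholds)
version: 2

sources:
  - name: raw_shop
    database: raw
    schema: shop
    tables:
      - name: orders
        # timestamp column dbt compares against the current time
        loaded_at_field: _loaded_at
        freshness:
          warn_after: {count: 12, period: hour}
          error_after: {count: 24, period: hour}
```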

dbt Core's built-in tests cover the fundamentals, but the community has built a rich ecosystem of packages that extend testing well beyond what ships out of the box. Packages are installed via your packages.yml file and sourced from dbt Hub.
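As a rough sketch, a packages.yml that pulls in the two testing packages discussed below might look like this. The version ranges are assumptions for illustration only; check dbt Hub for the releases compatible with your dbt version.

```yaml
# packages.yml (version ranges are assumptions, verify on dbt Hub)
packages:
  - package: dbt-labs/dbt_utils
    version: [">=1.1.0", "<2.0.0"]
  - package: metaplane/dbt_expectations
    version: [">=0.10.0", "<0.11.0"]
```

Running dbt deps then downloads the listed packages into your project.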

dbt-utils, maintained by dbt Labs, is the most widely used dbt testing package. It adds 16 generic tests alongside SQL generators and helper macros that complement dbt Core's built-in capabilities.

- not_accepted_values is the inverse of accepted_values. Use it to assert that specific values are never present in a column.
- equal_rowcount confirms that two tables have the same number of rows. This is particularly useful after transformation steps where row counts should be preserved.
- fewer_rows_than validates that a target table has fewer rows than a source table, which is the expected result after any aggregation step.

For the full list of all 16 generic tests with usage examples, see the Datacoves dbt-utils cheatsheet.
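The three tests above could be applied as in this sketch. The models (orders, stg_orders, daily_order_totals) and the rejected value are hypothetical.

```yaml
# models/schema.yml (hypothetical model names)
models:
  - name: orders
    data_tests:
      # orders should keep every row from its staging model
      - dbt_utils.equal_rowcount:
          compare_model: ref('stg_orders')
    columns:
      - name: payment_status
        data_tests:
          # fail if a placeholder value ever appears
          - dbt_utils.not_accepted_values:
              values: ['unknown']

  - name: daily_order_totals
    data_tests:
      # an aggregate should have fewer rows than its input
      - dbt_utils.fewer_rows_than:
          compare_model: ref('orders')
```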

dbt-expectations, maintained by Metaplane, ports the Python library Great Expectations into the dbt ecosystem. It gives analytics engineers 62 reusable generic tests without adding a separate tool to the stack.

The package covers seven categories:

The string matching and aggregate function categories in particular cover validations that would otherwise require custom singular tests. Full documentation is available on the dbt-expectations GitHub repository.

dbt_constraints, created by Snowflake, generates database-level primary key, unique key, and foreign key constraints directly from your existing dbt tests. It is primarily designed for Snowflake, with limited support on other platforms.

When added to a project, it automatically creates three types of constraints.

- dbt_utils.unique_combination_of_columns.
- relationships tests.

This package is most valuable for teams that want database constraints alongside dbt's test-based validation, not instead of it. Snowflake doesn’t enforce most constraints at write time, but the query optimizer uses them for better execution plans. Some tools also read constraints to reverse-engineer data model diagrams or add joins automatically between tables.

For most dbt projects, dbt-utils and dbt-expectations are the two packages worth adding first. Layer in dbt_constraints when your use case specifically calls for database-level constraints on Snowflake.

By default, dbt doesn’t persist test results between runs. You can change this by setting store_failures: true in your dbt_project.yml or at the individual test level.

This is useful for spot-checking failures after a run, but each execution overwrites the previous results, so it does not give you historical visibility into data quality trends over time. The tools below address that gap with lightweight reporting options.

When store_failures: true is enabled at the project or test level, dbt writes the rows that fail each test into tables in your warehouse under a schema named [your_schema]_dbt_test__audit. This creates one table per test, containing the actual records that triggered the failure.
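A sketch of both ways to turn this on, assuming a recent dbt version that uses the data_tests key (older versions use tests). The model and column names are hypothetical.

```yaml
# dbt_project.yml: store failing rows for every data test in the project
data_tests:
  +store_failures: true
```

```yaml
# models/schema.yml: or enable it for a single test only
models:
  - name: orders
    columns:
      - name: order_id
        data_tests:
          - unique:
              config:
                store_failures: true
```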

This is a practical first step for teams that want to inspect failures without setting up a dedicated observability platform. The main limitation: each run overwrites the previous results, so you cannot track failure trends over time without additional tooling.

Datacoves recommends storing dbt audit tables in a separate database to keep your production environment clean. On platforms like Snowflake, this also reduces overhead for operations like database cloning.

dq-tools stores dbt test results and makes them available for visualization in a BI dashboard. If your team already has a BI layer in place, this is a lightweight way to surface data quality metrics alongside existing reporting without adding a separate observability tool.

datacompy is a Python library for migration validation. It performs detailed table comparisons, reporting on row-level differences, column mismatches, and statistical summaries. It’s not a dbt package, but it integrates naturally into migration workflows that use dbt for transformation.

Standard dbt tests aren’t designed for row-by-row comparison across systems, which makes datacompy a useful complement when you’re migrating from legacy platforms.

The tests covered so far validate data. The tools below validate your dbt project itself, catching governance and compliance issues at the development and PR stage rather than in production.

dbt-checkpoint, maintained by Datacoves, enforces project governance standards automatically so teams don’t rely on manual code review to catch missing documentation, unnamed columns, or hardcoded table references. It runs as a pre-commit hook, blocking non-compliant code before it’s pushed to the main branch, and can also run in CI/CD pipelines to enforce the same checks on every pull request.

Out of the box it validates things like whether models and columns have descriptions, whether all columns defined in SQL are present in the corresponding YAML property file, and whether required tags or metadata are in place.
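A hedged sketch of a pre-commit configuration covering the checks described above. The repository URL and the rev tag are assumptions here; pin rev to a release you have verified, and confirm the hook ids against the dbt-checkpoint documentation for that release.

```yaml
# .pre-commit-config.yaml (repo URL and rev are assumptions, verify before use)
repos:
  - repo: https://github.com/dbt-checkpoint/dbt-checkpoint
    rev: v2.0.1  # assumption: replace with the release tag you have verified
    hooks:
      # every model needs a description
      - id: check-model-has-description
      # every documented column needs a description
      - id: check-model-columns-have-desc
      # columns in the model must all appear in the YAML property file
      - id: check-model-has-all-columns
      # no hardcoded table names in SQL; use ref() or source()
      - id: check-script-has-no-table-name
```

Some of these hooks read dbt artifacts such as manifest.json, so those files need to exist before the hooks run.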

It's a natural fit for dbt Core teams that want Git-native governance without additional infrastructure. The tradeoff: it requires familiarity with pre-commit configuration to set up and extend. Like all Python-based governance tools, it doesn't run inside the dbt Cloud IDE.

dbt-bouncer, maintained by Xebia, takes an artifact-based approach, validating against manifest.json, catalog.json, and run_results.json rather than running as a pre-commit hook. It requires no direct database connection and can run in any CI/CD pipeline that has access to dbt artifacts. Checks cover naming patterns, directory structure, and description coverage, and it can run as a GitHub Action or standalone Python executable.

dbt-project-evaluator, maintained by dbt Labs, takes a different approach. Rather than running as an external tool, it is a dbt package: it materializes your project's DAG structure into your warehouse and runs dbt tests against it.

It checks for DAG issues (model fanout, direct joins to sources, unused sources), testing and documentation coverage, naming convention violations, and performance problems like models that should be materialized differently.

The main limitation is adapter support: it works on BigQuery, Databricks, PostgreSQL, Redshift, and Snowflake, but not on Fabric or Synapse. Because it materializes models into your warehouse, it has a slightly higher execution cost than artifact-based tools.

dbt-score, maintained by Picnic Technologies, is a Python-based CLI linter that reads your manifest.json and assigns each model a score from 0 to 10 based on metadata quality: missing descriptions, absent owners, undocumented columns, models without tests.

The scoring approach makes it easy to track improvement over time and prioritize which models need attention, without enforcing hard pass/fail gates on every PR. Custom rules are fully supported. It requires no database connection and no dbt run, just a manifest.json.

These tools aren’t mutually exclusive, and many teams combine them. For dbt Core teams, dbt-checkpoint is the recommended starting point: it enforces governance at the PR stage with minimal setup and can run both locally and in CI/CD pipelines. dbt-bouncer is worth evaluating if your team wants artifact-based validation running in an external CI/CD pipeline. Add dbt-project-evaluator when you want DAG-level structural checks alongside documentation and testing coverage. Layer in dbt-score for ongoing visibility into metadata quality across your project without hard enforcement gates.

dbt testing isn’t an all-or-nothing investment. The most effective approach is to start with what ships in dbt Core and expand coverage as your team builds confidence.

A practical progression looks like this: start with unique, not_null, and relationships tests on source tables and mart models. Add dbt-utils and dbt-expectations as your testing needs grow beyond the basics. When your team is ready to enforce governance and metadata standards, dbt-checkpoint is the simplest starting point for dbt Core teams, with dbt-project-evaluator and dbt-score as complementary layers.

If your organization is still running transformations in legacy tools like Talend, Informatica, or Python, you do not need to wait for a full migration to start benefiting from dbt testing. dbt sources can point to any table in your warehouse, whether or not dbt created it, so you can layer dbt tests and documentation on top of your existing pipeline today. See Using dbt to Document and Test Data Transformed with Other Tools for a step-by-step walkthrough.

Where teams hit friction isn’t usually the tests themselves. It’s managing the infrastructure, environments, CI/CD pipelines, and orchestration around them. Datacoves handles that layer, giving your team a managed dbt and Airflow environment so they can focus on building and testing models rather than maintaining tooling. Learn more about how Datacoves compares to other dbt solutions.

You now know what dbt (data build tool) is all about. You are being productive, but you forgot what `dbt build` does or you forgot what the @ dbt graph operator does. This handy dbt cheat sheet has it all in one place.

With the advent of dbt 1.6, we updated the awesome dbt cheat sheet originally created by Bruno de Lima.

We have also moved the dbt Jinja cheat sheet to a dedicated post.

This reference summarizes all the dbt commands you may need as you run your dbt jobs or study for your dbt certification.

If you ever wanted to know what the difference between +model and @model is in your dbt run, you will find the answer. Whether you are trying to understand dbt graph operators or what the dbt retry command does, this cheat sheet has you covered. Check it out below.

These are the principal commands you will use most frequently with dbt. Not all of these will be available on dbt Cloud.

The dbt commands above have options that allow you to select and exclude models, as well as defer to another environment like production instead of building dependent models for a given run. This table shows which options are available for each dbt command.

By combining the arguments above like "-s" with the options below, you can tell dbt which items you want to select or exclude. This can be a specific dbt model, everything in a specific folder, or now with the latest versions of dbt, the specific version of a model you are interested in.

dbt graph operators provide a powerful syntax that allows you to home in on the specific items you want dbt to process.

The following commands are used less frequently and perform actions like initializing a dbt project, installing dependencies, or validating that you can connect to your database.

The flags below immediately follow the dbt command and go before the subcommand e.g. dbt <FLAG> run

Read the official dbt documentation

As a managed dbt Core solution, the Datacoves platform simplifies the dbt Core experience and retains its inherent flexibility. It effectively bridges the gap, capturing many benefits of dbt Cloud while mitigating the challenges tied to a pure dbt Core setup. See if Datacoves dbt pricing is right for your organization or visit our product page.

Please contact us with any errors or suggestions.

I read an article on Anchor Data Modeling, more specifically, Atomic modeling where the author proposes a different way of Data Modeling. The rationale for this change is that there is a lack of skills to model data well. We are giving powerful tools to novices, and that is bound to lead to problems.

From the article:

"we are in a distressful situation both concerning the art as a whole but also its place in modern architectures"

Is this the case? Do we have a big problem on the horizon that requires us to make this big shift?

I'd say I am open-minded and expose myself to different ways of thinking so I can broaden my views. A few years ago, I learned a bit about COBOL, not because I had any real use for it but because I was curious. I found it very interesting and even saw its similarities with SQL. I approached the topic with no preconceived ideas; this is the first time I heard of Atomic Modeling.

The issues I see with ideas like Atomic data modeling are not in their goal. I am 100% aligned with the goal; the problem is the technology, process, and people needed to get there.

What we see in the market is a direct result of a backlash against doing things "perfectly." But why is this the case? I believe it is because we haven't communicated how we will achieve this vision of ideas like atomic data. The author even says a key phrase in the first paragraph:

"practitioners shying away from its complexity"

If doing anchor data modeling is "complex," how are we going to up-skill people? Is this feasible? I am happy if I can get more people to use SQL vs a GUI tool like Alteryx 😁

I am by no means an expert. Yet, I am fairly technical, and if I am not convinced, how will we convince decision-makers?

As I read this article, here's what I am thinking:

1. First, I will need to deconstruct the data I get from some source like material master data from SAP. That will be a bunch of tables, and who is going to do all this data modeling? It sounds expensive and time-consuming.

2. I am going to need some tooling for this, and I am either going to build it or use something a few others are using. Will my company want to take a chance on something this early? This sounds risky.

3. After I deconstruct all this data, I need to catalog all these atoms. I now have a lot of metadata, and that's good, but is the metadata business-friendly? We can't get people to add table descriptions; how is this going to happen with this explosion of objects? Who will maintain it? How will we expose this? Is there a catalog for it already? Does that catalog have the other features people need? It sounds like I need to figure out a bunch of things, the biggest one being the change management aspect.

4. What sort of database will I use to store this? This is a great use case for a graph database. But graph databases are not widely adopted, and I have seen graph databases choke at scale. We can use a relational database, but these joins are going to be complex. Someone may have figured all this out, but there's more tech and learning needed. It sounds like this will also add time and expense.

5. When I have managed to do all the above, I will need to construct what people can actually use. We need objects that work with tools that are available. I need to make relational tables I can query with SQL and viz tools, which are more user-friendly. This sounds like more work, more time, and more money.

I may have missed some steps and oversimplified what's needed for this type of change. I am also aware that I may not know what exists to solve all the above. However, if I don't know it, then there are a ton of other people who also don't know it and this is where we need to start. We need to understand how we will tactically achieve this "better" world.

I've had conversations on metadata-driven automation, and like atomic modeling, I am not clear on who we are helping and how. What are we improving and in what timeframe? In the end, it feels like we have optimized for something only a few companies can do. To do anchor modeling well would be a huge expense, and when things go wrong, there are several points of failure. When we look at business problems, we need to be sure to optimize the end-to-end system. We can't locally optimize one area because we are likely moving the problem somewhere else. This can be in terms of time, money, or usability.

Decision-makers are not interested in data modeling. They are expecting results and a faster time to market. It's hard enough getting people to do things "better." This is why I find it hard to imagine that we can get to this level of maturity any time soon.

There are incremental steps we can take to incorporate best practices into the modern data stack. We need to help people mature their data practice faster, and we should not let perfection get in the way of good. Most companies are not large enterprises with millions of dollars to spend on initiatives like atomic modeling. That being said, I have yet to see anchor modeling in practice, so I welcome the opportunity to learn. I remember years ago the debates about how Ruby on Rails was teaching people "bad practices." The other side of that argument is that Rails helped companies like Twitter and GitHub launch businesses faster. Rails was also better than the alternative at the time, which included messy PHP code. Others advocated for well-crafted "scalable" and expensive Java applications. Rails may not be the powerhouse it once was, but it has had a huge influence on how we build software. I even see its influence in dbt even if it might not have been intentional or direct.

Tools like Snowflake and dbt allow us to build processes that are much better than what most people have. Should we focus on all the "bad" things that may come with the modern data stack? Should we focus on how practitioners are not well educated, and so we need to throw all they are doing out?

I don't think so; I believe that we can help companies mature their data practices faster. Will we have the best data models? Maybe not. Will users do things perfectly? Nope. But can we help them move faster and guide them along their journey to avoid big pitfalls? I think we can. Getting people to use git, automating testing, and creating DataOps processes is a huge step forward for many organizations. Let's start there.

There's a reason Data Mesh and the Modern Data Stack resonate with so many people. There's a desire to do things faster with more autonomy at many companies, not just the ones with multi-million-dollar budgets. Let's focus on what is achievable, do the best we can, and help people mature along the way. We don't need more complexity; we need less.

A few years ago, we identified a need to simplify how companies implement the Modern Data Stack, both from an infrastructure and process perspective. This vision led to the creation of Datacoves. Our efforts were recognized by Snowflake as we became a top-10 finalist in the 2022 Snowflake Startup Challenge. We have become trusted partners at Fortune 100 companies, and now we are ready for our next chapter.

Datacoves is thrilled to announce that we have been selected to be part of the Spring 2023 cohort at TinySeed. Their philosophy and culture align flawlessly with our values and aspirations. Our goal has always been to help our customers revolutionize the way their teams work with data, and this investment will enable us to continue delivering on that vision.

The TinySeed investment will also strengthen our commitment to supporting open-source projects within the dbt community, such as SQLFluff, dbt-checkpoint, dbt-expectations, and dbt-coves. Our contributions will not only benefit our customers but also the broader dbt community, as we believe open-source innovation enhances the tools and resources available to everyone.

We want to express our deep gratitude to Tristan Handy, CEO & Founder at dbt Labs and the entire dbt Labs team for partnering with us on the 2023 State of Analytics Engineering Community survey and for creating the amazing dbt framework. Without dbt, Datacoves would not be where it is today, and our customers would not enjoy the benefits that come with our solutions.

As we continue to grow and evolve, we look forward to finding new ways to collaborate with dbt Labs and the dbt community to further enhance how people use dbt and provide the best possible experience for everyone. Together, we can empower data teams around the world and revolutionize the way they work.


In continuation of our previous blog discussing the importance of implementing DataOps, we now turn our attention to the tools that can efficiently streamline your processes. Additionally, we will explore real-life examples of successful implementations, illustrating the tangible benefits of adopting DataOps practices.

There are a lot of DataOps tools that can help you automate data processes, manage data pipelines, and ensure the quality of your data. These tools can help data teams work faster, make fewer mistakes, and deliver data products more quickly.

Here are some recommended tools needed for a robust DataOps process:

DataOps has been successfully used in the real world by companies of all sizes, from small startups to large corporations. The DataOps methodology is based on collaboration, automation, and monitoring throughout the entire data lifecycle, from collecting data to using it. Organizations can get insights faster, be more productive, and improve the quality of their data. DataOps has been used successfully in many industries, including finance, healthcare, retail, and technology.

Here are a few examples of real-world organizations that have used DataOps well:

DataOps has a bright future because more businesses are realizing how important data is to their success. With the exponential growth of data, managing it well is becoming increasingly important. DataOps will likely be adopted by more companies as they try to streamline their data management processes and cut costs. Cloud-based data management platforms have made it easier for organizations to manage their data well; their main benefits are scalability, flexibility, and cost-effectiveness. With DataOps, teams can improve collaboration and agility and build trust in data by creating processes that test changes before they are rolled out to production.

With the development of modern data tools, companies can now adopt software development best practices in analytics. In today’s fast-paced world, it's important to give teams the tools they need to respond quickly to changes in the market by using high-quality data. Companies should use DataOps if they want to manage data better and reduce the technical debt created by uncontrolled processes. Putting DataOps processes in place for the first time can be hard, and it's easier said than done. DataOps requires a change in attitude, a willingness to try out new technologies and ways of doing things, and a commitment to continuous improvement. If an organization is serious about using DataOps, it must invest in the training, infrastructure, and cultural changes that are needed to make it work. With the right approach, companies can get the most out of DataOps and help their businesses deliver better outcomes.

At Datacoves, we offer a suite of DataOps tools to help organizations implement DataOps quickly and efficiently. We enable organizations to start automating simple processes and gradually build out more complex ones as their needs evolve. Our team has extensive experience guiding organizations through the DataOps implementation process.

Schedule a call with us, and we'll explain how dbt and DataOps can help you mature your data processes.

The amount of data produced today is mind-boggling and is expanding at an astonishing rate. This explosion of data has led to the emergence of big data challenges that organizations must overcome to remain competitive. To derive actionable insights from data, organizations must manage it effectively and guarantee its accuracy and quality. This is where DataOps comes in. In this first post, we give an overview of DataOps; in our next post, we will discuss tooling that supports DataOps as well as companies that have seen the benefit of implementing DataOps processes.

DataOps is a methodology that merges DevOps principles from software development with data management practices. This helps to improve the process of developing, deploying, and maintaining data-driven applications. The goal is to increase the delivery of new insights and the approach emphasizes collaboration between data scientists, data engineers, data analysts, and analytics engineers.

Automation and continuous delivery are leveraged for faster deployment cycles. Organizations can enhance their data management procedures, decrease errors, and increase the accuracy and timeliness of their data by implementing DataOps.

The overarching goal is to improve an organization's capacity for decision-making by facilitating quicker, more precise data product deliveries. DataOps can speed up task completion with fewer errors by encouraging effective team collaboration, ultimately enhancing the organization's success.

DataOps is now feasible thanks to modern data stack tools. Modern data tools like Snowflake and dbt can enable delivery of new features more quickly while upholding IT governance standards. This allows for employee empowerment and improves their agility all while helping to create a culture that values data-driven decision-making. Effective DataOps implementation enables businesses to maximize the value of their data and build a data-driven culture for greater success.

It is impossible to overstate the role that DataOps plays in overcoming big data challenges. Organizations must learn how to manage data effectively as it continues to increase if they want to stay competitive.

DataOps addresses these challenges by providing a set of best practices and tools that enable data teams to work more efficiently and effectively. Some of the key benefits of DataOps include:

In a world without DataOps, data-related processes can be slow and error-prone, and documentation is often outdated. DataOps provides the opportunity to rethink how data products are delivered. This promotes a culture of federated delivery. Everyone in the organization with the necessary skills is empowered to participate in insight generation.

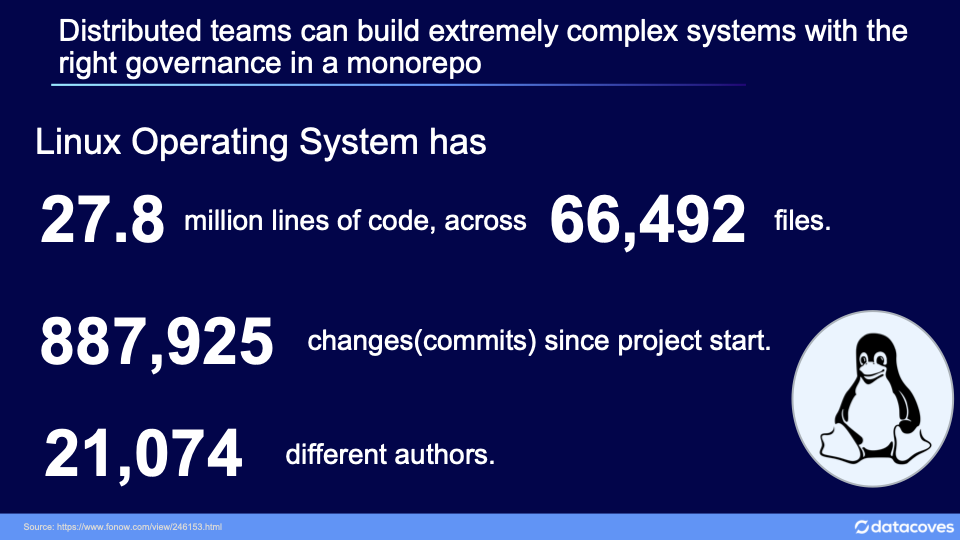

We can achieve comparable results while preserving governance over the delivery process by learning from large, distributed open-source software development projects like the Linux project. To ensure high-quality output, a good DataOps process places an emphasis on rules and procedures that are monitored and controlled by a core team.

To implement DataOps well, one needs to take a holistic approach that brings together people, processes, and technology. Here are the five most important parts of DataOps that organizations need to think about in order to implement it successfully:

Having identified what needs to be done to achieve a good DataOps implementation, you may be wondering how we turn these ideas into actionable steps.

At Datacoves, we offer a comprehensive suite of DataOps tools to help organizations implement robust processes quickly and efficiently. One of the key benefits of using Datacoves is the ability to implement DataOps incrementally using our dynamic infrastructure. Organizations can start by automating simple processes, such as data collection and cleaning, and gradually build out more complex processes as their needs evolve.

Our agile methodology allows for iterative development and continuous deployment, ensuring that our tools and processes are tailored to meet the evolving needs of our clients. This approach allows organizations to see the benefits of DataOps quickly, without committing to a full-scale implementation upfront. We have helped teams implement mature DataOps processes in as little as six weeks.

The Datacoves platform is being used by top global organizations and our team has extensive experience in guiding organizations through the DataOps implementation process. We ensure that you benefit from best practices and avoid common pitfalls when implementing DataOps.

Set aside some time to speak with us to discuss how we can support you in maturing your data processes with dbt and DataOps.

Don't miss out on our latest blog, where we showcase the best tools and provide real-life examples of successful implementations.

Get ready to take your data operations to the next level!

No one bakes for the sake of baking alone; cakes are meant to be shared. If no one bought, ate, or gifted someone with their delicious chocolate cake, then there would be no bakers. The same is true for data analytics. Our goal as data practitioners is to feed our organization with the information needed to make decisions.

If our cake doesn’t taste good or isn’t available when people want dessert, then it doesn’t matter that we made it from scratch. When it comes to big data, your goal should be to have the equivalent of a delicious cake - usable data - available when someone needs it.


Life would be simple if everyone were happy with a single flavor of cake. Metrics play a crucial role in our organization, and two of the most fundamental ones are ARR (Annual Recurring Revenue) and NRR (Net Revenue Retention). These metrics are like chocolate and vanilla - they remain popular and relevant. Yet these flavors alone are not enough. Just as we eventually want to try a new ice cream flavor, we eventually want new insights. It's important to experiment and explore different perspectives.

When something is novel, we love it. When we first start baking, we are not consistent. But, over time, quality improves. We go from ok, to good, and to great. Even with our newfound expertise, the chocolate cake will become boring. We all want something new.

With data, you often start with a simple metric. Having something is better than nothing, but that only lasts so long. We discover something new about our business and we translate that information into action. This is good for a while, but we will eventually see diminishing returns. While an LTV (Lifetime Value) analysis may have a significant impact today, its usefulness is likely to diminish within a short period of time. Your stakeholders will crave something new, something more innovative and updated.

Someone is going to ask for a deep dive into how CAC (Customer Acquisition Cost) impacts LTV, or they might ask for a new kind of icing on that cake you just made. Either way, the point remains – as those around you start using (or eating) what you’re providing, they will inevitably ask for something more.

It depends. Some organizations can do more than enough with the reports and dashboards built into their CRM, web analytics, or e-commerce system. This works for many companies. There is no need to spend extra time and money on more complex data systems when something simple will suffice. There is a reason that chocolate and vanilla are popular flavors; many people like their taste and know what they are getting with their order. The same can be said for your data infrastructure.

However, flexibility is the challenge. You are limited by your reporting options and the data analytics you can access. You can have a cake you can eat, but it’s going to look a certain way, with only one type of icing, and it certainly will not have any premium fillings.

If you want those things, you need to look for something a bit more nuanced.

How do you start to accommodate your organization’s new demand for analysis or your family’s newly refined palate? By using new tools and techniques.

The Easy Bake Oven is the simplest first step – you get a pouch of ingredients, mix them, and within a few minutes, you have a cake. You might think it’s a children’s toy, but there are very creative recipes out there for the adventurous types. Unfortunately, you can only go so far; you’re limited by the size of the oven and the speed at which you can bake each item.

The corollary in the data world is Excel – a fabulous tool, but something that has its limitations. While it is possible to extract data from your tools and manipulate it in a spreadsheet to make it more manageable, you are still limited by the pre-designed extracts offered by the vendor tools. Excel is flexible enough for many, but it is not a perfect end-state reporting solution for everyone.

Eventually, you’ll need to address inconsistencies between systems and automate the process of data prep before it gets into your spreadsheet.

We have a feel for the basics, but now we want to improve our process. There are certain things we do repeatedly, regardless of the recipes we’re making. We need to create an assembly line. Whether we’re calculating metrics or mixing the wet ingredients and the dry ingredients, there are steps we need to complete in a specific order and to a certain level of quality.

We’ve moved on from the Easy Bake Oven and are now baking using a full-size oven. We need to be more careful about our measurements, ensure the oven is at the correct temperature, and write down notes about the various steps in our more complicated recipes. We need to make sure that different cakes, fillings, and decorations are ready at the same time, even if they have wildly different prep times. We need consistent results every time we make the cake, and we need others to be able to make the same recipe and achieve the same results by following our instructions.

Documenting and transferring this knowledge to others is difficult. Sure, you can write things down, but it is easy to skip a step that you take for granted. Perhaps your handwriting is hard to read, or someone is having trouble with the oven. You may be aware that using a hair dryer while baking can cause the circuit breaker to trip, but unless you document this information, others will not know this quirk. If your recipe has changed, such as using individual ingredients instead of a pre-made cake mix, it is important to clearly specify these adjustments, otherwise your friends and family may struggle to replicate your delicious cake.

There are plenty of data analytics tools out there that assist at this stage – Alteryx, Tableau Prep, and Datameer are just a few of many. In large enterprise organizations, you might find Informatica PowerCenter, Talend, or Matillion. These types of tools have graphical user interfaces (GUIs); they give you the flexibility to extract data, load data, inspect data, and transform it. Many enable you to define and calculate metrics. But they require you to work within each tool’s set of rules and constraints. This works well if you are just starting out and need something less complex.

The process that was once simple now is not; there are hidden assumptions, configurations, and requirements. You’re not using a pen-and-paper recipe anymore; now you’re working within a new system.

GUI-based tools are great for companies whose workflows fit the way the systems work. But between the way some tools are licensed, and the skill needed to use them, they are only available to the IT organization. This leaves users to find shortcuts, develop workarounds, and become dependent on the business’ shadow IT. Inevitably, you’re going to run into maintainability issues.

Recipes often have plenty of steps and ingredients; “data recipes” are no different. There are dependencies between operations, different run times for different transformations, different release cadences, and data availability SLAs. Your team might be able to manage your entire workflow quite well with one of these tools, but once your team starts introducing custom SQL logic or additional overlapping tools into the ecosystem, you introduce another layer of complexity. The result is an increase in the total cost of ownership.

Often, this complexity is opaque, too. It is not obvious what each GUI component is doing, yet the components are strung together to build something usable. Over time, the complexity continues to grow; custom SQL logic is introduced, and more steps are added to the chain. Eventually, abstractions begin to form. The data engineers decide that these processing pipelines look quite similar and can be customized based on some basic configurations. Less overhead, more output.

You’re now on the path of building custom ELT (Extract, Load, and Transform) pipelines, stitched together within the constraints of a GUI-based system. For some companies, this is okay, and it works. But there is a hidden cost – it is harder to maintain high quality inputs and outputs. The layers are tightly coupled and a mistake in one step is not caught until the whole pipeline is complete.

IT may not be aware of downstream issues because they occur in other tools outside of their domain; one change here breaks something else there. This is like buying a ready-made cake mix and “enhancing it” with your custom ingredients. It works until it does not. One day, Duncan Hines changes their ingredients, and without you realizing, there is a bad reaction between your “enhancements” and the new mix. Your once great recipe is not so great anymore, but there was no way for Duncan Hines to know. They expected you to follow their instructions; everything has been going according to plan until now.

Even if your tool has strong version control built in, it’s often difficult to reverse the changes before it is too late. If your recipe calls for 1 cup of sugar but you accidentally add 11, you don’t want to wait until the cake is baked to discover the error. You want to catch that mistake as soon as it happens.
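To make the "catch it as soon as it happens" idea concrete, here is a minimal sketch of a fail-fast check in Python. The function names (`check_step`, `bake`) and the acceptable range for sugar are made up for illustration; they are not taken from any particular tool.

```python
# Illustrative only: the names and thresholds below are assumptions,
# not part of any real data tool.

def check_step(name, value, low, high):
    """Raise immediately if a measured value falls outside its expected range."""
    if not (low <= value <= high):
        raise ValueError(
            f"{name}={value} is outside the expected range [{low}, {high}]"
        )
    return value


def bake(sugar_cups):
    """Validate the ingredient first, then do the expensive work."""
    # The check runs before any baking happens, so a bad input
    # stops the process at the step where the mistake was made.
    check_step("sugar_cups", sugar_cups, low=0.5, high=2)
    return "cake baked"
```

Calling `bake(1)` completes normally, while `bake(11)` raises a `ValueError` before anything goes in the oven. In a data pipeline, the same pattern would mean validating each step's output before the next step consumes it.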

Everything up to this point serves a specific profile of a business, but what happens when the business matures beyond what these tools offer? What happens when you know how to bake a cake, but struggle to consistently produce hundreds or thousands of the same quality cakes?

When we aim to expand our baking operations, it's crucial to maintain consistency among bakers, minimize accidental mistakes, and have the ability to swiftly recover from any errors that occur. We need enough mixers and ovens to support the demand for our cakes, and we need an organized pantry with the correct measuring cups and spoons. We need to know which ingredients are running low, which are delayed, and which have common allergens.

In data analytics, we have our own supply chain, often called ELT. Tools like Airbyte and Fivetran are common choices for handling our data “ingredient delivery”. They manage data extraction and ingestion so you can skip the manual CSV downloads that once served you so well.

We want to ensure quality, have traceability, document our process, and successfully produce and deliver our cakes. To do this, we need a repeatable process, with clear sequential steps. In the world of baking, we use recipes to achieve this.

All baking recipes have a series of steps, some of which are common across different recipes. For example, creaming eggs and sugar, combining the wet and dry ingredients, and whipping the icing are repeatable steps that, when performed in the correct order, result in a delicious dessert. Recipes also follow a standard format: preheat the oven to the specified temperature, list the ingredients in the order in which they are used, and provide the preparation steps last. The sequence is intentional and provides a clear understanding of what to expect during the baking process.

We can apply this model to our data infrastructure by using a tool called dbt (data build tool). Instead of repeating miscellaneous transformation steps in various places, we can centralize our transformation logic into reusable components. Then we can reference those components throughout our project. We can also identify which data is stale, review the chain of dependencies between transformations, and capture the documentation alongside that logic.
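The idea of a chain of dependencies between transformations can be sketched in a few lines of Python. This is not how dbt is implemented; it is a toy illustration using the standard library's `graphlib`, and the model names (`stg_orders`, `customer_orders`, and so on) are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical project: each model maps to the upstream models it references.
models = {
    "stg_orders": set(),
    "stg_customers": set(),
    "customer_orders": {"stg_orders", "stg_customers"},
    "ltv_report": {"customer_orders"},
}


def build_order(models):
    """Return an order in which every model runs after its dependencies."""
    return list(TopologicalSorter(models).static_order())


def downstream_of(models, name):
    """Return every model that directly or indirectly depends on `name`."""
    affected = set()
    frontier = [name]
    while frontier:
        current = frontier.pop()
        for model, deps in models.items():
            if current in deps and model not in affected:
                affected.add(model)
                frontier.append(model)
    return affected
```

Here `build_order(models)` always places `customer_orders` after both staging models, and `downstream_of(models, "stg_orders")` tells you which models to re-check when that ingredient changes. Declaring dependencies once and letting the tool work out the order is the same principle that lets a recipe list its steps in sequence.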

We no longer need a GUI-driven tool to review our data; instead, we use the process as defined in the code to inform our logic and documentation. Our new teammate can now confidently create her cake to the same standard as everyone else; she can be confident that she is avoiding common allergens, too.