The amount of data produced today is mind-boggling, and it is expanding at an astonishing rate. This explosion of data has created big data challenges that organizations must overcome to remain competitive. To derive actionable insights, organizations must manage their data effectively and guarantee its accuracy and quality. This is where DataOps comes in. In this first post, we give an overview of DataOps. In our next post, we will discuss the tooling that supports DataOps and look at companies that have benefited from implementing DataOps processes.

What is DataOps?

DataOps is a methodology that merges DevOps principles from software development with data management practices to improve how data-driven applications are developed, deployed, and maintained. Its goal is to speed up the delivery of new insights, and it emphasizes collaboration between data scientists, data engineers, data analysts, and analytics engineers.

DataOps leverages automation and continuous delivery to shorten deployment cycles. By implementing DataOps, organizations can enhance their data management procedures, reduce errors, and improve the accuracy and timeliness of their data.

Ultimately, DataOps aims to improve an organization's capacity for decision-making by enabling faster, more precise delivery of data products. By encouraging effective team collaboration, DataOps helps teams complete tasks faster and with fewer errors, which contributes to the organization's overall success.

Modern data stack tools have made DataOps feasible. Tools like Snowflake and dbt enable teams to deliver new features more quickly while upholding IT governance standards. This empowers employees and makes them more agile. An effective DataOps implementation lets businesses maximize the value of their data and build a culture that values data-driven decision-making.

The importance of DataOps in solving big data challenges

It is impossible to overstate the role that DataOps plays in overcoming big data challenges. As data volumes continue to grow, organizations that want to stay competitive must learn to manage their data effectively.

DataOps addresses these challenges by providing a set of best practices and tools that enable data teams to work more efficiently and effectively. Some of the key benefits of DataOps include:

- Improved agility and flexibility: DataOps empowers data teams to work in an agile manner, enabling them to quickly adapt to shifting requirements and business needs. By basing decisions on up-to-the-minute information, businesses can stay one step ahead of the competition.

- Increased collaboration: DataOps encourages collaboration between different teams involved in the data management process, including data engineers, data scientists, and business analysts. This leads to better communication, faster feedback loops, and more efficient workflows.

- Enhanced quality and accuracy: DataOps lowers the risk of errors and inconsistencies in the data by automating data processing and analysis pipelines. As a result, businesses are better able to make decisions based on accurate and trustworthy data.

- Reduced costs: DataOps helps businesses save money by reducing the time and resources needed to manage data and perform analytics. They can then invest the savings in other areas of the business to maintain their competitiveness.

In a world without DataOps, data-related processes can be slow and error-prone, and documentation is often outdated. DataOps provides an opportunity to rethink how data products are delivered and promotes a culture of federated delivery, in which everyone in the organization with the necessary skills is empowered to participate in generating insights.

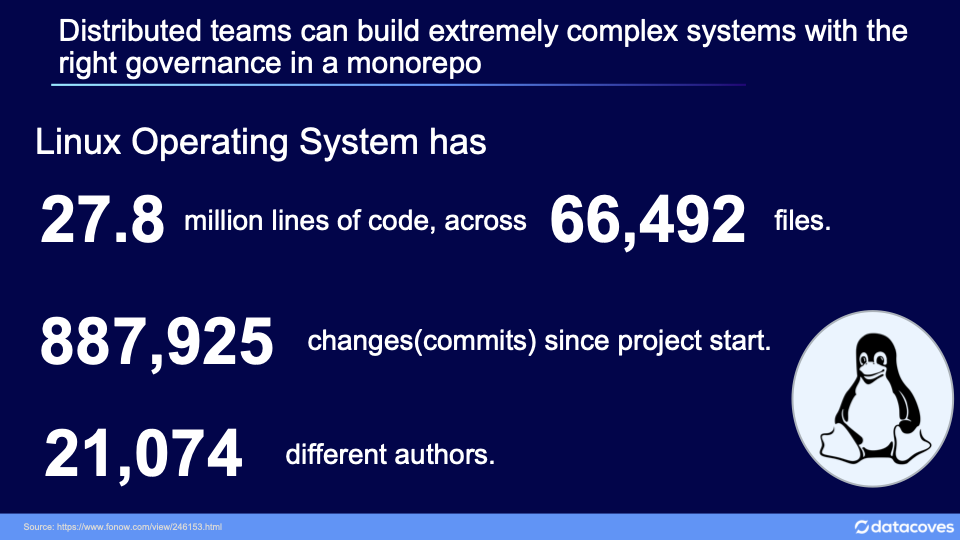

Large, distributed open-source software projects such as Linux show that many contributors can deliver high-quality work while a core team preserves governance over the process. By learning from these projects, we can achieve comparable results with data. To ensure high-quality output, a good DataOps process emphasizes rules and procedures that are monitored and controlled by a core team.

What are the components of a successful DataOps implementation?

Implementing DataOps well requires a holistic approach that brings together people, processes, and technology. Here are the five most important components organizations need to consider:

- Collaborative Culture: Organizations must foster a collaborative culture by encouraging cross-functional teamwork and making it easy for data scientists, data engineers, data analysts, and analytics engineers to communicate and see each other's work. Collaboration breaks down silos and helps everyone understand how data-driven applications work. A collaborative culture also gives team members a sense of ownership of and responsibility for their work, which can improve the quality of the data and of the applications that use it.

- Automation: Automation is a key part of DataOps because it helps organizations streamline feature delivery and reduce mistakes. Data ingestion, data integration, and data validation are all examples of repetitive tasks. By automating them, organizations free up resources for more complex and valuable work, such as analyzing data and deriving insights from it.

- Continuous Integration and Delivery: Continuous integration and delivery (CI/CD) are important parts of DataOps. Together, they let teams deploy working code to production continuously and iteratively. CI/CD can shorten the time it takes to bring data-driven applications to market, so companies can respond quickly to changing business needs and provide more value to their customers.

- Monitoring and Feedback: Monitoring and feedback are important parts of DataOps because they help ensure that data-driven applications work as intended. By tracking how these applications perform, organizations can catch problems early and fix them as needed. By asking users for feedback, teams can make the code more reliable and maintainable, leading to better outcomes.

- Data Governance: Data governance is a set of policies, procedures, and controls that make sure data is managed correctly throughout its lifecycle. Data governance includes data ownership, data quality, data security, data privacy, and data lineage. By putting in place strong data governance practices, organizations can make sure that their data is correct, consistent, and in line with company standards. Data governance can help make the data more trustworthy and lower the chance of a data breach.
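To make the automation and data quality points above a little more concrete, here is a minimal Python sketch of two automated validation checks, similar in spirit to dbt's built-in `not_null` and `unique` tests. It assumes the data has already been loaded into a list of dictionaries; the function names (`check_not_null`, `check_unique`, `validate`) and the sample `orders` rows are hypothetical and only for illustration. In practice, you would rely on your data tooling to run checks like these on every pipeline run rather than hand-rolling them.

```python
from typing import Any

# Hypothetical row type: one dictionary per record loaded by an ingestion job.
Row = dict[str, Any]


def check_not_null(rows: list[Row], column: str) -> list[int]:
    """Return the indexes of rows where `column` is missing or None."""
    return [i for i, row in enumerate(rows) if row.get(column) is None]


def check_unique(rows: list[Row], column: str) -> list[Any]:
    """Return each value of `column` that appears more than once (None is ignored)."""
    seen: set[Any] = set()
    duplicates: list[Any] = []
    for row in rows:
        value = row.get(column)
        if value is None:
            continue
        if value in seen and value not in duplicates:
            duplicates.append(value)
        seen.add(value)
    return duplicates


def validate(rows: list[Row], key: str) -> dict[str, list[Any]]:
    """Run both checks on the key column and collect every failure found."""
    failures: dict[str, list[Any]] = {}
    nulls = check_not_null(rows, key)
    if nulls:
        failures["not_null"] = nulls
    dupes = check_unique(rows, key)
    if dupes:
        failures["unique"] = dupes
    return failures


if __name__ == "__main__":
    # Hypothetical sample data with one duplicated key and one missing key.
    orders = [
        {"order_id": 1, "amount": 20.0},
        {"order_id": 2, "amount": 35.5},
        {"order_id": 2, "amount": 12.0},
        {"order_id": None, "amount": 8.0},
    ]
    print(validate(orders, "order_id"))  # {'not_null': [3], 'unique': [2]}
```

The sketch collects every failure instead of stopping at the first one, so a single automated run gives the team the full picture of what needs fixing before the data reaches downstream users.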

Having identified what needs to be done to achieve a good DataOps implementation, you may be wondering how to turn these ideas into actionable steps.

What are the best ways to implement DataOps in organizations?

- Start Small and Grow: When putting DataOps in place, it's important to start small and grow gradually. This lets companies test and refine their DataOps processes before applying them to bigger projects. By starting small, organizations can find and solve potential problems before rolling the processes out to a larger audience.

- Align DataOps with Business Goals: For an organization's DataOps implementation to work, its DataOps processes need to be in line with its business goals. Organizations should figure out what their most important business goals are for their data-driven applications, and then design their DataOps processes to help them reach these goals. This approach makes sure that DataOps is focused on adding value to the business and not just on implementing new technology.

- Foster Collaboration Between Teams: Getting teams to work together is one of the most important parts of DataOps. Organizations need to help data scientists, data engineers, data analysts, and analytics engineers collaborate, which breaks down silos and makes it easier for everyone to understand how data-driven applications work. Organizations can encourage collaboration by forming cross-functional teams, promoting open communication and transparency, and, where possible, standardizing on the same tools, e.g. using dbt for data pipelines.

- Invest in Automation Tools: Organizations should invest in automation tools that speed up their data management processes, such as data ingestion, data integration, and data validation. Automation can reduce mistakes, increase productivity, and free up resources for more complex tasks, like analyzing data.

- Implement CI/CD Processes: A robust feature release process with automated validations and code review encourages collaboration and builds transparency. It also removes the fear that a new feature will break production, which encourages users to move faster.

- Monitor Performance and Get Feedback: Organizations should use monitoring tools to track how well their data-driven applications are working, and they should gather feedback from users. This helps find problems early and makes the data more accurate and reliable. It also gives organizations useful information about how people use their tools, which can help them improve the user experience.
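Monitoring can start with something as simple as a freshness check. The Python sketch below classifies each table as `pass`, `warn`, or `error` based on how long ago it was last loaded, similar in spirit to dbt's source freshness checks with their `warn_after` and `error_after` thresholds. The function name, table names, timestamps, and thresholds are all hypothetical; a real setup would read load times from your warehouse and send alerts instead of printing.

```python
from datetime import datetime, timedelta, timezone


def freshness_status(
    last_loaded_at: datetime,
    now: datetime,
    warn_after: timedelta,
    error_after: timedelta,
) -> str:
    """Classify a table by data age: 'error' or 'warn' once the age is
    strictly greater than the matching threshold, otherwise 'pass'."""
    if warn_after > error_after:
        raise ValueError("warn_after must not exceed error_after")
    age = now - last_loaded_at
    if age > error_after:
        return "error"
    if age > warn_after:
        return "warn"
    return "pass"


if __name__ == "__main__":
    # Hypothetical fixed "now" and last-load times so the output is repeatable.
    now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    tables = {
        "orders": now - timedelta(hours=2),
        "customers": now - timedelta(hours=30),
        "payments": now - timedelta(days=3),
    }
    # Prints one line per table: orders: pass, customers: warn, payments: error
    for name, loaded_at in tables.items():
        status = freshness_status(loaded_at, now, timedelta(hours=24), timedelta(hours=48))
        print(f"{name}: {status}")
```

Using two thresholds gives the team an early warning before stale data becomes a hard failure, which is the kind of early problem detection described above.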

Looking to implement DataOps quickly and efficiently?

At Datacoves, we offer a comprehensive suite of DataOps tools to help organizations implement robust processes quickly and efficiently. One of the key benefits of using Datacoves is the ability to implement DataOps incrementally using our dynamic infrastructure. Organizations can start by automating simple processes, such as data collection and cleaning, and gradually build out more complex processes as their needs evolve.

Our agile methodology allows for iterative development and continuous deployment, ensuring that our tools and processes are tailored to meet the evolving needs of our clients. This approach allows organizations to see the benefits of DataOps quickly, without committing to a full-scale implementation upfront. We have helped teams implement mature DataOps processes in as little as six weeks.

The Datacoves platform is being used by top global organizations and our team has extensive experience in guiding organizations through the DataOps implementation process. We ensure that you benefit from best practices and avoid common pitfalls when implementing DataOps.

Set aside some time to speak with us about how we can help you mature your data processes with dbt and DataOps.

Don't miss our latest blog post, where we showcase the best tools and share real-life examples of successful implementations.

Get ready to take your data operations to the next level!
